Btrfs vs ZFS: Which Filesystem Protects Your Data Better?

ZFS provides stronger data integrity guarantees with its battle-tested RAIDZ implementations, end-to-end checksumming, and a proven track record on mission-critical NAS systems. Btrfs is the better choice for single-disk desktops and laptops where its tight Linux kernel integration, transparent compression, and snapshot-based rollback offer excellent data protection without the RAM overhead ZFS demands. The right answer depends entirely on your hardware, your workload, and how many disks you are working with.

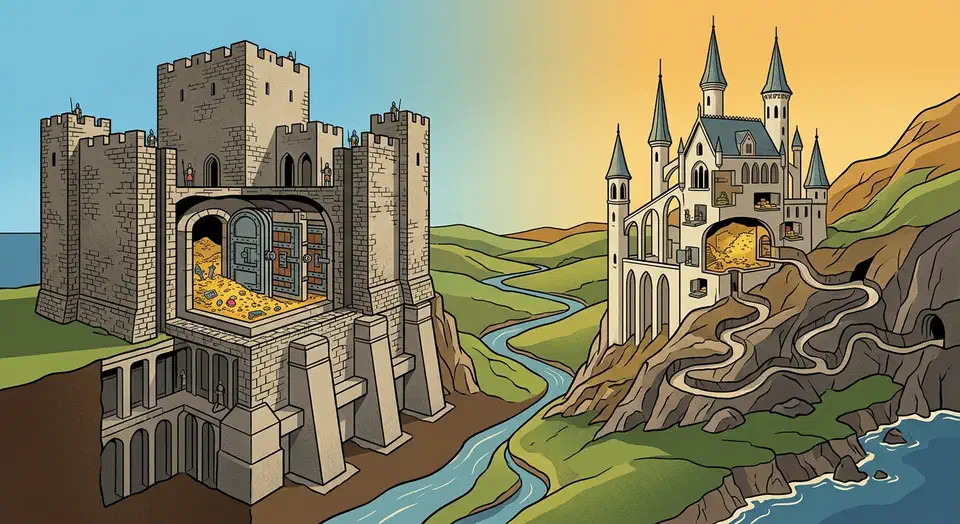

Both filesystems represent a massive leap forward from ext4 and XFS when it comes to protecting your data from silent corruption. But they come from very different lineages - ZFS was born in Sun Microsystems’ enterprise storage division and carries that DNA, while Btrfs was built from the ground up as a Linux-native answer to the same problems. That heritage shows up in every design decision, from memory requirements to RAID implementations.

Data Integrity Features Head to Head

Both Btrfs and ZFS checksum every data block and metadata block by default. This is the single most important feature separating them from ext4 and XFS - they can detect silent data corruption (bit rot) that traditional filesystems are completely blind to. If a cosmic ray flips a bit on your drive, or a cheap SATA cable corrupts a transfer, these filesystems will catch it.

ZFS uses the fast fletcher4 checksum by default, with SHA-256, SHA-512, Skein, and (in OpenZFS 2.2 and newer) BLAKE3 available as cryptographic options. Btrfs defaults to CRC32C, and since kernel 5.5 it can also use xxhash, SHA-256, or BLAKE2b, chosen when the filesystem is created. For data integrity purposes, the defaults are perfectly effective at detecting corruption - you are not trying to prevent a determined attacker from forging data blocks, you are catching random bit flips.

Where things start to diverge is self-healing. ZFS automatically repairs corrupted blocks when using any redundant vdev topology - mirror, RAIDZ1, RAIDZ2, or RAIDZ3. It reads the correct copy from a healthy disk and rewrites the damaged one. Btrfs does the same with its RAID1 and RAID10 profiles, but its RAID5/6 implementation remains unreliable in 2026 and cannot be trusted for self-healing in those configurations.

Scrub operations tell the filesystem to verify every block against its stored checksum. Both ZFS and Btrfs support scrubs and you should be running them regularly - monthly at minimum on any storage you care about. Running a scrub is straightforward on both:

    # ZFS scrub
    sudo zpool scrub mypool

    # Btrfs scrub
    sudo btrfs scrub start /mnt/data

The key caveat: on a single disk, a scrub can detect corruption in file data but cannot repair it, because there is no second copy to pull from. ZFS offers a workaround with the copies=2 property, which stores two copies of every newly written block, even on a single-disk pool:

    zfs set copies=2 mypool/important-data

Btrfs has a similar concept - it stores metadata in the dup profile by default on single disks, giving you some protection for the filesystem structure itself, though not for the actual file data unless you also select the dup profile for data.

Both filesystems support send/receive for incremental snapshot replication to remote systems. This is the foundation for building robust off-site backup strategies, and in practice it works well on both sides.
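As a rough sketch of the incremental send/receive described above, the helper below prints the two pipelines instead of running them, so they can be reviewed first. The snapshot names, the host `backup-host`, and the target paths are placeholders chosen for illustration.

```shell
#!/bin/sh
# Print (not run) incremental replication pipelines for review.
# All snapshot names, hosts, and paths passed in are placeholders.

# zfs_incremental PREV_SNAP NEW_SNAP HOST TARGET_DATASET
zfs_incremental() {
    printf 'zfs send -i %s %s | ssh %s zfs receive %s\n' "$1" "$2" "$3" "$4"
}

# btrfs_incremental PARENT_SNAP NEW_SNAP HOST TARGET_DIR
btrfs_incremental() {
    printf 'btrfs send -p %s %s | ssh %s btrfs receive %s\n' "$1" "$2" "$3" "$4"
}

zfs_incremental mypool/data@monday mypool/data@tuesday backup-host backup-pool/data
btrfs_incremental /mnt/data/.snapshots/monday /mnt/data/.snapshots/tuesday backup-host /mnt/backup
```

In both cases the older snapshot must already exist on the receiving side, and on Btrfs both snapshots must be read-only.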

RAID and Multi-Disk Configurations

Multi-disk redundancy is where ZFS and Btrfs diverge most sharply, and it is the primary reason ZFS dominates the NAS space.

ZFS RAIDZ1, RAIDZ2, and RAIDZ3 are rock-solid. They have been trusted in enterprise environments for well over a decade. RAIDZ2 (dual parity) is the standard recommendation for pools of 4-8 drives, and RAIDZ3 (triple parity) makes sense for larger arrays where the probability of multiple simultaneous failures during a rebuild becomes non-trivial. One long-requested feature - RAIDZ expansion, meaning the ability to add a single disk to an existing RAIDZ vdev - landed in OpenZFS 2.3 and is stable in the 2.4 release:

    # Expand an existing RAIDZ1 vdev by attaching a new disk
    zpool attach mypool raidz1-0 /dev/sdg

Btrfs RAID1 and RAID10 profiles are stable and production-ready. RAID1C3 and RAID1C4 (3-copy and 4-copy mirroring) were added in kernel 5.5 and work well, particularly for metadata redundancy on larger arrays.

The elephant in the room: Btrfs RAID5/6 still carries the write hole bug and is not recommended for production data. The Linux kernel wiki still lists it as unstable. This has been the case for years, and if you need parity-based redundancy, ZFS is your only real option between these two filesystems.
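To make the parity trade-off concrete, here is a back-of-the-envelope estimate of usable RAIDZ capacity. It assumes equal-sized disks in a single vdev and ignores padding, metadata, and reserved space, so real pools come out somewhat lower. The function name is made up for this example.

```shell
#!/bin/sh
# Approximate usable capacity of a single RAIDZ vdev of equal-sized disks.
# Ignores padding, metadata, and reserved space, so real numbers are lower.

# raidz_usable_tb DISKS PARITY DISK_TB
raidz_usable_tb() {
    disks=$1 parity=$2 disk_tb=$3
    if [ "$disks" -le "$parity" ]; then
        echo "need more disks than parity devices" >&2
        return 1
    fi
    echo $(( (disks - parity) * disk_tb ))
}

raidz_usable_tb 6 2 8   # RAIDZ2, six 8 TB disks: prints 32
raidz_usable_tb 6 3 8   # RAIDZ3, same disks: prints 24
```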

ZFS allows mixing vdev types in a single pool. You can combine mirrored vdevs for your data, a cache device for the L2ARC read cache, and a fast SSD as a separate log device (SLOG) for the ZFS Intent Log (ZIL). This granular control is powerful for tuning NAS performance:

    # Create a pool with mirrored data vdevs, a log device, and a cache device
    zpool create mypool \
        mirror /dev/sda /dev/sdb \
        mirror /dev/sdc /dev/sdd \
        log /dev/nvme0n1p1 \
        cache /dev/nvme0n1p2

ZFS also supports special allocation classes, letting you place metadata and small files on a fast SSD vdev while bulk data stays on spinning rust. Btrfs has no equivalent feature.

On the flexibility side, Btrfs handles heterogeneous disk sizes more gracefully. You can add and remove individual devices and rebalance data across them:

    # Add a new device to a Btrfs filesystem
    sudo btrfs device add /dev/sde /mnt/data

    # Rebalance across all devices, converting data and metadata to RAID1
    sudo btrfs balance start -dconvert=raid1 -mconvert=raid1 /mnt/data

This is genuinely useful in a home server where you accumulate drives of different sizes over time.
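How much space a mixed-size Btrfs RAID1 array actually yields is not obvious, so here is a small estimate. It assumes the two-copy RAID1 profile, where each block must live on two different devices, which gives usable space of min(total / 2, total - largest). Sizes are whole TB and the function name is hypothetical.

```shell
#!/bin/sh
# Estimate usable space for Btrfs RAID1 (two copies) across mixed-size disks.
# Each block needs copies on two different devices, so usable space is
# min(total / 2, total - largest). Sizes are whole TB; division rounds down.

# btrfs_raid1_usable_tb SIZE_TB...
btrfs_raid1_usable_tb() {
    total=0 largest=0
    for size in "$@"; do
        total=$(( total + size ))
        if [ "$size" -gt "$largest" ]; then largest=$size; fi
    done
    half=$(( total / 2 ))
    rest=$(( total - largest ))
    if [ "$half" -lt "$rest" ]; then echo "$half"; else echo "$rest"; fi
}

btrfs_raid1_usable_tb 4 4 8   # balanced mix: prints 8
btrfs_raid1_usable_tb 2 2 8   # one oversized disk: prints 4
```

The second case shows the limit of this flexibility: part of a disk that is larger than all the others combined cannot be mirrored anywhere.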

Snapshot and Backup Workflows

Snapshots are one of the biggest practical benefits of both filesystems, and for many users, they are the primary reason to move away from ext4.

Both create instant, space-efficient snapshots using copy-on-write semantics. A snapshot consumes almost no space initially and only grows as the original data diverges from the snapshot point. Creating one takes milliseconds regardless of how much data the filesystem holds.

    # ZFS snapshot
    zfs snapshot mypool/data@backup-2026-03-28

    # Btrfs snapshot (read-only)
    btrfs subvolume snapshot -r /mnt/data /mnt/data/.snapshots/backup-2026-03-28

One notable difference: ZFS snapshots are immutable by default. You can clone them into writable datasets, but the snapshot itself cannot be modified. Btrfs snapshots are writable subvolumes by default - you need the -r flag to create a read-only snapshot, which is what you want for backup purposes.

For automated snapshot management, the ecosystem on both sides is mature. On the ZFS side, sanoid with its companion tool syncoid is the gold standard. Sanoid handles snapshot creation and pruning based on retention policies, while syncoid handles replication:

    # Replicate a dataset to a remote host with syncoid
    syncoid --recursive mypool/data backup-host:backup-pool/data

On the Btrfs side, snapper (originally from openSUSE) and btrbk fill the same role. Btrbk is particularly good for backup workflows:

    # btrbk.conf example
    volume /mnt/data
      subvolume home
        snapshot_dir .snapshots
        snapshot_preserve_min 2d
        snapshot_preserve 14d
        target_preserve_min 2d
        target_preserve 30d 6m
        target send-receive /mnt/backup/home

Where Btrfs really shines is desktop integration. It integrates with GRUB and systemd-boot for boot-time snapshot selection, meaning you can roll back a failed system upgrade by selecting a previous snapshot from the boot menu. openSUSE Tumbleweed has had this working smoothly for years, and the same approach can be set up on Fedora. ZFS boot environments work on FreeBSD and Ubuntu, but require more manual setup or tools like zsys.

For NAS backup workflows specifically, ZFS replication with syncoid over SSH is hard to beat. It offers resumable, encrypted, and compressed incremental replication with minimal configuration. The raw send option (zfs send -w, passed through syncoid as --sendoptions="w") preserves native ZFS encryption, so your data stays encrypted in transit and at rest on the backup target:

    # Encrypted, compressed, resumable send
    syncoid --sendoptions="w" --compress=lz4 mypool/data remote:backup/data

Performance, RAM, and System Requirements

ZFS’s advanced features come with real resource demands, and you need to budget for them.

The ARC (Adaptive Replacement Cache) is ZFS’s in-memory read cache, and it is aggressive about using available RAM. The common rule of thumb is 1 GB of RAM per 1 TB of storage, plus a base of about 8 GB for the operating system and the filesystem itself. A 20 TB NAS should have 28 GB or more of RAM. The ARC is technically reclaimable - the kernel can reclaim it under memory pressure - but in practice, a system that is constantly fighting for memory between the ARC and application workloads feels sluggish.
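The rule of thumb above is simple arithmetic, sketched here together with the GiB-to-bytes conversion that the zfs_arc_max module parameter expects. The function names are made up for this example, and the 8 GB base is the figure quoted in the text, not a hard requirement.

```shell
#!/bin/sh
# Rule-of-thumb RAM sizing from the text: 8 GB base + 1 GB per TB of storage.

# zfs_ram_gb POOL_TB
zfs_ram_gb() {
    echo $(( 8 + $1 ))
}

# gib_to_bytes GIB  (byte value suitable for zfs_arc_max)
gib_to_bytes() {
    echo $(( $1 * 1024 * 1024 * 1024 ))
}

zfs_ram_gb 20     # prints 28
gib_to_bytes 8    # prints 8589934592
```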

You can cap the ARC if needed:

    # Limit ARC to 8 GB in /etc/modprobe.d/zfs.conf
    options zfs zfs_arc_max=8589934592

Btrfs has no special RAM requirements beyond the standard Linux page cache. It performs well on systems with as little as 2-4 GB of RAM, which makes it the obvious choice for lightweight systems, embedded devices, or virtual machines where memory is at a premium.

Transparent compression is available on both and worth enabling for most workloads. ZFS supports LZ4 (default, very fast), Zstd (tunable levels 1-19), and Gzip. Btrfs supports LZO, Zlib, and Zstd (levels 1-15). On both filesystems, Zstd at a moderate level hits the sweet spot of compression ratio versus CPU overhead:

    # Enable Zstd compression on ZFS
    zfs set compression=zstd-3 mypool/data

    # Enable Zstd compression on Btrfs (mount option)
    sudo mount -o compress=zstd:3 /dev/sda1 /mnt/data

Expect compression ratios of 1.5-3x on typical data like text files, logs, and source code. Binary data and already-compressed media files will see minimal benefit.

For sequential write performance, the two are roughly comparable on modern NVMe storage. ZFS has a slight edge on RAIDZ configurations due to dynamic stripe width, while Btrfs tends to be faster on single-disk setups because of lower overhead.

A word on deduplication: ZFS supports inline deduplication, but it is extremely RAM-hungry - roughly 5 GB of RAM per TB of deduplicated data for the dedup table (DDT). Unless you have a very specific workload with high data redundancy and RAM to spare, leave it off. Btrfs does not support inline dedup, but offline tools like duperemove and bees can reclaim space from duplicate blocks after the fact, which is a more practical approach for most users.
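For a sense of scale, the dedup table estimate can be worked out the same way. This is a sketch using the roughly 5 GB per TB figure quoted above as the default. Actual DDT size depends on block size and how much data is unique, so treat the result as an order-of-magnitude guide. The function name is hypothetical.

```shell
#!/bin/sh
# Estimate RAM for the ZFS dedup table (DDT) from a GB-per-TB rule of thumb.

# ddt_ram_gb DEDUP_TB [GB_PER_TB]   (GB_PER_TB defaults to 5, as in the text)
ddt_ram_gb() {
    echo $(( $1 * ${2:-5} ))
}

ddt_ram_gb 10      # prints 50
ddt_ram_gb 10 3    # prints 30, using a more optimistic per-TB figure
```

On an existing pool, `zdb -S mypool` simulates deduplication and reports the ratio you would get, which is a better basis for the decision than any rule of thumb.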

One final practical consideration: ZFS requires building the kernel module via DKMS on most distributions. Ubuntu ships it pre-built, which saves hassle, but on Fedora or Arch you are dealing with DKMS builds after every kernel update. Btrfs is built directly into the mainline Linux kernel - zero installation effort, guaranteed compatibility, and no waiting for module rebuilds before you can reboot.

Desktop vs NAS: Which Filesystem for Which Workload

The right filesystem depends on whether you are protecting a laptop’s root partition or building a multi-disk storage server.

On a desktop or laptop with a single SSD, Btrfs is the clear winner. It is the default filesystem on Fedora and openSUSE Tumbleweed, and Ubuntu's installer offers it as a first-class option. Snapshots enable painless rollback after updates, compression saves SSD space, and there is no ARC-style RAM overhead to worry about. Set up snapper, enable Zstd compression in your fstab, and you are done.

For a NAS with 4 or more drives, ZFS is the safer choice. RAIDZ2 gives you two-disk fault tolerance and the self-healing scrub process actively protects against silent corruption across large storage pools. Pair it with ECC RAM if your budget allows, set up sanoid for automated snapshots, and schedule monthly scrubs.
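One way to handle the monthly scrub is a small wrapper script run from cron or a systemd timer. The sketch below assumes the pool name `mypool` used throughout this article. It defaults to a dry run that only prints the commands, and the script name and cron line in the note afterwards are illustrative.

```shell
#!/bin/sh
# Start a scrub on each named ZFS pool; meant to be run monthly.
# DRY_RUN defaults to 1, so commands are printed rather than executed.

run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "would run: $*"
    else
        "$@"
    fi
}

# scrub_pools POOL...
scrub_pools() {
    for pool in "$@"; do
        run zpool scrub "$pool"
    done
}

scrub_pools mypool   # "mypool" is the placeholder pool name from this article
```

Saved as, say, `/usr/local/sbin/scrub-pools.sh` (a hypothetical path), it could be scheduled with a cron entry such as `0 3 1 * * root DRY_RUN=0 /usr/local/sbin/scrub-pools.sh`. Check first whether your distribution already ships its own scrub timer.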

If you have a home server with just 2 drives, either filesystem works well in a mirror configuration. A ZFS mirror or a Btrfs RAID1 both provide redundancy and checksumming. Pick whichever you are more comfortable managing. If you are already running Ubuntu, ZFS is easy to set up. If you are on Fedora, Btrfs is already there.

A mixed workload where you have a desktop with external backup drives is actually a great use case for running both. Use Btrfs on the root filesystem for snapshots and system rollback, and ZFS on the backup pool for maximum data integrity. Both coexist fine on the same machine.

Proxmox VE users should lean toward ZFS, which is natively supported for both root filesystem and VM storage, with an excellent management GUI in the web interface. Btrfs support exists in Proxmox but is less mature and lacks the same level of GUI integration.

For container storage with Docker or Podman, both offer native storage drivers. ZFS is slightly more mature for container workloads, but Btrfs works perfectly well for rootless Podman on desktop systems.

| Use Case | Recommended FS | Why |
|---|---|---|
| Single-disk desktop | Btrfs | Kernel-native, low overhead, great snapshots |
| NAS (4+ drives) | ZFS | RAIDZ2, self-healing, proven reliability |
| 2-drive mirror | Either | Both are solid in mirror mode |
| Proxmox VM storage | ZFS | Native support, GUI management |
| Laptop with limited RAM | Btrfs | No ARC overhead, works with 2-4 GB |
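For scripted provisioning, the table can be encoded as a small lookup. This is only a sketch of the recommendations above. The use-case keywords are invented for the example and the mapping carries no more authority than the table itself.

```shell
#!/bin/sh
# Map a use case to the filesystem recommended in the table above.
# The keywords (desktop, laptop, nas, proxmox, mirror) are invented here.

# recommend_fs USE_CASE
recommend_fs() {
    case "$1" in
        desktop|laptop) echo "Btrfs" ;;
        nas|proxmox)    echo "ZFS" ;;
        mirror)         echo "Either" ;;
        *)              echo "unknown use case: $1" >&2; return 1 ;;
    esac
}

recommend_fs nas       # prints ZFS
recommend_fs laptop    # prints Btrfs
```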

Neither filesystem is universally better. ZFS is the more conservative, battle-hardened choice for multi-disk storage where data loss is unacceptable. Btrfs is the more practical, lightweight choice for Linux desktops and single-disk systems where kernel integration and low resource usage matter more than parity RAID. Pick the one that matches your hardware and sleep well knowing your data is actually being protected.