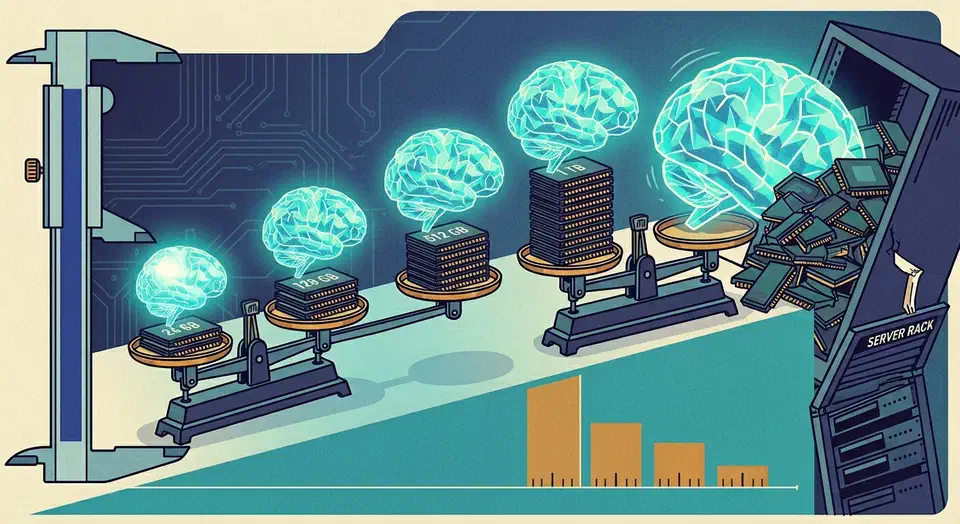

No single local image model wins everything in 2026. One prompt set, run on a single 24 GB GPU, makes the picture clear: Qwen-Image renders legible in-image text, FLUX leads prompt adherence, and SDXL keeps the deepest LoRA library at the lowest VRAM cost. The real frontier is quality-per-VRAM, not one champion.

Key Takeaways

- No local model wins on everything; pick the one that fits your bottleneck.

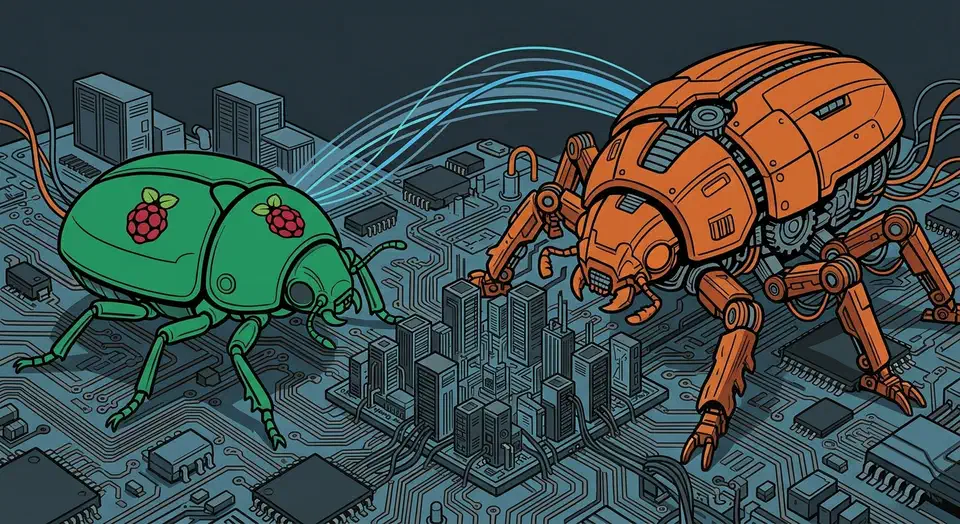

- Qwen-Image renders legible in-image text far better than its rivals.

- FLUX.2 leads prompt adherence but is the heaviest on VRAM.

- SDXL still has the biggest LoRA and ControlNet library by far.

- Check the license: FLUX dev blocks selling output; Qwen and SDXL don’t.

How Do I Choose a Local Image Model in 2026?

Match the model to the one thing you can’t compromise on. That single rule beats chasing a mythical “best” pick, because each model sits in a different corner of the quality-per-VRAM map. The 2026 local field narrows to three serious families, and the rest are mostly noise.
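The "match the model to your bottleneck" rule can be written down as a plain lookup. This is only a minimal sketch: the priority keys and the `choose_model` helper are hypothetical names made up for illustration, and the mapping simply restates the takeaways above rather than any benchmark numbers.

```python
# Hypothetical lookup table: the keys are illustrative labels, not part of
# any library. The values restate the article's takeaways.
PICKS = {
    "in_image_text": "Qwen-Image",   # legible text rendered inside the image
    "prompt_adherence": "FLUX",      # follows the prompt most closely, heaviest on VRAM
    "lora_ecosystem": "SDXL",        # biggest LoRA and ControlNet library
    "low_vram": "SDXL",              # lowest VRAM of the three
}


def choose_model(priority: str) -> str:
    """Return the model family for the one thing you can't compromise on."""
    if priority not in PICKS:
        raise ValueError(
            f"unknown priority {priority!r}; expected one of {sorted(PICKS)}"
        )
    return PICKS[priority]


if __name__ == "__main__":
    print(choose_model("in_image_text"))
```

Forcing a single `priority` argument is deliberate: it mirrors the rule of picking one non-negotiable constraint instead of averaging across several. The license still needs a separate check before shipping commercial output.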