How to Build a Multi-Modal RAG Pipeline with Vision and Text

You can build a multi-modal RAG pipeline that searches across text documents, diagrams, and screenshots simultaneously by combining CLIP-based image embeddings with text embeddings in a shared vector space. Store them in a unified ChromaDB or Qdrant collection, route queries through a retrieval layer that returns both textual passages and relevant images, and feed everything into an LLM for generation. With OpenCLIP's ViT-g-14 for images and its paired CLIP text encoder, plus a local vision-capable LLM for generation, the entire pipeline runs offline. The embedding and retrieval stages fit on an RTX 5070 or better. The generation model has to be sized to your VRAM: Llama 4 Scout, for example, is a mixture-of-experts model with 109B total parameters and needs more memory than any single consumer GPU provides.

This approach fills a real gap in most RAG setups. The typical pipeline only indexes text, which means it ignores information inside diagrams, charts, architecture drawings, and screenshots. For technical documentation, product manuals, and research papers, that missing visual context can represent 30-50% of the actual information content.

Why Multi-Modal RAG Beats Text-Only Retrieval

Text-only RAG has some obvious failure modes that most teams discover the hard way. Technical documentation is full of flowcharts and diagrams where the critical information never appears in the surrounding text. Product manuals rely on annotated screenshots to show users where to click. Research papers embed data visualizations that tell a different story than their captions suggest. If your RAG pipeline only indexes the text, you are working with an incomplete picture of your knowledge base.

Multi-modal retrieval opens up new query patterns. Users can ask things like “Show me the network architecture diagram for the microservices setup” or “Find the screenshot where the error dialog appears” - queries that are impossible with text-only indexing. In any organization with a substantial internal knowledge base, visual content carries significant weight, and these kinds of queries come up often.

The concept that makes this work is a shared embedding space. CLIP (Contrastive Language-Image Pre-training) learns a joint embedding space where text and images describing the same concept end up with high cosine similarity. That means you can encode a text query and compare it directly against image embeddings, or vice versa. A single query produces results across both modalities without needing separate search pipelines.
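Here is a minimal sketch of the shared-space idea. It ranks a mixed set of text and image embeddings against a single query vector. The vectors are tiny made-up stand-ins for real 1024-dimension CLIP outputs, used for illustration only. Once every vector is L2-normalized, cosine similarity reduces to a dot product, so one ranking covers both modalities.

```python
import math


def l2_normalize(vec: list[float]) -> list[float]:
    """Scale a vector to unit length (what F.normalize does for CLIP outputs)."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]


def cosine(a: list[float], b: list[float]) -> float:
    """For unit-length vectors, cosine similarity is just the dot product."""
    return sum(x * y for x, y in zip(a, b))


def rank_mixed(query_vec: list[float], items: list[tuple[str, str, list[float]]]):
    """Rank (item_id, modality, vector) tuples against one query, ignoring modality."""
    q = l2_normalize(query_vec)
    scored = [
        (cosine(q, l2_normalize(vec)), item_id, modality)
        for item_id, modality, vec in items
    ]
    return sorted(scored, reverse=True)


# Hypothetical 3-d vectors standing in for real CLIP embeddings.
corpus = [
    ("chunk-1", "text", [0.9, 0.1, 0.0]),
    ("diagram-7", "image", [0.8, 0.3, 0.1]),
    ("screenshot-2", "image", [0.0, 0.2, 1.0]),
]
for score, item_id, modality in rank_mixed([1.0, 0.2, 0.0], corpus):
    print(f"{score:.3f}  {modality:5}  {item_id}")
```

The text chunk and the diagram both land near the query, and the unrelated screenshot falls to the bottom. No separate search path per modality is needed.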

In practice, a well-built multi-modal pipeline achieves 75-85% retrieval accuracy (Recall@5) on mixed-media corpora, compared to 40-50% when ignoring visual content. That difference shows up directly in answer quality - users get complete responses instead of partial ones that miss the visual context.

Common applications include internal knowledge bases with mixed media (every company has these), technical support systems that reference annotated screenshots, and medical record analysis combining clinical notes with imaging reports.

Architecture - Embedding, Indexing, and Retrieval Flow

The pipeline has four stages: document ingestion, multi-modal embedding, unified vector storage, and retrieval-augmented generation. Understanding the data flow up front prevents common pitfalls like mismatched embedding dimensions or incorrect similarity scoring.

Ingestion Stage

Document ingestion needs to handle text and images as separate streams from the same source documents. For PDFs, use PyMuPDF (pymupdf v1.25+) to extract both text chunks and embedded images separately. For web pages, Playwright can capture screenshots of rendered content alongside extracted text. Pure image files get indexed directly.

The key decision here is chunking strategy for text. Standard approaches work fine - split text into chunks of 256-512 tokens with 50-token overlap. For images, each extracted image becomes its own embedding unit. Keep track of the source document, page number, and position for both modalities so you can provide proper citations later.
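The chunking rule above can be sketched as follows. This sketch assumes whitespace-separated words as a stand-in for model tokens; a real pipeline would count tokens with the embedding model's own tokenizer. `TextChunk` and `chunk_page` are hypothetical names. Each chunk carries its source file, page number, and position so citations can be built later.

```python
from dataclasses import dataclass


@dataclass
class TextChunk:
    source_file: str
    page_number: int
    chunk_index: int
    text: str


def chunk_page(text: str, source_file: str, page_number: int,
               chunk_size: int = 256, overlap: int = 50) -> list[TextChunk]:
    """Split one page of text into overlapping windows of `chunk_size` words."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    words = text.split()  # whitespace words approximate model tokens here
    step = chunk_size - overlap
    chunks: list[TextChunk] = []
    # Stop once the remaining words are already covered by the previous overlap
    for start in range(0, max(len(words) - overlap, 1), step):
        window = words[start:start + chunk_size]
        if not window:
            break
        chunks.append(TextChunk(source_file, page_number, len(chunks), " ".join(window)))
    return chunks
```

Consecutive chunks share their last and first 50 words. A sentence that straddles a boundary therefore appears whole in at least one chunk.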

Embedding Models

Text embedding has two paths depending on your quality requirements. The simpler option uses sentence-transformers

with all-MiniLM-L6-v2 (384 dimensions, fast inference) or nomic-embed-text-v2 (768 dimensions, higher quality). The trade-off is that these models produce embeddings in a different vector space than CLIP, which complicates cross-modal search.

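Image embedding uses OpenCLIP's ViT-g-14 model (the OpenCLIP config name uses a lowercase "g") pretrained on laion2b_s34b_b88k, producing 1024-dimension vectors. OpenCLIP's preprocessing transform resizes and center-crops each image to 224x224 and normalizes it before encoding.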

The dimension alignment problem is the first real architectural decision you face. Text embeddings (384d or 768d) and image embeddings (1024d) live in different spaces. You have two options:

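Use CLIP’s own text encoder for everything. Encode both text queries and text chunks using CLIP’s text encoder, keeping everything in the same 1024d space. This is simpler and avoids alignment issues, but it has two costs. First, CLIP’s text encoder is not as strong as dedicated sentence-transformer models for pure text similarity. Second, its context window is only 77 tokens, and the OpenCLIP tokenizer truncates anything longer, so only the opening of each 256-512 token chunk actually shapes its embedding.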

Train a projection layer. Use sentence-transformers for text and CLIP for images, then train a lightweight linear projection to align the sentence-transformer embeddings into CLIP space. This gives better text retrieval quality but adds complexity.

For most projects, option 1 is the right starting point. You can always upgrade to option 2 later if text retrieval quality falls short.
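Whichever option you pick, the failure mode to guard against is silently mixing vectors from different spaces. Here is a minimal sketch, assuming you tag every vector with the encoder that produced it. `EmbeddingSpace` and `check_vector` are hypothetical bookkeeping helpers, not part of any library. The 1024 dimension matches the CLIP checkpoint used in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EmbeddingSpace:
    """Identifies which encoder produced a vector (hypothetical bookkeeping record)."""
    model_name: str
    pretrained: str
    dim: int


# Option 1: CLIP's image and text towers share one 1024-d space.
CLIP_SPACE = EmbeddingSpace("ViT-g-14", "laion2b_s34b_b88k", 1024)


def check_vector(vec: list[float], produced_by: EmbeddingSpace,
                 index_space: EmbeddingSpace = CLIP_SPACE) -> None:
    """Reject vectors that would be compared against the wrong embedding space."""
    if produced_by != index_space:
        raise ValueError(
            f"vector from {produced_by.model_name} cannot go into a "
            f"{index_space.model_name} index without a projection"
        )
    if len(vec) != index_space.dim:
        raise ValueError(f"expected {index_space.dim} dims, got {len(vec)}")
```

Under option 2, sentence-transformer vectors would be tagged as `CLIP_SPACE` only after they pass through the trained projection.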

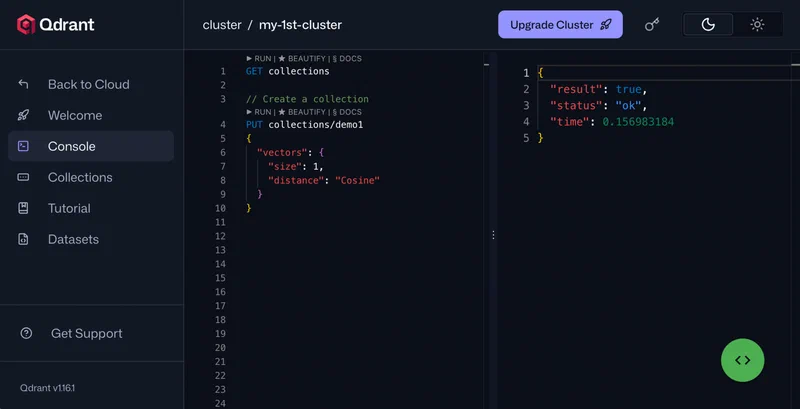

Vector Storage

Qdrant (v1.13+) or ChromaDB (v0.6+) both work well here. You can either use separate collections for each modality or a single collection with metadata tags (type: "text" or type: "image") to enable filtered retrieval. A single collection with metadata filtering is simpler to manage and query.
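With a single collection, the modality tag is just another metadata field. Below is a minimal sketch of a record builder. It assumes ChromaDB's rule that metadata values must be scalars (str, int, float, or bool). `make_metadata` and `filter_for` are hypothetical helpers, and their field names mirror the indexing code later in this article.

```python
VALID_MODALITIES = {"text", "image"}


def make_metadata(source_file: str, modality: str, page_number: int = 0,
                  chunk_index: int = 0, content_preview: str = "",
                  preview_chars: int = 200) -> dict:
    """Build a flat metadata dict for one text chunk or image."""
    if modality not in VALID_MODALITIES:
        raise ValueError(f"modality must be one of {sorted(VALID_MODALITIES)}")
    meta = {
        "source_file": source_file,
        "page_number": page_number,
        "chunk_index": chunk_index,
        "modality": modality,
        "content_preview": content_preview[:preview_chars],
    }
    # Reject None, lists, and nested dicts, which are not scalar metadata values
    for key, value in meta.items():
        if not isinstance(value, (str, int, float, bool)):
            raise TypeError(f"metadata field {key!r} has unsupported type "
                            f"{type(value).__name__}")
    return meta


def filter_for(modality: str) -> dict:
    """A `where` clause that restricts a query to one modality."""
    return {"modality": modality}
```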

Retrieval Flow

At query time, encode the user’s query using the CLIP text encoder (since we are using option 1 from above), search the unified collection, and return the top-k results. The results will naturally be a mix of text chunks and images ranked by relevance. If you want more control over the modality balance, you can search each modality separately and merge results using reciprocal rank fusion (RRF).

Implementing the Pipeline Step by Step

Here is the concrete implementation. Every code example uses specific library versions and parameter values so you can reproduce the results.

Environment Setup

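```bash
pip install open-clip-torch sentence-transformers chromadb pymupdf Pillow torch ollama
```

This requires Python 3.11+ and PyTorch 2.7+ built against CUDA 12.8, the first stable release line that supports RTX 50-series (Blackwell) GPUs. If your default pip index serves a different CUDA build, install torch from the matching PyTorch wheel index.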

Loading the CLIP Model

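```python
import open_clip
import torch

# "ViT-g-14" (lowercase g) is the OpenCLIP config that ships the
# laion2b_s34b_b88k checkpoint; model names are case-sensitive.
model, _, preprocess = open_clip.create_model_and_transforms(
    "ViT-g-14", pretrained="laion2b_s34b_b88k"
)
model = model.eval().cuda()
tokenizer = open_clip.get_tokenizer("ViT-g-14")
```

This takes roughly 6 GB of VRAM. If you are tight on memory, call model.half() after loading to run in float16 and cut that to about 3 GB. Any image tensors you pass to encode_image must then be float16 as well.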

Image Embedding Function

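```python
from PIL import Image
import torch.nn.functional as F

def embed_image(image_path: str) -> list[float]:
    # Convert to RGB so palette, alpha, and grayscale images preprocess cleanly
    pixels = preprocess(Image.open(image_path).convert("RGB")).unsqueeze(0)
    # Match the model's device and dtype (float16 if model.half() was used)
    param = next(model.parameters())
    pixels = pixels.to(device=param.device, dtype=param.dtype)
    with torch.no_grad():
        embedding = model.encode_image(pixels)
        # L2-normalize so cosine similarity reduces to a dot product
        embedding = F.normalize(embedding, dim=-1)
    return embedding.float().cpu().numpy().flatten().tolist()
```

For batch processing, wrap your images in a DataLoader with num_workers=4 and process them in batches of 32 for better throughput.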

Text Embedding Function

Using CLIP’s text encoder to stay in the same embedding space as images:

```python
def embed_text(text: str) -> list[float]:
    # The OpenCLIP tokenizer pads or truncates input to CLIP's 77-token context
    tokens = tokenizer([text]).cuda()
    with torch.no_grad():
        embedding = model.encode_text(tokens)
        embedding = F.normalize(embedding, dim=-1)
    return embedding.float().cpu().numpy().flatten().tolist()
```

ChromaDB Indexing

```python
import chromadb

client = chromadb.PersistentClient(path="./vector_store")
collection = client.get_or_create_collection(
    name="multimodal_docs",
    metadata={"hnsw:space": "cosine"},  # cosine distance on normalized CLIP vectors
)

def index_document(doc_id: str, embedding: list[float], metadata: dict):
    # upsert overwrites an existing id, so re-indexing a changed document updates it
    collection.upsert(
        ids=[doc_id],
        embeddings=[embedding],
        metadatas=[{
            "source_file": metadata["source_file"],
            "page_number": metadata.get("page_number", 0),
            "chunk_index": metadata.get("chunk_index", 0),
            "modality": metadata["modality"],  # "text" or "image"
            "content_preview": metadata.get("content_preview", ""),
        }],
    )
```

Query Function

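```python
def query_multimodal(query: str, n_results: int = 10) -> dict:
    query_embedding = embed_text(query)
    results = collection.query(
        query_embeddings=[query_embedding],
        n_results=n_results,
        # Matches both modalities; narrow the list (e.g. ["image"]) to restrict results
        where={"modality": {"$in": ["text", "image"]}},
    )
    return results
```

The results come back sorted by cosine distance, lowest first. With "hnsw:space": "cosine", distance is 1 minus cosine similarity. The metadata tells you whether each result is a text chunk or an image. Each field is a list of lists with one inner list per query embedding, so results["metadatas"][0] holds the hits for this query. You can use the content_preview field for text results and the source_file path to load image results.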
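Here is a minimal sketch of splitting those nested results by modality into the inputs a generation step needs. `split_by_modality` is a hypothetical helper. It assumes the metadata fields written by `index_document` above and a collection created with cosine distance.

```python
def split_by_modality(results: dict) -> tuple[list[dict], list[dict]]:
    """Separate one query's Chroma hits into text hits and image hits."""
    text_hits: list[dict] = []
    image_hits: list[dict] = []
    # Index [0] selects the first query; Chroma nests results per query embedding
    for doc_id, distance, meta in zip(results["ids"][0],
                                      results["distances"][0],
                                      results["metadatas"][0]):
        # Cosine distance in Chroma is 1 - cosine similarity
        hit = {"id": doc_id, "similarity": 1.0 - distance, **meta}
        (image_hits if meta["modality"] == "image" else text_hits).append(hit)
    return text_hits, image_hits
```

The text hits supply `text_contexts`: their `content_preview` values, or the full chunk text if you also store it through Chroma's `documents` field. The image hits' `source_file` values become `image_paths`.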

Generating Answers from Multi-Modal Context

With a mix of text and images retrieved, you need an LLM that can consume both modalities and produce a coherent answer. The generation step ties the retrieval pipeline to actual user-facing output.

Context Assembly

For text results, include the raw text chunk directly in the prompt. For image results, you have two options depending on your LLM:

- Pass images directly to a vision-capable LLM. This gives the best results since the model can interpret the image natively.

- Generate text descriptions using a captioning model and include those as text context. This works with any text-only LLM but loses visual nuance.
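Both options can live in a single assembly step. Here is a minimal sketch, assuming hits are flat dicts with `modality`, `source_file`, and `content_preview` keys. `build_context` is a hypothetical helper, and `caption_fn` stands in for whatever captioning model you use.

```python
from typing import Callable, Optional


def build_context(hits: list[dict], vision_capable: bool,
                  caption_fn: Optional[Callable[[str], str]] = None
                  ) -> tuple[list[str], list[str]]:
    """Return (text_contexts, image_paths) for the generation prompt."""
    text_contexts: list[str] = []
    image_paths: list[str] = []
    for hit in hits:
        if hit["modality"] == "text":
            text_contexts.append(hit["content_preview"])
        elif vision_capable:
            # Pass the image itself to a vision-capable LLM
            image_paths.append(hit["source_file"])
        elif caption_fn is not None:
            # Fallback: describe the image in words for a text-only LLM
            caption = caption_fn(hit["source_file"])
            text_contexts.append(f"Image description ({hit['source_file']}): {caption}")
        # With neither option available, the image hit is dropped
    return text_contexts, image_paths
```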

Vision-Capable Local LLMs

Several local models handle interleaved text and image context well:

| Model | Parameters | Strengths | VRAM Required |
|---|---|---|---|
| Llama 4 Scout | 17B active, 109B total (MoE) | Native multi-modal, strong reasoning | 55+ GB even at 4-bit |
| LLaVA v1.6 | 34B | Excellent image understanding | 20 GB |
| Moondream2 | 1.8B | Lightweight, good for captioning | 2 GB |

Ollama Integration

Serve the vision model locally with Ollama and send multi-modal messages via the API:

```python
import base64

import ollama

def generate_answer(query: str, text_contexts: list[str],
                    image_paths: list[str]) -> str:
    # Build a labeled context string from the text results
    context = "\n\n".join(
        f"[Text Source {i+1}]: {text}"
        for i, text in enumerate(text_contexts)
    )
    # Label images in the order they are attached so the model can cite them
    image_labels = "\n".join(
        f"[Image Source {j+1}]: {path}" for j, path in enumerate(image_paths)
    )
    # Ollama accepts base64-encoded image data in the "images" field
    images = []
    for path in image_paths:
        with open(path, "rb") as f:
            images.append(base64.b64encode(f.read()).decode())

    prompt = (
        "Answer the question using ONLY the following context. "
        "Context includes both text passages and attached images. "
        "Cite which source supports each claim.\n\n"
        f"{context}\n\n"
        f"{image_labels}\n\n"
        f"Question: {query}\nAnswer:"
    )
    response = ollama.chat(
        # Ollama tag; swap in a smaller vision model such as "llava:34b" to fit your VRAM
        model="llama4:scout",
        messages=[{
            "role": "user",
            "content": prompt,
            "images": images,
        }],
    )
    return response["message"]["content"]
```

Image-to-Text Fallback

If you are running a text-only LLM and cannot pass images directly, pre-process image results through a captioning pipeline:

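```python
import torch
from transformers import pipeline

captioner = pipeline(
    "image-to-text",
    model="Salesforce/blip2-opt-6.7b",
    torch_dtype=torch.float16,  # float32 weights would need twice the VRAM
    device="cuda",
)

def caption_image(image_path: str) -> str:
    result = captioner(image_path, max_new_tokens=200)
    return result[0]["generated_text"]
```

Generate descriptions for each retrieved image and inject them as text context alongside the regular text chunks. This approach loses some detail compared to native vision models but works with any LLM backend. Budget VRAM for the captioner: the OPT-6.7B language model inside this checkpoint alone needs over 13 GB in float16. On a 12 GB card, a smaller checkpoint such as Salesforce/blip2-opt-2.7b is the practical choice.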

Citation and Attribution

Include source metadata in the response so users can verify claims against the original document or image. Format citations inline: [Source: diagram from page 3 of architecture.pdf]. This lets users drill down into the original material when they need more detail, and it keeps the RAG system accountable to its sources.
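Here is a minimal sketch of that inline format, built from the metadata fields stored at indexing time. `format_citation` is a hypothetical helper that uses "image" or "passage" as the kind label. It assumes page numbers are stored 1-based, with 0 meaning "no page" (the indexing default). If your extractor counts pages from zero, store page_number + 1.

```python
def format_citation(meta: dict) -> str:
    """Render stored metadata as an inline citation like
    [Source: image from page 3 of architecture.pdf]."""
    kind = "image" if meta.get("modality") == "image" else "passage"
    source = meta.get("source_file", "unknown source")
    page = meta.get("page_number")
    if page:  # 0 or missing means the source has no page number
        return f"[Source: {kind} from page {page} of {source}]"
    return f"[Source: {kind} from {source}]"
```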

Performance Optimization and Production Considerations

A naive implementation will be slow and memory-hungry. These optimizations make the pipeline viable for real knowledge bases with thousands of documents.

Batch Embedding Throughput

Process images in batches of 32 and text in batches of 128 using PyTorch’s DataLoader with num_workers=4. On an RTX 5080, expect roughly 200 images/second and 500 text chunks/second. Even on an RTX 5070, you should see 150+ images/second, which means a knowledge base with 10,000 images gets fully indexed in about a minute.
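The batching pattern itself does not depend on PyTorch. Here is a minimal sketch, assuming `embed_batch` is any function that embeds a list of inputs in one forward pass. In the real pipeline it would stack preprocessed images and call `model.encode_image`. `embed_in_batches` and `estimated_seconds` are hypothetical helpers. As a sanity check on the figures above, 10,000 images at 150 images/second is about 67 seconds.

```python
from typing import Callable, Iterator, Sequence, TypeVar

T = TypeVar("T")


def iter_batches(items: Sequence[T], batch_size: int) -> Iterator[Sequence[T]]:
    """Yield consecutive slices of at most `batch_size` items."""
    if batch_size < 1:
        raise ValueError("batch_size must be positive")
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def embed_in_batches(items: Sequence[T],
                     embed_batch: Callable[[Sequence[T]], list[list[float]]],
                     batch_size: int = 32) -> list[list[float]]:
    """Embed items batch by batch, keeping output order aligned with input order."""
    embeddings: list[list[float]] = []
    for batch in iter_batches(items, batch_size):
        vectors = embed_batch(batch)
        if len(vectors) != len(batch):
            raise RuntimeError("embed_batch returned the wrong number of vectors")
        embeddings.extend(vectors)
    return embeddings


def estimated_seconds(n_items: int, items_per_second: float) -> float:
    """Back-of-the-envelope indexing time at a measured throughput."""
    return n_items / items_per_second
```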

VRAM Management

VRAM is the main constraint on consumer hardware. Load CLIP in float16 with model.half() to cut usage from 6 GB to 3 GB. Between the embedding phase and the generation phase, offload models explicitly:

```python
# Move CLIP off the GPU and release PyTorch's cached memory
model.to("cpu")
torch.cuda.empty_cache()
# Now load the generation model
```

This lets you run both the CLIP encoder and a 17B generation model on a single 12 GB GPU, just not at the same time.

Index Persistence and Incremental Updates

Use ChromaDB’s persistent client (chromadb.PersistentClient(path="./vector_store")) or Qdrant with on-disk storage so you do not re-embed your entire corpus on every startup. Track file modification times and only re-embed changed documents. Use content hashing (SHA-256 of file bytes) as dedup keys in the vector store to avoid duplicate entries when files move or get renamed.
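Here is a minimal sketch of that bookkeeping. It assumes a JSON-serializable manifest dict that maps each path to its last-seen modification time and content hash; the manifest format and function names are hypothetical. The mtime comparison is a cheap first pass, and the hash decides whether the content is actually new. A renamed or copied file whose hash is already known is skipped, and hash-based ids keep its vectors from being duplicated.

```python
import hashlib
import os


def sha256_of(path: str) -> str:
    """Content hash of a file, read in 1 MiB blocks."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for block in iter(lambda: f.read(1 << 20), b""):
            h.update(block)
    return h.hexdigest()


def files_to_reembed(paths: list[str], manifest: dict[str, dict]) -> list[str]:
    """Return paths with content not yet indexed, updating the manifest in place."""
    known_hashes = {entry["sha256"] for entry in manifest.values()}
    changed: list[str] = []
    for path in paths:
        mtime = os.path.getmtime(path)
        entry = manifest.get(path)
        if entry is not None and entry["mtime"] == mtime:
            continue  # untouched since the last run: skip without hashing
        digest = sha256_of(path)
        if digest not in known_hashes:
            changed.append(path)  # genuinely new content
            known_hashes.add(digest)
        manifest[path] = {"mtime": mtime, "sha256": digest}
    return changed


def chunk_id(content_hash: str, modality: str, index: int) -> str:
    """Stable vector id derived from file content rather than file path."""
    return f"{content_hash[:16]}:{modality}:{index}"
```

This sketch does not handle deletions. Removing vectors for files that disappeared would need a separate pass over manifest entries whose paths no longer exist.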

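```python
import hashlib

def file_hash(path: str) -> str:
    # Stream the file in blocks instead of reading it into memory at once (Python 3.11+)
    with open(path, "rb") as f:
        return hashlib.file_digest(f, "sha256").hexdigest()
```

Query Latency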

The full pipeline latency breaks down like this:

| Stage | Time |
|---|---|
| Query embedding (CLIP text encoder) | ~10 ms |
| Vector search (100K documents, HNSW) | ~5 ms |
| LLM generation | 2-5 seconds |
| Total | Under 6 seconds |

LLM generation dominates total latency. Vector search and embedding are negligible in comparison.
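To check these numbers on your own hardware, time each stage separately. Here is a minimal sketch using `time.perf_counter`. The `StageTimer` class is hypothetical, the stage names mirror the table, and the `time.sleep` calls are placeholders for the real pipeline calls.

```python
import time
from contextlib import contextmanager


class StageTimer:
    """Accumulates wall-clock time per named pipeline stage."""

    def __init__(self) -> None:
        self.seconds: dict[str, float] = {}

    @contextmanager
    def stage(self, name: str):
        start = time.perf_counter()
        try:
            yield
        finally:
            elapsed = time.perf_counter() - start
            self.seconds[name] = self.seconds.get(name, 0.0) + elapsed

    def report(self) -> str:
        total = sum(self.seconds.values())
        lines = [f"{name:<20} {secs * 1000:8.1f} ms" for name, secs in self.seconds.items()]
        lines.append(f"{'total':<20} {total * 1000:8.1f} ms")
        return "\n".join(lines)


timer = StageTimer()
with timer.stage("query embedding"):
    time.sleep(0.01)   # stand-in for embed_text(query)
with timer.stage("vector search"):
    time.sleep(0.005)  # stand-in for collection.query(...)
print(timer.report())
```

Wrap the generation call the same way, for example `with timer.stage("llm generation"):`, to see how it compares to the other stages.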

Scaling Beyond 1M Vectors

For collections exceeding 1 million vectors, ChromaDB starts to show strain. Switch to Qdrant with quantized vectors (binary or scalar quantization) to keep memory usage under 4 GB while maintaining over 95% recall. Qdrant’s built-in quantization support makes this a configuration change rather than an architecture rewrite:

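```python
from qdrant_client import QdrantClient
from qdrant_client.models import (
    Distance,
    ScalarQuantization,
    ScalarQuantizationConfig,
    ScalarType,
    VectorParams,
)

# Connect to a Qdrant server; the embedded local mode (path=...) is meant for prototyping
client = QdrantClient(url="http://localhost:6333")
client.create_collection(
    collection_name="multimodal_docs",
    vectors_config=VectorParams(
        size=1024,                 # CLIP embedding dimension
        distance=Distance.COSINE,
        on_disk=True,              # keep full-precision originals on disk
    ),
    # The scalar config must be wrapped in ScalarQuantization
    quantization_config=ScalarQuantization(
        scalar=ScalarQuantizationConfig(
            type=ScalarType.INT8,
            always_ram=True,       # keep the int8 copies in RAM for fast search
        ),
    ),
)
```

What to Do Next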

The pipeline described here works on a single machine with a decent GPU. OpenCLIP handles the unified embeddings, ChromaDB or Qdrant stores the vectors, and a vision-capable LLM generates answers from mixed text-and-image context. Start with the CLIP-only embedding approach (option 1) and a smaller knowledge base to validate the architecture, then scale up the corpus and optimize as your usage patterns become clear. The largest improvement comes from indexing visual content that text-only pipelines skip over entirely - once that content is searchable, retrieval quality jumps noticeably across any mixed-media corpus.