How to Build Full-Text Search with Meilisearch and HTMX: No JavaScript Framework Needed

By combining Meilisearch v1.12’s fast REST API with HTMX 2.0’s hx-get and hx-trigger="keyup changed delay:300ms" attributes, you can build a real-time, typo-tolerant search interface that returns results in under 50ms. You do not need to write any custom JavaScript for the search itself or pull in React, Vue, or any other frontend framework. The server renders HTML fragments that HTMX swaps into the DOM, keeping the entire search experience under 15 KB of total JS payload. What follows covers the full setup, from Docker Compose to a working search UI with faceted filtering.

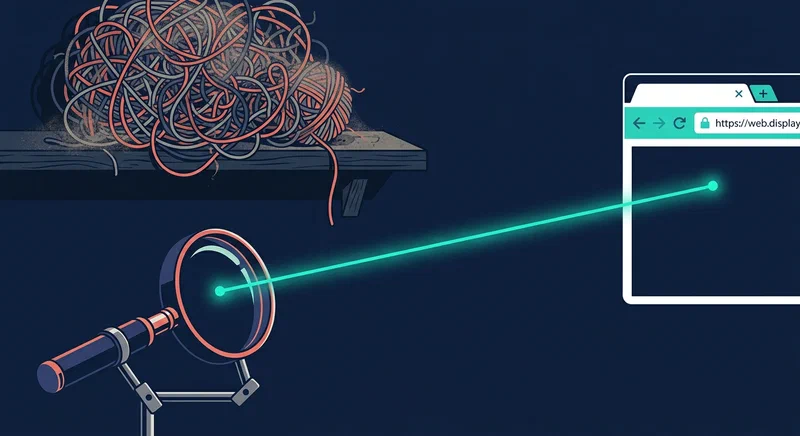

Why Meilisearch and HTMX Fit Together

Most search implementations on the web follow a predictable pattern: spin up Elasticsearch or Algolia on the backend, then ship a fat JavaScript bundle to the frontend so a framework like React can manage state, render results, and handle user input. The result works, but it carries real costs - bundle sizes north of 80 KB gzipped, complex build tooling, and a frontend codebase that needs its own maintenance cycle.

Meilisearch and HTMX take a different approach that sidesteps most of that complexity.

Meilisearch v1.12 is a search engine built specifically for frontend-facing use cases. It delivers sub-50ms responses with built-in typo tolerance, faceted filtering, and relevancy tuning out of the box. Unlike Elasticsearch, it does not require a cluster, a JVM, or deep tuning to get reasonable results. You run a single binary, push documents to it via a REST API, and start querying. The defaults are sensible for most use cases - blog search, product catalogs, documentation sites - and the API surface is small enough to learn in an afternoon.

HTMX 2.0 (stable since June 2024) works on the other side of the stack. It adds attributes like hx-get, hx-post, and hx-trigger to standard HTML elements, letting you make AJAX requests and swap DOM content without writing any JavaScript. When a user types in a search field, HTMX sends a request to your backend. Your backend queries Meilisearch, renders an HTML fragment, and returns it. HTMX drops that fragment into the page. There are no other moving parts.

Consider the payload sizes. HTMX is roughly 14 KB gzipped. For the core search, your application JavaScript is zero bytes because there is none. Compare that to a React + InstantSearch.js setup, which typically ships 80-120 KB gzipped before you add your own components. For a search feature on a blog or documentation site, that difference matters - both for page load times and for the ongoing maintenance burden of a JavaScript build pipeline.

This architecture also works with any backend language. Since the server just returns HTML strings, you can use Python with Flask, Go with Chi, Node with Express, Ruby with Sinatra, or PHP with Laravel. There is no API contract to negotiate between a JSON backend and a JavaScript frontend because the contract is HTML itself.

Since the initial page load is fully server-rendered HTML, search engines can index it without executing JavaScript. The search functionality layers on top as progressive enhancement: if the search input sits inside an ordinary form that submits to /search with GET, it falls back to a standard page load with query parameters when JavaScript fails to load or is disabled. Accessibility and SEO work out of the box instead of requiring extra engineering effort after the fact.

Setting Up Meilisearch with Docker Compose

Meilisearch runs as a single binary or Docker container with minimal configuration. A production-ready setup takes about ten minutes.

Here is a docker-compose.yml that gets Meilisearch running with persistent storage:

```yaml
version: "3.8"

services:
  meilisearch:
    image: getmeili/meilisearch:v1.12
    ports:
      - "7700:7700"
    environment:
      MEILI_MASTER_KEY: "your-32-character-minimum-master-key-here"
    volumes:
      - meili_data:/meili_data

volumes:
  meili_data:
```

Set MEILI_MASTER_KEY to a random string of at least 32 characters. This key controls admin access to the instance. In production, never expose port 7700 directly to the internet - put it behind a reverse proxy like Nginx or Caddy with rate limiting enabled, and consider publishing the port as "127.0.0.1:7700:7700" so it only listens on the host's loopback interface.
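For generating that key, Python's standard secrets module is enough. This is a minimal sketch, not a Meilisearch tool; generate_master_key is a hypothetical helper name, and any cryptographically secure generator (openssl rand, for example) works just as well.

```python
import secrets


def generate_master_key(num_bytes: int = 32) -> str:
    """Random, URL-safe string suitable for MEILI_MASTER_KEY.

    token_urlsafe(32) base64url-encodes 32 random bytes (padding stripped),
    which gives a 43-character string, above the 32-character guideline.
    """
    return secrets.token_urlsafe(num_bytes)

# Example: print(generate_master_key()) once, then paste the value into the
# MEILI_MASTER_KEY entry of docker-compose.yml.
```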

Once the container is running, create a search index:

```bash
curl -X POST 'http://localhost:7700/indexes' \
  -H 'Authorization: Bearer your-32-character-minimum-master-key-here' \
  -H 'Content-Type: application/json' \
  --data-binary '{"uid": "articles", "primaryKey": "id"}'
```

Index your documents by POSTing a JSON array. For a blog, each document should include the fields you want to search and display:

```bash
curl -X POST 'http://localhost:7700/indexes/articles/documents' \
  -H 'Authorization: Bearer your-32-character-minimum-master-key-here' \
  -H 'Content-Type: application/json' \
  --data-binary '[
    {
      "id": 1,
      "title": "Getting Started with Docker Compose",
      "content": "Docker Compose simplifies multi-container...",
      "tags": ["docker", "devops"],
      "date": "2026-01-15",
      "slug": "getting-started-docker-compose"
    }
  ]'
```

Configure searchable attributes to control match priority. Title matches should rank higher than body content:

```bash
curl -X PUT 'http://localhost:7700/indexes/articles/settings/searchable-attributes' \
  -H 'Authorization: Bearer your-32-character-minimum-master-key-here' \
  -H 'Content-Type: application/json' \
  --data-binary '["title", "tags", "content"]'
```

For frontend use, generate a search-only API key through the /keys endpoint. This key is safe to expose in client-side requests because it can only search - it cannot modify indexes, settings, or documents.
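A minimal sketch of that /keys call using only the Python standard library. It assumes the master key from the compose file and the articles index; build_search_key_request is a hypothetical helper that only builds the request, so you can inspect it before sending. The body fields (actions, indexes, expiresAt) follow the Meilisearch keys API, but check the API reference for your version before relying on them.

```python
import json
import urllib.request


def build_search_key_request(meili_url: str, master_key: str,
                             index_uid: str) -> urllib.request.Request:
    """Build (but do not send) a POST /keys request for a search-only key."""
    payload = {
        "description": f"Search-only key for {index_uid}",
        "actions": ["search"],    # no write access to documents, settings, or indexes
        "indexes": [index_uid],   # restrict the key to this one index
        "expiresAt": None,        # serialized as null: the key never expires
    }
    return urllib.request.Request(
        url=f"{meili_url.rstrip('/')}/keys",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {master_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

# Usage (sends the request, so it needs a running instance):
#   req = build_search_key_request("http://localhost:7700", "your-master-key", "articles")
#   with urllib.request.urlopen(req) as resp:
#       print(json.load(resp)["key"])
```

Once a master key is set, Meilisearch also creates a default search key that you can list with GET /keys, so a dedicated key is mainly useful when you want to scope it to a single index.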

If you are running a Hugo or other static site, write a build-time indexing script that reads your Markdown files, extracts frontmatter and content, and pushes everything to the Meilisearch API during deploy. A simple Python script using python-frontmatter and requests handles this in under 50 lines:

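```python
from pathlib import Path

import frontmatter
import requests

MEILI_URL = "http://localhost:7700"
MEILI_KEY = "your-master-key"
POSTS_DIR = Path("content/posts")

documents = []

for md_file in sorted(POSTS_DIR.rglob("*.md")):
    if md_file.stem == "_index":
        continue  # Hugo section list pages are not articles
    post = frontmatter.load(md_file)
    # Page bundles (posts/my-post/index.md) take the folder name as the slug;
    # single files (posts/my-post.md) take the file name.
    slug = md_file.parent.name if md_file.stem == "index" else md_file.stem
    documents.append({
        # The slug doubles as a stable id, so re-runs update the same documents
        # instead of depending on file enumeration order.
        "id": slug,
        "title": post.get("title", ""),
        "content": post.content[:5000],
        "tags": post.get("tags", []),
        "date": str(post.get("date", "")),
        "slug": slug,
    })

response = requests.post(
    f"{MEILI_URL}/indexes/articles/documents",
    json=documents,
    headers={"Authorization": f"Bearer {MEILI_KEY}"},
)
# Meilisearch queues the import and answers 202 Accepted with a task uid.
response.raise_for_status()
print(response.json())
```

Run this script as part of your CI/CD pipeline after the site builds, and new or edited posts reach the search index on every deploy.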
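Removed posts need separate handling: adding documents never deletes the ones already in the index, so a post you delete from the repository keeps showing up in search. One way to prune them, sketched below under the assumption that document ids are stable across builds (derived from the slug, say, rather than from file enumeration order), is to diff the ids already indexed against the ids in the current build and send the leftovers to the delete-batch endpoint. stale_ids and build_delete_batch_request are hypothetical helper names.

```python
import json
import urllib.request
from typing import Iterable, List, Mapping, Union

DocId = Union[int, str]


def stale_ids(indexed_ids: Iterable[DocId], current_docs: Iterable[Mapping]) -> List[DocId]:
    """Ids that are in the index but no longer in the current build."""
    current = {doc["id"] for doc in current_docs}
    return [doc_id for doc_id in indexed_ids if doc_id not in current]


def build_delete_batch_request(meili_url: str, master_key: str, index_uid: str,
                               ids: List[DocId]) -> urllib.request.Request:
    """Build (but do not send) a POST .../documents/delete-batch request."""
    return urllib.request.Request(
        url=f"{meili_url.rstrip('/')}/indexes/{index_uid}/documents/delete-batch",
        data=json.dumps(ids).encode("utf-8"),
        headers={"Authorization": f"Bearer {master_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )

# The currently indexed ids can be read with
# GET /indexes/articles/documents?fields=id&limit=1000 (page with offset for
# larger indexes); only send the delete request when stale_ids() is non-empty.
```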

Building the HTMX Search Interface

The frontend is plain HTML with HTMX attributes plus the htmx script tag itself - you do not need a build step, a bundler, or a component library. Here is the complete search interface:

```html
<div class="search-container">
  <input
    type="search"
    name="q"
    hx-get="/search"
    hx-trigger="keyup changed delay:300ms"
    hx-target="#results"
    hx-indicator="#spinner"
    hx-push-url="true"
    placeholder="Search articles..."
    autocomplete="off"
  >
  <span id="spinner" class="htmx-indicator">Searching...</span>
  <div id="results"></div>
</div>
```

Each attribute does something specific:

- hx-get="/search" sends a GET request to your /search endpoint, with the input value as the q query parameter
- hx-trigger="keyup changed delay:300ms" debounces the request: it fires only once the user has stopped typing for 300ms, and only if the value actually changed. This prevents hammering the API on every keystroke
- hx-target="#results" tells HTMX to swap the response HTML into the #results div
- hx-indicator="#spinner" toggles visibility on the spinner element during the request, giving visual feedback on slower connections
- hx-push-url="true" updates the browser URL with the search query parameter, so users can share search result links and the back button works as expected

The results themselves are plain HTML returned by the server. For every attribute listed in attributesToHighlight, Meilisearch returns a highlighted copy in each hit's _formatted object, with <em> tags wrapping the matched terms, so highlighting comes for free:

```html
<article class="search-result">
  <h3><a href="/posts/getting-started-docker-compose/">
    Getting Started with <em>Docker</em> Compose
  </a></h3>
  <p><em>Docker</em> Compose simplifies multi-container application
  deployment by defining services in a single YAML file...</p>
  <span class="search-meta">2026-01-15 - docker, devops</span>
</article>
```

Style it with whatever CSS you already use on your site. There is no framework-specific markup to work around.
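One consequence of hx-push-url="true": the address bar now shows /search?q=..., so a reload or a shared link requests that URL without HTMX, and a bare fragment would render as an unstyled page. A common pattern, sketched here in Python with hypothetical helper names, is to check the HX-Request header that HTMX adds to its own requests and return the full page when it is absent. HTMX also sends HX-History-Restore-Request when it has to refetch a page for the back button, and that case needs the full page too. The page shell and the /static/htmx.min.js path are placeholders for your own layout.

```python
import html
from typing import Mapping


def is_htmx_fragment_request(headers: Mapping[str, str]) -> bool:
    """True when HTMX asked for just the results fragment.

    HTMX marks its AJAX requests with "HX-Request: true". When it refetches a
    page for back/forward navigation it also sends
    "HX-History-Restore-Request: true" and expects a full page.
    """
    return (
        headers.get("HX-Request") == "true"
        and headers.get("HX-History-Restore-Request") != "true"
    )


def render_search_response(headers: Mapping[str, str], q: str, results_fragment: str) -> str:
    """Return the bare fragment for HTMX, or a full page for direct visits."""
    if is_htmx_fragment_request(headers):
        return results_fragment
    # Hypothetical minimal page shell; in a real site, reuse your base layout.
    return f"""<!doctype html>
<html>
<head><script src="/static/htmx.min.js"></script></head>
<body>
<div class="search-container">
  <input type="search" name="q" value="{html.escape(q)}"
         hx-get="/search" hx-trigger="keyup changed delay:300ms"
         hx-target="#results" hx-push-url="true">
  <div id="results">{results_fragment}</div>
</div>
</body>
</html>"""
```

In Flask you would pass request.headers as the headers argument; its lookups are case-insensitive. The same full-page branch also serves the no-JavaScript fallback when the input sits inside a plain GET form.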

The Backend Search Endpoint

The backend is a thin proxy: receive the query from HTMX, ask Meilisearch for results, render an HTML fragment, return it. Here is a complete implementation in Python using Flask:

```python
from flask import Flask, request, render_template_string
import meilisearch

app = Flask(__name__)
client = meilisearch.Client("http://localhost:7700", "your-search-only-key")
index = client.index("articles")

# The cropped, highlighted snippet lives in _formatted.content.
RESULT_TEMPLATE = """
{% for hit in hits %}
<article class="search-result">
  <h3><a href="/posts/{{ hit.slug }}/">{{ hit._formatted.title | safe }}</a></h3>
  <p>{{ hit._formatted.content | safe }}</p>
  <span class="search-meta">{{ hit.date }}</span>
</article>
{% endfor %}
{% if not hits %}
<p class="no-results">No results found.</p>
{% endif %}
"""


@app.route("/search")
def search():
    q = request.args.get("q", "").strip()
    if not q:
        # An empty fragment clears stale results when the input is emptied.
        return "<div></div>"

    results = index.search(q, {
        "limit": 10,
        "attributesToHighlight": ["title", "content"],
        "attributesToCrop": ["content"],
        "cropLength": 30,
    })
    return render_template_string(RESULT_TEMPLATE, hits=results["hits"])
```

The equivalent in Node.js with Express looks almost identical:

```javascript
const express = require("express");
const { MeiliSearch } = require("meilisearch");

const app = express();
const client = new MeiliSearch({
  host: "http://localhost:7700",
  apiKey: "your-search-only-key"
});
const index = client.index("articles");

app.get("/search", async (req, res) => {
  const q = (req.query.q || "").trim();
  if (!q) return res.send("<div></div>");

  const results = await index.search(q, {
    limit: 10,
    attributesToHighlight: ["title", "content"],
    attributesToCrop: ["content"],
    cropLength: 30
  });

  const html = results.hits.map(hit => `
    <article class="search-result">
      <h3><a href="/posts/${hit.slug}/">${hit._formatted.title}</a></h3>
      <p>${hit._formatted.content}</p>
      <span class="search-meta">${hit.date}</span>
    </article>
  `).join("");

  res.send(html || '<p class="no-results">No results found.</p>');
});

app.listen(3000);
```

A few important details in both implementations:

The attributesToCrop option with cropLength: 30 tells Meilisearch to return content snippets of approximately 30 words centered around the match. This keeps the response payload small and the displayed results clean - you do not need to send the entire article body to the browser just to show a preview.

When the query is empty or missing, return an empty <div> so the results area clears out. Without this, stale results from the previous search persist after the user clears the input field.
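Both implementations also trust the document text: Meilisearch inserts its <em> tags into otherwise unescaped content, so | safe in Jinja and the raw template literal in Express will render any HTML that happens to be in a title or post body. If your content is not fully trusted, one approach, sketched below in Python, is to ask Meilisearch for plain-text sentinel markers through the highlightPreTag and highlightPostTag search parameters, escape the whole string, and only then turn the sentinels into tags. The sentinel strings and the safe_highlight name are hypothetical; pick markers that cannot occur in your content.

```python
import html

# Hypothetical sentinel markers; choose strings that never appear in your content.
PRE_TAG = "__hl_start__"
POST_TAG = "__hl_end__"

# Search parameters asking Meilisearch to wrap matches in the sentinels
# instead of its default <em> tags.
SEARCH_PARAMS = {
    "limit": 10,
    "attributesToHighlight": ["title", "content"],
    "attributesToCrop": ["content"],
    "cropLength": 30,
    "highlightPreTag": PRE_TAG,
    "highlightPostTag": POST_TAG,
}


def safe_highlight(formatted: str) -> str:
    """Escape all document text, then turn the sentinels into <mark> tags."""
    escaped = html.escape(formatted)
    return escaped.replace(PRE_TAG, "<mark>").replace(POST_TAG, "</mark>")
```

In Flask you could register it with app.add_template_filter(safe_highlight, "hl") and render {{ hit._formatted.title | hl | safe }}; the Express version needs the same escape-then-replace step before building the template literal.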

For production deployments, add response caching. A 60-second TTL using HTTP Cache-Control headers or a server-side cache (Redis, an in-memory dictionary, or even a simple LRU cache) reduces Meilisearch load on popular queries. Most search workloads follow a power law - a small number of queries account for the majority of traffic.
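For the in-memory option, a dictionary with expiry timestamps is enough on a single process. The TTLCache class below is a hypothetical sketch, not a library API; functools.lru_cache has no expiry, which is why it is not used directly. Key the cache on everything that changes the results, such as the query and any filter.

```python
import threading
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """Small in-memory cache whose entries expire ttl seconds after being stored."""

    def __init__(self, ttl: float = 60.0, max_entries: int = 1000,
                 clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl
        self.max_entries = max_entries  # must be >= 1
        self.clock = clock
        self._data: Dict[Hashable, Tuple[float, Any]] = {}
        self._lock = threading.Lock()

    def get_or_set(self, key: Hashable, compute: Callable[[], Any]) -> Any:
        now = self.clock()
        with self._lock:
            entry = self._data.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # fresh hit: skip Meilisearch entirely
        # Miss or expired: compute outside the lock so a slow search does not
        # block other requests (two concurrent misses may both compute).
        value = compute()
        with self._lock:
            if len(self._data) >= self.max_entries:
                # Bound memory: drop expired entries first, then the oldest insert.
                self._data = {k: v for k, v in self._data.items() if v[0] > now}
                if len(self._data) >= self.max_entries:
                    del self._data[next(iter(self._data))]
            self._data[key] = (now + self.ttl, value)
        return value
```

In a route, wrap the Meilisearch call: results = cache.get_or_set(q, lambda: index.search(q, params)), where params is the search-parameter dict. With several worker processes each one keeps its own copy, which is where Redis becomes the better fit.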

Advanced Features: Facets, Filters, and Keyboard Navigation

Once basic search works, you can layer on additional features without touching a JavaScript framework.

Faceted Filtering

Enable faceted search by updating your Meilisearch index settings:

```bash
curl -X PATCH 'http://localhost:7700/indexes/articles/settings' \
  -H 'Authorization: Bearer your-master-key' \
  -H 'Content-Type: application/json' \
  --data-binary '{
    "filterableAttributes": ["tags", "category"],
    "sortableAttributes": ["date"]
  }'
```

Add a category filter dropdown alongside the search input:

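```html
<select
  name="category"
  hx-get="/search"
  hx-include="[name='q']"
  hx-trigger="change"
  hx-target="#results"
>
  <option value="">All Categories</option>
  <option value="linux">Linux</option>
  <option value="docker">Docker</option>
  <option value="ai">AI</option>
</select>
```

The hx-include="[name='q']" attribute tells HTMX to include the current search input value when the dropdown changes. Give the search input a matching hx-include="[name='category']" as well, otherwise typing a new query after picking a category drops the filter. This also assumes each document carries a category field, which the sample documents above do not; either add one at index time or filter on tags instead (tags = "docker" matches any document whose tags array contains "docker"). On the backend, translate the category parameter to a Meilisearch filter: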

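```python
# Hypothetical allowlist mirroring the dropdown options. Only these values
# reach the filter expression, so a crafted category parameter cannot inject
# extra filter syntax.
ALLOWED_CATEGORIES = {"linux", "docker", "ai"}


@app.route("/search")
def search():
    q = request.args.get("q", "").strip()
    category = request.args.get("category", "").strip()

    search_params = {
        "limit": 10,
        "attributesToHighlight": ["title", "content"],
        "attributesToCrop": ["content"],
        "cropLength": 30,
    }
    if category in ALLOWED_CATEGORIES:
        search_params["filter"] = f'category = "{category}"'

    results = index.search(q, search_params)
    return render_template_string(RESULT_TEMPLATE, hits=results["hits"])
```

Keyboard Navigation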

Add arrow-key navigation between search results with a small inline script attached to the HTMX swap event:

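```html
<div id="results" hx-on::after-swap="
  const first = this.querySelector('a');
  if (first) first.focus();
">
</div>
```

This focuses the first link after results load. Because the search input fires on keyup, that can pull focus out of the field while the user is still typing, so you may prefer to skip it and rely on the arrow keys alone. For full arrow-key support between results, a short keydown handler is enough: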

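```javascript
document.addEventListener("keydown", (e) => {
  // Only act inside the search UI so arrow keys still scroll the rest of the page.
  if (!e.target.closest(".search-container")) return;
  if (e.key === "ArrowDown" || e.key === "ArrowUp") {
    const links = [...document.querySelectorAll("#results a")];
    const i = links.indexOf(document.activeElement);
    // From the input (i === -1), ArrowDown moves to the first result.
    const next = e.key === "ArrowDown" ? i + 1 : i - 1;
    if (links[next]) { links[next].focus(); e.preventDefault(); }
  }
});
```

Together with the optional hx-on handler, that is the only custom JavaScript in the entire search implementation.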

Infinite Scroll Pagination

For longer result sets, add “search as you scroll” with a sentinel element at the bottom of the results:

```html
<div
  hx-get="/search?q=docker&page=2"
  hx-trigger="revealed"
  hx-swap="outerHTML"
>
  Loading more results...
</div>
```

The hx-trigger="revealed" fires when the element scrolls into view, loading the next page of results and replacing the sentinel with new result items plus a new sentinel for page 3. On the backend, pass the offset parameter to Meilisearch: search(q, {"offset": (page - 1) * 10, "limit": 10}).
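The hard-coded q=docker in that sentinel stands in for whatever the user typed, so in practice the server renders the sentinel at the end of each page. A minimal Python sketch, assuming 10 results per page and hypothetical helper names: page_params converts the page number into offset and limit, and next_page_sentinel URL-encodes the query, escapes it for the attribute, and stops emitting sentinels once a page comes back short.

```python
import html
from urllib.parse import urlencode

PER_PAGE = 10  # assumption: matches the "limit" used in the search route


def page_params(page: int, per_page: int = PER_PAGE) -> dict:
    """Translate a 1-based page number into Meilisearch offset/limit."""
    page = max(page, 1)
    return {"offset": (page - 1) * per_page, "limit": per_page}


def next_page_sentinel(q: str, page: int, hits_on_page: int,
                       per_page: int = PER_PAGE) -> str:
    """Sentinel <div> that loads page + 1 when revealed, or "" when done."""
    if hits_on_page < per_page:
        return ""  # a short page means there is nothing left to load
    url = "/search?" + urlencode({"q": q, "page": page + 1})
    return (
        f'<div hx-get="{html.escape(url)}" hx-trigger="revealed" hx-swap="outerHTML">'
        "Loading more results...</div>"
    )
```

A final page that happens to be exactly full costs one extra request that returns nothing, so render the "No results found" message only for page 1. If you also filter by category, add it to the encoded parameters. Meilisearch can alternatively paginate with the page and hitsPerPage parameters, which report an exact totalPages instead of an estimated total.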

Search Analytics

Meilisearch does not ship built-in query analytics, but you can add basic tracking with minimal effort. Log each query, the number of results returned, and a timestamp to a SQLite database on the backend:

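```python
import sqlite3

DB_PATH = "search_analytics.db"


def init_analytics():
    with sqlite3.connect(DB_PATH) as db:
        db.execute("""
            CREATE TABLE IF NOT EXISTS queries (
                q TEXT, hits INTEGER, ts DATETIME DEFAULT CURRENT_TIMESTAMP
            )
        """)


def log_query(q, hit_count):
    # sqlite3 connections are tied to their creating thread by default and Flask
    # serves requests on several threads, so open a short-lived connection per call.
    with sqlite3.connect(DB_PATH) as db:  # the with-block commits on success
        db.execute("INSERT INTO queries (q, hits) VALUES (?, ?)", (q, hit_count))


init_analytics()

# Inside your search route, after getting results:
#     log_query(q, len(results["hits"]))
```

This gives you a “top searches” report and a list of zero-result queries (which reveal content gaps), each from a single SQL query. No external analytics service required.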
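Those two reports can be sketched as plain SQL over the queries table. The function names are hypothetical, and lower() folds case so "Docker" and "docker" count together. Because the input fires on debounced keyup, partial prefixes such as "dock" also get logged, so expect some noise in both lists.

```python
import sqlite3


def top_searches(db: sqlite3.Connection, limit: int = 10):
    """Most frequent queries, case-folded, as (term, count) tuples."""
    return db.execute(
        "SELECT lower(q) AS term, COUNT(*) AS n FROM queries "
        "GROUP BY lower(q) ORDER BY n DESC, term LIMIT ?",
        (limit,),
    ).fetchall()


def zero_result_searches(db: sqlite3.Connection, limit: int = 10):
    """Queries that found nothing: candidates for new content or synonyms."""
    return db.execute(
        "SELECT lower(q) AS term, COUNT(*) AS n FROM queries WHERE hits = 0 "
        "GROUP BY lower(q) ORDER BY n DESC, term LIMIT ?",
        (limit,),
    ).fetchall()
```

Usage: with sqlite3.connect("search_analytics.db") as db: print(top_searches(db)).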

Performance Comparison

The practical difference between this stack and a framework-heavy alternative is significant:

| Metric | Meilisearch + HTMX | Algolia + React + InstantSearch.js |
|---|---|---|
| JS payload (gzipped) | ~14 KB | 80-120 KB |
| Search latency (p95) | <50ms | <50ms |
| Build tooling required | None | Webpack/Vite + npm |
| Backend languages supported | Any | Any (but JS frontend locked in) |
| Time to interactive | Fast (minimal JS parsing) | Slower (framework hydration) |

Both stacks deliver fast search results from the server side. The difference shows up in everything around the search: the JavaScript your users download, the toolchain you maintain, and the cognitive overhead of managing client-side state. If your search lives on a blog, documentation site, or content-heavy application where you do not already have a React codebase, the Meilisearch + HTMX combination gets you to the same result with a fraction of the complexity.

The full setup - Docker Compose, a Python or Node backend, and an HTML template - can be up and running in under an hour. You skip the frontend node_modules folder, the build pipeline, and the framework upgrade treadmill entirely.