How to Fix LLM Hallucinations in Production Code

Fixing LLM hallucinations in production requires a layered defense strategy: rigorous Chain-of-Verification steps at inference time, grounding the model’s output in verified external data sources, and automated evaluation suites that give you a hallucination rate you can track and regress against in CI . No single technique eliminates the problem, but combining prompt-level constraints, retrieval-augmented grounding , inference-time self-verification, and architectural validation layers reduces it to a manageable - and measurable - engineering challenge.

What Is Hallucination? A Taxonomy for Developers

The term “hallucination” has become an umbrella label that software engineers use to describe almost any unexpected LLM output. That imprecision is dangerous in production because each failure mode has a distinct cause and a distinct fix. Grouping them together leads to applying the wrong remedy to the wrong problem, spending engineering cycles on prompt engineering when the actual issue is retrieval quality, or adding RAG when the real failure is instruction-following. Before you can fix hallucinations, you need a precise vocabulary for what you are actually observing.

Factual hallucination is the most widely discussed type: the model invents facts that do not exist. In a developer context this is particularly insidious - wrong API endpoint paths, non-existent library functions, fabricated parameter names, incorrect version numbers. Unlike a human writer who might hedge (“I think the method is called…”), an LLM delivers invented facts with the same fluency and confidence as correct ones. The model is not lying; it is completing a token sequence that is statistically coherent but factually wrong. The parametric memory encoded during training is both the model’s strength and its liability - it has seen millions of code examples, but those memories are blended and reconstructed, not retrieved.

Faithfulness hallucination is the most dangerous variant for teams building RAG systems, because it occurs precisely when developers believe they have solved the problem. The model has been given a retrieved context chunk that contains the correct answer, yet it produces an output that contradicts that context. This happens when the model’s parametric priors are stronger than the retrieved signal, when the context window is crowded with competing information, or when the prompt does not sufficiently instruct the model to prefer retrieved evidence over internal knowledge. Faithfulness failures undermine the entire value proposition of RAG - you have done the retrieval work, but the model is still making things up.

Instruction-following failures are often labeled as hallucinations even though they are technically a different problem: the model ignores format constraints. It outputs JSON with extra, undeclared fields. It skips required keys. It returns a numbered list when the schema called for an array of objects. These failures matter enormously in production pipelines where downstream code attempts to parse model output - a missing required field can throw a KeyError that crashes the application. Understanding that this is a prompt constraint problem, not a factual accuracy problem, means the fix is structured output enforcement rather than grounding.

Confidence miscalibration is a subtler but production-critical issue. The model states false information with high confidence and true information with hedging. It says “The correct answer is X” when X is wrong, and “I believe it might be Y, though I am not entirely certain” when Y is right. This makes programmatic filtering extremely difficult - you cannot simply threshold on linguistic confidence markers to route uncertain outputs to a human reviewer, because the signals are inverted. Calibration is a property of the model’s training, and different model families have dramatically different calibration characteristics. Some frontier models have improved significantly here, but miscalibration remains a real operational concern.

Context sensitivity rounds out the taxonomy: the same model, same prompt, same question - different answers on different runs. This is not a bug but a feature of temperature sampling. At temperature > 0, the model samples from a probability distribution rather than returning the single highest-probability token. Stochasticity is useful for creative tasks; it is a liability when you need deterministic, factually consistent answers. Understanding sampling as a root cause - and knowing when to set temperature to 0 for factual tasks - is a prerequisite for everything that follows.

Prevention at the Prompt Level

The cheapest hallucination fix is a better prompt. Before spending engineering cycles on RAG infrastructure or eval pipelines, it is worth exhausting what is achievable through prompt engineering, because these changes require no code beyond the prompt itself and can be deployed in minutes. The techniques below have documented effects on hallucination rates across standard benchmarks and are applicable to any hosted or self-hosted LLM.

System prompt constraints are the first line of defense. An explicit system instruction to say “I don’t know” when uncertain, to cite sources for factual claims, and to refuse questions that fall outside the provided context does measurably reduce confabulation. The key insight is that without explicit permission to express uncertainty, most models default to answering - the training process rewards fluent, helpful responses. Giving the model explicit license to defer, and providing examples of what deferral looks like, changes the probability distribution over its output tokens in a useful direction. This is not a complete solution, but it is a free one.

Chain-of-Thought (CoT) prompting forces the model to reason step-by-step before committing to a final answer. Instead of prompting “What is the output of this function?”, you prompt “Think through this step by step, then give your final answer.” On reasoning-heavy tasks - code analysis, multi-hop factual questions, logical deduction - CoT has been shown to reduce factual errors by 20–40% on standard benchmarks. The mechanism is not mysterious: by externalizing intermediate reasoning, the model is less likely to skip steps that would reveal an inconsistency. The chain of reasoning also gives you a diagnostic artifact - when the final answer is wrong, you can read the chain and identify exactly where the reasoning went astray.

A concrete CoT prompt for a code analysis task looks like this:

System: You are a code reviewer. When asked to analyze code, always:

1. Identify the purpose of each function

2. Trace the data flow step by step

3. Note any potential edge cases

4. Then state your conclusion

Only after completing steps 1-4 should you give your final answer.

If you are uncertain about any step, say "I am not sure about this step"

rather than guessing.Structured output constraints address instruction-following failures directly. Using the response_format parameter in the OpenAI API to specify a JSON schema, or using the instructor library for any OpenAI-compatible endpoint, forces the model to produce output that conforms to a defined structure. The model cannot add extra fields that are not in the schema; required fields cannot be omitted. This does not prevent the model from generating wrong content inside a valid field, but it eliminates an entire class of failures caused by format non-compliance. For production pipelines where model output feeds into downstream parsing logic, structured output enforcement is not optional.

Few-shot examples with “I don’t know” responses are underused. If your few-shot examples only demonstrate the model answering correctly, you are implicitly training the model (at the context level) to always provide an answer. Including 1–2 examples where the model correctly defers - “I don’t have enough information to answer this accurately” - recalibrates the model’s in-context behavior. This is especially valuable when the question distribution includes edge cases that are likely outside the model’s reliable knowledge.

The Inference-Time Compute Solution

Giving the model more “thinking time” before it commits to a final answer is one of the most effective and most underutilized hallucination reduction techniques available in 2026. The core insight is that a single forward pass - one call to the model, one response - is the cheapest and most hallucination-prone mode of operation. Spending more compute at inference time, by generating multiple candidates or by running a verification pass, trades cost for accuracy in a controlled way.

Best-of-N sampling is the simplest form of inference-time compute: generate N candidate responses to the same query, score each one against a quality criterion, and return the highest-scoring response. For factual questions with a verifiable answer, the scoring can be as simple as checking which responses are consistent with each other - if 4 out of 5 sampled responses agree on a factual claim, the consensus answer is likely more reliable than any single sample. For more complex quality assessment, a secondary LLM call can score each candidate. Best-of-N is embarrassingly parallelizable - all N samples can be requested simultaneously - so the latency increase is much smaller than the cost increase.

Chain-of-Thought plus Self-Verification is a two-call pattern worth implementing explicitly. Call 1 generates a draft answer with full reasoning chain. Call 2 receives the original question, the draft answer, and the reasoning chain, and is asked to identify any errors or inconsistencies before producing a revised final answer. This pattern mimics the cognitive process of checking your own work. In practice it looks like this:

# Call 1: Generate draft with reasoning

draft_prompt = f"""

Question: {question}

Think through this carefully, step by step. Show your reasoning,

then provide your answer.

"""

draft_response = llm.complete(draft_prompt)

# Call 2: Self-verify

verification_prompt = f"""

Original question: {question}

A draft answer was produced with this reasoning:

{draft_response}

Review this answer carefully. Identify any factual errors, logical

inconsistencies, or unsupported claims. If the answer contains errors,

correct them and explain what was wrong. If the answer is correct,

confirm it and explain why you are confident.

Provide a final, verified answer.

"""

final_response = llm.complete(verification_prompt)The Reflexion pattern is a lighter-weight version of the same idea. After receiving the model’s initial response, a single follow-up call asks: “Is this answer correct? If not, correct it.” This is simple enough to implement in a few lines of code and has documented hallucination reduction across a wide range of task types. The Reflexion pattern works because the model, when explicitly asked to evaluate rather than generate, activates different internal attention patterns that are more skeptical and error-detecting. It is not a perfect verifier - the model can confirm its own errors - but it catches a meaningful fraction of hallucinations at low cost.

The cost-versus-quality trade-off for inference-time compute techniques is real and must be evaluated per application. A best-of-5 sampling strategy multiplies inference cost by 5x; a two-call Chain-of-Thought plus Self-Verification pattern adds roughly 1.5–2x cost. For a casual chatbot where occasional errors are tolerable, this is not justified. For applications in medical, legal, financial, or safety-critical contexts - where a single confident hallucination can cause real harm - the cost increase is negligible compared to the liability exposure. The right answer depends on the application, and the only way to know the improvement you are getting is to measure it with an eval suite.

Grounding with RAG and Knowledge Graphs

When factual accuracy is non-negotiable, grounding the model’s answers in verified external data sources is the most reliable architectural choice available. Prompt engineering and inference-time compute reduce hallucination rates; grounding changes the fundamental source of the model’s factual claims from unreliable parametric memory to a controlled, version-managed knowledge base that you own.

RAG as the first-line grounding solution works by providing relevant, verified document chunks alongside the query in the prompt context. The model is instructed to answer from the provided evidence rather than from internal memory. When the retrieved context contains the correct answer, and the model faithfully uses that context, factual hallucination on the covered domain drops dramatically. RAG is now table-stakes for any production LLM application where domain-specific accuracy matters. The implementation cost is low - vector databases like Qdrant, Weaviate, and pgvector are mature, and embedding models are cheap to run. The real engineering work is in document ingestion, chunking strategy, and retrieval quality.

Citation enforcement closes the loop between retrieval and output. Rather than allowing the model to produce a response that may or may not be supported by the retrieved context, the prompt requires the model to cite a specific chunk ID for every factual claim: “According to [source: chunk_42]…” A post-processing step then verifies that the cited chunk actually contains text that supports the claim - a relatively simple semantic similarity check. Claims that lack citations or cite chunks that do not support them are flagged, and the response can be re-routed to a verification queue or a secondary model for validation. Citation enforcement also creates an audit trail - when a user challenges a factual claim, you can immediately retrieve the source document and verify.

Knowledge Graphs for structured fact grounding address the domain where RAG performs least well: precise, structured facts about entities. Who is the CEO of a company? What is the current version of a software library? What are the known drug interactions for a medication? Unstructured document retrieval is a blunt instrument for these queries because the answer may be scattered across many documents and the retrieval may return outdated information. A local Neo4j graph or a SPARQL-queryable Wikidata endpoint provides deterministic, structured answers to entity-relationship queries. The LLM can use tool-calling to query the knowledge graph and incorporate the structured result into a natural language response, with the graph serving as a single source of truth for factual claims.

Retrieval quality as a hallucination multiplier is a point that gets less attention than it deserves. A RAG system with poor retrieval - chunks that are too large, embeddings that are semantically misaligned with the query, or a knowledge base that is stale or incomplete - can actively increase hallucination rates compared to a model with no retrieval at all. When the retrieved context is irrelevant or misleading, the model is forced to reconcile contradictory signals: the provided context says one thing, the model’s parametric knowledge says another. This conflict often resolves in the direction of confabulation. Retrieval quality is not a nice-to-have; it is a load-bearing component of your hallucination defense.

Automated Evaluation Suites

You cannot fix what you cannot measure. An intuition-based assessment of whether your model “seems to hallucinate less” after a prompt change is not engineering - it is guesswork. A production LLM system needs a hallucination rate metric with the same rigor as a service’s error rate: tracked over time, regressed in CI, tied to deployment decisions. Building an automated eval pipeline is the investment that makes every other technique in this post actionable.

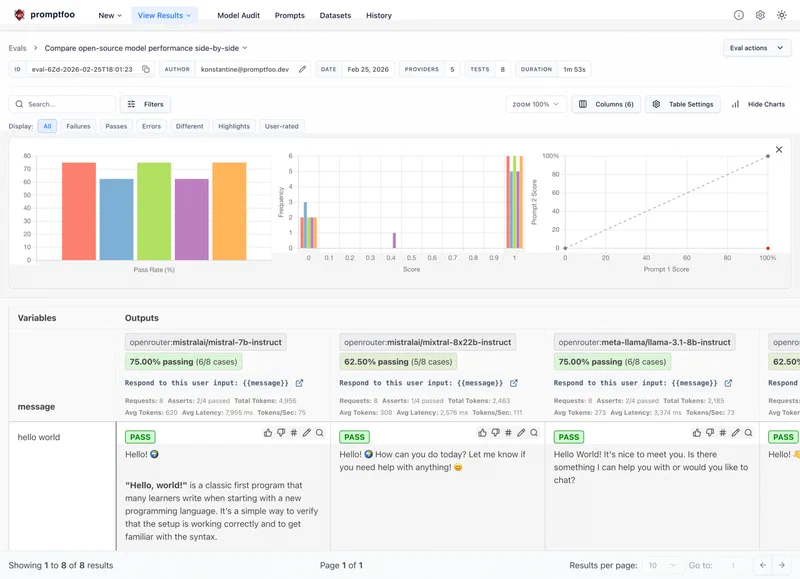

The current landscape of evaluation frameworks offers distinct tools for different use cases. The table below summarizes the four most widely used options:

| Framework | Best For | Metrics | CI/CD Integration | Licensing |

|---|---|---|---|---|

| Promptfoo | CLI-driven prompt testing, multi-model comparison, regression testing | Custom assertions, LLM-as-judge, similarity scores | First-class GitHub Actions support | Open source (MIT) |

| Ragas | RAG-specific evaluation, faithfulness and relevance scoring | Faithfulness, answer relevance, context precision, context recall | Python library, integrates with any test runner | Open source (Apache 2.0) |

| HELM | Comprehensive academic-grade benchmarking, cross-model comparison | 100+ metrics across 40+ scenarios | Designed for batch evaluation, not streaming CI | Open source (Apache 2.0) |

| DeepEval | Production monitoring, A/B testing, real-time evaluation | Hallucination, coherence, toxicity, custom G-Eval metrics | Native CI/CD integration, Pytest plugin | Open source + cloud tier |

For most production applications, Ragas and Promptfoo cover the essential bases. Ragas gives you the three metrics every RAG application must track: faithfulness (does the answer contradict the retrieved context?), answer relevance (does the answer actually address the question asked?), and context precision (is the retrieved context useful for answering the question, or is it noise?). A faithfulness score below 0.8 on your golden dataset is a signal that your system prompt needs stronger context-grounding instructions or your retrieval is returning irrelevant chunks. Promptfoo handles the prompt regression layer - ensuring that a prompt change intended to improve one metric does not silently degrade another.

Building a golden dataset is the prerequisite for meaningful evaluation, and it is a one-time investment that pays forward indefinitely. A golden dataset is a curated collection of 100–200 question-answer pairs with verified correct answers, representative of your application’s real query distribution. Each entry needs a question, a reference answer that has been human-verified, and (for RAG evals) the ground-truth context chunks that should support the answer. The curation process is tedious but not difficult. It is also the moment when you often discover that you did not have a clear definition of “correct” for edge cases - and resolving those ambiguities is valuable even before you run a single evaluation.

G-Eval - using a strong LLM as a judge to score another model’s outputs - has emerged as the current state-of-the-art for nuanced evaluation that goes beyond simple string matching or keyword overlap. A reference answer and a model-generated answer are both provided to a judge model (typically a frontier model like GPT-4o or Claude Opus) alongside a scoring rubric, and the judge assigns a numerical score. G-Eval correlates strongly with human judgments and can evaluate dimensions that are impossible to measure with deterministic metrics - factual completeness, appropriate hedging, logical coherence. DeepEval and Ragas both support G-Eval-style evaluation. The cost is a few cents per evaluation run against a frontier model API, which is trivial for a golden dataset of 200 examples.

Integrating evals into CI/CD is the step that transforms evaluation from a periodic check into a safety net. With Promptfoo, a GitHub Actions workflow can run your full eval suite on every pull request that touches a prompt template or model configuration, and block the merge if the faithfulness score drops below a defined threshold. A practical implementation:

# .github/workflows/eval.yml

name: LLM Eval Suite

on:

pull_request:

paths:

- 'prompts/**'

- 'src/llm/**'

jobs:

eval:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- name: Run Promptfoo evals

run: npx promptfoo eval --config promptfooconfig.yaml

- name: Check faithfulness threshold

run: |

SCORE=$(cat eval-results.json | jq '.summary.faithfulness')

if (( $(echo "$SCORE < 0.80" | bc -l) )); then

echo "Faithfulness score $SCORE is below threshold 0.80"

exit 1

fiThis pattern means that a well-intentioned prompt edit that accidentally weakens grounding constraints is caught before it ships to production users.

Architectural Patterns for Hallucination-Resistant Production Systems

Individual techniques - better prompts, CoT, RAG, evals - are necessary but not sufficient. A production-grade LLM system treats hallucination as a systems problem, not a model problem, and implements defense-in-depth at every layer. The architecture should assume that the model will hallucinate on some percentage of requests and build infrastructure to detect, handle, and recover from those failures gracefully.

Output validation layer is the most important architectural addition for pipelines where model output feeds downstream systems or is presented to users without human review. Every model response should pass through a validation stage before it is consumed. For structured outputs, Pydantic models with strict field validation catch format violations. For factual content, a secondary LLM call can evaluate the response against a set of known facts or the retrieved context. For code generation, the output can be run through a linter or static analyzer. The validation layer is not about eliminating all errors - it is about catching the most egregious failures before they cause visible damage. Validation failures should be logged with the full request context for later analysis; this log is your most valuable source of data for improving the system.

from pydantic import BaseModel, field_validator

from typing import Optional

class LLMResponse(BaseModel):

answer: str

confidence: float

sources: list[str]

@field_validator('confidence')

@classmethod

def validate_confidence(cls, v):

if not 0.0 <= v <= 1.0:

raise ValueError('Confidence must be between 0 and 1')

return v

@field_validator('sources')

@classmethod

def sources_not_empty(cls, v):

if len(v) == 0:

raise ValueError('Response must cite at least one source')

return v

def validated_llm_call(query: str, context: str) -> Optional[LLMResponse]:

raw = llm.complete(build_prompt(query, context))

try:

return LLMResponse.model_validate_json(raw)

except Exception as e:

log_validation_failure(query, raw, str(e))

return NoneHuman-in-the-loop for high-stakes outputs is not a fallback for a broken system - it is a deliberate architectural choice for applications where the cost of a hallucination exceeds the cost of human review latency. When the output validation layer detects a low-confidence response, or when the model explicitly reports uncertainty in its self-assessment, the response is routed to a human reviewer queue rather than auto-published or auto-executed. This pattern is standard in medical AI applications (where every diagnosis suggestion is reviewed by a clinician), in legal AI (where every contract clause suggestion is reviewed by a lawyer), and should be standard in any domain where a single confident hallucination can cause material harm. The reviewer’s decision - approve, reject, or correct - also feeds back into your golden dataset, improving future evaluation quality.

Canary deployments for prompt changes apply the same deployment discipline to LLM prompts that you would apply to application code. A new prompt version is released to 5% of traffic while the existing prompt continues to serve the remaining 95%. Hallucination rate metrics, validation failure rates, and user feedback scores are compared between the two groups over a defined observation window - typically 24–48 hours of production traffic. If the new prompt version performs at least as well as the baseline across all metrics, the rollout proceeds. If any metric regresses, the canary is stopped and the prompt change is reverted. This pattern prevents prompt regressions that pass automated evals but fail on the long tail of real-world query distributions that no golden dataset fully covers.

Fallback strategies complete the defense architecture. When the primary model produces an output that fails the validation layer - wrong format, citation-unsupported claims, self-reported low confidence - the system should not simply return an error or silently serve a bad response. A tiered fallback strategy handles these cases:

- Retry with a corrective prompt: on first validation failure, retry the same model with an augmented prompt that explicitly surfaces the validation failure: “Your previous response was missing required citations. Please try again, ensuring every factual claim includes a [source: id] citation.”

- Escalate to a higher-capability model: if the retry also fails, route the request to a more capable (and more expensive) model. A pipeline that normally uses a fast, cost-efficient model for routine queries can escalate to a frontier model for edge cases that require higher reliability. This keeps average costs low while preserving quality on hard cases.

- Return a safe default response: if both retries fail, return a graceful degradation response - “I was unable to provide a verified answer to this question. Here is what I know, but please verify before acting on it: [partial response]” - rather than silently serving a hallucinated answer or throwing an unhandled exception.

Logging the full context of every fallback invocation is essential. Frequent fallbacks on a specific query type indicate a systematic gap - in your golden dataset, in your retrieval quality, or in your prompt design - that deserves engineering attention.

Code Hallucinations: The Most Common Developer Failure Mode

Code generation deserves its own section because it is where most developers first encounter LLM hallucinations in practice, and the failure modes are distinct from general factual hallucination. A hallucinated fact in a prose response is embarrassing; a hallucinated function signature in generated code causes a TypeError at runtime, often in a context that is difficult to debug because the developer trusted the model and did not independently verify the generated code.

The most common code hallucinations are: non-existent library methods (the model invents a plausible-sounding method that does not exist in the actual library), wrong function signatures (calling an existing function with the wrong argument names, wrong argument order, or wrong types), outdated API usage (using an API that was valid in training data but has since been deprecated or changed), and version confusion (mixing syntax from incompatible library versions).

The systematic fix for code hallucinations is to provide the actual library documentation, type signatures, or source code as grounding context in the prompt. This is a specialized form of RAG - instead of grounding against a document knowledge base, you are grounding against the source of truth for the code you are generating. For libraries that change frequently, maintaining an up-to-date index of type stubs and docstrings in your RAG knowledge base and retrieving the relevant signatures at generation time eliminates the “outdated API” hallucination almost entirely. For any generated code that will be committed to a production codebase, static analysis (mypy, pyright, or language-server-based validation) in your CI pipeline catches signature errors that passed through all other defenses.

Decision Framework: Which Technique to Use

The right combination of hallucination mitigation techniques depends on your application’s accuracy requirements, budget, and latency constraints. Rather than applying every technique to every application, use this decision framework:

For applications where occasional errors are tolerable (creative assistants, content brainstorming, exploratory chat): start with system prompt constraints and CoT. Add structured output enforcement if the output feeds downstream parsing. Skip inference-time compute and RAG unless you have a specific factual domain.

For applications where factual accuracy matters but errors are recoverable (internal knowledge bases, developer tooling, document summarization): add RAG grounding with citation enforcement. Implement a Ragas eval suite and run it in CI. Add output validation with Pydantic. Use the Reflexion pattern for queries that trigger low-confidence signals.

For applications where a single hallucination can cause material harm (medical, legal, financial, safety-critical): implement the full stack - RAG with knowledge graph grounding for structured facts, best-of-N sampling or two-call Chain-of-Thought plus Self-Verification, strict output validation, human-in-the-loop for low-confidence responses, canary deployments for all prompt changes, and a tiered fallback strategy. Accept the 2–5x cost increase as the price of operating in a high-stakes domain.

The most important meta-principle is that you cannot optimize what you do not measure. Whatever tier your application falls into, the eval suite should come first. Build your golden dataset, establish a baseline hallucination rate, and then apply techniques in order of cost-effectiveness. Every change to your prompt, retrieval strategy, or model should be validated against the eval suite before shipping. Hallucination is not a solved problem, but it is an engineerable one.