The Claude Code Source Leak: What 512,000 Lines of TypeScript Revealed About AI Agent Architecture

A missing line in a build configuration file caused the largest accidental source code disclosure in AI tooling history. On March 31, 2026, Anthropic shipped version 2.1.88 of its @anthropic-ai/claude-code package with a 59.8 MB JavaScript source map file included. That file contained the complete client-side agent harness for Claude Code - 512,000 lines of unobfuscated TypeScript across 1,906 files. Within hours, the code was mirrored thousands of times, a clean-room Python/Rust rewrite became the fastest-growing repository in GitHub history, and Anthropic’s legal response caused collateral damage across the platform. The incident happened to coincide with a separate supply-chain attack on the axios npm package, compounding an already chaotic day for developers who depend on these tools.

How a Missing .npmignore Turned a Routine Release Into Front-Page News

Anthropic uses Bun as its bundler for Claude Code. Bun generates JavaScript source map files (.map) by default during the build process. These map files are debugging artifacts that link minified output back to the original TypeScript sources, reversing the entire obfuscation step.

The failure was a textbook npm packaging mistake: version 2.1.88’s release pipeline did not exclude the .map file. A properly configured .npmignore entry (such as *.map) or a restrictive files field in package.json would have prevented the file from shipping. Neither was in place.
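A release pipeline can also enforce this mechanically instead of relying on ignore files alone. The sketch below is a minimal, hypothetical pre-publish guard: it takes the list of paths that would go into the tarball (for example, as reported by `npm pack --dry-run --json`) and refuses to continue if any debug artifact is present. The function names and the suffix list are assumptions for illustration, not anything taken from Anthropic's pipeline.

```typescript
// Hypothetical pre-publish guard. Names and the suffix policy are illustrative.

/** Suffixes that should never appear in a published tarball (assumed policy). */
const FORBIDDEN_SUFFIXES: readonly string[] = [".map"];

/** Returns the packaged paths that end with a forbidden suffix. */
function findForbiddenFiles(
  packagedPaths: readonly string[],
  forbidden: readonly string[] = FORBIDDEN_SUFFIXES,
): string[] {
  return packagedPaths.filter((path) =>
    forbidden.some((suffix) => path.toLowerCase().endsWith(suffix)),
  );
}

/** Throws when a forbidden file is present, so a CI step can fail the release. */
function assertPublishable(packagedPaths: readonly string[]): void {
  const leaked = findForbiddenFiles(packagedPaths);
  if (leaked.length > 0) {
    throw new Error(
      `Refusing to publish; debug artifacts found: ${leaked.join(", ")}`,
    );
  }
}
```

Run as the last step before `npm publish`, a check like this fails closed: a forgotten ignore rule stops the release rather than shipping the file.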

The source map itself did not contain the TypeScript inline. It held a sourcesContent reference pointing to a zip archive on Anthropic’s Cloudflare R2 storage bucket, which was publicly accessible. Anyone who inspected the .map file could download and decompress the full source archive.

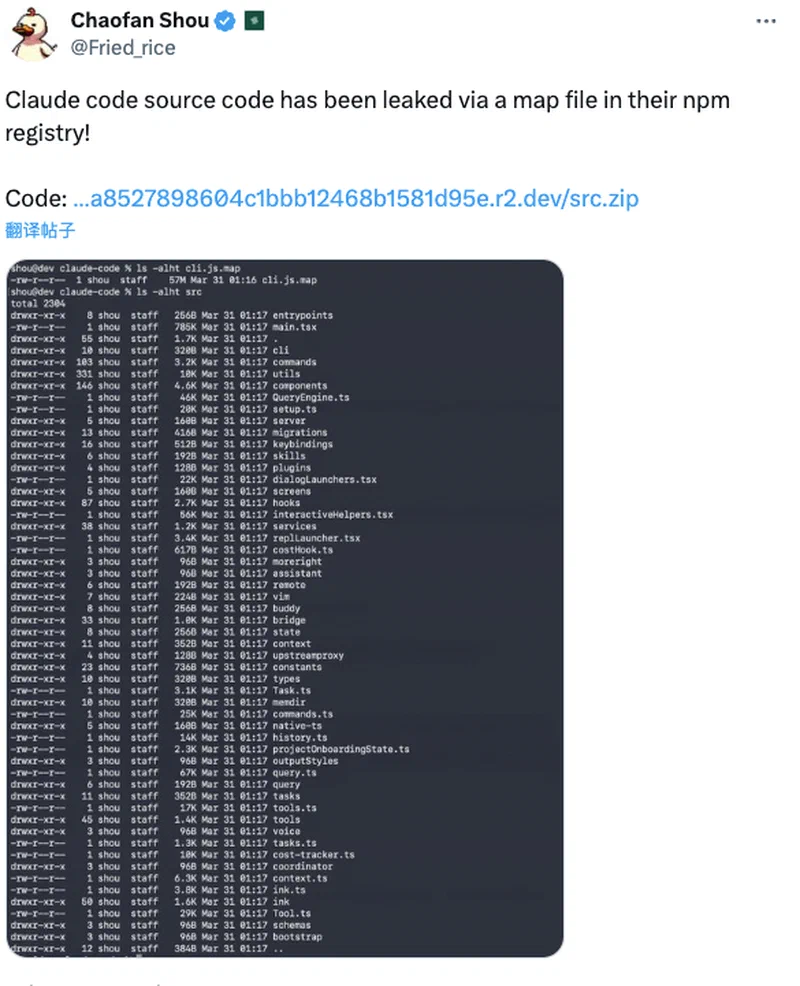

At 4:23 AM ET on March 31, security researcher Chaofan Shou (an intern at Solayer Labs) posted the finding on X with a direct download link. The tweet accumulated over 21 million views. By the time Anthropic pulled the package version and locked down the R2 bucket, the code was already being analyzed by thousands of developers worldwide.

The timing was especially painful. This came just days after Anthropic accidentally leaked references to an unreleased model codenamed “Mythos” through a separate CMS misconfiguration, making it the second public embarrassment in under a week. Anthropic’s official statement called it “a release packaging issue caused by human error, not a security breach.” Boris Cherny, the engineering lead of Claude Code, confirmed that no one was fired over the incident.

What 512,000 Lines Actually Contained

The leaked code contained no model weights or training data. Instead, the 1,906 files comprised the complete orchestration layer that wraps Claude’s API and turns it into an autonomous coding agent. This makes it the most detailed publicly available blueprint of a production AI agent harness - and the architectural patterns are worth studying regardless of which AI tools you use.

Three-Layer Self-Healing Memory

Rather than a store-everything RAG approach, Claude Code uses hierarchical memory across three tiers: an index layer (MEMORY.md, always loaded, with roughly 150 characters per pointer and one pointer per line), topic files (demand-loaded when relevant), and transcripts (grep-only, never loaded into context directly). A background process called “autoDream” runs during idle time via forked subagents with restricted tool access. It consolidates memories, merges observations, removes contradictions, and converts vague insights into concrete facts, so that the context is clean and relevant when the user returns.
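The three tiers can be sketched in a few lines. This is an illustrative model only: the type, the function names, and the keyword-based relevance rule are assumptions, not the leaked implementation. It shows the key property of the design, which is that only the small index is always in context, while topic files and transcripts are pulled in selectively.

```typescript
// Illustrative three-tier memory model. All names are hypothetical.

interface MemoryStore {
  index: string[];                 // tier 1: short pointers, always in context
  topics: Record<string, string>;  // tier 2: topic files, loaded on demand
  transcripts: string[];           // tier 3: raw history, searched but never loaded whole
}

const MAX_POINTER_CHARS = 150;

/** Adds an index pointer, truncated to the per-line budget. */
function addPointer(store: MemoryStore, pointer: string): void {
  store.index.push(pointer.slice(0, MAX_POINTER_CHARS));
}

/** Builds context: the whole index plus only the topics named in the query. */
function buildContext(store: MemoryStore, query: string): string[] {
  const context = store.index.slice();
  const lowered = query.toLowerCase();
  for (const topic of Object.keys(store.topics)) {
    if (lowered.includes(topic.toLowerCase())) {
      context.push(store.topics[topic] ?? "");
    }
  }
  return context;
}

/** Grep-style transcript search: returns matching lines only. */
function grepTranscripts(store: MemoryStore, pattern: RegExp): string[] {
  return store.transcripts.filter((line) => pattern.test(line));
}
```

A real system would use a better relevance signal than substring matching on topic names; the point here is the loading policy per tier.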

Five-Stage Context Management Cascade

The main query loop (query.ts, lines 307-1,728) manages context pressure through a strict sequence:

| Stage | Function | Location in query.ts |
|---|---|---|
| Tool result budgeting | Caps individual tool outputs | Line 379 |
| Microcompact | Trims low-value content | Line 413 |
| Context collapse | Merges related entries | Line 440 |
| Autocompact | Summarizes older conversation turns | Line 453 |
| Hard truncation | Drops content as last resort | Final fallback |

Each stage has distinct criteria for what to keep and what to discard. The system only escalates to the next stage when the previous one cannot free enough tokens. This is far more sophisticated than what most open-source agent frameworks implement - where context management typically means simple truncation.
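The escalation logic can be sketched as a loop over ordered stages that stops as soon as the context fits the budget. The sketch below is a simplified illustration, not the code from query.ts: the types, the `runCascade` name, the 100-token cap, and the two example stages are assumptions, and token counts stand in for real content.

```typescript
// Simplified escalation cascade. Stage logic and numbers are placeholders.

interface ContextEntry {
  id: string;
  tokens: number;
}

interface ReductionStage {
  label: string;
  apply: (entries: ContextEntry[], budget: number) => ContextEntry[];
}

function totalTokens(entries: ContextEntry[]): number {
  return entries.reduce((sum, entry) => sum + entry.tokens, 0);
}

/** Applies stages in order, escalating only while the context is over budget. */
function runCascade(
  entries: ContextEntry[],
  budget: number,
  stages: ReductionStage[],
): { entries: ContextEntry[]; applied: string[] } {
  let current = entries;
  const applied: string[] = [];
  for (const stage of stages) {
    if (totalTokens(current) <= budget) break; // cheaper stage was enough
    current = stage.apply(current, budget);
    applied.push(stage.label);
  }
  return { entries: current, applied };
}

const exampleStages: ReductionStage[] = [
  {
    label: "tool result budgeting",
    // Caps each entry at 100 tokens (arbitrary illustrative limit).
    apply: (entries) =>
      entries.map((entry) => ({ ...entry, tokens: Math.min(entry.tokens, 100) })),
  },
  {
    label: "hard truncation",
    // Drops the oldest entries until the budget is met.
    apply: (entries, budget) => {
      const kept = entries.slice();
      while (kept.length > 0 && totalTokens(kept) > budget) kept.shift();
      return kept;
    },
  },
];
```

Ordering the stages from least to most destructive means content is dropped only when every cheaper option has failed to free enough tokens.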

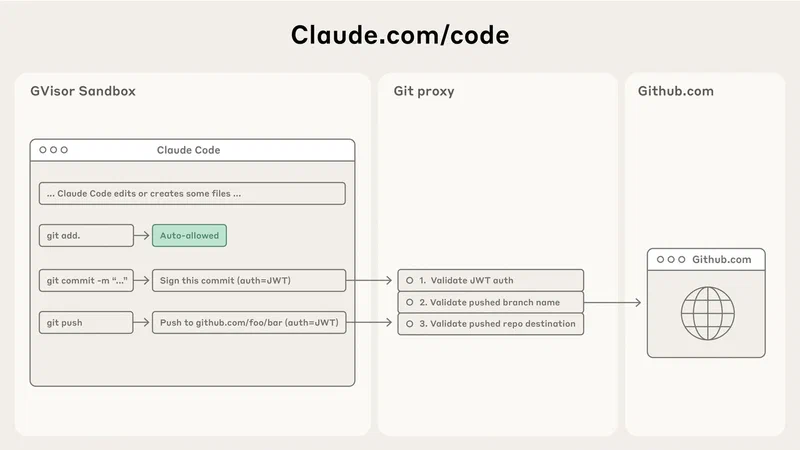

MCP-Native Tool Architecture

Every capability - bash execution, file read/write, grep, glob, LSP integration, even Computer Use - runs as a Model Context Protocol (MCP) tool call. The leaked tool definitions show bash as the most versatile tool, designed to handle file manipulation, git operations, and package management. Dedicated Grep and Glob tools provide structured search results rather than relying on shell commands. The architecture also revealed approximately 40 tools in a plugin system, with React + Ink terminal rendering using game-engine techniques.

Prompt Cache Optimization

A SYSTEM_PROMPT_DYNAMIC_BOUNDARY marker splits the system prompt into static and dynamic sections. Static sections are cached across turns to avoid redundant token costs. Sections marked DANGEROUS_uncachedSystemPromptSection signal to developers that changes will break the cache and increase costs - a pattern that forces cost-awareness into prompt engineering decisions.
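To make the idea concrete, the sketch below splits a prompt at the boundary marker and checks whether two turns share the same cacheable prefix. Only the marker name comes from the leaked source as described above; the `splitSystemPrompt` and `sharesCachedPrefix` helpers and the prompt layout are hypothetical.

```typescript
// Hypothetical helpers around the boundary marker named in the article.

const DYNAMIC_BOUNDARY = "SYSTEM_PROMPT_DYNAMIC_BOUNDARY";

interface SplitPrompt {
  staticPart: string;  // stable across turns, eligible for caching
  dynamicPart: string; // changes per turn, never cached
}

/** Splits a system prompt at the first occurrence of the marker. */
function splitSystemPrompt(
  prompt: string,
  marker: string = DYNAMIC_BOUNDARY,
): SplitPrompt {
  const at = prompt.indexOf(marker);
  if (at === -1) {
    // No marker: treat the whole prompt as static.
    return { staticPart: prompt, dynamicPart: "" };
  }
  return {
    staticPart: prompt.slice(0, at),
    dynamicPart: prompt.slice(at + marker.length),
  };
}

/** True when two prompts would reuse the same cached static prefix. */
function sharesCachedPrefix(promptA: string, promptB: string): boolean {
  return (
    splitSystemPrompt(promptA).staticPart ===
    splitSystemPrompt(promptB).staticPart
  );
}
```

Any edit that lands before the marker changes the static prefix and invalidates the cache for every subsequent turn, which is the cost the DANGEROUS_ naming is warning about.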

Subagent Execution Models

The source revealed three distinct subagent variants: fork (inherits byte-identical context from parent for cost-efficient cache hits), teammate (independent context with peer-to-peer messaging), and worktree (git worktree isolation for parallel file edits). Each model has different context inheritance, tool access, and communication patterns.
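One way to picture the difference between the variants is as a discriminated union where the variant determines the starting context. This is a reading of the description above, not the leaked types: the field names and the specific starting context for each variant are assumptions.

```typescript
// Hypothetical modelling of the three subagent variants.

type SubagentSpec =
  | { kind: "fork"; parentContext: readonly string[] }
  | { kind: "teammate"; inbox: string[] }
  | { kind: "worktree"; worktreePath: string };

/** Returns the context a subagent starts with, depending on its variant. */
function initialContext(spec: SubagentSpec): string[] {
  switch (spec.kind) {
    case "fork":
      // Identical copy of the parent's context, so the cached prefix is reused.
      return spec.parentContext.slice();
    case "teammate":
      // Independent context; coordination happens through messages instead.
      return [];
    case "worktree":
      // Assumed: starts fresh, scoped to its own git worktree directory.
      return [`Working directory: ${spec.worktreePath}`];
  }
}
```

Encoding the variant in the type makes the compiler flag any call site that forgets to handle a newly added execution model.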

Hidden Features: KAIROS, Anti-Distillation, and 44 Feature Flags

The source also contained unshipped features that amount to a product roadmap Anthropic never intended to publish. These are the discoveries that generated the most media coverage and community discussion.

KAIROS Autonomous Daemon Mode

Referenced over 150 times in the source, KAIROS (named after the Greek concept of “the right moment”) is an unreleased background agent mode hidden behind the PROACTIVE and KAIROS feature flags. It operates as a persistent daemon that receives periodic heartbeat prompts (“anything worth doing right now?”), can independently monitor GitHub webhooks, send push notifications, and take actions without user initiation. It maintains append-only daily decision logs and persists across sessions and system restarts. KAIROS has exclusive tools unavailable in standard Claude Code, including push notification delivery, file delivery, and pull request subscription management. No open-source agent framework has shipped anything comparable.

Anti-Distillation Defenses

When enabled, Claude Code sends anti_distillation: ['fake_tools'] in API requests, instructing the server to inject decoy tool definitions into the system prompt - targeting competitors attempting to distill Anthropic’s tool-use training data. A second mechanism called CONNECTOR_TEXT summarizes assistant responses with cryptographic signatures server-side, preventing competitors from capturing full reasoning chains via proxy interception. Security researchers estimated these defenses could be bypassed within about one hour using MITM proxies or environment variable manipulation.

Undercover Mode

A 90-line module (undercover.ts) implements one-way suppression of Anthropic-internal identifiers for contributions to public open-source repositories. The system prompt instructs: “You are operating UNDERCOVER… Your commit messages… MUST NOT contain ANY Anthropic-internal information. Do not blow your cover.” Internal codenames (Capybara, Numbat, Fennec, Tengu), Slack references, and product identifiers are stripped in external repository builds. This confirmed that Anthropic uses Claude Code for stealth contributions to public open-source projects - prompting sharp criticism from the open-source community.

44 Feature Flags

The flags compile to false in external builds but reveal fully implemented features: 24/7 background agent orchestration, multiple Claude worker coordination, cron scheduling, voice command mode, Playwright browser control, agent sleep/resume cycles, and more. Internal model codenames were also exposed: Capybara/Mythos (version 8, 1M context), Numbat (launch-window model), and Fennec (speculated to be Opus 4.6). A frustration-tracking system that uses regex patterns to detect user anger in real time was widely mocked as a case of one of the world’s most expensive companies using regex for sentiment analysis.

Claw-code and the Community Explosion

The leak triggered the largest spontaneous open-source mobilization in AI tooling history. Developer Sigrid Jin, previously profiled by the Wall Street Journal as one of the world’s most active Claude Code power users (over 25 billion tokens consumed in the prior year), began a clean-room Python/Rust rewrite within hours of the disclosure. Built overnight using oh-my-codex (an orchestration layer on top of OpenAI’s Codex), Claw-code reimplemented the core architectural patterns - tool system, query engine, multi-agent orchestration, memory management - without copying Anthropic’s proprietary source.

Claw-code hit 50,000 stars in approximately two hours, surpassed 100,000 stars within roughly one day, and shattered GitHub’s previous growth records.

Anthropic’s DMCA Response and Collateral Damage

Anthropic filed DMCA takedown requests that initially led to the removal of approximately 8,100 GitHub repositories. The operation caused significant collateral damage - many legitimate repositories unrelated to the leak were accidentally deleted because they belonged to the fork network associated with Anthropic’s public Claude Code repository. Developers reported receiving DMCA notices for forks containing only skills, examples, and documentation - nothing from the leaked source. Boris Cherny acknowledged the mistake, calling it accidental, and retracted the bulk of the notices, limiting enforcement to one repository and 96 forks that actually contained the accidentally released source code.

The irony was not lost on anyone. The open-source community pointed out that Anthropic was aggressively enforcing copyright on its own leaked code while its AI models were trained on vast quantities of publicly available code. The clean-room rewrite created a novel legal puzzle: if Anthropic claims an AI-generated transformative rewrite infringes copyright, it could undermine their own defense in training-data copyright cases. Gergely Orosz of The Pragmatic Engineer observed that Anthropic faces a genuine dilemma on this front.

Security Fallout: CVEs, Supply-Chain Attacks, and Weaponized Repos

The source leak also became a security event with real-world consequences that extended well beyond intellectual property concerns.

CVE-2026-21852

Discovered by Adversa AI days after the leak, this vulnerability exploited a design choice visible in the leaked source: when Claude Code processes a bash command composed of more than 50 subcommands, it skips the compute-intensive security analysis for subcommands beyond the 50th and simply prompts the user for approval. A malicious CLAUDE.md file could instruct the AI to generate a pipeline of more than 50 subcommands disguised as a legitimate build process, enabling exfiltration of SSH private keys, AWS credentials, GitHub tokens, and environment secrets before the trust prompt appeared.
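The class of flaw is easier to see in a toy model. The sketch below is not the leaked code: the splitter is naive (it ignores quoting), the `looksSensitive` rule is a two-pattern placeholder, and every name is hypothetical. It contrasts a capped analysis, where anything past the limit is never inspected, with an uncapped one that inspects every subcommand.

```typescript
// Toy model of a capped command analyzer. Names and rules are hypothetical.

const MAX_ANALYZED = 50;

type Verdict = "allow" | "ask" | "deny";

/** Naive split on &&, ||, ; and | (ignores quoting; illustration only). */
function splitSubcommands(command: string): string[] {
  return command
    .split(/&&|\|\||;|\|/)
    .map((part) => part.trim())
    .filter((part) => part.length > 0);
}

/** Placeholder rule: flags reads of common credential locations. */
function looksSensitive(subcommand: string): boolean {
  return /\.ssh\/|\.aws\//.test(subcommand);
}

/**
 * With analyzeAll = false, only the first MAX_ANALYZED subcommands are
 * inspected and the rest fall through to a generic approval prompt.
 * With analyzeAll = true, every subcommand is inspected.
 */
function reviewCommand(command: string, analyzeAll: boolean): Verdict {
  const subcommands = splitSubcommands(command);
  const analyzed = analyzeAll
    ? subcommands
    : subcommands.slice(0, MAX_ANALYZED);
  if (analyzed.some(looksSensitive)) return "deny";
  return subcommands.length > analyzed.length ? "ask" : "allow";
}
```

With 50 harmless subcommands followed by a credential read, the capped mode returns "ask" without ever inspecting the last subcommand, while the uncapped mode returns "deny". A fail-closed design would either analyze everything or reject commands that exceed the cap.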

The Axios Supply-Chain Attack

In a separate but coincidental incident on the same day, attackers attributed by Google Threat Intelligence Group to a North Korean threat actor (tracked as UNC1069 by Google and Sapphire Sleet by Microsoft) compromised the npm account of axios’s lead maintainer and published malicious versions 1.14.1 and 0.30.4. The poisoned packages installed a cross-platform Remote Access Trojan via a hidden dependency called plain-crypto-js. The attack was live for roughly three hours, from 00:21 to 03:29 UTC, before npm removed the packages. Anyone who installed or updated Claude Code via npm during that window may have pulled the compromised axios version alongside the source map leak.

Malware-Laden Fake Repos

Threat actors created GitHub repositories claiming to host the leaked Claude Code source but actually distributing infostealer malware. BleepingComputer reported multiple campaigns exploiting developer eagerness to examine the leaked code, making the search for “Claude Code source” itself a security risk.

Users who installed Claude Code on March 31 were advised to check lockfiles for axios 1.14.1 or 0.30.4, treat affected machines as fully compromised (requiring credential rotation and clean OS reinstallation), and audit any CLAUDE.md files in cloned repositories for prompt injection payloads.
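The lockfile check is easy to script. The sketch below assumes an npm lockfile in the v2/v3 layout, where a top-level `packages` map is keyed by install path, and takes the already-parsed JSON as input. The function name and the decision to flag plain-crypto-js at any version are assumptions; the axios version numbers and the dependency name come from the reports summarized above.

```typescript
// Hypothetical lockfile audit for the indicators named in the article.

const COMPROMISED_AXIOS: readonly string[] = ["1.14.1", "0.30.4"];
const MALICIOUS_DEPENDENCY = "plain-crypto-js";

interface ParsedLockfile {
  packages?: Record<string, { version?: string }>;
}

/** True when an install path refers to the given package at any nesting depth. */
function isPackagePath(installPath: string, packageName: string): boolean {
  const suffix = `node_modules/${packageName}`;
  return installPath === suffix || installPath.endsWith(`/${suffix}`);
}

/** Returns "path@version" for every suspicious entry in the lockfile. */
function findCompromisedEntries(lockfile: ParsedLockfile): string[] {
  const hits: string[] = [];
  const packages = lockfile.packages ?? {};
  for (const installPath of Object.keys(packages)) {
    const version = packages[installPath]?.version ?? "unknown";
    const badAxios =
      isPackagePath(installPath, "axios") && COMPROMISED_AXIOS.includes(version);
    const badDependency = isPackagePath(installPath, MALICIOUS_DEPENDENCY);
    if (badAxios || badDependency) hits.push(`${installPath}@${version}`);
  }
  return hits;
}
```

Feed it the result of `JSON.parse` on the contents of package-lock.json. An empty result only means these specific indicators are absent from the lockfile; it does not prove the machine is clean.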

What This Means for AI Developer Tools

The strategic damage likely exceeds the code damage. Feature flag names alone - KAIROS, the anti-distillation flags, internal model codenames - are product strategy decisions that competitors can now plan around. You can refactor code in a week. You cannot un-leak a roadmap.

For Anthropic specifically, the reputational harm compounds at a sensitive moment. The company reportedly generates $2.5 billion in annualized revenue (80% from enterprise) and is preparing for an IPO. Enterprise customers partly pay for the belief that their vendor’s technology is proprietary and protected. Two leaks in one week challenge the safety-first brand that is Anthropic’s core differentiator. Fortune reported the leak “rattled” IPO ambitions.

For the broader ecosystem, the leak accelerates a shift already underway. When orchestration architecture is no longer secret, differentiation moves entirely to model capabilities and user experience. The exposed permission system, sandboxing approach, and multi-agent coordination patterns may become de facto standards - they are now the only fully documented production-grade implementation in the industry. Open-source projects can now build on battle-tested architectural patterns rather than guessing. Several prominent developers on Hacker News and Reddit argued the CLI should have been open source from the start, noting that Google’s Gemini CLI and OpenAI’s Codex are already open.

The incident is also a case study in AI tool supply-chain risk. AI coding tools have deep dependency trees, run with broad filesystem and network access, and are increasingly trusted with credentials and secrets. A supply-chain compromise of any dependency becomes an attack on every developer using the tool. Security researchers at Zscaler ThreatLabz and SANS Institute published detailed analyses arguing the leak demonstrated the need for stricter sandboxing, dependency pinning, and build reproducibility in AI developer tools.

Nothing says “agentic future” quite like shipping the source by accident. Anthropic can refactor the code, but the trust deficit from two leaks in five days will take considerably longer to repair - especially with an IPO on the horizon and enterprise customers watching closely.