Moving from VirtualBox to Docker Desktop on Linux

If your Linux development workflow still depends on one or more VirtualBox VMs, you are not doing anything wrong. VirtualBox has been the default answer for isolated dev environments for years: predictable snapshots, clear network modes, and a full guest OS that behaves exactly like a separate machine.

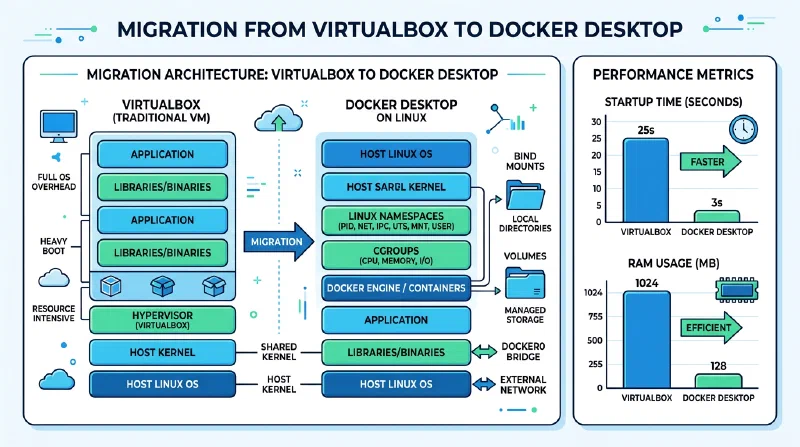

But in 2026, most application development tasks do not need full hardware emulation. They need fast startup, easy sharing, consistent dependencies, and low resource overhead. That is exactly where Docker Desktop and `docker compose` shine.

This guide walks through a practical migration path from VirtualBox-based Linux development to Docker Desktop on Linux. It also covers the trade-offs honestly: cases where you should keep VirtualBox, where Podman is a better fit, and when you should step up from Compose to Kubernetes.

Why this migration now

The shift is less about trends and more about developer feedback loops.

A typical VirtualBox workflow for a web stack looks like this:

- Boot a VM.

- Wait for guest OS startup.

- SSH or open a console.

- Start services.

- Sync project files via shared folders.

A containerized workflow usually looks like this:

- Run `docker compose up -d`.

- Start coding immediately on host files with bind mounts.

- Rebuild a single service when needed.

That difference compounds throughout the day. Faster startup and lower idle memory use mean less friction during context switches, branch testing, and pair development.

A second reason is reproducibility. A Compose file plus a few Dockerfiles are easier to review in Git than binary VM images. Teams can clone a repo and run the same stack with fewer machine-specific differences.

VirtualBox and Docker solve different problems

You should not treat Docker as a 1:1 replacement for every VM use case.

VirtualBox is a Type 2 hypervisor. It presents virtual hardware to each VM and runs a full guest kernel inside it. That gives strong OS-level separation and lets you run different operating systems easily.

Docker containers on Linux share the host Linux kernel and isolate processes using namespaces, cgroups, and seccomp. You get speed and density, but not a separate guest kernel.

The practical rule:

- If you used VirtualBox to run Linux services for app development, Docker is usually a better fit.

- If you used VirtualBox to run Windows, test kernel modules, or validate desktop GUI behavior on different OSes, Docker is not a full replacement.

Here is the quick architecture contrast:

| Dimension | VirtualBox VM | Docker Container |
|---|---|---|
| Kernel | Guest kernel per VM | Shared host kernel |
| Startup | OS boot (seconds to minutes) | Process start (sub-second to seconds) |
| Memory overhead | High (GBs per VM typical) | Low (tens to hundreds of MB per service) |
| OS variety | Linux/Windows/*BSD guests | Linux userland on Linux host |
| Isolation model | Hardware virtualization | Process-level isolation |
| Best for | Full OS testing, kernel work, desktop emulation | App stacks, APIs, workers, databases |

When you should keep VirtualBox

A good migration guide includes “do not migrate” scenarios.

Keep VirtualBox (or move to KVM/QEMU) when you need:

- Windows guest testing on a Linux host.

- Cross-desktop GUI validation with complete session behavior.

- Security research requiring guest kernel boundaries.

- Legacy workflows built around snapshots of entire OS state.

Docker can run GUI apps with X11/Wayland socket sharing, but that setup is fragile and usually not the right replacement for desktop QA.

You can also run a hybrid model:

- Keep one VM for OS-specific testing.

- Move service-heavy development stacks to Compose.

That gives most of the speed benefit without losing needed VM capabilities.

Prerequisites and migration audit

Before uninstalling anything, audit what your VMs actually do.

List VMs and capture purpose:

```shell
VBoxManage list vms
VBoxManage list runningvms
```

For each VM, document:

- Main purpose (database, API, full desktop test, CI sandbox).

- Exposed ports and network mode.

- Data location (database dirs, config dirs, uploads, artifacts).

- Services started on boot.

- Secrets currently stored in guest files.

A simple mapping template helps:

| VM Name | Current Role | Docker Equivalent | Keep VM? |
|---|---|---|---|
| dev-lamp | PHP + Nginx + MySQL | php-fpm, nginx, mysql services | No |
| redis-worker-box | Queue + worker + Redis | worker, redis services | No |
| win11-qa | Browser/desktop QA | None (needs Windows guest) | Yes |
| kernel-lab | Kernel module testing | None (needs custom kernel) | Yes |
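To seed a mapping table like this one, the VM inventory can be turned into empty rows automatically. The following is a minimal sketch, not a complete audit tool: `vms_to_audit_rows` is a hypothetical helper name, and it assumes the usual `VBoxManage list vms` output of one `"name" {uuid}` pair per line. The role, equivalent, and keep/replace columns are left as `TODO` for you to fill in by hand.

```shell
# Convert `VBoxManage list vms` output (lines like: "name" {uuid}) read from
# stdin into Markdown table rows, leaving the other three columns as TODO.
vms_to_audit_rows() {
  sed -n 's/^"\(.*\)" {[^}]*}$/| \1 | TODO | TODO | TODO |/p'
}

# Usage (requires VirtualBox on the host):
#   VBoxManage list vms | vms_to_audit_rows
```

Lines that do not match the expected pattern are skipped rather than guessed at, so an unexpected output format produces fewer rows instead of wrong ones.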

Extract data before migration. For VM guests reachable over SSH:

```shell
rsync -avz user@vm-ip:/var/lib/postgresql/data/ ./vm-export/postgres-data/
rsync -avz user@vm-ip:/etc/nginx/ ./vm-export/nginx-conf/
```

For non-networked guests, mount shared folders and copy app data out. Do not rely on “I can always boot the VM later” as your backup strategy.

Install Docker Desktop on Linux (2026)

On Linux, you now have two mainstream Docker paths:

- Docker Engine: native daemon + CLI, no desktop UI.

- Docker Desktop: GUI, Docker Scout, extension ecosystem, and a managed VM runtime layer.

If you want dashboard workflows and team consistency with macOS/Windows colleagues, Docker Desktop is often worth it.

Example Debian/Ubuntu .deb flow:

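```shell
# Download the latest package from Docker's official Linux Desktop page first.
# Docker's instructions also have you set up Docker's apt repository beforehand
# so that the package's dependencies resolve.
sudo apt update
sudo apt install ./docker-desktop-amd64.deb

# Start Docker Desktop for your user (or launch it from the application menu).
systemctl --user start docker-desktop

# Docker Desktop runs per user and does not need docker group membership.
# Add yourself to the group only if you also run Docker Engine on this host:
# sudo usermod -aG docker "$USER"

# Validate daemon and CLI path.
docker version
docker run --rm hello-world
```

If your distro supports repository-based install for Docker Desktop, prefer official repo instructions for easier updates.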

After installation, open Docker Desktop and tune resources.

Recommended starting point on a 32 GB dev machine:

- CPUs: 6

- Memory: 8-10 GB

- Swap: 1-2 GB

- Disk image: size based on project needs, usually 60-120 GB

If Docker Desktop starts consuming too much RAM during indexing/builds, lower memory first before changing CPU count.

Build your first Compose stack from a former VM

Let us migrate a common VirtualBox “single dev VM” pattern into Compose.

Assume your old VM hosted:

- Python API

- PostgreSQL

- Redis

- Nginx reverse proxy

Use this structure:

```text
project/
  docker-compose.yml
  .env
  app/
  nginx/
    default.conf
```

Example `docker-compose.yml`:

```yaml
services:
  api:
    build:
      context: ./app
      dockerfile: Dockerfile
    container_name: dev_api
    env_file:
      - .env
    depends_on:
      - db
      - redis
    volumes:
      - ./app:/app
    ports:
      - "8000:8000"
    command: ["uvicorn", "main:app", "--host", "0.0.0.0", "--port", "8000", "--reload"]

  db:
    image: postgres:17
    container_name: dev_db
    environment:
      POSTGRES_USER: app
      POSTGRES_PASSWORD: app
      POSTGRES_DB: appdb
    volumes:
      - pgdata:/var/lib/postgresql/data
    ports:
      - "5432:5432"

  redis:
    image: redis:8
    container_name: dev_redis
    ports:
      - "6379:6379"

  nginx:
    image: nginx:1.29
    container_name: dev_nginx
    depends_on:
      - api
    volumes:
      - ./nginx/default.conf:/etc/nginx/conf.d/default.conf:ro
    ports:
      - "8080:80"

volumes:
  pgdata:
```

Day-to-day commands:

```shell
docker compose up -d
docker compose ps
docker compose logs -f api
docker compose exec api bash
docker compose down

# Remove named volumes only when you intentionally want a clean DB state:
docker compose down -v
```

Move environment-specific settings from VM shell profiles into `.env` so teammates can run the same stack.

Example .env:

```shell
APP_ENV=development
DATABASE_URL=postgresql://app:app@db:5432/appdb
REDIS_URL=redis://redis:6379/0
SECRET_KEY=change-me
```

For editor integration, Dev Containers can replace your old “open terminal inside VM” habit.

A minimal .devcontainer/devcontainer.json:

```json
{
  "name": "api-dev",
  "dockerComposeFile": "../docker-compose.yml",
  "service": "api",
  "workspaceFolder": "/app",
  "customizations": {
    "vscode": {
      "extensions": [
        "ms-python.python",
        "ms-azuretools.vscode-docker"
      ]
    }
  }
}
```

Now the environment definition lives in source control, not in a VM image only you can reproduce.

Data migration patterns from VM disks

The main migration risk is state, not containers.

Use these patterns:

- Databases: export dump from VM, import into containerized DB.

- App uploads/files: copy into named volume or host bind mount.

- Service config: convert `/etc/...` files into mounted config files.

PostgreSQL example:

```shell
# From inside old VM
pg_dump -U app -d appdb > appdb.sql

# On host
docker compose cp appdb.sql db:/tmp/appdb.sql
docker compose exec db psql -U app -d appdb -f /tmp/appdb.sql
```

MySQL example:

```shell
# Old VM
mysqldump -u root -p appdb > appdb.sql

# Host (assumes a Compose service named mysql with MYSQL_ROOT_PASSWORD set)
docker compose cp appdb.sql mysql:/tmp/appdb.sql
docker compose exec mysql sh -lc 'mysql -u root -p"$MYSQL_ROOT_PASSWORD" appdb < /tmp/appdb.sql'
```

If you need to preserve ownership and permissions for shared data, use a consistent UID/GID strategy between the host user and the container user.
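One way to keep UIDs and GIDs consistent is to record the host user's numeric IDs once and let Compose interpolate them. The sketch below is one possible approach, not the only one: `print_host_ids` is a hypothetical helper, and `HOST_UID` and `HOST_GID` are made-up variable names that you would then reference yourself in the Compose file.

```shell
# Print KEY=value lines holding the current user's numeric UID and GID.
# HOST_UID and HOST_GID are illustrative names, not Compose built-ins.
print_host_ids() {
  printf 'HOST_UID=%s\nHOST_GID=%s\n' "$(id -u)" "$(id -g)"
}

# Usage: append the values to the project's .env file once per machine:
#   print_host_ids >> .env
```

With those lines in `.env`, a service can declare `user: "${HOST_UID}:${HOST_GID}"` so files written to bind mounts are owned by the host user instead of root.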

Podman as an alternative path

If your goal is containers but you do not want Docker Desktop or a long-running root daemon, evaluate Podman.

Podman highlights:

- Daemonless architecture.

- Strong rootless defaults.

- Docker-compatible CLI for many workflows.

- `podman compose` and `podman-compose` for Compose-style orchestration.

Quick comparison:

| Feature | Docker Desktop | Docker Engine | Podman |
|---|---|---|---|
| GUI dashboard | Yes | No | Optional (Cockpit/third-party) |
| Rootless support | Yes | Yes | Yes (first-class) |
| Daemonless | No | No | Yes |
| Compose support | Native `docker compose` | Native `docker compose` | `podman compose` / `podman-compose` |
| Team familiarity | Very high | High | Medium |

Minimal Podman migration test:

```shell
sudo apt install podman podman-compose
podman --version
podman compose up -d
```

If your team standardizes on Docker tooling, use Docker Desktop/Engine. If you prioritize rootless operation and minimal background services, Podman may be the better endpoint.

Beyond Compose: Kubernetes with kind or minikube

Compose is excellent for local multi-service apps. But if your production target is Kubernetes, running a local cluster may reduce deployment drift.

Two practical choices:

- `kind` (Kubernetes in Docker): lightweight, great for CI and API testing.

- `minikube`: broader runtime options and richer local cluster features.

Once `kind` is installed, create a cluster:

```shell
kind create cluster --name dev-k8s
kubectl cluster-info --context kind-dev-k8s
```

Translate Compose concepts to Kubernetes gradually:

- Compose service -> Deployment + Service

- Named volume -> PersistentVolumeClaim

- `.env` variables -> ConfigMap/Secret

- `depends_on` -> readiness probes and proper startup handling
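The last mapping matters in Compose too: the short `depends_on` form only orders container start, it does not wait for PostgreSQL to accept connections. Until readiness probes are in place, a small retry helper run from the host can approximate the same idea. This is a minimal sketch under stated assumptions: `wait_for` is a hypothetical helper name, and the usage line assumes the `db` service from the earlier Compose file plus the `pg_isready` tool that ships in the official `postgres` image.

```shell
# Retry a command until it succeeds or the attempt budget runs out.
# $1: maximum attempts, $2: delay between attempts in seconds,
# remaining arguments: the command to run.
wait_for() {
  attempts=$1
  delay=$2
  shift 2
  i=1
  while [ "$i" -le "$attempts" ]; do
    if "$@" >/dev/null 2>&1; then
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}

# Usage (assumes the db service defined earlier):
#   wait_for 30 1 docker compose exec -T db pg_isready -U app -d appdb
```

The helper returns non-zero when the budget is exhausted, so scripts can stop early instead of running migrations against a database that never came up.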

Use Compose for daily iteration and Kubernetes for integration testing, or move fully when your team is ready. Do not force full Kubernetes early if Compose still solves your real bottleneck.

Security hardening for daily dev containers

Container migration is a good moment to harden defaults.

At minimum, adopt these controls:

- Run app containers as non-root.

- Use read-only root filesystem where possible.

- Drop Linux capabilities you do not need.

- Avoid mounting the Docker socket into app containers.

- Scan images regularly and pin image tags.

Example hardened service snippet:

```yaml
services:
  api:
    build: ./app
    user: "1000:1000"
    read_only: true
    tmpfs:
      - /tmp
    security_opt:
      - no-new-privileges:true
    cap_drop:
      - ALL
    cap_add:
      - NET_BIND_SERVICE
    volumes:
      - ./app:/app:rw
    ports:
      - "8000:8000"
```

Additional practical tips:

- Replace broad bind mounts like `.:/app` with narrow path mounts when possible.

- Put secrets in dedicated secret stores or at least environment injection at runtime, not committed `.env` files.

- Keep base images small (`python:3.13-slim`, `debian:bookworm-slim`, `alpine` only if your stack supports musl constraints).
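As a quick guard for the secrets tip, you can list the variable names in an env file that look sensitive before committing it. This is a rough sketch, not a real secret scanner: `list_secret_like_keys` is a hypothetical helper, and the name patterns it matches are an illustrative assumption that will miss secrets hidden in values such as connection URLs.

```shell
# List variable names in an env file ($1) whose names suggest a secret.
# The patterns below are illustrative and deliberately simple.
list_secret_like_keys() {
  grep -E '^[A-Za-z_][A-Za-z0-9_]*=' "$1" \
    | cut -d= -f1 \
    | grep -E -i 'SECRET|PASSWORD|TOKEN|KEY' || true
}

# Usage:
#   list_secret_like_keys .env
```

Run against the example `.env` shown earlier, this flags `SECRET_KEY` but not `DATABASE_URL`, even though that URL embeds a password, which is why a name-based check is only a first pass.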

Security is not only a production concern. A compromised developer container with broad host mounts can leak source, tokens, and SSH keys.

Performance comparison: VM vs Docker Desktop vs Podman

Real numbers vary by hardware, but representative measurements on a Linux laptop (Ryzen 7, 32 GB RAM, NVMe) for an equivalent API+Postgres+Redis stack look like this:

| Metric | VirtualBox VM Stack | Docker Desktop Stack | Podman Rootless Stack |
|---|---|---|---|
| Cold start to usable app | 55-90s | 6-12s | 5-11s |
| Idle memory footprint | 2.4-3.8 GB | 450-900 MB | 350-800 MB |
| Rebuild app image | 25-70s | 10-35s | 10-33s |
| Restart single API service | 10-20s | 1-4s | 1-4s |
| Disk overhead growth over week | High (guest OS churn) | Medium (layers + volumes) | Medium (layers + volumes) |

What these numbers mean in practice:

- VMs pay a fixed “full OS tax” before your app runs.

- Containers reduce boot overhead and make service-level restarts cheap.

- Docker Desktop adds convenience features but may use slightly more memory than a pure rootless Podman path, depending on your runtime configuration.

If you care about tight loops, the biggest win is not raw benchmark speed. It is how often you can restart, rebuild, and validate changes in under 10 seconds.
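To check that 10-second budget on your own machine rather than trusting representative numbers, you can time the commands you run most often. The helper below is a minimal sketch with whole-second resolution: `elapsed_seconds` is a hypothetical name, and the usage line assumes a Compose version that supports the `--wait` flag.

```shell
# Print how many whole seconds a command takes to finish.
# The command's own output is discarded so only the duration is printed.
elapsed_seconds() {
  start=$(date +%s)
  "$@" >/dev/null 2>&1
  end=$(date +%s)
  echo $((end - start))
}

# Usage (cold start of the stack, waiting until services are running):
#   elapsed_seconds docker compose up -d --wait
```

Take several runs before and after migrating, since the first run after a reboot or image pull is usually slower than the steady state.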

Troubleshooting common migration issues

Most migration failures are predictable.

Port collision:

- Symptom: `Bind for 0.0.0.0:5432 failed`.

- Fix: change host port mapping or stop local service using that port.
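Before changing the mapping, it helps to confirm that something on the host is really listening on the port. The sketch below assumes the standard column layout of `ss -ltn` (local address and port in the fourth column); `port_in_listing` is a hypothetical helper that reads that output from stdin, so it can be checked against saved output as well as a live system.

```shell
# Succeed if a TCP listener on port $1 appears in `ss -ltn` output on stdin.
# The header row is skipped; the port is the text after the last colon.
port_in_listing() {
  awk -v port="$1" '
    NR > 1 { n = split($4, parts, ":"); if (parts[n] == port) found = 1 }
    END { exit !found }
  '
}

# Usage:
#   ss -ltn | port_in_listing 5432 && echo "port 5432 is already in use"
```

Taking the text after the last colon handles both IPv4 (`127.0.0.1:5432`) and IPv6 (`[::]:5432`) listeners.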

Permissions mismatch on bind mounts:

- Symptom: app cannot write to mounted directory.

- Fix: align UID/GID, or set container user explicitly.

DNS/service discovery confusion:

- Symptom: app cannot reach DB even though both run.

- Fix: use Compose service name (`db`) as hostname, not `localhost`.

Volume surprises:

- Symptom: old schema/data persists after code reset.

- Fix: inspect named volumes and remove intentionally with `docker compose down -v`.

Network assumptions from VirtualBox:

- Symptom: tools expecting host-only adapter semantics break.

- Fix: model required topology in Compose networks and explicit port mappings.

Useful diagnostics:

```shell
docker compose ps
docker compose logs -f
docker inspect <container>
docker network ls
docker volume ls
```

If Docker Desktop itself fails to start cleanly after upgrade, restart its backend service and verify no conflicting low-level runtime configuration was left from older Docker Engine installs.

Migration checklist and next steps

Use this checklist to complete migration safely:

- Inventory VirtualBox VMs and classify replace/keep.

- Export all critical state (DB dumps, configs, uploads).

- Install Docker Desktop and verify `hello-world`.

- Create initial `docker-compose.yml` for one stack.

- Move config to `.env` and document it; keep real secrets out of committed files.

- Add Dev Container config for editor reproducibility.

- Apply hardening baseline (non-root, read-only, dropped capabilities).

- Benchmark startup and memory before/after.

- Decide whether Podman better fits your security/ops model.

- Add local Kubernetes (`kind` or `minikube`) only when needed.

The best migration is incremental. Start with one VM-backed project, validate developer ergonomics, and then standardize templates across repos.

You do not need to “containerize everything” in one weekend. You only need to remove the parts of your workflow where full VMs are adding cost without adding value.