How to Train a Custom Text-to-Speech Voice with Coqui TTS

You can clone your own voice or build a completely custom synthetic voice using Coqui TTS with as little as 5 minutes of recorded audio, running entirely on consumer hardware. The workflow is straightforward: record clean audio samples, preprocess them into a training dataset, fine-tune an XTTS v2 or VITS model using the Coqui TTS trainer, and export the result for real-time inference. On a modern GPU like the RTX 5070 with 12 GB VRAM, fine-tuning takes 2-4 hours and produces natural-sounding speech that captures the target voice’s timbre, pacing, and accent.

What follows covers the full process - picking a model architecture, recording and preparing audio, training, and deploying your custom voice.

Coqui TTS in 2026 - Current State and Alternatives

Coqui the company shut down in late 2023, but the open-source project survived through community effort. The original coqui-ai/TTS repository is archived on GitHub but still installable. The most actively maintained fork comes from Idiap Research Institute, which keeps the project compatible with Python 3.12+ and PyTorch 2.5+.

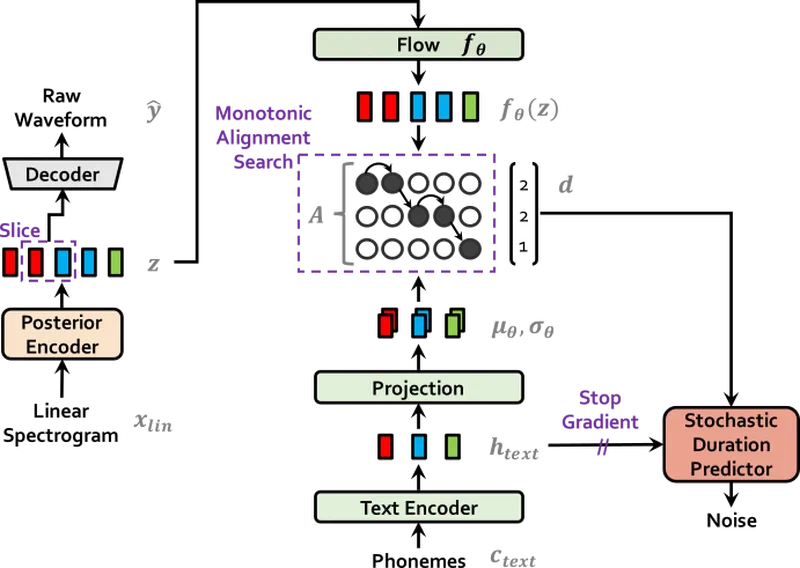

The flagship model in the Coqui ecosystem is XTTS v2 - a multi-speaker, multi-lingual TTS model that supports voice cloning from a 6-second reference clip without any fine-tuning (zero-shot mode). Fine-tuning it with your own dataset produces substantially better results. The earlier VITS (Variational Inference with adversarial learning for Text-to-Speech) model is a single-speaker architecture that requires more training data (30+ minutes) but produces very high quality output for a dedicated voice.

Other options in the space include Bark from Suno, StyleTTS2, Fish Speech, and OpenVoice. Coqui TTS with XTTS v2 remains the best balance of voice cloning quality, training speed, and ease of use for consumer hardware.

Installation is simple:

pip install TTS

Or from the community fork:

pip install git+https://github.com/idiap/coqui-ai-TTS.git

Verify the installation by listing available pre-trained models:

tts --list_models

On the licensing side: Coqui TTS itself is MPL-2.0 licensed, but the XTTS v2 model weights carry a non-commercial Coqui Public Model License. If you plan commercial use, check the licensing carefully. VITS models that you train from scratch on your own data are yours to use however you want.

Recording and Preparing Your Voice Dataset

The quality of your cloned voice depends almost entirely on the quality of your training audio. Garbage in, garbage out applies here more than almost anywhere else in machine learning.

Recording Equipment and Environment

A USB condenser microphone like the Blue Yeti or Audio-Technica AT2020USB+ in a quiet room is sufficient. Avoid laptop microphones and Bluetooth headsets - they introduce too much noise and compression. If you do not have a treated recording space, record inside a closet or hang blankets around your desk. The goal is to minimize reverb and background noise.

Record in 44.1 kHz or 48 kHz, 16-bit WAV, mono. The training pipeline resamples to 22.05 kHz internally, but starting with higher quality preserves detail that matters during downsampling.
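Before going further, it can help to confirm that each recording really has the format described above. The following is a minimal sketch using only Python's standard library; the function names are illustrative and not part of Coqui TTS, and it assumes uncompressed PCM WAV files.

```python
import wave


def describe_wav(path):
    """Return (sample_rate_hz, bit_depth, channels) for a PCM WAV file."""
    with wave.open(path, "rb") as wf:
        return wf.getframerate(), wf.getsampwidth() * 8, wf.getnchannels()


def meets_recording_spec(path):
    """True if the file is 44.1 kHz or 48 kHz, 16-bit, mono."""
    rate, bits, channels = describe_wav(path)
    return rate in (44100, 48000) and bits == 16 and channels == 1
```

Running meets_recording_spec over every file in a session folder catches a stereo or 24-bit export before it reaches preprocessing.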

Session Guidelines

Read prepared scripts that cover diverse phonemes and sentence patterns. Speak naturally at your normal pace and volume. Avoid whispering or shouting. Take breaks every 15-20 minutes to maintain consistent energy - vocal fatigue changes your voice characteristics in ways that confuse the training process.

For scripts, use a phonetically balanced corpus like the Harvard Sentences or CMU Arctic prompts. If you need the voice to handle technical terms specific to your use case, supplement with domain-specific vocabulary. Aim for 100-200 sentences, which gives you roughly 5-15 minutes of audio.

Preprocessing Pipeline

After recording, split your audio into individual utterances - one sentence per WAV file. You can use Audacity’s “Silence Finder” feature or do it programmatically with Python:

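from pydub import AudioSegment
from pydub.silence import split_on_silence

# Load the full recording session
audio = AudioSegment.from_wav("recording.wav")

# Cut wherever at least 500 ms of audio stays below -40 dBFS
chunks = split_on_silence(audio, min_silence_len=500, silence_thresh=-40)

# Number files from 001 so they line up with the metadata.csv example
for i, chunk in enumerate(chunks, start=1):
    chunk.export(f"audio_{i:03d}.wav", format="wav")

Normalize loudness across all files. The command below targets an integrated loudness of -23 LUFS with a true-peak ceiling of -3 dBTP:

ffmpeg -i input.wav -filter:a "loudnorm=I=-23:TP=-3:LRA=7" output.wav

Remove leading and trailing silence from each file, and listen through them to verify there is no clipping or background noise.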
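Listening remains the final check, but a quick peak measurement can flag likely clipping before you spend time on it. Here is a minimal sketch using only the standard library, assuming 16-bit PCM WAV files. The function names and the -0.1 dBFS threshold are illustrative choices, not part of Coqui TTS.

```python
import array
import math
import sys
import wave


def peak_dbfs(path):
    """Peak level of a 16-bit PCM WAV file in dBFS (0.0 is full scale)."""
    with wave.open(path, "rb") as wf:
        if wf.getsampwidth() != 2:
            raise ValueError("expected 16-bit PCM audio")
        samples = array.array("h", wf.readframes(wf.getnframes()))
    if sys.byteorder == "big":
        samples.byteswap()  # WAV data is little-endian
    peak = max((abs(s) for s in samples), default=0)
    if peak == 0:
        return float("-inf")  # digital silence
    return 20 * math.log10(peak / 32768)


def is_probably_clipped(path, threshold_dbfs=-0.1):
    """Flag files whose peak sits at or above the (assumed) threshold."""
    return peak_dbfs(path) >= threshold_dbfs
```

A file that trips this check is worth re-recording or re-normalizing rather than patching, since clipped peaks cannot be recovered afterwards.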

Dataset Structure

Create a directory containing your WAV files and a metadata.csv file. The format is pipe-delimited with no header - one line per utterance:

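audio_001.wav|The quick brown fox jumps over the lazy dog.
audio_002.wav|She sells seashells by the seashore.
audio_003.wav|How much wood would a woodchuck chuck.

This is the general structure Coqui TTS expects for both XTTS and VITS training. The exact columns depend on the dataset formatter you select: the built-in ljspeech formatter, for example, expects file IDs without the .wav extension, the audio under a wavs/ subdirectory, and a third column holding normalized text.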
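Mismatched or malformed metadata lines are easy to introduce by hand, so a quick check before training can save a wasted run. The following is a minimal sketch that assumes the two-column, pipe-delimited layout shown above, with the WAV files stored next to metadata.csv. The validate_metadata helper is hypothetical, not a Coqui TTS function.

```python
import os


def validate_metadata(dataset_dir, meta_name="metadata.csv"):
    """Return a list of human-readable problems found in the metadata file."""
    problems = []
    meta_path = os.path.join(dataset_dir, meta_name)
    with open(meta_path, encoding="utf-8") as f:
        for lineno, line in enumerate(f, start=1):
            line = line.rstrip("\r\n")
            if not line.strip():
                continue  # ignore blank lines
            parts = line.split("|")
            if len(parts) != 2:
                problems.append(
                    f"line {lineno}: expected 2 fields, got {len(parts)}"
                )
                continue
            filename, text = parts
            if not text.strip():
                problems.append(f"line {lineno}: empty transcription")
            if not os.path.isfile(os.path.join(dataset_dir, filename)):
                problems.append(f"line {lineno}: missing audio file {filename}")
    return problems
```

An empty list means every line has a transcription and points at a file that exists. It does not check that the text matches what was actually spoken, which still needs a listen.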

Fine-Tuning XTTS v2 for Voice Cloning

XTTS v2 can clone a voice from a 6-second clip in zero-shot mode, but fine-tuning with your full dataset produces dramatically better results. Zero-shot cloning captures the general timbre but often misses the target voice’s natural rhythm and intonation patterns.

Zero-Shot Baseline

Start by testing the zero-shot capability to establish a baseline:

tts --model_name tts_models/multilingual/multi-dataset/xtts_v2 \
    --speaker_wav reference.wav \
    --language_idx en \
    --text "Hello, this is a test of voice cloning." \
    --out_path test_zero_shot.wav

Listen to the output. It will sound reasonable but likely lacks the subtle speech patterns that make a voice recognizable to someone who knows the speaker.

Training Setup

Create a training script train_xtts.py that loads the XTTS configuration, points to your dataset, and sets training parameters:

from TTS.tts.configs.xtts_config import XttsConfig
from TTS.tts.models.xtts import Xtts
from trainer import Trainer, TrainerArgs

config = XttsConfig()
config.output_path = "./output_xtts/"
config.datasets = [{
    "path": "./my_dataset/",
    "meta_file_train": "metadata.csv",
}]
config.batch_size = 4          # fits in 12 GB VRAM
config.epochs = 50             # usually converges in 20-30
config.lr = 5e-6               # low LR avoids catastrophic forgetting
config.max_audio_len = 255995  # ~11.6 seconds max per utterance

Treat this as a configuration sketch rather than a complete script: it sets the key parameters but does not build the model or start the trainer. In the upstream repository, XTTS fine-tuning runs through a dedicated GPT trainer (see the XTTS recipe in the project's recipes directory), which also needs paths to the pretrained checkpoint files.

The low learning rate is important. XTTS v2 is a large pre-trained model with broad speech capabilities. Training with too high a learning rate causes catastrophic forgetting - the model learns your voice but loses its ability to produce coherent speech.
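The max_audio_len value in the configuration is expressed in samples, not seconds, which makes it easy to misjudge. A small worked conversion, assuming the 22.05 kHz training sample rate mentioned earlier; the helper names are illustrative.

```python
SAMPLE_RATE = 22050  # Hz; assumed to match the training sample rate


def samples_to_seconds(num_samples, sample_rate=SAMPLE_RATE):
    """Convert a length in samples to seconds."""
    return num_samples / sample_rate


def seconds_to_samples(seconds, sample_rate=SAMPLE_RATE):
    """Convert a length in seconds to a whole number of samples."""
    return int(seconds * sample_rate)


print(round(samples_to_seconds(255995), 2))  # 11.61
print(seconds_to_samples(10))                # 220500
```

Utterances longer than the limit need to be split or dropped during preprocessing, so it is worth knowing where the cutoff falls in seconds.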

Training Data Requirements

XTTS v2 fine-tuning works with surprisingly little data:

| Audio Length | Utterances | Result |
|---|---|---|
| 2 minutes | 20-30 | Noticeable voice similarity, some inconsistencies |
| 5-10 minutes | 50-100 | Natural-sounding results for most use cases |
| 15+ minutes | 100+ | Diminishing returns; quality plateaus |

Running Training

Launch training and monitor with TensorBoard:

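python train_xtts.py
tensorboard --logdir output_xtts/

Watch the loss curves in TensorBoard. XTTS fine-tuning updates the GPT component, so expect cross-entropy losses for text and mel tokens (logged upstream as loss_text_ce and loss_mel_ce); generator and discriminator losses (loss_gen, loss_disc) belong to GAN-based models such as VITS. Training takes approximately 2 hours for 50 epochs on an RTX 5070.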

Evaluate every 10 epochs by generating test sentences and listening to them. Do not always pick the checkpoint with the lowest loss number - perceptually better models sometimes have slightly higher loss values. Save the best 3 checkpoints for comparison.

Troubleshooting

Common issues and their fixes:

| Symptom | Fix |
|---|---|
| Robotic-sounding output | Increase training data or reduce learning rate |
| Voice sounds like someone else | Verify your metadata.csv entries match the correct audio files |
| Audio artifacts or crackling | Check for clipping in the training audio; re-normalize problematic files |
| Training loss not decreasing | Learning rate may be too low; try 1e-5 |

Training a VITS Model from Scratch

If you need the highest possible quality for a single voice and have 30+ minutes of clean audio, training a VITS model from scratch gives you a dedicated single-speaker model. VITS can outperform fine-tuned XTTS for that specific voice because the entire model capacity is devoted to one speaker.

When to Choose VITS Over XTTS

Pick VITS when you have one target voice with 30+ minutes of audio, you need the lowest possible inference latency (VITS is faster), or you want a model without the XTTS license restrictions. VITS models trained on your own data from scratch carry no licensing baggage.

Data Requirements

VITS needs more data than XTTS fine-tuning:

| Data Amount | Quality |
|---|---|
| 30 minutes | Minimum viable, decent quality |
| 1-2 hours | Good quality, natural prosody |
| 3+ hours | Diminishing returns |

Each utterance should be 2-15 seconds long with a matching transcription in metadata.csv.
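To see whether a dataset meets these numbers, you can total the audio and list any utterances outside the 2-15 second range. The following is a minimal sketch using only the standard library, assuming PCM WAV files in a single directory. The function names are illustrative.

```python
import os
import wave


def wav_duration_seconds(path):
    """Duration of a PCM WAV file in seconds."""
    with wave.open(path, "rb") as wf:
        return wf.getnframes() / wf.getframerate()


def summarize_dataset(dataset_dir, min_s=2.0, max_s=15.0):
    """Return (total_minutes, out_of_range) for all WAV files in a directory.

    out_of_range is a list of (filename, seconds) pairs for utterances
    shorter than min_s or longer than max_s.
    """
    total = 0.0
    out_of_range = []
    for name in sorted(os.listdir(dataset_dir)):
        if not name.lower().endswith(".wav"):
            continue
        duration = wav_duration_seconds(os.path.join(dataset_dir, name))
        total += duration
        if not (min_s <= duration <= max_s):
            out_of_range.append((name, round(duration, 2)))
    return total / 60, out_of_range
```

If the total falls short of 30 minutes, recording more material is likely a better use of time than tuning hyperparameters.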

Configuration and Training

Set up the VITS configuration with eSpeak-NG as the phonemizer:

apt install espeak-ng

from TTS.tts.configs.vits_config import VitsConfig

config = VitsConfig()
config.audio.sample_rate = 22050
config.use_phonemes = True
config.phonemizer = "espeak"                   # backed by eSpeak-NG
config.phoneme_language = "en-us"              # assumes an English dataset
config.model_args.hidden_channels = 192
config.model_args.num_layers_text_encoder = 6
config.use_noise_augment = True                # improves robustness
config.save_json("config.json")                # read by the training command below

Launch training:

python TTS/bin/train_tts.py --config_path config.json

VITS trains both a generator and discriminator simultaneously. Expect 100-200 epochs, taking 4-8 hours on a modern GPU. Enable use_noise_augment=True to add slight noise variations during training, which improves robustness. Avoid pitch augmentation - it degrades voice identity.

Evaluation

Generate the same 10 test sentences at every checkpoint for consistent comparison. For automated quality scoring, use UTMOS:

pip install speechmos

This gives you a Mean Opinion Score estimate without needing human listeners for every checkpoint evaluation.

The trained model directory contains config.json and model.pth. Generate speech with:

tts --model_path model.pth --config_path config.json \
    --text "Your text here" --out_path output.wav

Inference, Deployment, and Real-Time Integration

With a trained model in hand, the next step is serving it efficiently. The right approach depends on your use case - batch audio generation, real-time narration, or integration with other applications.

CLI and Python API

The simplest method for batch generation is the command line:

tts --model_path ./output/best_model.pth \
    --config_path ./output/config.json \
    --text "Your text here" \
    --out_path speech.wav

For programmatic access, use the Python API:

from TTS.api import TTS

tts = TTS(model_path="./output/best_model.pth",
          config_path="./output/config.json", gpu=True)
tts.tts_to_file(text="Hello world", file_path="output.wav")

Expect 200-300 ms per sentence on GPU.

Real-Time Streaming

For XTTS v2, the streaming inference mode yields audio chunks as they are generated. Pipe these to sounddevice or pyaudio for real-time playback with roughly 500ms latency to first audio:

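import sounddevice as sd

# tts_with_xtts_stream is shorthand for a streaming generator; in the upstream
# code base the equivalent is the XTTS model's inference_stream() method
for chunk in tts.tts_with_xtts_stream(text, speaker_wav):
    sd.play(chunk, samplerate=24000)  # XTTS v2 generates 24 kHz audio
    sd.wait()                         # let this chunk finish before the next starts

HTTP API Deployment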

For serving the model over a network, wrap it in FastAPI with a /synthesize POST endpoint that accepts text and returns WAV audio. Use StreamingResponse for chunked audio delivery. Dockerize the whole setup with an NVIDIA CUDA base image so the model runs on GPU in production.
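Whatever web framework you use, the endpoint ultimately has to turn the model's output samples into WAV bytes for the response body. Here is a minimal standard-library sketch of that step, assuming the model returns mono float samples in the range -1.0 to 1.0. The function name and the 24000 Hz default are assumptions, so pass the rate your model actually produces.

```python
import io
import struct
import wave


def float_samples_to_wav_bytes(samples, sample_rate=24000):
    """Encode mono float samples in [-1.0, 1.0] as 16-bit PCM WAV bytes."""
    pcm = bytearray()
    for s in samples:
        s = max(-1.0, min(1.0, float(s)))  # clamp to avoid integer overflow
        pcm += struct.pack("<h", int(s * 32767))
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wf:
        wf.setnchannels(1)
        wf.setsampwidth(2)  # 2 bytes per sample = 16-bit
        wf.setframerate(sample_rate)
        wf.writeframes(bytes(pcm))
    return buf.getvalue()
```

In a FastAPI handler, these bytes would be returned with the audio/wav media type. For chunked delivery the same encoding applies per chunk, though the WAV header then needs separate handling.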

Batch Processing

For generating audiobooks or podcast content, split text into paragraphs and generate each in parallel:

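from concurrent.futures import ThreadPoolExecutor
from pydub import AudioSegment

# generate_paragraph(paragraph) is your own function: it synthesizes one
# paragraph and returns it as an AudioSegment. text holds the full script.
paragraphs = [p for p in text.split("\n\n") if p.strip()]

with ThreadPoolExecutor(max_workers=2) as executor:
    # map() preserves input order, so paragraphs stay in sequence
    audio_files = list(executor.map(generate_paragraph, paragraphs))

combined = sum(audio_files, AudioSegment.empty())
combined.export("full_audio.wav", format="wav")

Limit workers to 2 - GPU memory constrains parallelism.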

Pacing and Control

Coqui TTS does not support full SSML, but you can control output through punctuation. Commas create short pauses, periods create longer ones. Insert explicit silence segments between sections using pydub for longer breaks. For emphasis, slight text modifications (adding commas before key words, using shorter sentences) can shape the delivery.

CPU Fallback

For deployment on machines without GPUs, performance varies by model:

| Model | CPU Speed |
|---|---|
| VITS | ~1x real-time (10s audio = 10s generation) |
| XTTS v2 | ~0.5x real-time (10s audio = 20s generation) |

VITS is the clear winner for CPU-only deployments where latency matters.
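The speed figures in the table are audio duration divided by generation time, and you can measure the same ratio on your own hardware. The following is a minimal sketch; the synthesize argument stands for any callable that takes text and returns a sequence of audio samples, and both function names are illustrative.

```python
import time


def realtime_speed(audio_seconds, generation_seconds):
    """Speed as a multiple of real time (1.0 = generated as fast as it plays)."""
    if generation_seconds <= 0:
        raise ValueError("generation time must be positive")
    return audio_seconds / generation_seconds


def measure_speed(synthesize, text, sample_rate):
    """Time one synthesis call and return its real-time speed."""
    start = time.perf_counter()
    samples = synthesize(text)
    elapsed = time.perf_counter() - start
    return realtime_speed(len(samples) / sample_rate, elapsed)


print(realtime_speed(10, 10))  # 1.0, the VITS row
print(realtime_speed(10, 20))  # 0.5, the XTTS v2 row
```

Measure with several sentences of typical length, since the first call often includes one-time model warm-up cost.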

Wrapping Up

The Coqui TTS ecosystem gives you a practical path to custom voice synthesis without cloud dependencies or commercial API costs. XTTS v2 is the fastest route - fine-tune with 5 minutes of audio and get usable results in a couple of hours. VITS requires more data and training time but produces the highest quality for a single dedicated voice. Either way, you end up with a model that runs locally, generates speech in real time on consumer hardware, and sounds like the voice you trained it on.