Why Small Language Models (SLMs) are Better for Edge Devices

Small Language Models (SLMs), models of roughly 4B parameters or fewer built to run on local hardware, now handle most of the edge AI work that used to need the cloud. Phi-4, Gemma 3, and Llama 3.2-1B run offline on Raspberry Pi boards, phones, and industrial PLCs. The economics, latency, and privacy arguments all point the same way: edge first.

What Counts as a Small Language Model

In 2023, “small” meant under 13B parameters. Today, three tiers matter for edge work.

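The smallest tier sits around 1B parameters. Llama 3.2-1B (1.2B parameters, roughly 0.7GB at INT4) targets low-power boards with 1GB of RAM or more; microcontroller-class chips sit below even this tier. These models handle keyword spotting, intent classification, and simple field extraction. They are not general reasoners.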

The 1 to 4B tier is the sweet spot. Phi-4 (3.8B) and Gemma 3 (4B) run on devices with 4 to 8GB of RAM. They handle chat, offline translation, summaries, and complex smart home commands. Phi-4 today beats 2023's GPT-4 on many reasoning benchmarks, not because of a new architecture but because of cleaner training data: Microsoft trained it on textbook-quality synthetic text instead of raw web scrape. At INT4 it weighs about 2.2 to 2.4GB, depending on the quantization scheme, and fits comfortably on a Pi 5 with 8GB of RAM.
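The size figures follow from simple arithmetic: parameters times bits per weight, divided by 8, gives bytes. A minimal sketch, assuming a 3.8B-parameter model and counting weights only. The ~4.85 bits-per-weight value for Q4_K_M is an approximation, since K-quants store per-block scales and keep some tensors at higher precision, which is why real INT4 files come out larger than the pure 4-bit figure.

```python
def weight_memory_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (1e9 bytes).

    Ignores the KV cache, activations, and runtime overhead, all of
    which add to the real RAM footprint.
    """
    return params * bits_per_weight / 8 / 1e9


# 3.8B parameters; the Q4_K_M bit width is an assumed average, not exact.
for label, bits in [("FP16", 16), ("INT8", 8), ("INT4", 4), ("~Q4_K_M", 4.85)]:
    print(f"{label:>8}: {weight_memory_gb(3.8e9, bits):.2f} GB")
```

Pure 4-bit storage gives about 1.9GB; the assumed Q4_K_M average gives about 2.3GB, inside the range quoted above.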

The 4 to 14B tier needs a laptop NPU or a small edge server. Below that ceiling, cost settles the case: one million cloud inference calls run $8 to $20, while the same load on-device costs cents in electricity.
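The on-device side of that comparison is energy arithmetic: watts times seconds gives joules, and 3.6 million joules make one kWh. A minimal sketch under stated assumptions: the 2 seconds per call, the 7W board draw, and the $0.15/kWh electricity price are all hypothetical inputs, and hardware purchase cost is deliberately left out.

```python
def on_device_energy_cost(calls: int, seconds_per_call: float,
                          watts: float, usd_per_kwh: float) -> float:
    """Electricity cost in USD for running `calls` inferences locally.

    Energy (J) = power (W) x time (s); 1 kWh = 3.6e6 J.
    Hardware purchase and amortization are not included.
    """
    joules = calls * seconds_per_call * watts
    kwh = joules / 3.6e6
    return kwh * usd_per_kwh


# Hypothetical workload: 1M calls, 2 s each, on a board drawing 7 W,
# with electricity at an assumed $0.15 per kWh.
cost = on_device_energy_cost(1_000_000, 2.0, 7.0, 0.15)
print(f"${cost:.2f}")
```

Under these assumptions the million calls use about 3.9 kWh, well under a dollar of electricity against the $8 to $20 cloud figure.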

NPUs and Edge Hardware

Developers who first tried LLMs on Raspberry Pi-class boards got single-digit tokens per second on the CPU. That picture is outdated where dedicated accelerators are available: the NPU rewrites the math.

A Neural Processing Unit is silicon built for matrix multiply-accumulate operations. It is purpose-built rather than a flexible GPU, and it now ships in most flagship phone and laptop SoCs. Power is the deciding factor. Phi-4 on a Snapdragon X Elite CPU draws 15 to 20W sustained. The same job on the NPU drops to 3 to 5W and runs at 30 to 35 tokens per second. For a battery-powered device, that is the difference between shipping and not.

| Platform | CPU / Memory | AI Accelerator | Phi-4 (INT4, tokens/sec) | Llama 3.2-1B (INT4, tokens/sec) | Idle Power Draw | Active Inference Power |
|---|---|---|---|---|---|---|
| Raspberry Pi 5 (8GB) | Cortex-A76, 4-core, 8GB LPDDR4X | None (CPU-only via llama.cpp) | 4–6 t/s | 18–24 t/s | 2.5W | 6–8W |
| Jetson Orin Nano (8GB) | Cortex-A78AE, 6-core, 8GB LPDDR5 | 40 TOPS Ampere GPU | 22–28 t/s (GPU) | 55–70 t/s (GPU) | 5W | 10–15W |
| STM32N6 | Cortex-M55, 1-core, ~4MB on-chip SRAM + external Flash | Neural-ART NPU, 600 GOPS (plus Helium vector extensions on the M55) | Not feasible | Not feasible | 0.05W | 0.3–1W |
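Active power and throughput combine into one efficiency number: joules per token, which is watts divided by tokens per second. A minimal sketch using the midpoints of the ranges in the table above, plus the Snapdragon X Elite NPU figures quoted earlier. It assumes the whole board's active power is spent on inference, which somewhat overstates the per-token cost.

```python
def joules_per_token(watts: float, tokens_per_sec: float) -> float:
    """Energy spent per generated token: W / (tokens/s) = J/token."""
    return watts / tokens_per_sec


# (active power in W, tokens/sec): midpoints of the quoted ranges.
platforms = {
    "Raspberry Pi 5, Llama 3.2-1B": (7.0, 21.0),
    "Raspberry Pi 5, Phi-4": (7.0, 5.0),
    "Jetson Orin Nano, Llama 3.2-1B": (12.5, 62.5),
    "Jetson Orin Nano, Phi-4": (12.5, 25.0),
    "Snapdragon X Elite NPU, Phi-4": (4.0, 32.5),
}

for name, (watts, tps) in platforms.items():
    print(f"{name}: {joules_per_token(watts, tps):.3f} J/token")
```

The comparison is only meaningful within one model: a faster accelerator can draw more power and still spend less energy per token.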

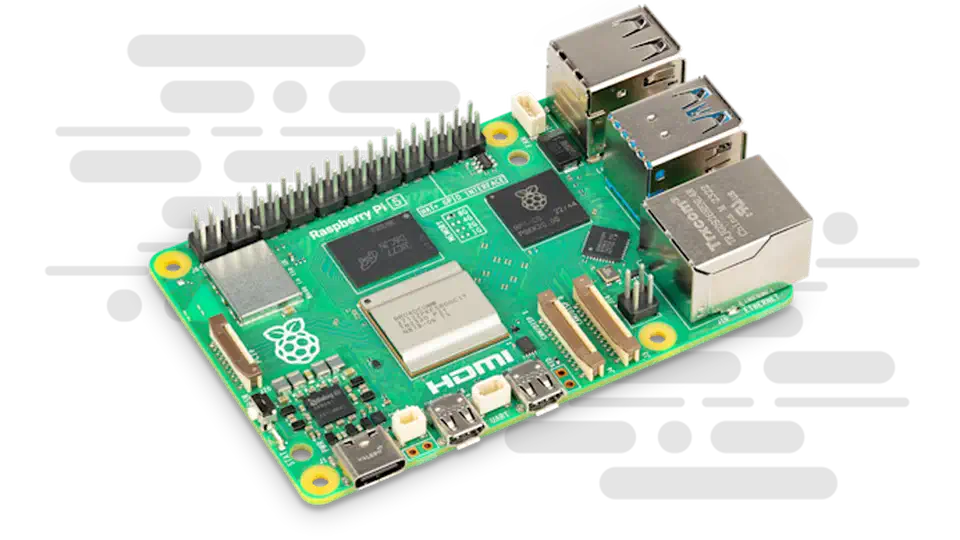

The Raspberry Pi 5 is the hobbyist entry point. With 8GB of RAM and llama.cpp tuned for the Cortex-A76, Llama 3.2-1B runs at 18 to 24 tokens per second. That is fast enough for local voice assistants. Phi-4 also works but crawls at 4 to 6 tokens per second, which suits nightly batch jobs.

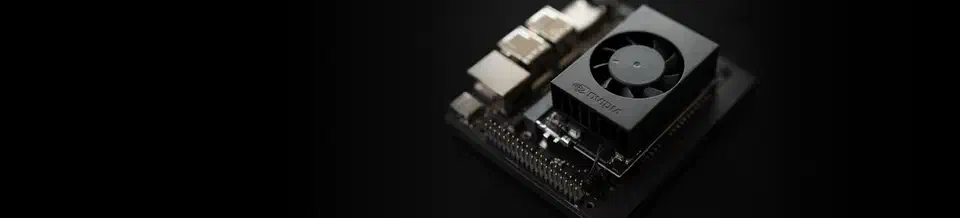

The Jetson Orin Nano is the pro pick. Its 40 TOPS Ampere GPU hits 22 to 28 tokens per second on Phi-4 via TensorRT. Smart cameras, inspection rigs, and retail kiosks all fit. The STM32N6 cannot run an LLM. It runs tiny intent classifiers at sub-watt power and wakes a bigger chip on demand.

Deployment: Quantization, GGUF, and Cascades

Two formats dominate today: GGUF for CPU inference via llama.cpp, and ONNX for NPUs and cross-platform builds.
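A GGUF file is self-describing: a small fixed header, then metadata key/value pairs (where the tokenizer and model hyperparameters live), then tensor descriptors and the weights. A minimal sketch that reads only the fixed header, assuming GGUF version 2 or later, where the header is a 4-byte `GGUF` magic, a uint32 version, and two uint64 counts, all little-endian (version 1 used uint32 counts).

```python
import struct

GGUF_MAGIC = b"GGUF"
HEADER_FORMAT = "<4sIQQ"  # magic, version, tensor count, metadata KV count
HEADER_SIZE = struct.calcsize(HEADER_FORMAT)  # 24 bytes


def read_gguf_header(data: bytes) -> dict:
    """Parse the fixed-size GGUF header from the first bytes of a file."""
    if len(data) < HEADER_SIZE:
        raise ValueError(f"need at least {HEADER_SIZE} bytes for a GGUF header")
    magic, version, n_tensors, n_kv = struct.unpack_from(HEADER_FORMAT, data, 0)
    if magic != GGUF_MAGIC:
        raise ValueError(f"not a GGUF file (magic={magic!r})")
    return {"version": version, "tensor_count": n_tensors, "metadata_kv_count": n_kv}


def read_gguf_header_from_file(path: str) -> dict:
    """Read only the header; the weights that follow are never loaded."""
    with open(path, "rb") as f:
        return read_gguf_header(f.read(HEADER_SIZE))
```

Checking the header this way is a cheap sanity test before handing a multi-gigabyte file to a runtime.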

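Quantization replaces 16- or 32-bit floating-point weights with 8-bit or 4-bit integers. Relative to FP16, INT8 halves memory and INT4 cuts it to a quarter. Throughput climbs mainly because each generated token moves fewer bytes through memory, and integer math is also cheaper than floating point. INT8 costs about 0.5 to 2% on benchmarks; INT4 costs 2 to 5%. For narrow tasks, the loss is often invisible.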
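The core idea fits in a few lines: pick a scale so the largest weight maps to the top of the integer range, round every weight to the nearest step, and multiply back by the scale at inference time. A minimal sketch of symmetric per-tensor INT8 quantization; it is one simple scheme for illustration, not what GGUF K-quants or ONNX Runtime do exactly, since those use per-block or per-channel scales.

```python
def quantize_int8(weights):
    """Symmetric per-tensor INT8 quantization.

    The scale maps the largest |w| to 127; each weight becomes
    round(w / scale), clamped to [-127, 127].
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q, scale):
    """Recover approximate float weights from integers and the scale."""
    return [v * scale for v in q]


weights = [0.12, -0.5, 0.033, 0.49, -0.007]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
# Rounding error per weight is at most half a quantization step.
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(q, f"scale={scale:.6f}", f"max_err={max_err:.6f}")
```

Storing `q` as one byte per weight plus a single float scale is where the 2x saving over FP16 comes from; INT4 applies the same idea with only 16 levels, which is why its accuracy cost is higher.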

GGUF bundles weights and tokenizer into one file. Q4_K_M is the typical pick: it fits Phi-4 in 2.4GB and keeps over 95% of the FP16 score. ONNX Runtime takes a priority-ordered list of execution providers, assigns each part of the model graph to the first provider that supports it, and falls back to the CPU for the rest: QNN for Qualcomm, TensorRT for NVIDIA, Core ML for Apple.
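In practice the application builds that priority list from what the installed ONNX Runtime build reports. A minimal sketch of the selection logic, kept free of the `onnxruntime` import so it runs anywhere; the preference order is an assumption you would tune per product, while the provider name strings are the ones ONNX Runtime uses.

```python
# Preference order (an assumption): vendor accelerators first, CPU last.
PREFERRED_PROVIDERS = [
    "QNNExecutionProvider",       # Qualcomm NPUs
    "TensorrtExecutionProvider",  # NVIDIA GPUs via TensorRT
    "CUDAExecutionProvider",      # NVIDIA GPUs, plain CUDA
    "CoreMLExecutionProvider",    # Apple devices
    "CPUExecutionProvider",       # always-available fallback
]


def choose_providers(available):
    """Return the preferred providers that are available, in priority order.

    The CPU provider is always appended last so unsupported graph
    nodes still have somewhere to run.
    """
    chosen = [p for p in PREFERRED_PROVIDERS if p in available]
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")
    return chosen
```

With ONNX Runtime installed, the list feeds straight into a session: `ort.InferenceSession("model.onnx", providers=choose_providers(ort.get_available_providers()))`, where `model.onnx` is a placeholder path.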

The best edge systems use a cascade. A sub-100M intent classifier tags every request. In-domain queries go to the local 3B model. Out-of-domain queries hit a bigger local model or the cloud. The classifier adds 10 to 20ms and spares the main model most of the work.
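The routing step of such a cascade is small. A minimal sketch, assuming three hypothetical tiers (`local_small`, `local_large`, `cloud`); the keyword lookup is a stand-in for the sub-100M classifier, which in a real system would be a trained model returning an intent label.

```python
from dataclasses import dataclass


@dataclass
class Route:
    target: str  # hypothetical tiers: "local_small", "local_large", "cloud"
    intent: str


# Hypothetical stand-in for a small intent classifier: keyword lookup.
IN_DOMAIN_KEYWORDS = {
    "lights": "smart_home",
    "thermostat": "smart_home",
    "translate": "translation",
    "summarize": "summary",
}


def classify_intent(text: str) -> str:
    """Tag a request with an intent, or 'out_of_domain' if none matches."""
    lowered = text.lower()
    for keyword, intent in IN_DOMAIN_KEYWORDS.items():
        if keyword in lowered:
            return intent
    return "out_of_domain"


def route(text: str, cloud_allowed: bool = True) -> Route:
    """In-domain requests stay on the small local model; the rest escalate."""
    intent = classify_intent(text)
    if intent != "out_of_domain":
        return Route("local_small", intent)
    # Privacy-restricted deployments escalate to a bigger local model instead.
    return Route("cloud" if cloud_allowed else "local_large", intent)
```

The `cloud_allowed` flag reflects the design choice in the text: out-of-domain traffic goes to a bigger local model when policy rules out the cloud.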

Fine-tuning is also cheap now. QLoRA on a 12GB GPU trains a Phi-4 or Gemma 3 adapter in 2 to 4 hours on a few thousand examples. The adapter weighs 50 to 200MB. A tuned model on your support corpus often beats a much larger general model on that one task.
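The adapter stays small because LoRA trains two thin matrices, A (rank × d_in) and B (d_out × rank), beside each frozen weight matrix; QLoRA additionally keeps the frozen base in 4-bit form during training. A minimal sketch of the size arithmetic, assuming hypothetical dimensions (hidden size 3072, 32 layers, rank 64, adapters on four square attention projections, 16-bit storage) rather than any specific model's configuration; real models with grouped-query attention have smaller key and value projections.

```python
def lora_adapter_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters LoRA adds for one matrix: A (rank x d_in) + B (d_out x rank)."""
    return rank * (d_in + d_out)


def adapter_size_mb(hidden: int, layers: int, rank: int,
                    matrices_per_layer: int, bytes_per_param: int = 2) -> float:
    """Adapter file size in MB, assuming every adapted matrix is hidden x hidden."""
    per_matrix = lora_adapter_params(hidden, hidden, rank)
    total = per_matrix * matrices_per_layer * layers
    return total * bytes_per_param / 1e6


# Hypothetical config: hidden 3072, 32 layers, rank 64, 4 attention projections.
print(f"{adapter_size_mb(3072, 32, 64, 4):.1f} MB")
```

Doubling the rank or adapting the MLP matrices as well scales the size up proportionally, which is how adapters span the 50 to 200MB range.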

Privacy Is the Blocker, Not the Feature

For regulated teams, privacy is not a nice-to-have. It is a blocker. Cloud inference needs legal review, data processing agreements, and often user consent. On-device AI sidesteps most of it.

GDPR Article 9 restricts processing of health and biometric data. HIPAA requires a signed Business Associate Agreement with every cloud vendor in the chain that handles protected health information. Healthcare legal review for a new cloud AI vendor can take 6 to 18 months. On-device inference removes the cloud vendor from that review entirely, because the data never leaves the device.

Benchmarks and When to Choose an SLM

The table below pairs benchmark scores with RAM and throughput figures for INT4 GGUF inference on Raspberry Pi 5 class ARM CPUs.

| Model | Parameters | MMLU (5-shot) | ARC-Challenge | GSM8K | RAM (INT4 GGUF) | Tokens/sec (Pi 5) |
|---|---|---|---|---|---|---|
| Llama 3.2-1B | 1.2B | 49.3% | 59.4% | 44.4% | ~0.7GB | 18–24 |
| Gemma 3 (4B) | 4B | 71.2% | 78.3% | 76.1% | ~2.5GB | 7–10 |
| Phi-4 | 3.8B | 78.4% | 83.6% | 91.2% | ~2.4GB | 5–8 |
| Mistral 7B | 7.2B | 64.2% | 70.1% | 52.2% | ~4.5GB | 3–5 |
| Mistral Nemo (12B) | 12B | 68.0% | 74.5% | 68.1% | ~7.5GB | 1–2 |

Phi-4 leads the 3 to 4B class, especially on math (91.2% GSM8K). Gemma 3 trails on raw scores but ships better NPU tooling. Mistral 7B is now awkward: it is bigger than Phi-4 but weaker, because it predates the synthetic-data training recipes behind models like Phi-4. For a wider rundown of open model families, see our open model comparison.

Pick an SLM when the task is narrow, your hardware has under 8GB of RAM, you need sub-500ms responses without a network hop, or privacy rules block the cloud. Skip an SLM when the task needs broad world knowledge, long multi-step reasoning over big documents, or general chat across any topic. If you have no hardware limit and cloud economics already work, stay in the cloud.

The playbook is to start with an SLM, log its failure rate on real traffic, and route only the cases it cannot handle to a bigger model. Most teams find the small one covers 80 to 95% of production load. The shift from cloud-default to edge-default is structural, not a fad.
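That playbook is a fallback router with a counter attached. A minimal sketch, assuming the small model signals failure by returning `None`; in practice that signal might be a confidence threshold or an output validation check, and both model functions in the usage below are hypothetical stand-ins.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class FallbackRouter:
    """Try the small model first; escalate only when it cannot answer."""
    small: Callable[[str], Optional[str]]  # returns None on failure
    large: Callable[[str], str]
    handled_locally: int = 0
    escalated: int = 0

    def answer(self, prompt: str) -> str:
        result = self.small(prompt)
        if result is not None:
            self.handled_locally += 1
            return result
        self.escalated += 1
        return self.large(prompt)

    @property
    def local_coverage(self) -> float:
        """Share of traffic the small model handled on its own."""
        total = self.handled_locally + self.escalated
        return self.handled_locally / total if total else 0.0


def small_model(prompt: str) -> Optional[str]:
    # Hypothetical failure rule: give up on long prompts.
    return "local answer" if len(prompt) < 40 else None


def large_model(prompt: str) -> str:
    return "escalated answer"


router = FallbackRouter(small_model, large_model)
for p in ["short question", "another one", "a" * 50]:
    router.answer(p)
print(f"local coverage: {router.local_coverage:.0%}")
```

Logging `local_coverage` on real traffic is what tells a team whether the small model really covers the bulk of production load.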

Botmonster Tech
