FastAPI Webhook Bot: GitHub and Gitea Automation

You can build a bot that labels issues, enforces PR naming, posts review comments, and triggers workflows. Write a FastAPI app that receives webhooks from GitHub or Gitea, checks the signature, and calls back to the right API. Header names and payload shapes differ only slightly between the two forges, so one handler and one codebase can serve both.

How Repository Webhooks Work on GitHub and Gitea

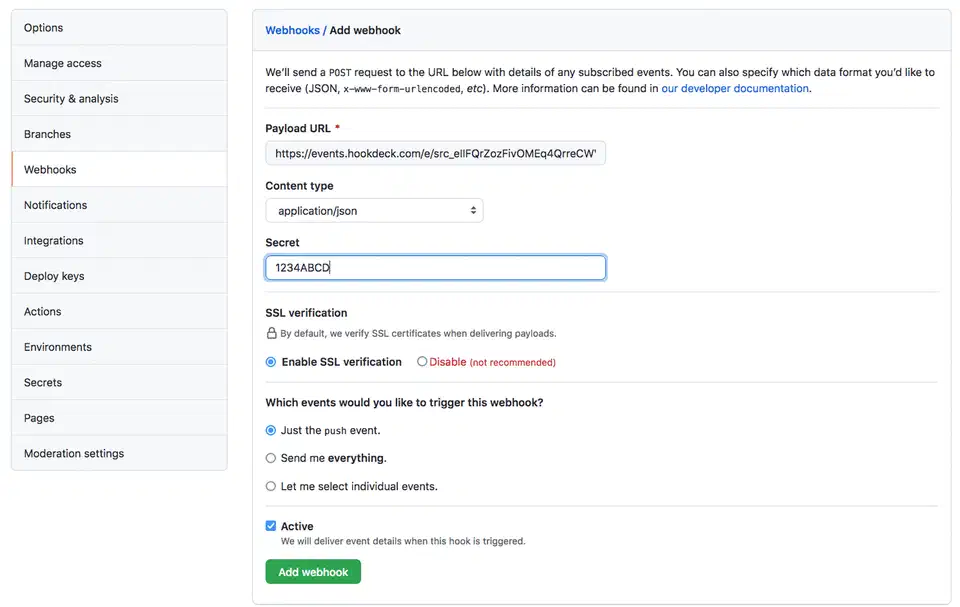

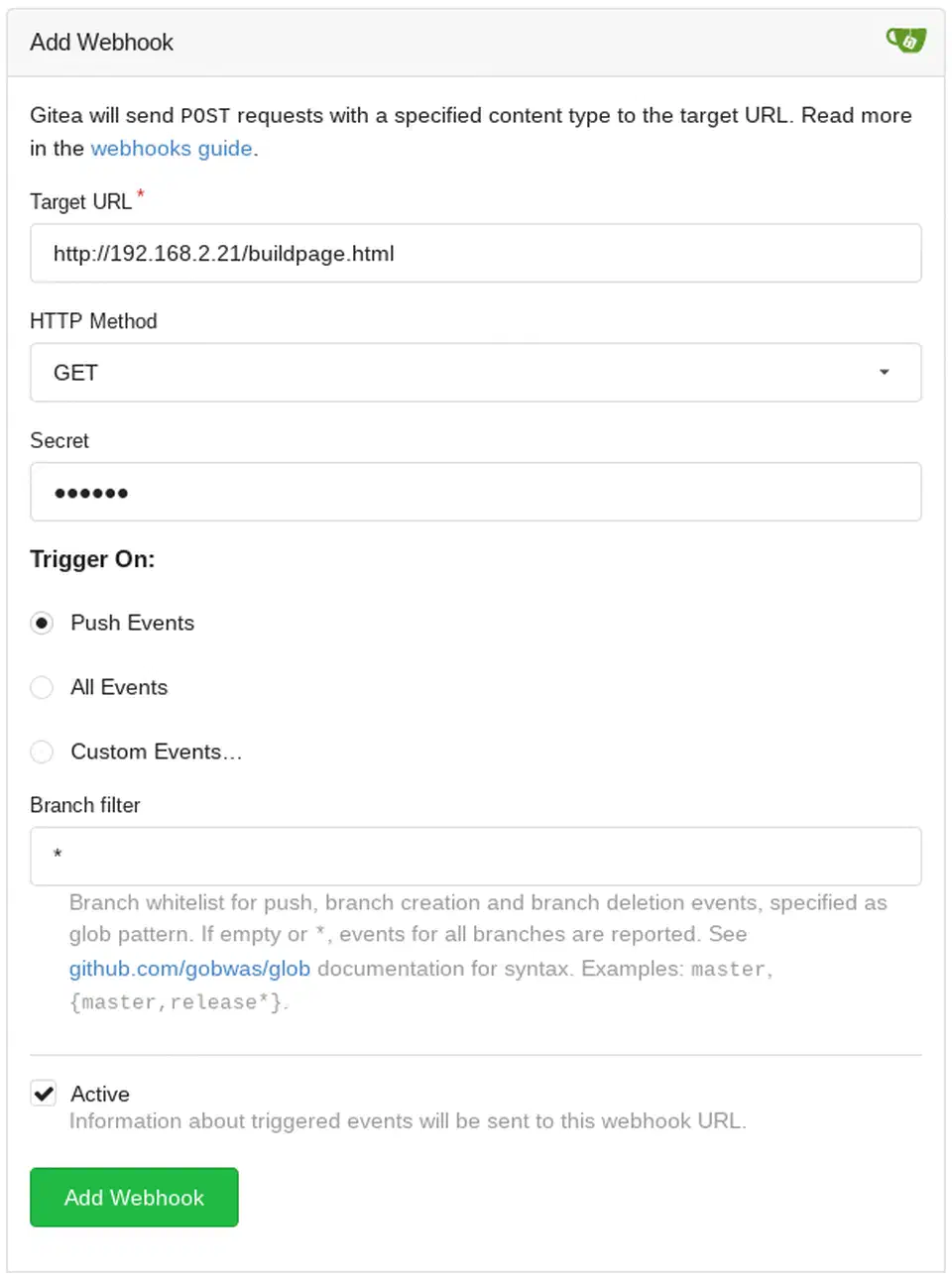

Both GitHub and Gitea let you set up webhooks at the repo, org, or (for Gitea) system level. When an event fires (someone opens an issue, pushes a commit, opens a PR) the forge sends an HTTP POST to a URL you control. The body is JSON and describes what happened.

The event types overlap a lot. push, pull_request, issues, issue_comment, pull_request_review, and release all exist on both. The event name rides in a header: X-GitHub-Event on GitHub, X-Gitea-Event on Gitea. The payload shape is mostly the same, though nested fields can differ in small ways that bite when you parse them.

Both forges sign each payload with HMAC-SHA256 when the webhook has a secret configured. You can check the signature to confirm the call came from the forge and was not changed in transit. GitHub puts it in the X-Hub-Signature-256 header, prefixed with sha256=. Gitea uses X-Gitea-Signature and sends the bare hex digest. The check is the same on your end: compute hmac.new(secret.encode(), body, hashlib.sha256).hexdigest() over the raw request body, strip the sha256= prefix if present, and compare the result to the header value.

A few operational differences are worth knowing upfront:

| Aspect | GitHub | Gitea (v1.25+) |
|---|---|---|
| Signature header | X-Hub-Signature-256 | X-Gitea-Signature |
| Event header | X-GitHub-Event | X-Gitea-Event |
| Max payload size | 25 MB | 4 MB default (configurable via MAX_SIZE in app.ini) |
| Retry behavior | No automatic retries; failed deliveries can be redelivered manually or through the API | Configurable in [webhook] section of app.ini (default: 3 retries) |
| Source IPs | Published at api.github.com/meta | Your Gitea server’s IP |
| API rate limit | 5,000 req/hr (PAT), up to 15,000 (GitHub App on Enterprise Cloud) | No hard limit by default |

GitHub delivers from IP ranges listed at https://api.github.com/meta (the hooks array). That list is what you need if you want to lock down inbound traffic with firewall rules. Gitea delivers from whatever box hosts your instance, so IP filtering is simple.
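
The allowlist check can also run inside the bot as a second line of defense. Below is a minimal sketch using only the standard library. It assumes the `hooks` array holds CIDR strings, and the helper names `fetch_hook_ranges` and `ip_in_ranges` are hypothetical, not part of any forge SDK:

```python
import ipaddress
import json
import urllib.request

GITHUB_META_URL = "https://api.github.com/meta"


def fetch_hook_ranges(url: str = GITHUB_META_URL) -> list[str]:
    """Download the published CIDR ranges used for webhook deliveries."""
    with urllib.request.urlopen(url, timeout=10) as resp:
        return json.load(resp)["hooks"]


def ip_in_ranges(ip: str, cidrs: list[str]) -> bool:
    """Return True if ip falls inside any of the given CIDR blocks."""
    addr = ipaddress.ip_address(ip)
    for cidr in cidrs:
        network = ipaddress.ip_network(cidr, strict=False)
        # Skip blocks of the other IP family (IPv4 vs IPv6)
        if addr.version == network.version and addr in network:
            return True
    return False
```

Cache the result of `fetch_hook_ranges()` rather than calling it per request. Note that behind a tunnel or reverse proxy the peer address is the proxy's, so this check only helps when the bot sees the real client IP.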

Building the Webhook Receiver with FastAPI

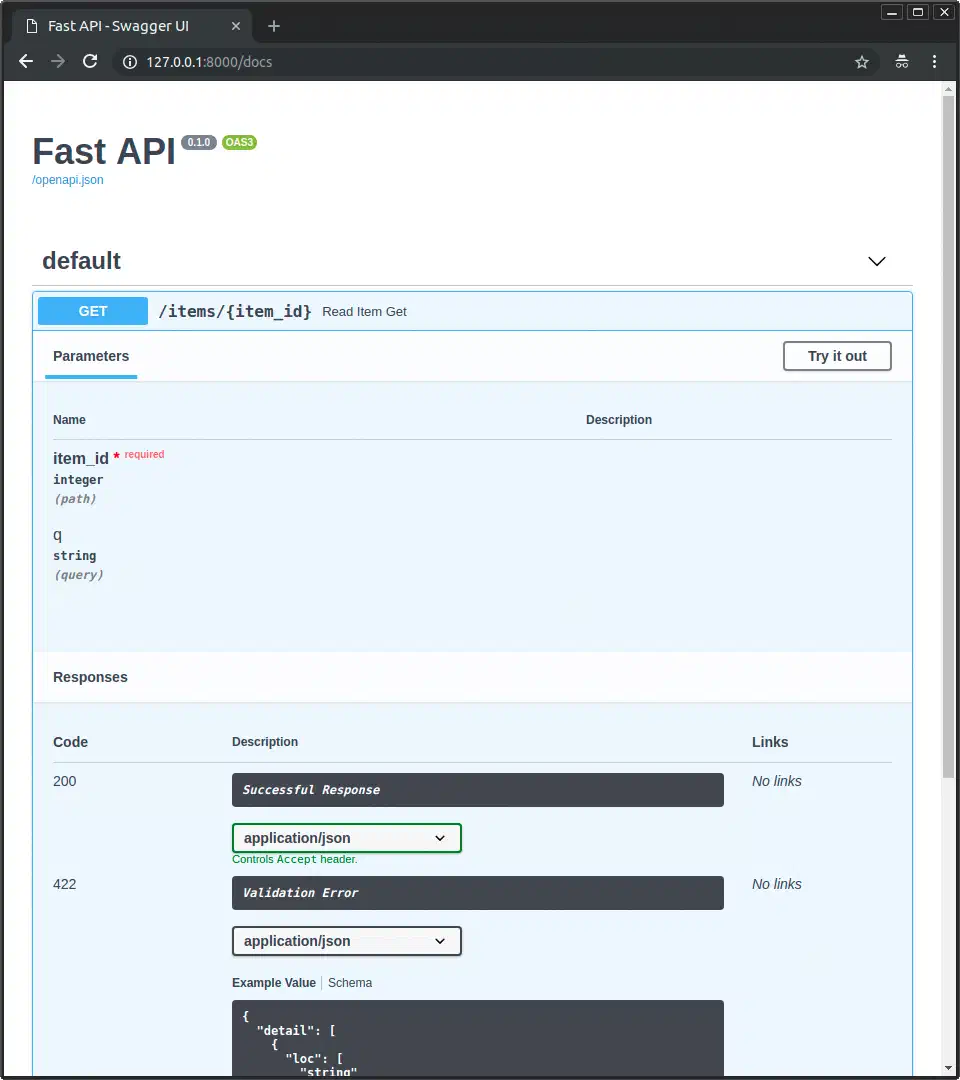

FastAPI (now at v0.135.x) works well as a webhook receiver. It handles async requests by default, validates payloads with Pydantic, and runs on Uvicorn with very little overhead. Here is how to set up the project from scratch with uv:

    uv init repo-bot && cd repo-bot
    uv add fastapi 'uvicorn[standard]' httpx pydantic python-dotenv

The httpx library handles async HTTP calls back to the GitHub or Gitea API. With the project set up, create main.py:

    import hashlib
    import hmac
    import json
    import os

    from dotenv import load_dotenv
    from fastapi import FastAPI, Request, HTTPException

    load_dotenv()

    app = FastAPI()

    WEBHOOK_SECRET = os.getenv("WEBHOOK_SECRET", "")


    def verify_signature(body: bytes, signature: str, secret: str) -> bool:
        expected = hmac.new(secret.encode(), body, hashlib.sha256).hexdigest()
        # GitHub prefixes with "sha256=", Gitea sends the raw hex digest
        if signature.startswith("sha256="):
            signature = signature[7:]
        return hmac.compare_digest(expected, signature)


    @app.post("/webhook")
    async def webhook(request: Request):
        body = await request.body()

        # Detect platform from headers
        event_type = request.headers.get("X-GitHub-Event") or request.headers.get(
            "X-Gitea-Event"
        )
        signature = request.headers.get(
            "X-Hub-Signature-256", ""
        ) or request.headers.get("X-Gitea-Signature", "")

        if not verify_signature(body, signature, WEBHOOK_SECRET):
            raise HTTPException(status_code=403, detail="Invalid signature")

        payload = json.loads(body)
        # handlers and handle_unknown are defined in the routing snippet below
        handler = handlers.get(event_type, handle_unknown)
        return await handler(payload)

The verify_signature function uses hmac.compare_digest() instead of == to block timing attacks. An attacker cannot tell how many bytes of the signature matched from the response time alone.
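
One edge case the function leaves open: if WEBHOOK_SECRET is unset, the expected digest is computed with an empty key, which anyone can reproduce. Here is a minimal sketch of a stricter variant, assuming you want to reject requests outright when the secret or the signature header is missing. The name `verify_signature_strict` is hypothetical and not part of the original code:

```python
import hashlib
import hmac


def verify_signature_strict(body: bytes, signature: str, secret: str) -> bool:
    """Fail closed: refuse to verify when the secret or signature is missing."""
    if not secret or not signature:
        return False
    # GitHub prefixes the digest with "sha256=", Gitea sends bare hex
    if signature.startswith("sha256="):
        signature = signature[len("sha256="):]
    expected = hmac.new(secret.encode(), body, hashlib.sha256).hexdigest()
    # Compare as bytes so a non-ASCII header value returns False instead of raising
    return hmac.compare_digest(expected.encode(), signature.encode())
```

It takes the same arguments as verify_signature, so it could be swapped in without touching the route.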

The routing pattern maps event types to handler functions with a plain dict:

    async def handle_issues(payload: dict):
        action = payload.get("action")
        if action == "opened":
            return await auto_label_issue(payload)
        return {"status": "ignored"}


    async def handle_pull_request(payload: dict):
        action = payload.get("action")
        if action in ("opened", "edited"):
            return await enforce_pr_title(payload)
        return {"status": "ignored"}


    async def handle_push(payload: dict):
        return {"status": "received", "commits": len(payload.get("commits", []))}


    async def handle_unknown(payload: dict):
        return {"status": "unhandled"}


    # Event name (from the X-GitHub-Event / X-Gitea-Event header) -> handler
    handlers = {
        "issues": handle_issues,
        "pull_request": handle_pull_request,
        "push": handle_push,
    }

Store secrets and API tokens in a .env file loaded by python-dotenv:

    WEBHOOK_SECRET=your-webhook-secret-here
    GITHUB_TOKEN=ghp_xxxxxxxxxxxxxxxxxxxx
    GITEA_TOKEN=your-gitea-access-token
    GITEA_URL=https://gitea.example.com

Run the development server with:

    uv run uvicorn main:app --reload --port 8000

For dev work, you need to expose your local server so GitHub or Gitea can reach it. Cloudflare Tunnel and ngrok both work well. Once exposed, set the webhook in your repo settings to point at https://your-tunnel-url/webhook. For a deeper look, the guide on running a persistent webhook relay with Cloudflare Tunnels and FastAPI covers the infra side in detail, including security and running the relay as a service.

Practical Bot Actions: Labeling, Enforcement, and Automation

The webhook receiver is plumbing. The point, however, is what the bot does when events arrive. Below are a few common automations with working code for each.

Auto-Labeling Issues

When a new issue is opened, the bot can scan the title and body for keywords and add matching labels automatically. This saves maintainers from hand triage on busy repos:

    import os

    import httpx

    LABEL_KEYWORDS = {
        "bug": ["bug", "error", "crash", "broken", "fix"],
        "feature": ["feature", "enhancement", "request", "add"],
        "question": ["question", "how to", "help", "why"],
        "documentation": ["docs", "documentation", "typo", "readme"],
    }


    async def auto_label_issue(payload: dict):
        issue = payload["issue"]
        text = f"{issue['title']} {issue['body'] or ''}".lower()
        repo = payload["repository"]["full_name"]
        number = issue["number"]

        # Plain substring match: "add" also matches "address", so tune the
        # keyword lists for your repo
        labels = [
            label for label, keywords in LABEL_KEYWORDS.items()
            if any(kw in text for kw in keywords)
        ]

        if labels:
            async with httpx.AsyncClient() as client:
                await client.post(
                    f"https://api.github.com/repos/{repo}/issues/{number}/labels",
                    json={"labels": labels},
                    headers={
                        "Authorization": f"Bearer {os.getenv('GITHUB_TOKEN')}",
                        "Accept": "application/vnd.github+json",
                    },
                )
        return {"status": "labeled", "labels": labels}

PR Title Enforcement

Many teams use Conventional Commits for PR titles so changelogs can be built from them. The bot can enforce this by checking the title against a regex. If the title does not match, it posts a comment:

    import re

    PR_TITLE_PATTERN = re.compile(
        r"^(feat|fix|docs|refactor|test|chore|ci|perf)(\(.+\))?: .{10,}$"
    )


    async def enforce_pr_title(payload: dict):
        pr = payload["pull_request"]
        repo = payload["repository"]["full_name"]
        title = pr["title"]
        sha = pr["head"]["sha"]

        if PR_TITLE_PATTERN.match(title):
            state = "success"
            description = "PR title follows Conventional Commits"
        else:
            state = "failure"
            description = "PR title must match: type(scope): description (min 10 chars)"
            # Post a comment explaining the expected format
            async with httpx.AsyncClient() as client:
                await client.post(
                    f"https://api.github.com/repos/{repo}/issues/{pr['number']}/comments",
                    json={"body": (
                        "**PR Title Check Failed**\n\n"
                        "Please format your title as: `type(scope): description`\n\n"
                        "Valid types: `feat`, `fix`, `docs`, `refactor`, `test`, "
                        "`chore`, `ci`, `perf`\n\n"
                        f"Your title: `{title}`"
                    )},
                    headers={
                        "Authorization": f"Bearer {os.getenv('GITHUB_TOKEN')}",
                        "Accept": "application/vnd.github+json",
                    },
                )

        # Set commit status
        async with httpx.AsyncClient() as client:
            await client.post(
                f"https://api.github.com/repos/{repo}/statuses/{sha}",
                json={
                    "state": state,
                    "description": description,
                    "context": "pr-title-check",
                },
                headers={
                    "Authorization": f"Bearer {os.getenv('GITHUB_TOKEN')}",
                    "Accept": "application/vnd.github+json",
                },
            )
        return {"status": state}

Stale Issue Management

For repos that pile up old issues, a scheduled task can sweep them. A cron job or a webhook from a scheduler can query for stale issues and label them as a warning before they are auto-closed:

    from datetime import datetime, timedelta, timezone


    async def mark_stale_issues(repo: str, days: int = 30):
        # Format the cutoff like the API's updated_at (UTC, second precision)
        # so the string comparison below orders correctly
        cutoff = (datetime.now(timezone.utc) - timedelta(days=days)).strftime(
            "%Y-%m-%dT%H:%M:%SZ"
        )

        async with httpx.AsyncClient() as client:
            response = await client.get(
                f"https://api.github.com/repos/{repo}/issues",
                params={
                    "state": "open",
                    "sort": "updated",
                    "direction": "asc",
                    "per_page": 50,
                },
                headers={
                    "Authorization": f"Bearer {os.getenv('GITHUB_TOKEN')}",
                    "Accept": "application/vnd.github+json",
                },
            )
            issues = response.json()

            for issue in issues:
                # The issues endpoint also returns PRs; skip those
                if issue["updated_at"] < cutoff and "pull_request" not in issue:
                    await client.post(
                        f"https://api.github.com/repos/{repo}/issues/{issue['number']}/labels",
                        json={"labels": ["stale"]},
                        headers={
                            "Authorization": f"Bearer {os.getenv('GITHUB_TOKEN')}",
                            "Accept": "application/vnd.github+json",
                        },
                    )

Custom CI Triggers

On push events to certain branches, fire an external CI pipeline by calling its API. This helps when your CI system (Jenkins, Drone, Woodpecker) is not wired into your forge:

    # Replaces the earlier handle_push stub
    async def handle_push(payload: dict):
        # "refs/heads/feature/x" -> "feature/x"; split("/")[-1] would return "x"
        branch = payload["ref"].removeprefix("refs/heads/")
        if branch in ("main", "staging"):
            sha = payload["after"]
            async with httpx.AsyncClient() as client:
                await client.post(
                    "https://ci.example.com/api/pipelines",
                    json={"branch": branch, "commit": sha},
                    # Read the CI token from the environment, not a literal
                    headers={"Authorization": f"Bearer {os.getenv('CI_TOKEN')}"},
                )
            return {"status": "triggered"}
        return {"status": "ignored"}

If you run Gitea Actions on a self-hosted runner with a GPU, you can extend this pattern. The bot can post LLM-built code feedback as PR comments. The approach in the guide to automating code reviews with local LLMs in a CI pipeline pairs well with the webhook handler here.
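
One detail of the push handler worth isolating is the `ref` field, which also carries tag pushes. Below is a small sketch of a parser, assuming the standard Git prefixes refs/heads/ and refs/tags/. The helper name `parse_ref` is hypothetical:

```python
from typing import Optional, Tuple


def parse_ref(ref: str) -> Optional[Tuple[str, str]]:
    """Split a Git ref into (kind, name); return None for other ref types."""
    for prefix, kind in (("refs/heads/", "branch"), ("refs/tags/", "tag")):
        if ref.startswith(prefix):
            return kind, ref[len(prefix):]
    return None
```

With this, a push to refs/heads/feature/main yields the branch feature/main, which a last-segment split would mistake for main.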

Handling GitHub and Gitea Differences in One Codebase

If you use GitHub for public projects and Gitea for private or self-hosted repos, you don’t need two bots. The platform gaps are small enough to hide behind a thin layer.

Start with a Platform enum and auto-detection:

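    from enum import Enum


    class Platform(str, Enum):
        GITHUB = "github"
        GITEA = "gitea"


    def detect_platform(headers: dict) -> Platform:
        # Header names are case-insensitive, and dict(request.headers) yields
        # lowercase keys, so compare in lowercase
        names = {name.lower() for name in headers}
        # Check Gitea first: its deliveries may also carry GitHub-compatible
        # X-GitHub-Event headers
        if "x-gitea-event" in names:
            return Platform.GITEA
        elif "x-github-event" in names:
            return Platform.GITHUB
        raise ValueError("Unknown platform")

The core is a ForgeClient class. It swaps the base URL and auth headers based on the platform: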

    class ForgeClient:
        def __init__(self, platform: Platform):
            self.platform = platform
            if platform == Platform.GITHUB:
                self.base_url = "https://api.github.com"
                self.headers = {
                    "Authorization": f"Bearer {os.getenv('GITHUB_TOKEN')}",
                    "Accept": "application/vnd.github+json",
                }
            else:
                gitea_url = os.getenv("GITEA_URL", "https://gitea.example.com")
                self.base_url = f"{gitea_url}/api/v1"
                self.headers = {
                    "Authorization": f"token {os.getenv('GITEA_TOKEN')}",
                }

        async def add_labels(self, repo: str, issue_number: int, labels: list[str]):
            async with httpx.AsyncClient() as client:
                await client.post(
                    f"{self.base_url}/repos/{repo}/issues/{issue_number}/labels",
                    json={"labels": labels},
                    headers=self.headers,
                )

        async def post_comment(self, repo: str, issue_number: int, body: str):
            async with httpx.AsyncClient() as client:
                await client.post(
                    f"{self.base_url}/repos/{repo}/issues/{issue_number}/comments",
                    json={"body": body},
                    headers=self.headers,
                )

        async def set_commit_status(self, repo: str, sha: str, state: str,
                                    description: str, context: str):
            async with httpx.AsyncClient() as client:
                await client.post(
                    f"{self.base_url}/repos/{repo}/statuses/{sha}",
                    json={
                        "state": state,
                        "description": description,
                        "context": context,
                    },
                    headers=self.headers,
                )

To normalize payloads, define Pydantic models for a unified event shape. Then write a mapping function:

    from pydantic import BaseModel


    class PullRequestEvent(BaseModel):
        number: int
        title: str
        author: str
        base_branch: str
        head_branch: str
        head_sha: str
        action: str


    def normalize_pr_event(platform: Platform, payload: dict) -> PullRequestEvent:
        # These fields are read the same way for both forges here; branch on
        # platform where the payloads diverge
        pr = payload["pull_request"]
        return PullRequestEvent(
            number=pr["number"],
            title=pr["title"],
            author=pr["user"]["login"],
            base_branch=pr["base"]["ref"],
            head_branch=pr["head"]["ref"],
            head_sha=pr["head"]["sha"],
            action=payload["action"],
        )

The commit status API is the same on both forges as of Gitea 1.19. It uses the same endpoint path (/repos/{owner}/{repo}/statuses/{sha}) and the same state values (success, failure, pending). Gitea mirrors GitHub’s API on purpose in many places to ease migration.

Auth is the main split. GitHub expects Authorization: Bearer ghp_xxxxx. Gitea (v1.22+) takes either Authorization: token <access_token> or Authorization: Bearer <access_token>.

A Note on GitHub Apps vs Personal Access Tokens

For anything past a personal project bot, use a GitHub App instead of a PAT. Apps are not tied to a user account. They use short-lived install tokens, so the blast radius is small if one leaks. They offer fine-grained permissions and higher rate limits (up to 15,000 requests/hour on Enterprise Cloud vs. 5,000 for PATs). They also keep working when the creator leaves the org.

Gitea has no direct match for GitHub Apps, so PATs are still the standard there. If you run a multi-platform bot, your ForgeClient can use a GitHub App install token for GitHub and a PAT for Gitea with no changes to the handler code.
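
One way to keep handler code unchanged is to hand the client a callable that returns the current token. The GitHub side can then swap in a short-lived install token while Gitea keeps a static PAT. This is a rough sketch under that assumption: `TokenProvider`, `static_token`, `cached_token`, and `auth_headers` are hypothetical names, and minting the install token itself is left to whatever `fetch` callable you supply:

```python
import os
import time
from typing import Callable, Dict

TokenProvider = Callable[[], str]


def static_token(env_var: str) -> TokenProvider:
    """Provider for a long-lived PAT read from the environment."""
    return lambda: os.environ[env_var]


def cached_token(fetch: Callable[[], str], ttl_seconds: float) -> TokenProvider:
    """Wrap a short-lived token source and refresh it once the TTL passes."""
    state = {"token": "", "expires": 0.0}

    def provider() -> str:
        now = time.monotonic()
        if now >= state["expires"]:
            state["token"] = fetch()
            state["expires"] = now + ttl_seconds
        return state["token"]

    return provider


def auth_headers(scheme: str, provider: TokenProvider) -> Dict[str, str]:
    """Build the Authorization header from whatever the provider returns."""
    return {"Authorization": f"{scheme} {provider()}"}
```

A ForgeClient built this way would call `auth_headers("Bearer", ...)` for GitHub and `auth_headers("token", ...)` for Gitea on each request, so an expired token is replaced without the handlers noticing.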

Deploying and Monitoring the Bot

A bot that fails in silence is worse than no bot at all. Your webhook handler is a real service. Treat it like one: a container, structured logs, and health checks.

Docker Container

Package the bot as a single Docker container:

    FROM python:3.13-slim
    WORKDIR /app
    COPY pyproject.toml uv.lock ./
    RUN pip install uv && uv sync --no-dev
    COPY . .
    CMD ["uv", "run", "uvicorn", "main:app", "--host", "0.0.0.0", "--port", "8000"]

Using python:3.13-slim as the base and cutting dev deps keeps the image under 100 MB. For Gitea self-hosted setups, run the bot on the same Docker network as Gitea. Set the webhook URL to http://repo-bot:8000/webhook (internal Docker DNS) to dodge hairpin NAT bugs.

A small docker-compose.yml for running next to Gitea:

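    services:
      repo-bot:
        build: .
        ports:
          - "8000:8000"
        env_file: .env
        restart: unless-stopped
        healthcheck:
          # python:3.13-slim ships without curl, so probe with Python instead
          test: ["CMD", "python", "-c", "import urllib.request; urllib.request.urlopen('http://localhost:8000/health')"]
          interval: 30s
          timeout: 5s
          retries: 3

Structured Logging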

Add structlog for machine-readable logs. They make prod debugging a lot less painful:

    import time

    import structlog

    logger = structlog.get_logger()


    @app.post("/webhook")
    async def webhook(request: Request):
        start = time.monotonic()
        body = await request.body()
        event_type = request.headers.get("X-GitHub-Event") or request.headers.get(
            "X-Gitea-Event"
        )
        delivery_id = request.headers.get("X-GitHub-Delivery") or request.headers.get(
            "X-Gitea-Delivery", "unknown"
        )

        # ... signature verification and handling ...
        # (that step sets payload and result, as in the first version of webhook)

        elapsed = (time.monotonic() - start) * 1000
        logger.info(
            "webhook_processed",
            event_type=event_type,
            delivery_id=delivery_id,
            processing_time_ms=round(elapsed, 2),
            repo=payload.get("repository", {}).get("full_name"),
        )
        return result

Ship logs to stdout so Docker picks them up. If you need search and aggregation, send them on to Loki or Elasticsearch.

Health and Metrics Endpoints

Add a /health endpoint for liveness probes from your container orchestrator. Add a /metrics endpoint for Prometheus to scrape:

    from collections import defaultdict

    from fastapi.responses import PlainTextResponse

    # Increment in the webhook handler: event_counts[event_type] += 1
    event_counts = defaultdict(int)


    @app.get("/health")
    async def health():
        return {"status": "ok"}


    # Prometheus expects plain text; a bare str return would be JSON-encoded
    @app.get("/metrics", response_class=PlainTextResponse)
    async def metrics():
        lines = []
        for key, count in event_counts.items():
            lines.append(f'webhook_events_total{{event_type="{key}"}} {count}')
        return "\n".join(lines) + "\n"

For deeper observability, reach for a library like prometheus-fastapi-instrumentator. It tracks request duration, status codes, and in-flight requests for you.
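
If you stay with a hand-rolled endpoint, the rendering step is easy to pull out and test on its own. The sketch below adds the HELP and TYPE comment lines of the Prometheus text format and escapes label values, since the event type comes from a request header. The function names `escape_label` and `render_metrics` are hypothetical:

```python
from collections import Counter


def escape_label(value: str) -> str:
    """Escape backslash, double quote, and newline for a label value."""
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def render_metrics(counts: Counter) -> str:
    """Render per-event counters in the Prometheus text exposition format."""
    lines = [
        "# HELP webhook_events_total Webhook deliveries received, by event type.",
        "# TYPE webhook_events_total counter",
    ]
    for event_type, count in sorted(counts.items()):
        label = escape_label(event_type)
        lines.append(f'webhook_events_total{{event_type="{label}"}} {count}')
    return "\n".join(lines) + "\n"
```

The /metrics route would then return `render_metrics(event_counts)` as plain text.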

Testing Webhook Deliveries

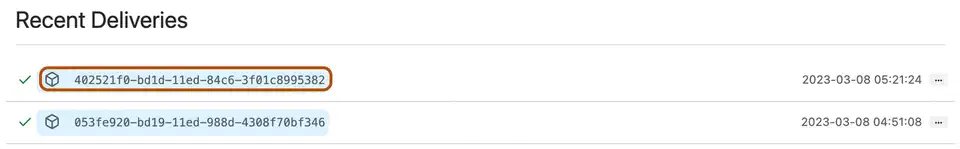

Both forges show delivery logs in the webhook settings UI. You can see the request headers and full payload for every delivery. GitHub’s “Redeliver” button lets you replay a payload against your endpoint. That saves a lot of time during dev.

For local testing with no real events, save a sample payload to a file and use curl:

    # Generate HMAC signature for test payload
    SIGNATURE=$(cat test-payload.json | openssl dgst -sha256 -hmac "your-secret" | cut -d' ' -f2)

    # --data-binary sends the file byte-for-byte; -d would strip newlines
    # and the signature would no longer match
    curl -X POST http://localhost:8000/webhook \
      -H "Content-Type: application/json" \
      -H "X-GitHub-Event: issues" \
      -H "X-Hub-Signature-256: sha256=$SIGNATURE" \
      --data-binary @test-payload.json

This approach lets you build up a library of test payloads, one per event type. Run them in CI to catch regressions before you deploy. To stress the signature check and event router harder, Hypothesis property testing finds edge cases by feeding hundreds of randomized payloads through verify_signature automatically.
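
For a payload library that runs in CI, the signing step can also live in Python. This is a small sketch assuming the header conventions described earlier (a sha256= prefix for GitHub, bare hex for Gitea). The helper name `signed_headers` is hypothetical:

```python
import hashlib
import hmac


def signed_headers(body: bytes, secret: str, event: str, forge: str = "github") -> dict:
    """Build the event and signature headers a forge would send for body."""
    digest = hmac.new(secret.encode(), body, hashlib.sha256).hexdigest()
    if forge == "github":
        return {
            "Content-Type": "application/json",
            "X-GitHub-Event": event,
            "X-Hub-Signature-256": f"sha256={digest}",
        }
    if forge == "gitea":
        return {
            "Content-Type": "application/json",
            "X-Gitea-Event": event,
            "X-Gitea-Signature": digest,
        }
    raise ValueError(f"unknown forge: {forge}")
```

Send the returned headers together with the exact same bytes that were signed. Any re-serialization of the JSON changes the digest.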

A webhook bot takes maybe an afternoon to build. It saves hours of dull triage each week. The pattern is the same for every automation you add: take a POST, check the signature, route by event type, call the API. Whether you run GitHub, Gitea, or both, the code gaps fit behind a thin layer. Auto-labeling issues is a good first job to wire up. From there you can add PR checks, stale issue cleanup, or whatever else your team keeps doing by hand.

Botmonster Tech
