Claude Code Is Built Entirely on MCP - What the Source Leak Revealed

Claude Code does not use MCP

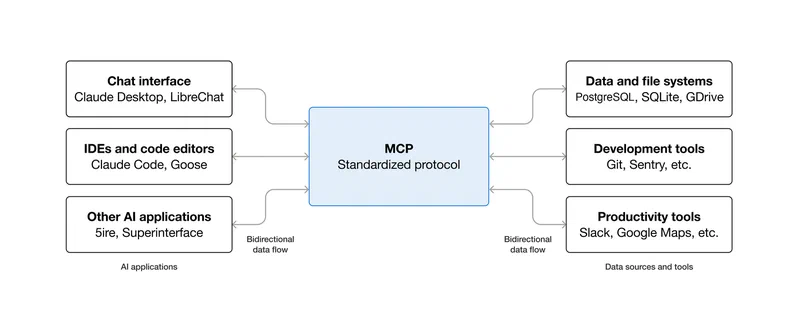

as a plugin system. It is MCP. When Anthropic accidentally shipped a 59.8 MB source map file in npm package @anthropic-ai/claude-code v2.1.88 on March 31, 2026, the developer community got an unprecedented look at how a production AI coding agent actually works. Every single capability in Claude Code - file reads, bash execution, web fetches, Computer Use, IDE bridges - runs as a discrete, permission-gated MCP tool call. There is no special internal API. Third-party MCP servers you connect get the exact same execution path, permission checks, and error handling as Anthropic’s own built-in tools.

This architecture makes Claude Code the largest known production MCP deployment, and the leaked code raises questions about security, extensibility, and where AI agents are going next.

The Accidental Reveal

On March 31, 2026, security researcher Chaofan Shou discovered that Anthropic’s npm package shipped with a JavaScript source map file pointing to a ZIP archive on Anthropic’s Cloudflare R2 bucket. The archive contained approximately 512,000 lines of unobfuscated TypeScript across 1,906 files - the complete Claude Code codebase.

Within hours, the code was mirrored across GitHub, spawning what Cybernews called the fastest-growing repository in GitHub’s history. Anthropic issued DMCA takedowns and accidentally took down thousands of unrelated repositories in the process, drawing further public attention.

The cause was a Bun bug. Anthropic acquired Bun at the end of 2025, and Claude Code is built on top of it. A bug report filed on March 11 (oven-sh/bun#28001) documented that source maps were being served in production mode despite Bun’s documentation stating they should be disabled. The issue remained open when the leak happened. As one commentator on Twitter put it: “accidentally shipping your source map to npm is the kind of mistake that sounds impossible until you remember that a significant portion of the codebase was probably written by the AI you are shipping.”

Anthropic’s official response, given to Axios, was terse: “Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach.” They made no public comment on the architectural findings, the feature flags, or the unreleased capabilities the code revealed.

Everything Is a Tool Call

The standout architectural finding: Claude Code treats every capability as an MCP tool call. The full tool system comprises approximately 40 discrete tools, each individually permission-gated: file read, file write, bash execution, web fetch, LSP integration, MCP server calls, and IDE bridges. Computer Use (mouse, keyboard, screenshot) runs through the same MCP tool-call pipeline as everything else.

Each tool is a standalone module with its own permission boundary. The orchestration logic is fully formalized: Claude Code discovers available tools from connected MCP servers at startup and treats them identically to its own built-in tools. There is no “special” internal API that built-in tools get but extensions do not.
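A minimal sketch of what "built-in and external tools are treated identically" can look like in code. Every name here (`Tool`, `ToolRegistry`, `registerServer`) is hypothetical and chosen for illustration; none of it is taken from the leaked source. The only detail borrowed from the article is the `mcp__<server>__<tool>` naming pattern that the permission rules refer to.

```typescript
// Hypothetical shapes for illustration; not Claude Code's actual types.
interface Tool {
  name: string;
  source: "builtin" | "mcp";
  run: (input: Record<string, unknown>) => Promise<string>;
}

class ToolRegistry {
  private tools = new Map<string, Tool>();

  // Built-in and external tools go through the same registration path.
  register(tool: Tool): void {
    if (this.tools.has(tool.name)) {
      throw new Error(`duplicate tool: ${tool.name}`);
    }
    this.tools.set(tool.name, tool);
  }

  // Tools discovered from a connected server are namespaced by server name.
  registerServer(server: string, discovered: Omit<Tool, "source">[]): void {
    for (const t of discovered) {
      this.register({
        name: `mcp__${server}__${t.name}`,
        source: "mcp",
        run: t.run,
      });
    }
  }

  // One call path for everything: no branch on tool.source.
  async call(name: string, input: Record<string, unknown>): Promise<string> {
    const tool = this.tools.get(name);
    if (!tool) throw new Error(`unknown tool: ${name}`);
    return tool.run(input);
  }

  names(): string[] {
    return [...this.tools.keys()];
  }
}
```

The point of the sketch is the absence of a special case: `call` never inspects `source`, so whatever checks wrap it apply equally to a file-read tool and to a tool from a third-party server.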

The permission system follows a Deny > Ask > Allow precedence model:

| Priority | Type | Behavior |
|---|---|---|
| Highest | Deny | Blocks execution outright |
| Middle | Ask | Prompts user for approval before running |
| Lowest | Allow | Auto-approved, runs without prompting |

MCP-specific patterns support wildcards - you can allowlist all tools from a trusted server (like mcp__github__*) while requiring approval for tools from less trusted sources. The configuration lives in settings.json under autoMode.soft_deny and autoMode.allow, using natural-language rules that the model interprets at runtime.
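The precedence order can be sketched as a small rule evaluator. This is an illustration under stated assumptions, not the leaked implementation: the `Rules` shape, the `decide` function, and the fallback to "ask" for unmatched tools are all hypothetical, and the sketch uses plain `*` wildcard patterns rather than the natural-language rules the article describes.

```typescript
type Decision = "deny" | "ask" | "allow";

// Hypothetical rule shape: lists of tool-name patterns, "*" is a wildcard.
interface Rules {
  deny: string[];
  ask: string[];
  allow: string[];
}

// Convert a wildcard pattern to an anchored RegExp, escaping everything but "*".
function toRegExp(pattern: string): RegExp {
  const escaped = pattern
    .split("*")
    .map((part) => part.replace(/[.+?^${}()|[\]\\]/g, "\\$&"))
    .join(".*");
  return new RegExp(`^${escaped}$`);
}

function matches(patterns: string[], tool: string): boolean {
  return patterns.some((p) => toRegExp(p).test(tool));
}

// Deny is checked first, then Ask, then Allow, so a deny rule always wins.
function decide(rules: Rules, tool: string): Decision {
  if (matches(rules.deny, tool)) return "deny";
  if (matches(rules.ask, tool)) return "ask";
  if (matches(rules.allow, tool)) return "allow";
  return "ask"; // assumption: tools with no matching rule prompt the user
}

const rules: Rules = {
  deny: ["mcp__untrusted__delete_*"],
  ask: ["mcp__untrusted__*"],
  allow: ["mcp__github__*", "Read"],
};
```

With these example rules, every tool from the `github` server is auto-approved, tools from the `untrusted` server prompt, and its `delete_*` tools are blocked even though they also match the broader ask pattern.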

In practice, this means a third-party MCP server added via configuration runs through the same execution path and permission checks as Anthropic’s own file-read tool. Good for extensibility. Less good when you consider that a malicious MCP server published on npm and added to someone’s agent config would also inherit that same permission level, making MCP supply-chain attacks a real concern.

Every bash command additionally runs through 23 numbered security checks in bashSecurity.ts, including 18 blocked Zsh builtins, defense against Zsh equals expansion (=curl bypassing permission checks for curl), unicode zero-width space injection, IFS null-byte injection, and a malformed token bypass discovered during HackerOne review. According to Alex Kim’s analysis, no other tool has a Zsh threat model this specific.
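To make a few of these check categories concrete, here is a rough sketch of how such pre-execution checks might be written. It is not the contents of bashSecurity.ts: the function name, the regular expressions, and the finding labels are all assumptions, and a real implementation would need proper shell tokenization rather than pattern matching.

```typescript
// Illustrative only: three check categories, not the 23 checks in the leaked file.

// Invisible characters that can hide or split a command name.
const ZERO_WIDTH = /[\u200B\u200C\u200D\u2060\uFEFF]/;

// In Zsh, a word that starts with "=" expands to the path of the named
// command (=curl becomes the full path to curl), so a name-based permission
// check on "curl" would never see it. Match "=" at the start of a word.
const EQUALS_EXPANSION = /(^|[\s;|&(])=[A-Za-z_]/;

function checkCommand(cmd: string): string[] {
  const findings: string[] = [];
  if (ZERO_WIDTH.test(cmd)) findings.push("zero-width character");
  if (cmd.includes("\0")) findings.push("null byte");
  if (EQUALS_EXPANSION.test(cmd)) findings.push("zsh equals expansion");
  return findings;
}
```

An ordinary assignment such as `FOO=bar ls` is not flagged, because the `=` there does not begin a word; `=curl http://example.com` is.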

Hidden Features Behind the Flags

The leaked code contained 44 compile-time feature flags, at least 20 of which gate capabilities that were fully built but unreleased. Three stand out.

KAIROS is referenced over 150 times in the source. Named after the Ancient Greek concept of “the opportune moment,” KAIROS represents an autonomous daemon mode that transforms Claude Code from a request-response tool into a persistent background process. It runs 24/7 without user input, receiving heartbeat prompts asking “anything worth doing right now?” It has three exclusive tools: push notifications, file delivery, and pull request subscriptions. An autoDream process consolidates learning overnight via append-only daily logs - a form of “memory distillation” where the agent merges observations, removes contradictions, and converts vague insights into concrete facts.

The implementation is heavily gated, and as of April 2026 Anthropic has not publicly acknowledged KAIROS or launched any autonomous background features. But the scaffolding is there, complete with GitHub webhook subscriptions, background daemon workers, and cron-scheduled refreshes every five minutes.

Anti-distillation is the second major revelation. When the ANTI_DISTILLATION_CC flag is enabled, Claude Code sends anti_distillation: ['fake_tools'] in API requests, telling the server to silently inject decoy tool definitions into the system prompt. The purpose: if someone records Claude Code’s API traffic to train a competing model, the fake tools pollute that training data. Anthropic had published a report in February 2026 documenting industrial-scale distillation campaigns by three Chinese AI labs that generated over 16 million exchanges, and this mechanism was designed to make that kind of theft less effective.

A second anti-distillation mechanism - connector-text summarization - buffers the assistant’s reasoning between tool calls, summarizes it, and returns the summary with a cryptographic signature. Anyone recording API traffic gets summaries instead of the full reasoning chain.

The community reaction was mixed. The technical community acknowledged Anthropic’s right to protect its intellectual property. But the mechanism - silently injecting false information into API responses - struck many as something that should have been disclosed. As one analysis on Winbuzzer noted, “the tool definitions a developer sees should match the tools that actually exist.” Alex Kim’s deeper technical analysis found the protections were trivially bypassable: a MITM proxy stripping the anti_distillation field from request bodies would defeat it, and setting the CLAUDE_CODE_DISABLE_EXPERIMENTAL_BETAS environment variable disables the whole thing.

Undercover Mode (undercover.ts) actively instructs Claude Code to never mention internal codenames like “Capybara” or “Tengu” when used in non-internal repositories. The module has no force-off switch, and that omission is hard-coded: you can force the mode on but never force it off. In external builds, the entire function gets dead-code-eliminated to trivial returns. This means AI-authored commits and PRs from Anthropic employees working on open source projects carry no indication that an AI wrote them.

Desktop Extensions and the .mcpb Ecosystem

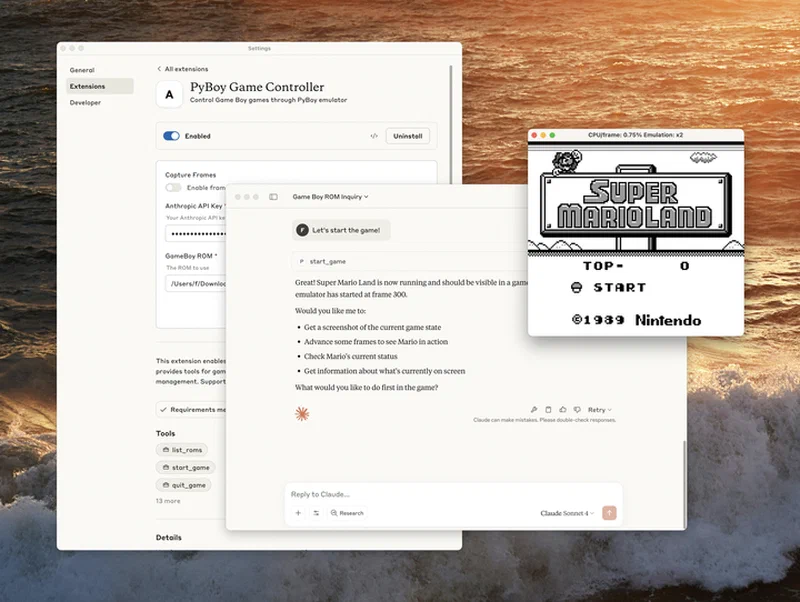

Because Claude Code’s architecture treats all MCP servers identically, the Desktop Extensions format (.mcpb) becomes more significant than a typical plugin system. Desktop Extensions are ZIP archives containing a local MCP server bundled with a manifest.json and all dependencies - similar to browser extensions but for AI tools.

One-click installation in Claude Desktop solves the biggest MCP adoption barrier: getting non-technical users past manual server configuration. The Connectors panel and Extensions marketplace let users install MCP servers without editing JSON config files.

For enterprise deployment, Team and Enterprise plan owners can manage extension access with fine-grained admin controls, upload custom .mcpb files for team-wide distribution, and enable or disable public extensions based on security policy. Organizations are building MCPBs as secure proxies to internal MCP servers, documentation access tools, and development tool connectors while maintaining their existing security architecture.

Anthropic open-sourced the Desktop Extension specification, toolchain, and schemas, hoping other AI desktop apps adopt the format. Distribution is evolving: mpak functions as a public registry where developers can publish and discover MCP bundles, operating like npm for MCP servers. But the ecosystem is still young, and most distribution remains somewhat ad-hoc compared to mature package registries.

A .mcpb extension, once installed, gets the same execution path and permission model as Claude Code’s own built-in capabilities. First-party and third-party tools run through the same pipeline.

MCP at Production Scale

Forty-plus production tool modules running through a single protocol is a strong data point that MCP works beyond demo projects. A comment in autoCompact.ts reveals the kind of scale involved: before a three-line fix was applied, Claude Code was wasting approximately 250,000 API calls per day globally due to a compaction retry bug. That is the volume of a production system.

Since built-in and external tools share a permission model, extensions are not second-class citizens. A third party can build with the same primitives Anthropic uses internally.

Other AI coding tools are adopting MCP too, though with varying depth. Cursor and GitHub Copilot are adding MCP support, while OpenAI’s Codex CLI already has MCP integration. As the Generect analysis puts it, MCP is “becoming the USB-C of AI agent tooling.” But there is a real difference between building on MCP from day one, as Claude Code did, and adding MCP support to an existing architecture after the fact.

| Tool | MCP Approach | Integration Depth |
|---|---|---|
| Claude Code | Native - all capabilities are MCP tool calls | Every feature runs through MCP |
| Cursor | Adding MCP support | Plugin-level, not core architecture |
| Codex CLI | Supports MCP | Structured tool calling, not full architecture |
| GitHub Copilot | Adding MCP support | Extension-level integration |

Security is the obvious weak point. When every extension gets the same privilege model as built-in tools, supply-chain attacks against MCP servers carry real weight. Package signing, server attestation, and runtime sandboxing are all needed but not yet standardized. Claude Code already implements client attestation at the HTTP transport level - API requests include a placeholder that Bun’s native Zig HTTP stack overwrites with a computed hash, proving the request came from a genuine Claude Code binary. But server-side MCP server attestation for third-party extensions does not yet exist as a standard.

KAIROS points to the likely next step: always-on autonomous agents running the same MCP tool infrastructure to monitor, learn, and act without human prompting. The leaked architecture shows the foundation can support it. The security and governance frameworks are still catching up.

The core takeaway from the leak is architectural. Building everything as a tool call from day one gave Claude Code an architecture that can scale from a single file read to an autonomous agent fleet without structural changes. That same uniformity is also what makes a compromised MCP server so dangerous. The protocol’s strength and its risk are the same thing.