MiniMax M2.7: Model That Almost Matches Claude Opus 4.6

MiniMax M2.7, released in April 2026, is a 230B-parameter open-weights reasoning model (Mixture-of-Experts, 10B active, 8 of 256 experts routed per token) that scores 50 on the Artificial Analysis Intelligence Index. That lands it on par with Sonnet 4.6 across coding and agent benchmarks and within a couple of points of Claude Opus 4.6. Weights are on HuggingFace at MiniMaxAI/MiniMax-M2.7, the hosted API runs $0.30 / $1.20 per million input/output tokens (roughly a tenth of Opus), and if you have a 128GB-unified-memory Mac Studio, an AMD Strix Halo box, or an NVIDIA DGX Spark, you can run it offline with zero token bills. Two big asterisks: the M2.7 license is not the permissive M2.5 license (commercial use is restricted), and there is no multimodal support. For homelabbers and agent builders who are text-only and non-commercial, M2.7 is the best locally runnable Opus-class option shipped so far.

Download the weights: huggingface.co/MiniMaxAI/MiniMax-M2.7

What Is MiniMax M2.7? Open Weights, MoE Architecture, and “Self-Evolution”

Most early coverage described M2.7 as a proprietary API model. The weights and config.json published on HuggingFace tell a different story: M2.7 is an open-weights iteration of M2.5 with the same architecture and a sharper focus on tool-using agent behavior.

Weights dropped on HuggingFace the weekend of release, the API and Agent product went live simultaneously, and the OpenRoom interactive agent system was open-sourced alongside. The config.json reveals where the speed comes from: 230B total parameters, roughly 10B active per token, 8 experts selected out of 256 on each forward pass. Because only 10B parameters activate per token, inference throughput behaves closer to a 10B dense model than a 230B one. That is why self-hosting M2.7 on unified-memory hardware is practical at all.
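The arithmetic behind that claim is easy to check. What follows is a minimal sketch using only the figures quoted above (230B total, roughly 10B active, 8 of 256 experts); the constant names are illustrative and are not fields read from config.json.

```python
# Figures quoted in the article; the names are illustrative, not config.json keys.
TOTAL_PARAMS_B = 230       # total parameters, in billions
ACTIVE_PARAMS_B = 10       # approximate parameters used per token, in billions
EXPERTS_TOTAL = 256        # experts available to the router
EXPERTS_PER_TOKEN = 8      # experts selected for each token


def fraction(part: float, whole: float) -> float:
    """Return part/whole as a fraction between 0 and 1."""
    return part / whole


active_share = fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)
expert_share = fraction(EXPERTS_PER_TOKEN, EXPERTS_TOTAL)

print(f"Parameters touched per token: {active_share:.1%}")
print(f"Experts routed per token:     {expert_share:.1%}")
```

Both shares come out in the low single digits, roughly 4% of parameters and 3% of experts. The full 230B still has to sit in memory, which is why the hardware discussion is about capacity as much as speed.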

Other specs worth pinning down:

- Context window is about 204.8K tokens, roughly 307 A4 pages of input.

- It is a reasoning model with native chain-of-thought. Evaluation runs emit about 3.3x as many tokens as non-reasoning peers (roughly 87M vs 26M on average), and time-to-first-token sits around 2.91s.

- No multimodal. Text in, text out. No images, no audio, no video. For workflows that rely on screenshot analysis or document OCR, this is a blind spot you cannot ignore.

What “self-evolving” actually means

MiniMax’s marketing describes M2.7 as self-evolving. The mechanism is less mystical than it sounds: an autonomous training loop where M2.7 edits its own agent harness (tool definitions, prompt scaffolds, retry logic), runs reinforcement-learning evaluations on each variant, and merges the winners. MiniMax reports that more than 100 rounds of this loop produced a +30% lift on internal evaluations without human edits to the harness. This is not “the model fine-tunes itself in production” and it is not online learning. It is a training-time scaffolding search, bolted onto the RL pipeline, run once, and then frozen into the released checkpoint.
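To make the shape of that loop concrete, here is a toy sketch of a propose, evaluate, merge-the-winner search. It is an illustration under stated assumptions, not MiniMax's pipeline: the `Harness` dictionary, `propose_variant`, and `toy_eval` are all hypothetical stand-ins, and in the real loop the model itself writes the harness edits and the score comes from RL evaluations.

```python
import random
from typing import Callable, Dict, Tuple

# Hypothetical stand-in for tool definitions, prompt scaffolds, and retry logic.
Harness = Dict[str, int]


def propose_variant(harness: Harness, rng: random.Random) -> Harness:
    """Nudge one setting up or down; a placeholder for a model-written edit."""
    variant = dict(harness)
    key = rng.choice(sorted(variant))
    variant[key] = max(0, variant[key] + rng.choice([-1, 1]))
    return variant


def evolve(
    harness: Harness,
    evaluate: Callable[[Harness], float],
    rounds: int,
    seed: int = 0,
) -> Tuple[Harness, float]:
    """Run a fixed number of rounds, keeping a variant only if it scores higher."""
    rng = random.Random(seed)
    best, best_score = harness, evaluate(harness)
    for _ in range(rounds):
        candidate = propose_variant(best, rng)
        score = evaluate(candidate)
        if score > best_score:  # merge the winner, discard everything else
            best, best_score = candidate, score
    return best, best_score  # the result is frozen; nothing changes after this


def toy_eval(h: Harness) -> float:
    """Hypothetical score that peaks at max_retries=3 and prompt_examples=2."""
    return -abs(h["max_retries"] - 3) - abs(h["prompt_examples"] - 2)


start = {"max_retries": 0, "prompt_examples": 0}
final, score = evolve(start, toy_eval, rounds=100)
print(final, score)
```

The point of the sketch is the control flow: the search runs a bounded number of rounds at training time and returns one fixed harness, which matches the "run once, then frozen" description rather than any form of online learning.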

Vendor context: MiniMax is a Shanghai-based, Hong Kong-listed AI lab valued north of $2B, with backers including Alibaba and Tencent. They ship large open-weights models on a regular cadence rather than operating as a one-off research outfit, and M2.7 follows the well-received M2.5 release from February.

The difference between M2.5 and M2.7 is narrower than the version bump suggests: same size, same chat template, same MoE structure. What changed is the training data mix and the self-evolution loop, both targeted at tool-use and agent workflows rather than raw knowledge.

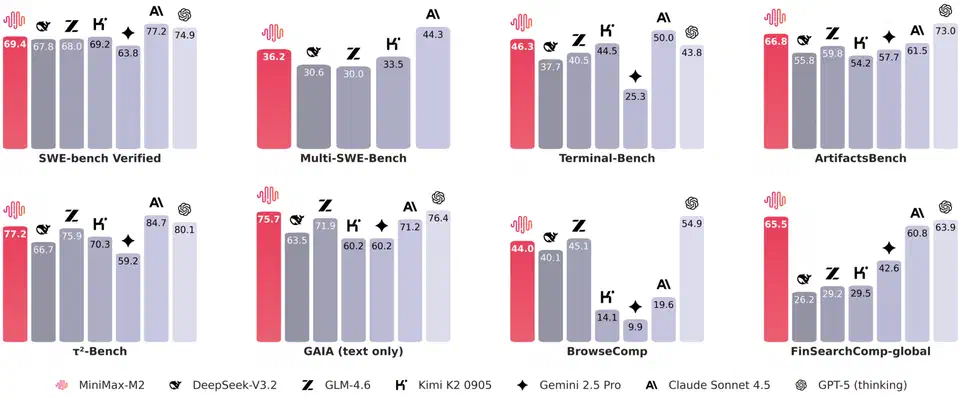

Benchmarks That Actually Matter, and Where M2.7 Sits

MiniMax’s own benchmarks put M2.7 in Opus-class territory. Independent analysis from Artificial Analysis and head-to-heads run by Kilo.ai tell a more nuanced story: on SWE-bench-style coding tasks the model is excellent, on knowledge-worker and office workflows it still trails Sonnet and Opus by several points.

Here is the scoreboard, pulled from MiniMax’s technical report and cross-checked against Artificial Analysis:

| Benchmark | MiniMax M2.7 | Sonnet 4.6 | Opus 4.6 | GPT-5.4 | GLM-5 |
|---|---|---|---|---|---|
| Intelligence Index (AA v4.0) | 50 | 48 | 52 | 51 | 50 |
| SWE-bench Verified | 78.0% | 74.1% | 55.0% | 76.5% | 72.8% |
| SWE-Pro | 56.2% | 55.8% | 58.1% | 57.4% | 53.9% |
| VIBE-Pro (end-to-end) | 55.6% | 54.2% | 57.0% | 56.8% | 52.1% |
| Terminal Bench 2 | 57.0% | 54.6% | 56.3% | 58.2% | 52.8% |
| SWE Multilingual | 76.5% | 73.9% | 75.1% | 77.0% | 71.4% |
| PinchBench (agents) | 86.2% | 84.1% | 85.9% | 86.4% | 86.4% |
| Toolathon | 46.3% | 45.1% | 48.0% | 47.6% | 44.2% |
| MLE Bench Lite | 66.6% | 63.2% | 65.8% | 67.1% | 61.0% |
| GDPval-AA ELO | 1495 | 1472 | 1510 | 1502 | 1468 |
| Office / Knowledge Worker | 62.1% | 68.9% | 70.3% | 69.8% | 60.4% |

The SWE-bench Verified number is the headline. 78% beats every other model on the list and opens a real gap over Opus 4.6’s 55%. If your workload is actually SWE-bench-shaped (pull-request-sized edits to real repositories with tests as oracles), the “Opus killer” framing earns its keep. On the office and knowledge-worker benchmark, M2.7 sits about seven points below Sonnet 4.6 and about eight below Opus 4.6, so for docs, spreadsheets, slide generation, and the general shape of white-collar deskwork, Opus remains the safer pick.
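A quick way to see where M2.7 leads and trails Opus 4.6 is to compute the per-benchmark gap. This sketch copies the M2.7 and Opus 4.6 columns from the table above and leaves out GDPval-AA because ELO is on a different scale; the dictionary layout and function name are just illustrative.

```python
from typing import Dict, List, Tuple

# (M2.7, Opus 4.6) scores copied from the table; GDPval-AA ELO is omitted.
SCORES: Dict[str, Tuple[float, float]] = {
    "Intelligence Index (AA v4.0)": (50.0, 52.0),
    "SWE-bench Verified": (78.0, 55.0),
    "SWE-Pro": (56.2, 58.1),
    "VIBE-Pro (end-to-end)": (55.6, 57.0),
    "Terminal Bench 2": (57.0, 56.3),
    "SWE Multilingual": (76.5, 75.1),
    "PinchBench (agents)": (86.2, 85.9),
    "Toolathon": (46.3, 48.0),
    "MLE Bench Lite": (66.6, 65.8),
    "Office / Knowledge Worker": (62.1, 70.3),
}


def deltas_vs_opus(scores: Dict[str, Tuple[float, float]]) -> List[Tuple[str, float]]:
    """Return (benchmark, M2.7 minus Opus) pairs, largest M2.7 lead first."""
    pairs = [(name, round(m27 - opus, 1)) for name, (m27, opus) in scores.items()]
    return sorted(pairs, key=lambda pair: pair[1], reverse=True)


ranked = deltas_vs_opus(SCORES)
for name, delta in ranked:
    print(f"{name:<30} {delta:+.1f}")
```

On these ten rows the split is five leads and five deficits for M2.7, with SWE-bench Verified the largest lead and Office / Knowledge Worker the largest deficit.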

One benchmark deserves a skeptical eye: MM Claw Bench at 62.7%. That is MiniMax’s own evaluation, purpose-built around tasks you would run inside agentic coding tools like OpenClaw or Claude Code, explicitly designed to measure “can we rip Claude out of these workflows?” The framing is honest, but it is not a neutral third-party benchmark and should be read accordingly.

Throughput and latency complete the picture. The model sustains about 45.7 tokens/sec with a 2.91s time-to-first-token. That is reasoning-model speed, not chat speed. For IDE-inline autocomplete or voice agents those numbers disqualify it. For async batch work they are fine.
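Those two numbers translate directly into wall-clock time. A rough estimate, assuming throughput stays constant at the quoted rate and that reasoning tokens count toward the output total (both simplifications):

```python
TTFT_S = 2.91          # time-to-first-token quoted above, in seconds
TOKENS_PER_S = 45.7    # sustained output rate quoted above


def response_time(output_tokens: int,
                  ttft_s: float = TTFT_S,
                  tokens_per_s: float = TOKENS_PER_S) -> float:
    """Estimate seconds until the last token arrives, assuming a constant rate."""
    return ttft_s + output_tokens / tokens_per_s


for n in (50, 500, 5000):
    print(f"{n:>5} output tokens -> about {response_time(n):.1f} s")
```

A 500-token reply lands near 14 seconds and a 5,000-token chain-of-thought trace takes nearly two minutes, which is why the model fits async batch work far better than inline autocomplete.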

One-sentence summary if you need to paste it into a Slack thread: on par with Sonnet 4.6 on most things, within a couple of points of Opus 4.6 on coding, a few points behind on office and knowledge work, no multimodal.

Self-Hosting M2.7: Hardware, Quantization, and What Actually Runs

M2.7 is open weights, so the real question for anyone running homelab inference is what it takes to host 230B parameters on your own metal. More than a consumer laptop, less than a rack.

Because M2.7 is architecturally identical to M2.5, the quantization ladder mirrors the M2.5 GGUF ladder published by Unsloth:

| Quantization | Disk Size | VRAM Needed | Notes |
|---|---|---|---|
| FP16 full | ~500 GB | ~460 GB | Reference quality, data-center territory only |
| Q8_0 | ~261 GB | ~250 GB | Near-lossless, still enterprise hardware |
| Q4_K_XL | ~160 GB | ~150 GB | Sweet spot: dramatic accuracy recovery vs Q4 |
| Q2_K_L | ~100 GB | ~96 GB | Realistic consumer floor on unified-memory gear |
| Q1 | ~60 GB | ~56 GB | Accuracy collapses, don’t bother |

On drop day there were no community GGUFs at all. Based on the M2.5 cadence, Unsloth and Bartowski quants should land within a few days, so plan your deployment timing accordingly.

Hardware platforms that work in 2026

Four realistic self-hosting targets exist today, and the picture has improved significantly since late 2025:

- Mac Studio with 128GB unified memory is viable for Q2 and Q3 quants thanks to Apple’s shared-memory architecture and mature MLX / llama.cpp support. Expect 8-12 tokens/sec on Q2_K_L at short contexts, dropping as the KV cache fills.

- AMD Strix Halo boxes (128GB unified memory) from HP, Framework, and various OEMs make 100GB+ models feasible on PC hardware without a GPU cluster. Performance sits in the same league as the Mac Studio, with better Linux tooling and less polished consumer software.

- NVIDIA DGX Spark loads M2.7 comfortably, but it is memory-bandwidth-limited and tokens/sec will not match a dedicated GPU cluster. MoE helps here: only 10B params activate per token, so the bandwidth cost per token is bounded.

- Dual-RTX-5090 rigs with 64GB combined VRAM fall short even of the ~96 GB that Q2_K_L needs, so they require CPU+GPU offload, which kills throughput on reasoning models that generate long CoT traces.
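The platform notes above reduce to a capacity check against the quantization table. A minimal sketch, using the table's approximate "VRAM Needed" figures; the `headroom_gb` parameter is an assumption added here to represent memory reserved for the OS and KV cache, and its value is yours to pick.

```python
from typing import Dict, List

# Approximate "VRAM Needed" values from the quantization table, in GB.
QUANT_MEMORY_GB: Dict[str, float] = {
    "FP16 full": 460,
    "Q8_0": 250,
    "Q4_K_XL": 150,
    "Q2_K_L": 96,
    "Q1": 56,
}


def quants_that_fit(memory_gb: float, headroom_gb: float = 0.0) -> List[str]:
    """List quantizations whose weights fit in memory after reserving headroom."""
    budget = memory_gb - headroom_gb
    return [name for name, need in QUANT_MEMORY_GB.items() if need <= budget]


print("128GB unified memory:", quants_that_fit(128))
print("64GB combined VRAM:  ", quants_that_fit(64))
print("128GB, 40GB reserved:", quants_that_fit(128, headroom_gb=40))
```

With no headroom, 128GB admits Q2_K_L and Q1, while 64GB admits only Q1, which the table says is not worth running. Reserving memory for long contexts tightens the 128GB case further, so treat the result as an upper bound.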

The serving stack splits along the usual lines. Local inference uses llama.cpp, Ollama, LM Studio, and AnythingLLM, all of which consume GGUFs. Production serving uses safetensors with vLLM, optionally with tensor-parallel sharding across multiple GPUs.
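Whichever runtime you choose, client code can stay the same, since both llama.cpp's server and vLLM expose an OpenAI-compatible chat completions route. The sketch below builds such a request with the standard library only. The base URL, port, and registered model name are assumptions for illustration; use whatever your server actually reports.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000/v1"    # assumption: local OpenAI-compatible server
MODEL_NAME = "MiniMaxAI/MiniMax-M2.7"    # assumption: name the server registered


def build_chat_request(prompt: str, max_tokens: int = 1024) -> urllib.request.Request:
    """Build a POST request for the /chat/completions route without sending it."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,  # caps total generated tokens for this request
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout_s: float = 600.0) -> str:
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    req = build_chat_request("Summarize the failing test in one sentence.")
    print(req.get_method(), req.full_url)
```

The generous timeout in `send` is deliberate: a reasoning model on local hardware can take minutes to finish a long trace, and a default client timeout may cut it off.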

A worked configuration for anyone pricing this out: a 128GB Mac Studio (M3 Ultra) running Q2_K_L of M2.7 should sustain roughly 10 tokens/sec on short contexts. Time-to-first-token will sit between 4 and 6 seconds including the model’s native chain-of-thought preamble. Context fill at 100K+ tokens slows generation substantially, so budget accordingly for long-repo reviews.

The License Catch: Why M2.7 Is Not a Drop-In Replacement for M2.5

The single biggest gotcha in the M2.7 release is the license change, and it is the piece of information most people searching for “MiniMax M2.7 license” actually want. M2.5 shipped under a broadly permissive modified-MIT license and saw wide adoption as a token-bill replacement for Claude in third-party tools. M2.7 did not repeat that decision. Its license materially narrows commercial use, and the r/LocalLLaMA reaction flagged this as the biggest downside of the release.

Several grey areas are worth thinking through before you commit:

- Does using M2.7 locally to accelerate your own day job at a for-profit company count as “commercial”?

- Is running it inside a company-internal agent “redistribution”?

- If your team self-hosts M2.7 on a private VLAN and exposes an OpenAI-compatible endpoint to other internal teams, is that a hosted service?

The license text, not community interpretation, is what binds you. Read the HuggingFace license file directly before making a call. The likely MiniMax intent is preventing third parties from reselling M2.7 as a hosted API endpoint, but the actual license wording is what matters in a legal review.

Practical guidance for the common cases:

- Personal and hobbyist use is clearly fine.

- Small-team internal tooling is probably fine, but have someone read the license.

- SaaS reselling or embedding into a paid product requires a commercial license conversation with MiniMax.

Why this matters for the “Opus killer” framing: if you cannot legally use M2.7 in the workflow where you want to replace Opus, the cost-per-token comparison is moot. Read the license before you build.

In the broader 2026 open-weights picture, M2.7 now sits in a specific bucket. Llama 4.x, Qwen3.5, GLM-5, and the rest have different license terms, and several of them remain permissive. M2.7 belongs in the “open weights but restrictive” group, distinct from “open weights and permissive.” That distinction matters when you are picking a model to build a product on.

API or Self-Host: A Workload-Fit Decision Guide

Given the open weights, the restrictive license, the 96GB-VRAM hardware floor, and the reasoning-model latency profile, the question of whether to use M2.7 at all, and if so via API or self-hosted, depends heavily on workload.

Use the hosted API when:

- Your workload is commercial and the license fit is unresolved.

- You need OpenRoom, Agent Teams, or dynamic tool search out of the box.

- Your throughput needs are bursty and a 128GB Mac Studio would sit idle most of the day.

- Your team cannot justify the capital expense of unified-memory hardware.

Self-host when:

- You are non-commercial, or the license clearly fits your use case.

- Your workload is async and batched, not interactive.

- You already have 128GB unified memory or better on hand.

- You want zero token bills on long-running agent tasks.

Strong-fit workloads (either path): headless coding agents, overnight RL or ML research pipelines, log-analysis and incident-response agents, long-context repo review, batch refactoring.

Weak-fit workloads: IDE-inline autocomplete (TTFT too high), low-latency voice agents, multimodal workflows (no image or audio input), regulated-industry compliance work until MiniMax publishes enterprise SLAs.
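The criteria above can be condensed into a small decision function. This is a deliberate simplification of the guide, not a substitute for reading the license; the `Workload` fields and the ordering of the checks are choices made for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    commercial: bool          # used in or for a commercial product
    license_cleared: bool     # license fit confirmed for this use
    needs_multimodal: bool    # requires image, audio, or video input
    latency_sensitive: bool   # autocomplete, voice, or other interactive use
    has_128gb_unified: bool   # 128GB unified memory (or better) on hand
    bursty: bool              # hardware would sit idle most of the day


def recommend(w: Workload) -> str:
    """Map a workload to one of: 'weak fit', 'hosted API', 'self-host'."""
    # Weak-fit cases rule the model out regardless of how it is served.
    if w.needs_multimodal or w.latency_sensitive:
        return "weak fit"
    # An unresolved license on commercial work points to the hosted API.
    if w.commercial and not w.license_cleared:
        return "hosted API"
    # Missing or underused hardware also points to the hosted API.
    if not w.has_128gb_unified or w.bursty:
        return "hosted API"
    return "self-host"


hobbyist = Workload(False, True, False, False, True, False)
print(recommend(hobbyist))
```

Putting the weak-fit checks first reflects the guide's logic: no serving choice fixes missing multimodality or a multi-second time-to-first-token.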

Migration checklist for teams moving off Claude or GPT

If you are planning a cutover, work through this list before you point production traffic at M2.7:

- Audit your tool schemas for dynamic tool search compatibility.

- Shorten your system prompts. Reasoning models prefer to reason instead of being spoon-fed rules.

- Set explicit thinking-token budgets to cap output-token spend. Without a cap, you will pay for long CoT traces whether you need them or not.

- Verify your license fit in writing before cutting over, not after.

- Benchmark on your actual workload, not on SWE-bench. Your pass rate will differ.
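The thinking-budget item in the checklist is easiest to appreciate with numbers. This sketch prices a single request at the hosted rates quoted earlier ($0.30 / $1.20 per million input/output tokens), assuming reasoning tokens are billed at the output rate. The token counts in the example are hypothetical.

```python
INPUT_USD_PER_M = 0.30    # hosted API price per million input tokens
OUTPUT_USD_PER_M = 1.20   # hosted API price per million output tokens


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request; output_tokens includes reasoning tokens."""
    return (input_tokens / 1_000_000 * INPUT_USD_PER_M
            + output_tokens / 1_000_000 * OUTPUT_USD_PER_M)


# Hypothetical agent step: 20K tokens of context and a 1K-token final answer.
prompt_tokens, answer_tokens = 20_000, 1_000
uncapped = request_cost(prompt_tokens, answer_tokens + 15_000)  # long CoT trace
capped = request_cost(prompt_tokens, answer_tokens + 4_000)     # 4K thinking budget

print(f"uncapped: ${uncapped:.4f}  capped: ${capped:.4f}")
```

With these made-up counts the uncapped step costs about 2.5 cents and the capped one about 1.2 cents. Each call is cheap, but an agent loop that makes thousands of calls multiplies the difference.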

Open questions to monitor

A few threads are still live and worth watching over the next couple of months:

- GGUF quantization quality from Unsloth and Bartowski once the dust settles.

- Independent benchmarks beyond MiniMax’s own numbers, especially office/knowledge-worker evaluations.

- Evaluation-contamination audits of the self-evolution loop.

- Future multimodal or smaller-variant releases (a 30B-active “M2.7-mini” is rumored but not announced).

The bottom line

If you are non-commercial and text-only, and your hardware can carry it, M2.7 is the best locally runnable Opus-class model available in April 2026. The SWE-bench numbers hold up under scrutiny, the MoE architecture makes 128GB unified memory enough to serve it, and the hosted API at $0.30 / $1.20 per million tokens is priced aggressively enough to be worth trying even if you never self-host.

If you are commercial, interactive, or multimodal, stay on Opus, Sonnet, or GPT-5.4 for now and watch the next MiniMax release. Each M2.x release has closed the gap, and M2.8 or M3 may well be the one where the license, the multimodality, and the latency all line up.

Until then, M2.7 is a strong open-weights reasoning model with a couple of footnotes you have to read before you ship.