Claude Code vs Cursor vs GitHub Copilot: Which AI Coding Tool Fits Your Workflow (2026)

Claude Code, Cursor, and GitHub Copilot take three very different shots at AI-assisted coding: a terminal-native agent, an AI-first IDE, and a multi-IDE plugin. Claude Code leads on raw skill and complex multi-file work, scoring highest on SWE-bench at about 74-81%. Cursor offers the best editor experience with background agents and cloud automation. GitHub Copilot has the lowest entry price at $10/month and the widest IDE support. Most pro developers now mix two or more tools, with Claude Code plus Cursor as the top pair per the JetBrains AI Pulse survey from January 2026.

Three Paradigms of AI-Assisted Coding

These tools aren’t swappable. Each is built around a different way of working with it. Knowing the design tells you which jobs a tool will do well and where it will struggle.

Claude Code is a terminal-native agent CLI. It lives in your shell, reads your whole codebase via agent search, and works on its own across dozens of files. It plugs into GitHub, GitLab, and shell tools to run the full dev loop: reading issues, writing code, running tests, committing, and opening PRs. Its 1M-token context window (GA since March 2026) holds huge codebases in working memory. Agent Teams, a beta feature from February 2026, lets you orchestrate multiple Claude Code sessions as a team: a lead agent plus helpers, each in its own context. It works with any editor since it never touches your IDE.

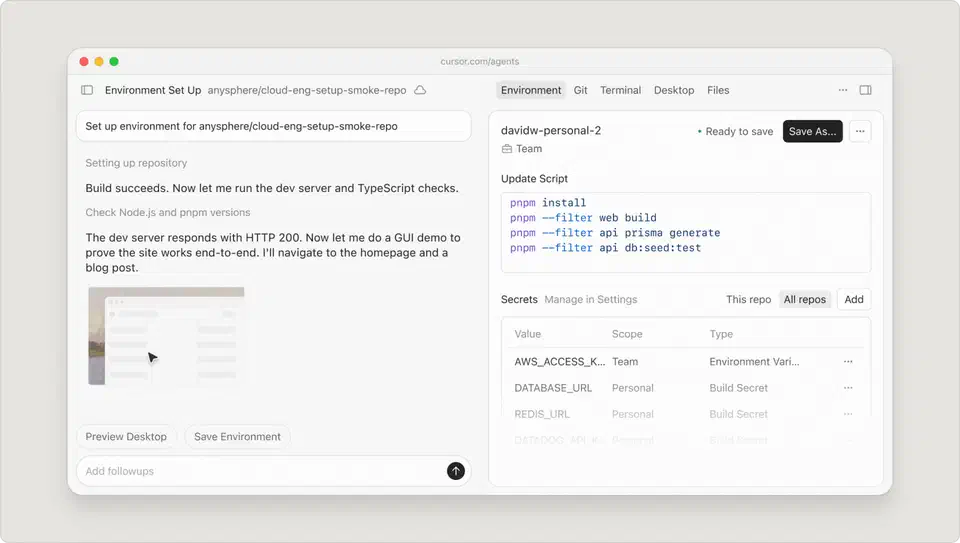

Cursor is a VS Code fork rebuilt around AI from the ground up. It’s a full editor made for AI-first coding, not a plugin bolted on. Cursor 3.0, out in early April 2026, puts agents at the center, running in parallel across repos and setups. Its Supermaven autocomplete hits a 72% accept rate. Composer adds visual multi-file editing. Background agents (GA since late 2025) run on cloud Ubuntu VMs, clone your repo, do the work, and open PRs without touching your laptop. BugBot (February 2026) reviews PRs on its own and can spin up a cloud agent to fix them: 35% or more of its autofix proposals get merged. Cursor Automations fire agents on a schedule or from outside events.

GitHub Copilot is a plugin-based helper that runs in VS Code, JetBrains, Visual Studio, Neovim, and Xcode. Agent mode hit GA in March 2026 on VS Code and JetBrains. It can build files, edit code, run tests, and loop on its own. The coding agent opens PRs from issues with no human in the loop. Semantic code search, new in 2026, finds related code by meaning, not just by keyword. Custom agents get full workspace context, tool access, and MCP links. Its edge is ecosystem reach: it works where you already code.

Picking one comes down to how you like to work with code: from a terminal, a visual editor, or whatever IDE you already use.

Pricing Breakdown

Pricing has split wide open. Each tool targets a different price tier. Hidden costs and credit pools make a clean comparison harder than the sticker prices suggest.

| Feature | Claude Code | Cursor | GitHub Copilot |
|---|---|---|---|
| Free tier | No | Yes (limited) | Yes (2,000 completions + 50 premium requests/mo) |
| Entry paid tier | $20/mo (Pro) | $20/mo (Pro) | $10/mo (Pro) |
| Mid tier | $100/mo (Max 5x) | $60/mo (Pro+) | $39/mo (Pro+) |
| Top individual tier | $200/mo (Max 20x) | $200/mo (Ultra) | $39/mo (Pro+) |
| Team/Business | API pay-per-token | $40/user/mo (Teams) | $19/user/mo (Business) |
| Enterprise | Custom | Custom | $39/user/mo |
| Pricing model | Subscription tiers + API tokens | Credit-based pool | Premium requests |

Claude Code ships in three paid tiers. Pro at $20/month is bundled with the Claude Pro plan. Max 5x at $100/month gives you about 88K tokens per 5-hour window. Max 20x at $200/month gives about 220K tokens per 5-hour window. API pay-per-token is also on offer for teams that build their own flows. Agent Teams burn tokens in a roughly linear way: a 3-agent team uses about 3x the tokens.
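The linear scaling is easy to turn into a rough budget. The sketch below uses the approximate per-window figures quoted above. The 10K-tokens-per-agent workload and the function name are made up for illustration, so treat the output as an estimate, not a quota guarantee.

```python
# Approximate tokens per 5-hour window, as quoted in this article.
WINDOW_TOKENS = {"Max 5x": 88_000, "Max 20x": 220_000}


def team_runs_per_window(tier: str, tokens_per_agent: int, agents: int) -> int:
    """Estimate how many runs fit in one 5-hour window.

    Assumes token use scales linearly with the number of agents,
    so a 3-agent team costs about 3x a single agent.
    """
    if tokens_per_agent <= 0 or agents <= 0:
        raise ValueError("tokens_per_agent and agents must be positive")
    cost_per_run = tokens_per_agent * agents
    return WINDOW_TOKENS[tier] // cost_per_run


# Hypothetical workload: each agent spends about 10K tokens per task.
for tier in WINDOW_TOKENS:
    solo = team_runs_per_window(tier, 10_000, agents=1)
    team = team_runs_per_window(tier, 10_000, agents=3)
    print(f"{tier}: {solo} solo runs or {team} three-agent runs per window")
```

Under these assumptions, a three-agent team gets roughly a third as many runs out of the same window as a single session.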

Cursor opens with a free Hobby tier (capped completions and agent calls). Then come Pro at $20/month ($16/month billed annually, with a $20 credit pool), Pro+ at $60/month (3x credits), Ultra at $200/month (20x credits, priority features), Teams at $40/user/month, and Enterprise with custom pricing. Cursor reworked its pricing in June 2025 into a credit-based model tied to real API costs. Auto mode is free and doesn’t draw from credits. Picking a premium model like Claude Sonnet or GPT-4o does. The rollout went badly: Cursor apologized publicly and issued refunds in July 2025 for surprise charges.
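To see what the credit multipliers mean per dollar, here is a small sketch. It assumes "3x" and "20x" are multiples of the Pro tier's $20 pool, which is one reading of the tier descriptions above and not a confirmed formula. The names are illustrative.

```python
# Monthly price (USD) and credit multiplier per tier, as listed in this article.
# Assumption: multipliers apply to the Pro tier's $20 credit pool.
PRO_POOL_USD = 20
TIERS = {
    "Pro": (20, 1),
    "Pro+": (60, 3),
    "Ultra": (200, 20),
}


def credit_pool(tier: str) -> int:
    """Credit pool in USD for a tier under the stated assumption."""
    _, multiplier = TIERS[tier]
    return PRO_POOL_USD * multiplier


def credits_per_dollar(tier: str) -> float:
    """Dollars of credit received per dollar of subscription."""
    price, _ = TIERS[tier]
    return credit_pool(tier) / price


for tier in TIERS:
    print(f"{tier}: ${credit_pool(tier)} pool, "
          f"{credits_per_dollar(tier):.1f} credits per dollar")
```

If that reading holds, Pro and Pro+ return the same credit per dollar, and only Ultra gives a volume discount.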

GitHub Copilot has the easiest on-ramp. Free is $0 with 2,000 completions plus 50 premium requests a month. Pro is $10/month with no cap on completions and 300 premium requests. Pro+ is $39/month with 1,500 premium requests and all models, including Claude Opus 4 and o3. Business is $19/user/month, and Enterprise is $39/user/month. Chat, agent mode, code review, and cloud agents all burn premium requests at rates that vary by model.
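Because the burn rate varies by model, the useful number is how many interactions a quota covers, not the quota itself. A minimal sketch using the plan figures above. The multiplier is a hypothetical input, since per-model rates aren't given here.

```python
# Plan price (USD/month) and premium-request quota, as listed in this article.
PLANS = {"Free": (0, 50), "Pro": (10, 300), "Pro+": (39, 1_500)}


def sticker_price_per_request(plan: str) -> float:
    """Plan price divided by its premium-request quota."""
    price, quota = PLANS[plan]
    return price / quota


def interactions_per_month(plan: str, multiplier: float) -> int:
    """Interactions a quota covers if each one costs `multiplier`
    premium requests. The multiplier is a hypothetical value."""
    if multiplier <= 0:
        raise ValueError("multiplier must be positive")
    _, quota = PLANS[plan]
    return int(quota // multiplier)


for plan in PLANS:
    print(f"{plan}: ${sticker_price_per_request(plan):.3f} per request, "
          f"{interactions_per_month(plan, 2)} interactions at a 2x rate")
```

The per-request figure ignores everything else a plan includes, such as uncapped completions on Pro, so it only compares the premium-request part of the price.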

Copilot Free-to-Pro is the cheapest entry by a wide margin. Claude Code Pro and Cursor Pro both sit at $20/month, but the day-to-day feels very different. For heavy agent use, costs climb fastest with Claude Code Max 20x and Cursor Ultra, both at $200/month. Copilot Enterprise and Cursor Teams aim at different team needs. Copilot leans on GitHub-native ties, while Cursor leans on AI inside the editor.
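For team plans, the per-seat prices in the table turn into annual budgets with simple multiplication. A rough sketch, assuming monthly billing with no discounts and no usage overages. The function name is illustrative.

```python
# Per-seat monthly prices (USD) from the pricing table above.
SEAT_PRICE = {
    "Cursor Teams": 40,
    "Copilot Business": 19,
    "Copilot Enterprise": 39,
}


def annual_cost(plan: str, seats: int) -> int:
    """Yearly subscription cost for a team, before any usage charges."""
    if seats < 0:
        raise ValueError("seats must not be negative")
    return SEAT_PRICE[plan] * seats * 12


# Example: a 10-person team.
for plan in SEAT_PRICE:
    print(f"{plan}: ${annual_cost(plan, 10):,} per year")
```

For ten seats this comes to $4,800 for Cursor Teams, $2,280 for Copilot Business, and $4,680 for Copilot Enterprise.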

Benchmarks and Real-World Performance

Benchmarks help you get your bearings, but they shouldn’t be the only call. A model that scores 5 points higher on SWE-bench may feel slower or more buggy in your own work. Still, the gaps on hard tasks are real.

| Metric | Claude Code | Cursor | GitHub Copilot |
|---|---|---|---|
| SWE-bench Verified | ~74-81% (Opus 4.6) | ~52% (standard), 61.3 (CursorBench) | ~56% |
| Autocomplete acceptance | N/A (no inline autocomplete) | 72% (Supermaven) | ~35-45% (estimated) |
| “Most loved” rating | 46% | 19% | 9% |
| Context window | 1M tokens | ~128K tokens | Limited to open files |
| CSAT score | 91% | Not published | Not published |
| NPS | 54 | Not published | Not published |

SWE-bench Verified is the most-cited benchmark for real software work. Claude Code with Opus 4.6 leads at about 74-81%, depending on setup. GitHub Copilot solves about 56% of SWE-bench tasks. Cursor scores about 52% on plain SWE-bench, 61.3 on CursorBench, and 73.7 on SWE-bench Multilingual.

On speed, Cursor finishes benchmark tasks about 30% faster than Copilot on average. Claude Code’s speed depends on task size. It’s slower to start since it reads and maps the full codebase, but faster on multi-step jobs where that early read keeps it from backtracking.

The Stack Overflow 2025 survey paints a mixed picture of how devs feel about AI tools. 84% of devs use them or plan to. But positive sentiment fell from over 70% in 2023-2024 to 60%. 46% don’t trust the output, versus 33% who do. The top gripe, named by 66% of devs: “AI solutions that are almost right, but not quite.” Only 17% say agents have improved teamwork.

The JetBrains AI Pulse survey from January 2026 (sample: 10,000+ pro devs) gives sharper numbers. Claude Code usage at work hit 18%, a 6x jump from about 3% in mid-2025. Its CSAT hit 91% and NPS hit 54: the top loyalty scores in the field. Google Antigravity, out in November 2025, hit 6% by January 2026, which makes it one to watch.

Security, Privacy, and Compliance

Where your code goes is a big deal, in corporate setups most of all. The three tools treat data very differently.

| Feature | Claude Code | Cursor | GitHub Copilot |
|---|---|---|---|
| SOC 2 Type II | No (HIPAA-ready) | Yes (Business plans) | Yes |
| Code used for training | No (API policy) | No (Business/Enterprise) | No (Business/Enterprise) |
| Privacy mode | N/A | Yes (Business) | Organization-level controls |
| IP indemnity | No | No | Yes (Enterprise) |
| Self-hosted option | Via API | Enterprise (self-hosted agents) | Enterprise |
| Audit logging | API logs | Enterprise | Enterprise |

Claude Code sends code to Anthropic’s API. But Anthropic’s data policy says API inputs aren’t used to train the model. It has the strongest HIPAA story of the three, but it lacks SOC 2 Type II certification.

Cursor offers SOC 2 Type II certification on Business plans. The Enterprise tier adds data encryption at rest and in transit, zero-retention policies, and a privacy mode that keeps code inside the team’s setup.

GitHub Copilot leads on compliance and trust. The Enterprise tier adds SOC 2 Type II, central billing, org-level policies, audit logs, and IP indemnity (a perk only Copilot ships). GitHub will defend you in court if Copilot-made code gets a copyright challenge. If your team has a strict security review, Copilot is often the only tool that clears procurement.

MCP and Extension Ecosystems

The Model Context Protocol (MCP) is now the standard way AI coding tools talk to outside services. Each tool’s take on plug-ins shows its design.

Claude Code backs over 300 MCP integrations, among them GitHub, Slack, PostgreSQL, Sentry, Linear, and your own internal systems. It has the deepest plug-in story, with hooks, MCP, agents, skills, and CLAUDE.md config files. Since Claude Code is terminal-native, its plug-in model feels like Unix pipes: stackable and scriptable.

Cursor adds MCP servers through its settings, which keeps setup easy. Its plug-in model also inherits the whole VS Code marketplace. That gives it thousands of plug-ins on top of AI-specific MCP links.

GitHub Copilot backs MCP in Agent Mode and works with standard MCP servers. Copilot’s plug-in story lives mostly inside the GitHub platform: Actions, security tools, PR pipelines, and org-level policies. In March 2026, Copilot added enterprise MCP rules. Admins can set which MCP servers are allowed in the org via allowlist policies. One nice touch: Claude Code runs as a third-party agent inside Copilot Pro+ and Enterprise, so teams can use both without picking a platform side.
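The idea behind an allowlist policy is simple: a requested MCP server is usable only if an admin has approved it. The sketch below shows that logic in general terms. It is not Copilot's actual policy format or API. The `McpAllowlist` class and its methods are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field


@dataclass
class McpAllowlist:
    """Hypothetical org policy: only approved MCP servers may be used."""

    allowed: set[str] = field(default_factory=set)

    def __post_init__(self) -> None:
        # Normalize names so matching ignores case and stray whitespace.
        self.allowed = {name.strip().lower() for name in self.allowed}

    def is_allowed(self, server: str) -> bool:
        return server.strip().lower() in self.allowed

    def partition(self, requested: list[str]) -> tuple[list[str], list[str]]:
        """Split requested servers into (permitted, blocked)."""
        permitted = [s for s in requested if self.is_allowed(s)]
        blocked = [s for s in requested if not self.is_allowed(s)]
        return permitted, blocked


# Example: an admin approves three servers; a dev requests three.
policy = McpAllowlist({"github", "sentry", "linear"})
permitted, blocked = policy.partition(["GitHub", "slack", "sentry"])
print("permitted:", permitted)
print("blocked:", blocked)
```

A deny-by-default check like this means anything not explicitly approved stays blocked, which is the stance a security review usually wants.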

Workflow Fit - Matching Tools to How You Work

Your daily coding habits, team shape, and current toolchain count for more than benchmark scores when you pick a tool.

If you’re a solo dev who lives in the terminal, Claude Code is the obvious pick. It runs the full loop (reading issues, planning, coding, testing, committing, and opening PRs) without leaving your shell. It works best when you think in tasks, not keystrokes, and when your changes span many files in a big codebase.

If you prefer a visual editor, Cursor is where you want to be. Composer gives you a multi-file edit view that Claude Code’s CLI can’t match for devs who like to see changes in context. Background agents take the heavy work off your plate while you keep coding in the editor. BugBot adds auto code review without a tool switch.

For enterprise teams already deep in the GitHub world, Copilot has the least friction. It plugs into GitHub Issues, PRs, code review, and Actions. Enterprise-tier codebase indexing gives it org-wide code awareness. If your firm runs on GitHub, Copilot slots in without a procurement fight.

In real life, most devs in 2026 mix tools rather than pick one. Survey data shows pro devs use 2.3 tools on average. The top pairings:

- Cursor for daily editing and autocomplete, Claude Code for complex multi-file tasks and large refactors

- Copilot in your IDE for completions, Claude Code in your terminal for the heavy lifting

- Claude Code as a third-party agent within Copilot Pro+ and Enterprise, so you get both without switching contexts

For a tight look at the editor side, our Cursor vs. VS Code Copilot comparison breaks down how those two tools differ day-to-day inside an IDE.

Each tool has clear weak spots. Claude Code’s main interface is the terminal, but the official VS Code extension closes much of the GUI gap. It brings Claude Code’s agent skills right into the editor, with new features shipping at a fast pace. That said, devs who lean on inline autocomplete or want the full Cursor-style visual edit feel will still want to pair Claude Code with a real editor. Cursor locks you into a VS Code fork. Vim users can’t bring it into their own editor, and JetBrains users are limited to the ACP support added in Cursor 3.0. Copilot’s context window is limited to open files, which makes it the weakest option for big refactors across a whole codebase.

What Changed in 2026

The AI coding tool market moved fast in late 2025 and early 2026. A few releases shifted the field in real ways.

On the Claude Code side, Agent Teams shipped in February 2026. Several Claude Code instances work as a team, with a lead agent giving out tasks and helpers each in their own context. It’s still in beta and off by default, but it’s the closest any tool has come to parallelized AI development across frontend, backend, and tests. The 1M-token context window hit GA in March 2026, which lifts the biggest cap on big codebase work. No other tool matches this context size for a coding agent.

Cursor released version 3.0 in April 2026. The full redesign puts many agents at the core, running in parallel across repos and setups: locally, in worktrees, in the cloud, and over remote SSH. BugBot Autofix moved from reviewer to fixer with a 35%+ merge rate. Issues found per run nearly doubled, and the fix rate climbed from 52% to 76%.

GitHub Copilot’s agent mode hit GA in March 2026 on VS Code and JetBrains. It can now create files, edit code, build, run tests, and loop on its own. Custom agents gained full workspace context, code understanding, MCP links, and the new find_symbol tool for language-aware moves through code. The coding agent opens PRs from issues with no human in the loop. Semantic code search finds related code by meaning, not just by keyword.

All three tools are moving toward agent skills, but from different starts. Claude Code began as an agent and is adding team coordination. Cursor began as an editor and is adding cloud agents. Copilot began as autocomplete and is adding agent mode. Those roots still shape where each tool does its best work.

The Broader Market

These three aren’t the only players. Windsurf coined the “agent IDE” idea with its Cascade feature and raised its Pro tier from $15 to $20/month in March 2026 to match Cursor. Its SWE-1.5 model scores 40.08% on SWE-Bench but pushes 950 tokens per second: 14x faster than rivals. Google Antigravity hit 6% adoption within two months of launch. JetBrains Junie sits at 5% adoption and draws devs already deep in the IntelliJ world. OpenAI’s Codex takes yet another tack with cloud-sandboxed agents.

The market hasn’t merged. It has split into focused tools that devs mix and match based on the task. The “winner” of this comparison comes down to what you value: raw skill (Claude Code), built-in editing (Cursor), or wide reach (Copilot). For most working devs in 2026, the answer is to use at least two of them.

Botmonster Tech
