Evaluating AGENTS.md: Are Repository Context Files Actually Helpful?

Software teams keep adding AI coding agents to their workflows. One popular trend is to drop a repo-level context file, often named AGENTS.md or CLAUDE.md, into the repository to guide the agent. The idea sounds clean: give the AI a map of the codebase and a few rules, and it should solve tasks faster.

But does it work? A new paper, “Evaluating AGENTS.md: Are Repository-Level Context Files Helpful for Coding Agents?”, largely says no. Its results push back hard on the default advice.

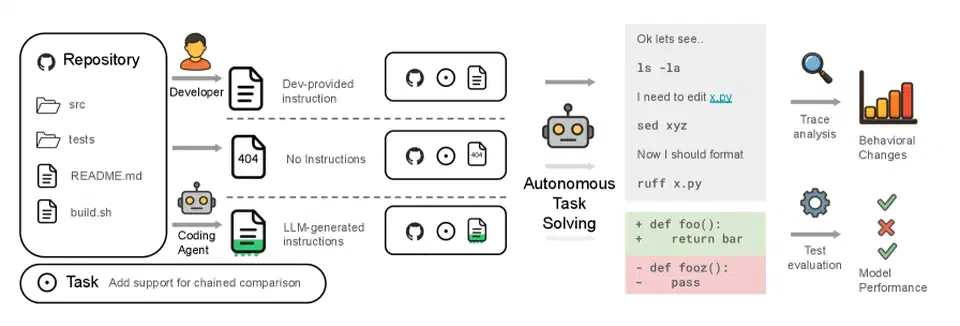

The AGENTBENCH Benchmark

To test these files, the researchers built AGENTBENCH: a benchmark of 138 real GitHub issues. The issues come from repos that already ship developer-written context files. Unlike toy benchmarks, this set mirrors how teams actually talk to agents in the wild.

The study ran each agent in three settings:

- No Context File: the agent gets the issue and the codebase, nothing else.

- LLM-Generated Context: an LLM writes the context file first, a common practice.

- Human-Written Context: the original developer-written file rides along.
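The three settings differ only in what sits next to the issue in the agent's input. A minimal Python sketch of that setup follows. It assumes a hypothetical `build_agent_input` helper and a caller-supplied `generate_context` function standing in for the LLM-writing step. Neither comes from the paper or any real agent framework, and the sketch assumes the human-written file is named AGENTS.md.

```python
from enum import Enum
from pathlib import Path
from typing import Callable, Optional


class Setting(Enum):
    NO_CONTEXT = "none"
    LLM_GENERATED = "llm"
    HUMAN_WRITTEN = "human"


def build_agent_input(
    issue: str,
    repo: Path,
    setting: Setting,
    generate_context: Callable[[Path], str],
) -> dict:
    """Assemble a hypothetical agent input for one of the three settings.

    The agent always gets the issue and the repo; only the context file varies.
    """
    context: Optional[str] = None
    if setting is Setting.LLM_GENERATED:
        # An LLM writes the context file before the agent starts (stubbed by the caller).
        context = generate_context(repo)
    elif setting is Setting.HUMAN_WRITTEN:
        # Assumption: the developer-written file lives at the repo root as AGENTS.md.
        agents_md = repo / "AGENTS.md"
        context = agents_md.read_text() if agents_md.exists() else None
    return {"issue": issue, "repo": str(repo), "context_file": context}
```

Keeping everything except `context_file` identical across settings is what lets any difference in outcome be pinned on the file itself.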

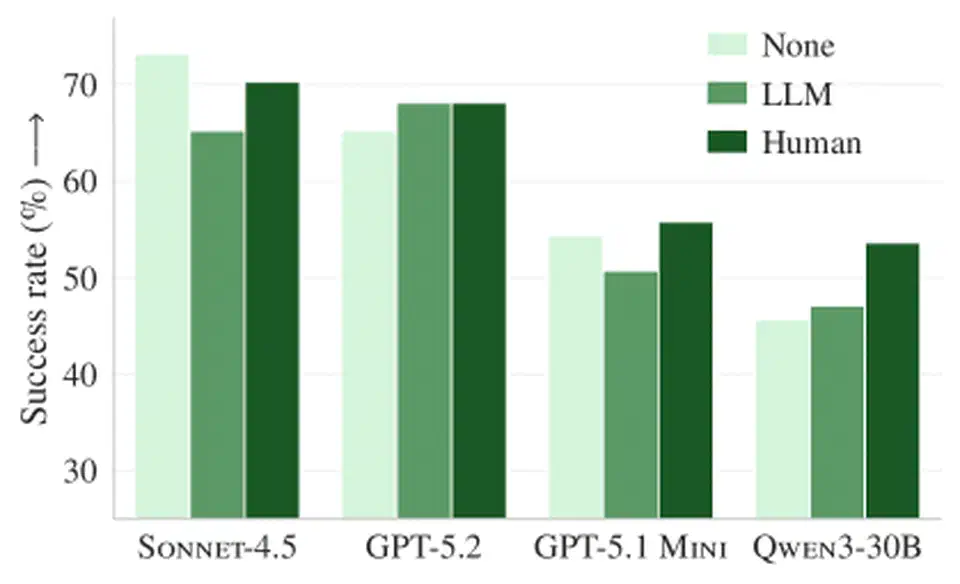

The Surprising Findings: More Cost, Less Success

The numbers go the wrong way. Current habits may be doing harm.

- LLM-Generated Files Hurt Performance: agents given auto-generated files saw task success fall by 3% compared with having no file at all.

- Human-Written Files Offer Marginal Gain: even hand-written files only lifted success rates by 4%, a thin payoff for the work.

- Costs Climbed: worst of all, the context files pushed token costs up by more than 20% across the board.
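Each of these figures is a change measured against the no-file baseline. A headline like “3% lower” can mean either a relative change or a change in percentage points, and the two are not the same thing. The Python sketch below computes both. The numbers in it are made up for illustration and are not the paper's data.

```python
def relative_change(baseline: float, treatment: float) -> float:
    """Fractional change of `treatment` versus `baseline` (0.2 means +20%)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (treatment - baseline) / baseline


def point_change(baseline_rate: float, treatment_rate: float) -> float:
    """Absolute change between two success rates, in percentage points."""
    return (treatment_rate - baseline_rate) * 100


# Hypothetical numbers, not from the paper.
baseline_success, with_file_success = 0.50, 0.52
baseline_tokens, with_file_tokens = 1_000_000, 1_250_000

print(f"success: {relative_change(baseline_success, with_file_success):+.1%} relative, "
      f"{point_change(baseline_success, with_file_success):+.1f} pts")
print(f"tokens:  {relative_change(baseline_tokens, with_file_tokens):+.1%}")
```

With these toy inputs, a 2-point gain on a 50% baseline is a 4% relative gain, which shows how much the choice of framing can change the headline.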

Why Context Files Backfire

Why does more info lead to worse results? The study points to a few clear reasons:

- Redundancy & Noise: LLM-built files often repeat what the agent could find by reading the code. They add noise to the context window and give no new signal.

- “Busy Work” & Over-Exploration: agents follow the file too literally. If it says “run tests with pytest,” the agent runs extra tests or browses far more files than it needs to. That burns tokens and time, and it doesn’t fix the bug.

- Instruction Overload: the extra rules, style guides, and tips often muddle the task. The agent works harder for a smaller win.

The Verdict: Less is More

The paper’s take is blunt: today’s “agent-friendly” docs don’t pay off. A big-picture overview file doesn’t help agents navigate the code any better than they manage on their own. Measured results on your real workflow count for more than feature lists and marketing claims.

Key Takeaways for Developers:

- Stop Auto-Generating Context: asking an LLM to summarize your repo for another LLM looks like a losing trade.

- Keep It Minimal: if you do ship an AGENTS.md, keep it short. Stick to setup commands, odd environment quirks, or style rules the code can’t reveal on its own.

- Test Your Instructions: test your prompts like you test your code. Check that they lift outcomes, not just bills.
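One way to act on the last takeaway is a small paired A/B check. Run the same tasks with and without the context file, then compare success rate and token spend. The Python sketch below assumes you already have per-task results in a hypothetical `TaskResult` record holding a pass/fail flag and a token count. The default thresholds (a 2-point success gain, at most a 10% cost increase) are arbitrary placeholders, not recommendations from the paper.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    task_id: str
    solved: bool
    tokens: int


def compare(without_file: list[TaskResult], with_file: list[TaskResult],
            min_gain_pts: float = 2.0, max_cost_increase: float = 0.10) -> dict:
    """Paired comparison of runs on the same tasks, with and without a context file."""
    a = {r.task_id: r for r in without_file}
    b = {r.task_id: r for r in with_file}
    shared = sorted(a.keys() & b.keys())  # only compare tasks run in both arms
    if not shared:
        raise ValueError("no tasks in common")

    n = len(shared)
    rate_a = sum(a[t].solved for t in shared) / n
    rate_b = sum(b[t].solved for t in shared) / n
    tokens_a = sum(a[t].tokens for t in shared) / n
    tokens_b = sum(b[t].tokens for t in shared) / n
    if tokens_a == 0:
        raise ValueError("baseline token count must be non-zero")

    gain_pts = (rate_b - rate_a) * 100
    cost_increase = (tokens_b - tokens_a) / tokens_a
    return {
        "tasks": n,
        "gain_pts": gain_pts,
        "cost_increase": cost_increase,
        # Keep the file only if it clearly helps without blowing the token budget.
        "keep_file": gain_pts >= min_gain_pts and cost_increase <= max_cost_increase,
        # Tasks that flipped in either direction are worth reading by hand.
        "fixed_by_file": [t for t in shared if b[t].solved and not a[t].solved],
        "broken_by_file": [t for t in shared if a[t].solved and not b[t].solved],
    }
```

Pairing matters here. Comparing the same issues in both arms stops differences in task difficulty from skewing the result. Reading the `broken_by_file` cases by hand can also show whether the file is triggering the kind of busy work the study describes.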

As AI joins more of our daily work, we should check our defaults. For repo context, quality beats quantity. For now, silence might be the better bet.

Botmonster Tech