The 80% Coverage Trap: Why AI-Generated Tests Create a False Sense of Security

AI test generation tools make it trivially easy to hit 80% or even 90%+ line coverage. Point GitHub Copilot at a codebase, use the @Test directive, and watch it produce hundreds of test methods without a single line of manual effort. The number looks great on a dashboard. The problem is that line coverage only measures execution, not detection. A test suite can run every line of your code without asserting anything meaningful about whether that code is correct. In a documented 2026 experiment, an AI-generated suite scored 93.1% line coverage but only 58.6% on mutation testing - meaning over a third of realistic bugs would have slipped through undetected with CI showing green across the board.

This matters because AI test generation adoption is accelerating fast. In the Automation Guild 2026 pre-event survey, 72.8% of testing professionals selected “AI-powered testing and autonomous test generation” as their top priority. Tools like Copilot Testing for .NET (GA in Visual Studio 2026 v18.3), Qodo Gen, and BlinqIO are shipping features that generate entire test suites from source code analysis. Most teams will use AI for testing within a year or two. The real question is whether they’ll recognize that the coverage number these tools produce tells them almost nothing about actual test quality.

The Coverage Number Everyone Celebrates (and Why It Lies)

Line coverage became the default proxy for test quality decades ago, and for good reason at the time. It was the easiest metric to collect, it correlated loosely with fewer production bugs, and it gave managers a number to put on a slide. AI tools have turned it into a vanity metric by making it effortless to achieve.

Line coverage measures whether a line was executed during a test run. It does not measure whether the test verified that line’s behavior. A test that calls a function without checking its return value counts as “covered.” A test that asserts assertNotNull(result) on a method that should return a specific calculated value counts as “covered.” A test that catches an exception and asserts assertTrue(true) counts as “covered.”
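A minimal Python sketch of those three patterns, assuming a hypothetical `apply_discount` function and a deliberately buggy variant (both invented here for illustration). The weak suite executes every line of either implementation and passes on both; only the value-level assertion tells them apart.

```python
def apply_discount(price, percent):
    """Reference behaviour: take `percent` off `price`."""
    if percent < 0 or percent > 100:
        raise ValueError("percent must be between 0 and 100")
    return price * (100 - percent) / 100


def apply_discount_buggy(price, percent):
    """Same shape, but the arithmetic is wrong (adds instead of subtracts)."""
    if percent < 0 or percent > 100:
        raise ValueError("percent must be between 0 and 100")
    return price * (100 + percent) / 100


def weak_suite(fn):
    """Runs every line of `fn` but verifies almost nothing."""
    fn(200, 10)                      # call only, result ignored
    assert fn(200, 10) is not None   # presence check
    try:
        fn(200, 150)                 # error path is executed...
    except Exception:
        assert True                  # ...but nothing about it is checked
    return True


def strong_suite(fn):
    """Checks the computed value, so a wrong formula is reported."""
    try:
        assert fn(200, 10) == 180
    except AssertionError:
        return False
    return True
```

Calling `weak_suite(apply_discount_buggy)` succeeds even though the function returns 220 instead of 180, while `strong_suite(apply_discount_buggy)` reports the failure. Both suites would show the same line coverage.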

AI-generated tests tend to produce exactly these patterns. They reverse-engineer assertions from current behavior rather than from a specification. The AI reads the code, sees what it does, and generates tests that confirm the code does what it does. This is circular validation. If the code has a bug, the AI-generated test will confirm the buggy behavior as correct.
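Circular validation can be sketched in a few lines of Python. The `is_adult` function, its off-by-one bug, and the assumed spec ("18 and over counts as adult") are all hypothetical. Expectations recorded from the code's current output pin the bug as correct; expectations written from the spec expose it.

```python
def is_adult(age):
    # Assumed spec for this sketch: 18 and over counts as adult.
    # The implementation has an off-by-one bug (> instead of >=).
    return age > 18


def characterization_cases(fn, inputs):
    """Record whatever `fn` returns today as the 'expected' value."""
    return [(x, fn(x)) for x in inputs]


def run_cases(fn, cases):
    """Return the inputs whose actual output differs from the expectation."""
    return [x for x, expected in cases if fn(x) != expected]


# Expectations derived from the code itself: the bug is pinned as correct.
generated = characterization_cases(is_adult, [17, 18, 19])

# Expectations derived from the spec: the boundary case exposes the bug.
from_spec = [(17, False), (18, True), (19, True)]
```

Here `run_cases(is_adult, generated)` reports no failures, while `run_cases(is_adult, from_spec)` flags the input 18.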

The CodeRabbit State of AI vs Human Code Generation report analyzed 470 real-world pull requests and found that AI-generated PRs contain 1.7x more issues than human-written ones (10.83 vs 6.45 issues per PR), with 1.4x more critical defects and 75% more logic/correctness errors. These defects survive precisely because the accompanying test suites don’t catch them. The tests execute the code, the coverage number goes up, and the bugs ship.

A 60% coverage suite with thoughtful boundary assertions, null checks, and error path validation catches more real bugs than a 90% suite full of assertNotNull and assertTrue(result != null) boilerplate. The difference is that the first suite was written by someone who understood what the code should do. The second was written by an AI that only knew what the code currently does.

The 93% to 58% Gap - What Mutation Testing Reveals

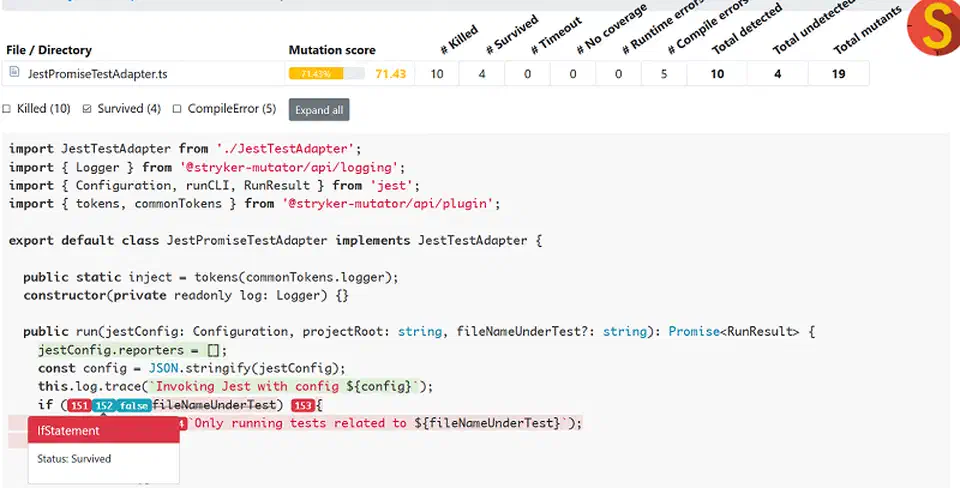

Mutation testing is the lie detector for test suites. It works by injecting small, realistic faults - called “mutants” - into source code: flipped booleans, swapped comparison operators, removed return values, changed arithmetic signs. Then it runs the existing test suite against each mutant. If a test fails, the mutant is “killed” - the test caught the simulated bug. If all tests pass, the mutant “survives” - a real bug with that same shape could ship undetected.
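The kill/survive mechanic can be illustrated with a toy Python sketch. Everything here is hypothetical: the `price_with_shipping` function, the three hand-written mutants, and the two suites. Real tools such as Stryker or PIT generate mutants automatically from the source rather than by hand.

```python
def price_with_shipping(subtotal):
    """Orders of 50 or more ship free; otherwise add a flat 5."""
    if subtotal >= 50:
        return subtotal
    return subtotal + 5


# Hand-written mutants, each a single small fault in the original.
def mutant_boundary(subtotal):      # >= changed to >
    if subtotal > 50:
        return subtotal
    return subtotal + 5


def mutant_arithmetic(subtotal):    # + changed to -
    if subtotal >= 50:
        return subtotal
    return subtotal - 5


def mutant_negated(subtotal):       # condition negated
    if not subtotal >= 50:
        return subtotal
    return subtotal + 5


MUTANTS = [mutant_boundary, mutant_arithmetic, mutant_negated]


def weak_tests(fn):
    """Presence checks only: passes for any non-None result."""
    return fn(100) is not None and fn(10) is not None


def strong_tests(fn):
    """Exact values, including the boundary at 50."""
    return fn(100) == 100 and fn(50) == 50 and fn(10) == 15


def mutation_score(tests, mutants):
    """Fraction of mutants for which the suite fails (i.e. kills)."""
    killed = sum(1 for m in mutants if not tests(m))
    return killed / len(mutants)
```

Both suites pass against the original function and execute the same lines, yet `mutation_score(weak_tests, MUTANTS)` is 0.0 and `mutation_score(strong_tests, MUTANTS)` is 1.0.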

In a 2026 multi-agent adversarial experiment, the gap between coverage theater and real detection was stark:

| Metric | Score |
|---|---|
| Line coverage (AI-generated suite) | 93.1% |
| Mutation score (same suite, Stryker) | 58.6% MSI |
| Gap | 34.5 percentage points |
| Surviving mutants (services layer) | ~48 of 116 |

That 34.5-point gap is the distance between what the suite executes and what it actually verifies. In the unverified share of the code, a developer (or an AI) can introduce a bug, all tests pass, and CI goes green with zero warning. Roughly 41% of the injected faults in the services layer (~48 of 116) went undetected.
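The arithmetic behind these figures is worth spelling out. The short Python check below uses only the numbers from the table; the survivor count is approximate in the source, so the derived rates are approximate too.

```python
line_coverage = 93.1       # percent, from the table
mutation_score = 58.6      # percent (MSI), from the table
survived, total = 48, 116  # approximate services-layer counts from the table

# Distance between "executed" and "verified", in percentage points.
gap_points = round(line_coverage - mutation_score, 1)

# Share of injected faults that no test noticed.
survival_rate = round(100 * survived / total, 1)

# Mutation score implied by the survivor count alone.
implied_score = round(100 - survival_rate, 1)
```

The gap works out to 34.5 points and the survival rate to about 41.4%, which implies a score of about 58.6% and so lines up with the reported MSI.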

The fix in that experiment required three rounds of targeted assertion improvements guided by surviving mutant reports:

- Replacing presence checks (assertNotNull) with correctness checks (assertEquals(expected, actual))
- Adding boundary condition tests for off-by-one errors and edge values
- Validating error paths - confirming that the right exception type is thrown with the right message, not just that “some exception” was thrown
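The three kinds of improvement can be sketched with Python's standard `unittest` module. The `withdraw` function and its error messages are hypothetical, not taken from the experiment; the first test shows the "before" pattern and the other three show the strengthened versions.

```python
import unittest


def withdraw(balance, amount):
    """Hypothetical function under test."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    if amount > balance:
        raise ValueError("insufficient funds")
    return balance - amount


class WithdrawTests(unittest.TestCase):
    def test_presence_only(self):
        # Before: passes for any non-None return value.
        self.assertIsNotNone(withdraw(100, 30))

    def test_correct_value(self):
        # After: pins the computed result.
        self.assertEqual(withdraw(100, 30), 70)

    def test_boundaries(self):
        # Edge values on the allowed side of each comparison.
        self.assertEqual(withdraw(100, 100), 0)
        self.assertEqual(withdraw(100, 1), 99)

    def test_error_paths(self):
        # Right exception type and message, not just "some exception".
        with self.assertRaisesRegex(ValueError, "insufficient funds"):
            withdraw(100, 101)
        with self.assertRaisesRegex(ValueError, "must be positive"):
            withdraw(100, 0)
```

A mutant that changes `amount > balance` to `amount >= balance` would pass `test_presence_only` but fail `test_boundaries`, which is the kind of survivor the boundary tests are meant to kill.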

After three rounds, the mutation score climbed from 58.6% to 93.1% MSI, matching the original line coverage number. The same AI that wrote the weak tests was able to write strong ones when given the mutation report as context. The surviving mutant report told the AI exactly where its tests were blind, and the AI fixed those blind spots.

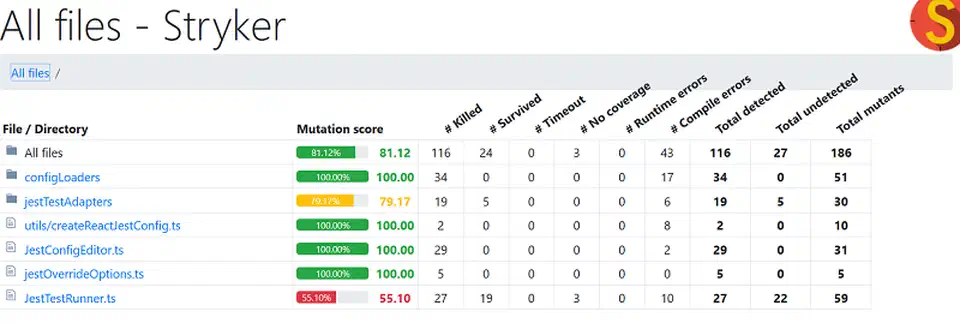

The key tools for mutation testing are Stryker for .NET and JavaScript/TypeScript, PIT for Java, and Cosmic Ray for Python. None of these are AI-specific, but they’re the essential complement to any AI test generation workflow. An emerging industry threshold in 2026 targets 80%+ mutation score on critical business logic modules, with most teams treating anything below 70% as a red flag regardless of how high the line coverage number is.

The 2026 AI Testing Tool Landscape

The AI testing market has split into four distinct categories, each solving a different part of the quality problem. Understanding which category you need prevents buying a coverage tool when you need a validation tool.

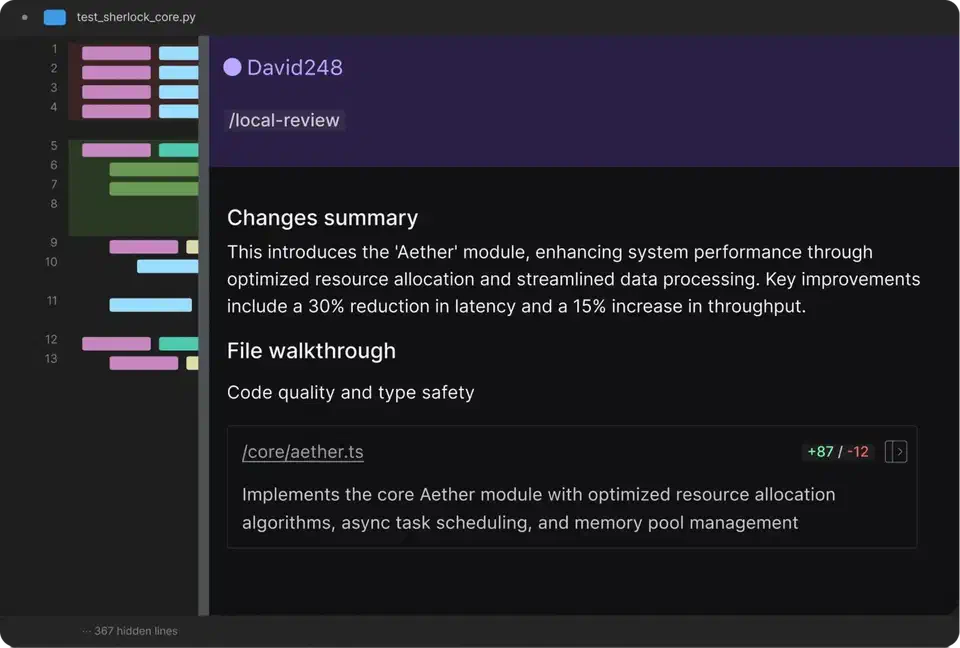

Autonomous test generation tools produce test code from source analysis. GitHub Copilot Testing for .NET (GA in Visual Studio 2026 v18.3) uses the @Test directive in Copilot Chat to analyze code, create test projects, and generate/build/run tests automatically across xUnit, NUnit, and MSTest. BlinqIO’s AI Test Engineer receives test descriptions, autonomously navigates the application, and generates real Playwright code stored in your Git repo. Qodo Gen takes a behavior-driven approach, analyzing function signatures, type annotations, and implementation logic to generate tests that cover distinct behavioral scenarios rather than just lines.

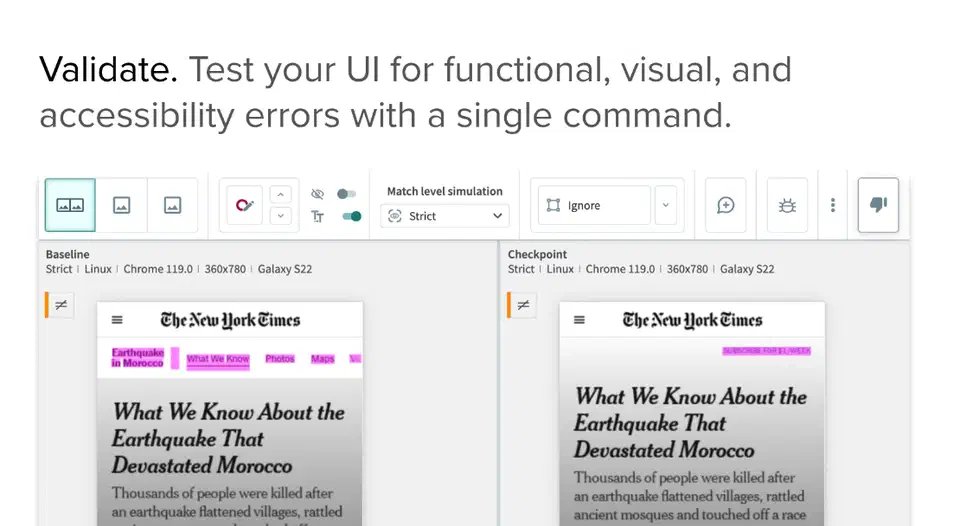

Visual validation tools catch what unit tests structurally cannot. Applitools leads this category with AI trained on millions of screenshots, detecting layout shifts, cross-browser inconsistencies, and visual regressions. It was named a Strong Performer in the Forrester Wave: Autonomous Testing Platforms, Q4 2025.

Self-healing execution tools reduce maintenance costs when UIs change. Perfecto (Perforce) and Virtuoso QA auto-fix broken locators - Virtuoso reports roughly 95% auto-fix accuracy, reducing test maintenance costs by up to 85%.

Codeless/NLP-driven tools lower the barrier to test creation. Testsigma converts plain English test descriptions into executable tests across web, mobile, and API surfaces. Mabl shipped Auto TFA (Autonomous Test Failure Analysis) in early 2026, triaging test failures and pushing root-cause insights into Jira or the IDE.

The critical distinction: autonomous generation tools optimize for coverage breadth. Visual validation and mutation testing tools optimize for detection depth. Most teams need both.

| Category | Example Tools | Optimizes For | Catches |
|---|---|---|---|
| Autonomous generation | Copilot Testing, BlinqIO, Qodo | Coverage breadth | Missing test cases |
| Visual validation | Applitools | UI correctness | Layout/rendering bugs |
| Self-healing | Virtuoso, Perfecto | Maintenance reduction | Broken selectors |
| Mutation testing | Stryker, PIT, Cosmic Ray | Detection depth | Weak assertions |

For a broader comparison of individual tools with pricing and language support, the Best AI Test Generation Tools in 2026 guide on Dev.to covers nine tools in detail.

Building a Test Strategy That Survives the Coverage Trap

The teams that actually catch bugs in production treat coverage as a floor, not a ceiling. They layer complementary quality signals on top of it.

Layer 1 - AI-generated baseline. Let AI tools generate the initial test suite targeting 80%+ line coverage. This is commodity work. There is no reason to write boilerplate setUp, tearDown, and happy-path tests by hand anymore. Use Copilot, Qodo, or your tool of choice and move on to the work that matters. For a broader look at how to structure AI-assisted coding across different task sizes and complexity tiers, see our guide on AI pair programming workflows.

Layer 2 - Mutation testing gate. Run Stryker, Cosmic Ray, or PIT on critical modules and set a CI gate at 80% mutation score minimum. If the AI suite scores below that threshold, feed the surviving mutant report back to the AI and let it strengthen its assertions. This feedback loop is where AI test generation actually gets powerful: each iteration produces better tests because the mutation report tells the AI exactly where its coverage is hollow.
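As a rough sketch of what such a gate computes, the Python below turns a list of per-mutant statuses into a score and an exit code. The status names and the detected/undetected grouping are assumptions modeled on the vocabulary commonly seen in mutation reports; check your tool's report format before relying on them. Where a tool offers its own built-in threshold setting, that is usually the simpler choice.

```python
# Assumed status vocabulary; adjust to whatever your tool actually emits.
DETECTED = {"Killed", "Timeout"}
UNDETECTED = {"Survived", "NoCoverage"}


def mutation_score(statuses):
    """Percent of valid mutants detected; other statuses are ignored."""
    detected = sum(1 for s in statuses if s in DETECTED)
    undetected = sum(1 for s in statuses if s in UNDETECTED)
    valid = detected + undetected
    return 100.0 * detected / valid if valid else 0.0


def gate(statuses, threshold=80.0):
    """Return a process exit code: 0 if the score meets the threshold."""
    score = mutation_score(statuses)
    print(f"mutation score: {score:.1f}% (threshold {threshold:.1f}%)")
    return 0 if score >= threshold else 1
```

A CI step could pass the result of `gate(...)` to `sys.exit`. With 68 killed and 48 surviving mutants, the score is about 58.6% and the gate returns 1, failing the build.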

Stryker in particular has optimized for CI pipelines: it compiles all mutants at once using conditional switches rather than recompiling per mutation, and it groups non-overlapping mutants into the same test session to minimize overhead. Incremental mode tests only changed files, cutting CI time from 45 minutes to around 18 minutes in reported benchmarks. The practical approach is running incremental mutation analysis on every PR and full mutation analysis as a nightly job.

Layer 3 - Human-authored edge cases. Humans write tests for business logic invariants, security boundaries, concurrency scenarios, and integration contracts that AI consistently misses. The 2026 survey data shows 67% of testing professionals trust AI-generated tests only with human review - and they’re right to. AI doesn’t understand what your business rules should be. It only knows what your code currently does.

Layer 4 - Visual and behavioral validation. Add Applitools or an equivalent for UI-facing code, catching the class of regressions that unit tests structurally cannot detect. A function can return the correct data while the CSS renders it in white text on a white background.

The feedback loop between layers is what makes this strategy work. Mutation reports from Layer 2 become prompts for Layer 1, creating a cycle where each AI-generated test round is better than the last. Surviving mutant reports are, in effect, the perfect prompt for an AI test generator: they specify exactly what behavior needs to be asserted and where.
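One way to picture that hand-off is a small Python helper that formats survivors as instructions for a test generator. The record fields (`file`, `line`, `mutator`, `original`, `replacement`) and the prompt wording are hypothetical; a real pipeline would map them from whatever its mutation tool reports.

```python
def build_prompt(survivors, max_items=20):
    """Format surviving mutants as instructions for a test generator."""
    lines = [
        "The following mutants survived the current test suite.",
        "For each one, add or strengthen a test so that it fails",
        "when the mutation is applied. Assert exact values, not presence.",
        "",
    ]
    for m in survivors[:max_items]:
        lines.append(
            f"- {m['file']}:{m['line']} [{m['mutator']}] "
            f"`{m['original']}` -> `{m['replacement']}`"
        )
    # Keep the prompt bounded; say so explicitly if anything was cut.
    omitted = len(survivors) - max_items
    if omitted > 0:
        lines.append(f"... and {omitted} more survivors omitted.")
    return "\n".join(lines)
```

Each bullet names a location and the exact change that went unnoticed, which gives the generator a concrete behavior to assert instead of a vague request for "more tests".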

The anti-pattern to avoid: using coverage percentage as a team performance metric. When coverage becomes a KPI, developers and AI tools game it with trivial tests that inflate the number without improving quality. Track mutation score on critical modules instead. It’s harder to game because a test that doesn’t assert real behavior will not kill mutants.

There’s also a security dimension to this. Research from Veracode found that AI-generated code in Java showed a 72% security failure rate across tasks. AI-generated code that passes unit tests can still contain injection vulnerabilities, insecure deserialization, or broken authentication. Security testing - SAST, DAST, penetration testing - must remain a separate concern from coverage, because functional correctness and security correctness are different properties.
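A small illustration of that split, using Python's built-in `sqlite3` module and a hypothetical `users` table. Both lookup functions pass the same functional check for a normal name; only a hostile input shows that one of them is injectable.

```python
import sqlite3


def make_db():
    """In-memory database with two illustrative rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "admin"), ("bob", "viewer")],
    )
    return conn


def find_user_unsafe(conn, name):
    # Query built by string interpolation: functionally fine, injectable.
    sql = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(sql).fetchall()


def find_user_safe(conn, name):
    # Parameterised query: input is treated as data, never as SQL.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()
```

A unit test that looks up "alice" passes for both functions and covers every line. Passing `x' OR '1'='1` to `find_user_unsafe` returns every row in the table, while `find_user_safe` returns none.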

Coverage Theater vs. Real Quality

The coverage number on your dashboard is an execution metric, not a quality metric. It tells you what ran, not what was verified. AI test generation has made this distinction more urgent than it has ever been, because now teams can hit 90% coverage overnight and declare victory without realizing that a third of their realistic bugs would still ship.

Mutation testing closes the gap. The experiment that went from 93.1% line coverage / 58.6% mutation score to 93.1% line coverage / 93.1% mutation score did it by feeding surviving mutant reports back to the AI for three rounds of assertion improvement. The tools exist. The workflow is straightforward. The only barrier is recognizing that the coverage number you’re celebrating might be lying to you.

The strongest test suites in 2026 come from teams that use AI for generating the initial suite and covering happy paths, then layer human judgment on top for business invariants, security boundaries, and integration contracts. Mutation score, not line coverage, is the metric that tells you whether this combination is working.

Botmonster Tech
