Alembic Migrations: From Dev to Production Rolling Deploys

Alembic

is the standard migration tool for SQLAlchemy

projects. You run alembic init, point it at your SQLAlchemy models, and use alembic revision --autogenerate to produce migration scripts. Alembic then applies those scripts in order with alembic upgrade head. You get repeatable, reviewable schema changes that work the same way everywhere your app runs

. The latest stable release is Alembic 1.18.4. It supports SQLAlchemy 2.0 (now at 2.0.48) and its modern typed APIs.

If you’ve ever used Base.metadata.create_all(engine) to set up your tables, you know the problem. It creates tables that don’t exist, but does nothing for tables that already exist and need changes. It can’t add columns, change types, or create indexes on live tables. Once you have a production database with real data, manual ALTER TABLE statements become your only option. They ship with no rollback plan, no audit trail, and no proof that staging matches production. Alembic fixes this by keeping a linear history of schema changes. Each change has a revision ID, stored in an alembic_version table inside the database.

Setting Up Alembic in a SQLAlchemy Project

The initial setup is straightforward. Start by installing the packages:

pip install alembic sqlalchemyOr if you use uv :

uv add alembic sqlalchemyAlembic pulls in Mako to render templates and uses your existing SQLAlchemy engine setup. Set up the migration environment:

alembic init alembicThis creates an alembic/ directory with several key files:

env.py- runtime setup that links Alembic to your database and modelsscript.py.mako- the Mako template used to build new migration scriptsversions/- the folder where migration scripts pile up over timealembic.iniat the project root - main config file

A typical project structure with Alembic alongside a FastAPI or Flask application looks like this:

myproject/

alembic/

env.py

script.py.mako

versions/

001_initial_schema.py

002_add_orders_table.py

app/

__init__.py

models.py

main.py

alembic.ini

pyproject.tomlConfiguring the Database Connection

Open alembic.ini and set the database URL:

sqlalchemy.url = postgresql+psycopg://user:pass@localhost/mydbHardcoding credentials in a config file is a bad idea past local dev. Read the URL from an env variable in env.py instead:

import os

from alembic import context

config = context.config

config.set_main_option(

"sqlalchemy.url",

os.environ["DATABASE_URL"]

)Connecting to Your Models

In env.py, import your declarative base and set the target metadata. This is what turns on autogen. Alembic compares your Python models against the live schema to spot the diffs:

from app.models import Base

target_metadata = Base.metadataGenerating and Applying the First Migration

With the config in place, build your first migration:

alembic revision --autogenerate -m "initial schema"This creates a Python file in alembic/versions/. It has upgrade() and downgrade() functions with the detected op.create_table() and op.create_index() calls. Apply it:

alembic upgrade headA few other commands you will use constantly:

alembic current # shows the current revision

alembic history # shows the full migration chain

alembic heads # shows all branch heads (should be exactly one)Writing and Reviewing Migration Scripts

Autogen is a starting point, not a finished product. It catches a lot. New tables, removed tables, added or removed columns, changed column types (with caveats), added or removed indexes and unique constraints, and added or removed foreign keys. Still, it has blind spots.

What Autogenerate Cannot Detect

Autogen doesn’t catch table renames (it sees a drop and a create). It also misses column renames (it sees a drop and an add, which loses data), changes to check constraints, changes to server defaults on existing columns, and Postgres features like ENUM type changes.

For column renames, write the migration manually:

def upgrade():

op.alter_column("users", "name", new_column_name="full_name")

def downgrade():

op.alter_column("users", "full_name", new_column_name="name")Data Migrations

Alembic migrations are Python scripts, not raw SQL files. So you can ship data migrations next to schema changes in the same revision. This is one of Alembic’s edges over SQL tools like Flyway

. For example, backfill a column before adding a NOT NULL constraint:

def upgrade():

op.add_column("users", sa.Column("role", sa.String(50), nullable=True))

op.execute("UPDATE users SET role = 'member' WHERE role IS NULL")

op.alter_column("users", "role", nullable=False)Safe Column Addition for Production

Adding columns to production tables with live data takes care. The safe pattern is:

- Add the column as nullable with a default value

- Deploy and backfill the data in app code or a data migration

- In a later, separate migration, add the

NOT NULLconstraint

Never add a NOT NULL column without a default to a table that already has rows. The migration will fail outright. Or worse, it will pass on your empty dev database and fail in production.

Always Review Before Committing

Always read the upgrade() and downgrade() functions before you commit a migration. Test both directions:

alembic upgrade head && alembic downgrade -1 && alembic upgrade headThis round-trip catches cases where the downgrade fails to undo the upgrade. That is a common source of pain when you need to roll back in production.

Branching, Merging, and Team Workflows

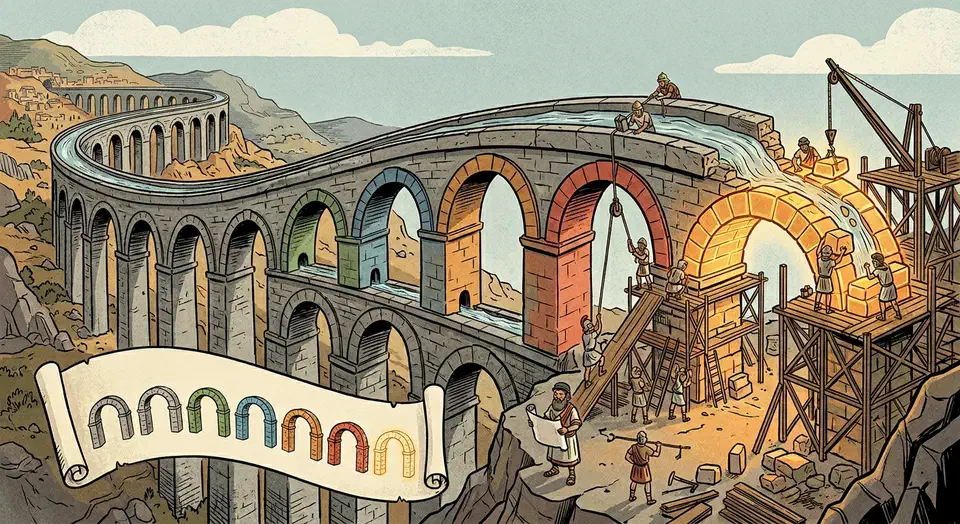

Each migration has a revision ID and a down_revision that points to its parent. Together they form a linked list. When two developers create migrations from the same parent at the same time, Alembic spots a branch: two revisions that share the same down_revision.

Resolving Branches

Resolve branches with the merge command:

alembic merge -m "merge feature_x and feature_y" <rev1> <rev2>This creates a merge migration with down_revision = (<rev1>, <rev2>) and empty upgrade()/downgrade() functions. The merge migration just tells Alembic that both branches must run before anything that comes after.

Preventing Branch Conflicts in CI

A simple way to stop this is to add a CI check

that runs alembic heads and fails if more than one head exists. This forces developers to merge branches before they merge their pull request:

#!/bin/bash

# ci-check-migration-heads.sh

HEADS=$(alembic heads | wc -l)

if [ "$HEADS" -gt 1 ]; then

echo "ERROR: Multiple migration heads detected. Run 'alembic merge' first."

exit 1

fiNaming Conventions

Use descriptive messages when creating revisions:

alembic revision --autogenerate -m "add_orders_table"The revision filename includes the message. That makes alembic/versions/ easy to scan, and the alembic history output stays meaningful. Skip generic messages like “migration” or “update”.

Stamping Existing Databases

If you are bolting Alembic onto a database first built with create_all(), use alembic stamp head. This marks the database as being at the latest revision without running any migrations. That is what you want when the schema already matches your models.

Offline SQL Generation

For setups where the migration tool can’t connect to the database, or where a DBA must review raw SQL before it runs:

alembic upgrade head --sql > migration.sqlThis generates the SQL statements without executing them.

Using Alembic with Async SQLAlchemy

If your app uses async SQLAlchemy with asyncpg or another async driver, Alembic ships a dedicated async template. Start with:

alembic init -t async alembicThis builds an env.py that uses async_engine_from_config and connection.run_sync to bridge the async engine with Alembic’s sync migration runner:

from sqlalchemy.ext.asyncio import async_engine_from_config

from sqlalchemy import pool

async def run_async_migrations():

connectable = async_engine_from_config(

config.get_section(config.config_ini_section, {}),

prefix="sqlalchemy.",

poolclass=pool.NullPool,

)

async with connectable.connect() as connection:

await connection.run_sync(do_run_migrations)

await connectable.dispose()Alembic doesn’t ship an async API. The migration ops always run sync. The async template just opens the database connection async, then hands a sync connection object to the migration context. For a wider look at Python services that pair Postgres with async connection pools, the patterns in building database-backed Python tools apply to the app layer above your migrations.

Common Pitfalls and Production Safety

Migrations that pass on an empty dev database can fail badly on a production database with millions of rows. The next sections walk through the traps that hurt most.

Table Locking

ALTER TABLE on Postgres takes an ACCESS EXCLUSIVE lock on the whole table. Adding a column to a table with 50 million rows can lock it for minutes. That blocks all reads and writes. Set a lock timeout to fail fast instead of stalling your whole app:

def upgrade():

op.execute("SET lock_timeout = '5s'")

op.add_column("users", sa.Column("email", sa.Text()))Index Creation

CREATE INDEX also locks the table. On Postgres, use CREATE INDEX CONCURRENTLY to build the index without holding a lock:

op.create_index(

"ix_users_email",

"users",

["email"],

postgresql_concurrently=True,

)Note that concurrent index creation can’t run inside a transaction. You need to set up the migration to run outside a transaction block by setting autocommit=True in the migration context.

Column Drops and Rolling Deployments

During rolling deploys, old app instances still point at the column you just dropped. So deploy a code change that strips all references to the column first. Wait for old instances to drain. Then drop the column in a later migration. Otherwise, the old code will throw errors on the missing column during the rollover window.

Server Defaults vs Python Defaults

server_default=sa.text("'active'") creates a database-level default. It applies to all inserts, including raw SQL and other apps that hit the same database. default="active" only works in SQLAlchemy’s Python layer. For columns that may be set outside your app, always use server_default.

Testing Migrations Properly

Run alembic upgrade head, then alembic downgrade base, then alembic upgrade head again in CI. Use a real Postgres (or MySQL) instance. Testcontainers

or a Docker service in your CI pipeline works well. SQLite isn’t a valid proxy for testing Postgres migrations. The DDL behavior, type system, and locking rules are just too different.

Transactional DDL

Postgres supports transactional DDL. A failed migration rolls back on its own. MySQL does not. A failed migration leaves the schema in a half-done state, and you have to clean it up by hand. Know your engine’s behavior and plan around it.

Rollback Strategies for Destructive Migrations

Not every migration can be cleanly reversed. Dropping a column destroys data. No downgrade() function can bring it back. For these cases:

- Take a database snapshot or logical dump before you apply destructive migrations in production. This is your real safety net, not the

downgrade()function. - Use a two-phase approach. Stop writing to the column first. Move the data elsewhere if needed. Then drop the column in a separate migration after you confirm the data is no longer needed.

- Don’t write one migration that drops five columns. Write five separate migrations. That lets you revert single changes without touching the rest.

- Some downgrades just aren’t possible. It is better to write a

downgrade()function that raisesNotImplementedErrorwith a clear message than to ship a broken downgrade that silently corrupts data.

Alembic vs Other Migration Tools

| Feature | Alembic | Django Migrations | Flyway | golang-migrate |

|---|---|---|---|---|

| Language | Python | Python (Django) | Java/SQL | Go |

| Migration format | Python scripts | Python scripts | SQL files | SQL files |

| Autogeneration | Yes | Yes | No | No |

| ORM integration | SQLAlchemy | Django ORM | None | None |

| Data migrations | Built-in (Python) | Built-in (Python) | SQL only | SQL only |

| Async support | Yes (template) | No | N/A | N/A |

| Multi-database | Manual setup | Built-in routing | Per-config | Per-config |

| Branching/Merging | Built-in | Built-in | Linear only | Linear only |

Pick Alembic if you already use SQLAlchemy. Pick Django Migrations if you are in a Django project. They are built in and tightly wired to the Django ORM. Flyway is a strong option for JVM projects or teams that prefer raw SQL migrations. golang-migrate fills the same role for Go apps.

Integrating Alembic into CI/CD Pipelines

Running migrations as part of your deploy pipeline is the standard play. A typical flow looks like this:

- CI checks migrations by running

alembic upgrade head && alembic downgrade base && alembic upgrade headagainst a throwaway test database, and confirms thatalembic headsreturns exactly one head. - Before you deploy new app code, run

alembic upgrade headagainst the production database. That makes sure the schema is ready before new code tries to use it. - After the deploy, run

alembic currentto confirm the database is at the expected revision.

For container deploys, a common pattern is an init container or a pre-start script that runs alembic upgrade head before the main app starts:

# Dockerfile entrypoint script

#!/bin/bash

alembic upgrade head

exec uvicorn app.main:app --host 0.0.0.0 --port 8000Migrations should stay backward compatible with the app code that is live. Deploy schema changes that add new structures first. Then deploy the code that uses them. Then deploy migrations that remove old structures. This “expand-contract” pattern stops downtime during rolling deploys.

Botmonster Tech

Botmonster Tech