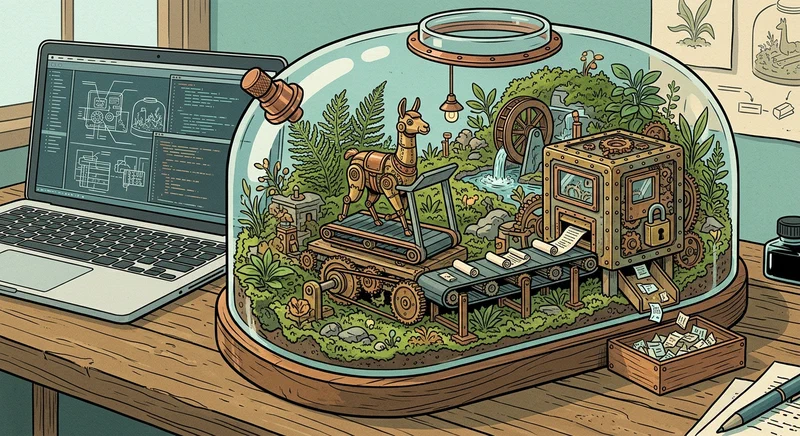

How to Build a Local Code Interpreter Agent with Ollama and Docker

You can build a fully local, sandboxed code interpreter agent by pairing Ollama (running a reasoning model like Llama 4 Scout or DeepSeek R1) with a Docker container that executes the generated Python code. The agent sends a user prompt to the local LLM, which produces Python code; that code gets injected into a locked-down Docker container with no network access and strict resource limits; the stdout/stderr output is captured and fed back to the LLM for reflection and iteration. The entire loop runs on your machine with zero cloud API calls, giving you a private, free, ChatGPT Code Interpreter-style experience.

Below is the full architecture: Ollama model selection, Docker sandbox hardening, and the Python orchestrator script that ties everything together.

Why Run a Code Interpreter Locally Instead of Using ChatGPT

ChatGPT’s Code Interpreter (now called Advanced Data Analysis) is convenient, but it comes with real constraints that matter for serious work. The execution environment times out after about 10 minutes. The filesystem does not persist between sessions. You cannot access a GPU. Many pip packages are restricted or unavailable. And every byte of data you upload leaves your machine and lands on OpenAI’s servers.

Running locally flips all of those limitations. You get full control over the runtime environment, unlimited iteration cycles, and zero data leakage. For anyone working with proprietary codebases, internal APIs, or PII-laden datasets, that last point alone justifies the setup effort.

The cost math is straightforward too. Ollama running Llama 4 Scout 17B at Q4_K_M quantization costs nothing per query. ChatGPT Plus runs $20/month, and the GPT-4o API charges roughly $0.03 per query. If you are doing hundreds of code interpretation requests per month, local inference pays for itself quickly - especially if you already own a decent GPU.

On the capability side, a local setup lets you mount any directory read-only into the sandbox, install arbitrary Python packages, and use GPU-accelerated libraries like CuPy or PyTorch inside the container. You are not limited to whatever subset of packages OpenAI decided to pre-install.

Performance-wise, on an RTX 5070 Ti with 16 GB VRAM you can expect around 30 tokens per second from a 17B model. That makes the feedback loop feel interactive rather than sluggish. The model generates a code block in a few seconds, execution takes a fraction of a second for most tasks, and the next iteration starts almost immediately.

The privacy angle matters most when you are analyzing proprietary datasets that cannot leave your network, running code against internal REST APIs, processing CSV files containing customer PII, or iterating on code that touches trade secrets. With a local agent, none of that data goes anywhere.

Architecture Overview - The Agent Loop

Before writing any code, it helps to understand the three-component architecture and how data flows through it.

The three components are:

- A Python orchestrator script running on the host, responsible for managing the conversation loop and coordinating between the other two components

- Ollama serving the LLM via its REST API on localhost:11434, handling all code generation and reasoning

- A disposable Docker container for code execution, created fresh for each run and destroyed immediately after

The agent loop works like this: the user types a prompt. The orchestrator builds a system prompt plus the user message and sends a POST request to http://localhost:11434/api/chat. It parses the code block from the response. It writes that code to a temporary file and runs it inside a Docker container via docker run. It captures stdout and stderr. It appends the execution result to the conversation history. Then it repeats until the LLM signals completion or the maximum iteration count is reached.

For the message format, use Ollama’s chat completion API with a payload like:

```json
{
  "model": "deepseek-r1:14b",
  "messages": [
    {"role": "system", "content": "You are a code interpreter..."},
    {"role": "user", "content": "Analyze this CSV data..."}
  ],
  "stream": false
}
```

Setting stream to false keeps things simple - the entire response comes back in one JSON blob. If you want real-time token display for a more interactive feel, set it to true and iterate over the streaming response chunks.
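If you do switch to streaming, Ollama sends the reply as newline-delimited JSON objects, each carrying a fragment of the message plus a done flag. The sketch below assumes that chunk shape. `collect_stream` is a hypothetical helper name, not part of any library, and it accepts any iterable of lines so the parsing can be checked with canned data before wiring it to a live response.

```python
import json
from typing import Iterable


def collect_stream(lines: Iterable[str], echo: bool = False) -> str:
    """Join the content fragments from an Ollama-style NDJSON chat stream."""
    parts = []
    for line in lines:
        if not line.strip():
            continue  # ignore blank lines between chunks
        chunk = json.loads(line)
        fragment = chunk.get("message", {}).get("content", "")
        if echo:
            print(fragment, end="", flush=True)  # live token display
        parts.append(fragment)
        if chunk.get("done"):
            break  # the final chunk is marked done
    return "".join(parts)


# Canned chunks standing in for a real streaming response (assumed shape).
sample = [
    '{"message": {"role": "assistant", "content": "print("}, "done": false}',
    '{"message": {"role": "assistant", "content": "42)"}, "done": false}',
    '{"message": {"role": "assistant", "content": ""}, "done": true}',
]
full_reply = collect_stream(sample)
```

Against a live server, one option is to open the request with httpx.stream("POST", ...) and pass the response's iter_lines() to this helper, with echo=True for the interactive display.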

Set a maximum iteration cap of 5 to 8 rounds. This prevents infinite loops where the model keeps generating code that fails in the same way. Include the current iteration count in the system prompt so the model knows it needs to converge toward a final answer rather than endlessly exploring.

For state management, maintain a conversation list in memory. Each round appends two entries: the assistant’s response containing the code block, and a user message with a [EXECUTION RESULT] prefix containing the stdout/stderr output. This gives the model full context about what it tried and what happened.

Error handling matters here. If Docker returns a non-zero exit code, include both stdout and the full traceback in the feedback message. The LLM needs the complete error information to debug effectively. A truncated traceback leads to the model guessing at fixes rather than addressing the actual problem.
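The two bookkeeping rules above (two history entries per round, full stderr in the feedback) can be sketched as a single helper. `record_round` is a hypothetical name used only for illustration, and the message layout mirrors the [EXECUTION RESULT] convention described above.

```python
def record_round(history: list[dict], reply: str,
                 stdout: str, stderr: str, returncode: int) -> None:
    """Append one agent round: the model's reply, then the execution result."""
    history.append({"role": "assistant", "content": reply})
    feedback = f"[EXECUTION RESULT]\nExit code: {returncode}"
    if stdout:
        feedback += f"\n\nSTDOUT:\n{stdout}"
    if stderr:
        # Pass the traceback through untruncated so the model sees the real error.
        feedback += f"\n\nSTDERR:\n{stderr}"
    history.append({"role": "user", "content": feedback})


history = [{"role": "user", "content": "Compute 1/0"}]
record_round(
    history,
    reply="```python\nprint(1/0)\n```",
    stdout="",
    stderr="ZeroDivisionError: division by zero",
    returncode=1,
)
```

After this call the history holds three entries: the original prompt, the assistant's code, and a user-role message carrying the exit code and the error text.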

Setting Up Ollama and Choosing the Right Model

Not all local models write equally good executable code. Model selection has a large impact on how often the agent loop succeeds on the first try versus needing multiple debugging iterations.

Install Ollama on Linux with a single command:

```shell
curl -fsSL https://ollama.com/install.sh | sh
```

Verify the installation with ollama --version - you want v0.6.x or later for the best model compatibility and performance.

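Here are some recommended models for code interpretation. Sizes and VRAM figures are approximate, so check each model’s page in the Ollama library for the exact tag and download size before pulling: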

| Model | Size | VRAM Needed | Strength |
|---|---|---|---|
| deepseek-r1:14b | ~8 GB | ~10 GB | Best reasoning and debugging |
| llama4-scout:17b | ~10 GB | ~12 GB | Good balance of speed and quality |
| qwen3:14b | ~8 GB | ~10 GB | Strong at data analysis tasks |
| codellama:34b | ~20 GB | ~22 GB | Best raw code quality (needs 24+ GB VRAM) |

Pull your chosen model:

```shell
ollama pull deepseek-r1:14b
```

Expect about 8 GB of download for the Q4_K_M quantized version.

To configure the model specifically for code tasks, create a Modelfile:

```
FROM deepseek-r1:14b
PARAMETER temperature 0.2
PARAMETER num_ctx 8192
SYSTEM """You are a code interpreter agent. When asked to solve a problem, write Python code wrapped in ```python``` blocks. Use print() for all output you want to see. Write clean, executable code with no placeholders."""
```

Create the custom model with:

```shell
ollama create code-interpreter -f Modelfile
```

The low temperature (0.2) reduces randomness in code generation, which is what you want: predictable, correct code rather than varied but potentially broken alternatives. It does not make output fully deterministic, but it narrows the spread considerably. The 8192-token context window gives enough room for multi-turn conversations with execution results.

A critical detail about VRAM: if your model exceeds available VRAM, Ollama silently offloads layers to CPU. Performance tanks from 30 tokens/second to maybe 3-5 tokens/second with no warning. Monitor with nvidia-smi while running your first few queries to make sure everything stays on the GPU.
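To make that check repeatable instead of eyeballing a terminal, a small script can query nvidia-smi and parse the numbers. This is a minimal sketch that assumes an NVIDIA driver is installed; the function names are hypothetical, and the parser is kept separate from the subprocess call so it can be exercised without a GPU.

```python
import subprocess


def parse_gpu_memory(csv_text: str) -> list[tuple[int, int]]:
    """Parse 'used, total' MiB pairs, one line per GPU."""
    pairs = []
    for line in csv_text.strip().splitlines():
        used, total = (int(field) for field in line.split(","))
        pairs.append((used, total))
    return pairs


def query_gpu_memory() -> list[tuple[int, int]]:
    """Ask nvidia-smi for current memory use (requires an NVIDIA driver)."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=memory.used,memory.total",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_gpu_memory(out)


# Canned output, so the parsing logic can be checked on any machine.
readings = parse_gpu_memory("9876, 16303\n")
```

If used memory sits near the total while generation is slow, some layers have probably spilled to the CPU. Running ollama ps while the model is loaded is another quick check, since it reports how the model is split between CPU and GPU.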

Test the model standalone before building the full agent:

```shell
ollama run code-interpreter "Write a Python script that reads a CSV from stdin and prints summary statistics"
```

Verify it produces clean, immediately runnable code. If the model wraps code in markdown formatting but also adds conversational text mixed in with the code blocks, you may need to adjust the system prompt to be more explicit about output format.

Building the Sandboxed Docker Execution Environment

The Docker container is your security boundary. LLM-generated code is inherently untrusted - the model might produce code that tries to read /etc/passwd, make network requests, or consume all available memory. A properly configured sandbox prevents all of that.

Start with a minimal Dockerfile:

```dockerfile
FROM python:3.12-slim

RUN pip install --no-cache-dir \
    pandas numpy matplotlib scipy requests \
    scikit-learn seaborn

RUN useradd -m -u 1000 sandbox
USER sandbox
WORKDIR /home/sandbox
```

The --no-cache-dir flag keeps pip’s download cache out of the image, which trims its size even with the data science stack; run docker images after the build to see the actual figure. The non-root sandbox user means that even if the code tries something malicious, it has minimal system privileges.

Build the image:

```shell
docker build -t code-sandbox:latest .
```

The security flags for docker run are where the real hardening happens:

```shell
docker run --rm \
  --network none \
  --read-only \
  --tmpfs /tmp:size=100m \
  --memory 512m \
  --cpus 1.0 \
  --pids-limit 64 \
  --security-opt no-new-privileges \
  -v /tmp/agent_code.py:/code/script.py:ro \
  code-sandbox:latest \
  python /code/script.py
```

Breaking down each flag:

- `--network none` removes all network access, so the code cannot phone home or exfiltrate data

- `--read-only` makes the root filesystem immutable, preventing writes to system directories

- `--tmpfs /tmp:size=100m` provides a small writable temp directory capped at 100 MB

- `--memory 512m --cpus 1.0` limits resource consumption so a runaway script cannot starve the host

- `--pids-limit 64` prevents fork bombs

- `--security-opt no-new-privileges` blocks privilege escalation

For code injection, the orchestrator writes the generated Python to a temporary file on the host, then mounts it read-only at /code/script.py. This avoids any shell injection issues that would come from passing code as a command-line argument.

To handle file I/O for data analysis tasks, mount directories separately:

```shell
-v ./workspace:/data:ro \
-v ./output:/output
```

The workspace directory is read-only - the code can read input files but cannot modify them. The output directory is writable, so the code can save results, plots, and generated files there.
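When the orchestrator builds these arguments, resolving the host paths to absolute form is the safer choice, since some Docker versions reject or misinterpret relative bind-mount sources. The following is a minimal sketch; `data_mount_args` and its default directory names are assumptions for illustration.

```python
from pathlib import Path


def data_mount_args(workspace: str = "workspace",
                    output: str = "output") -> list[str]:
    """Build -v arguments with absolute host paths for the two data mounts."""
    ws = Path(workspace).resolve()
    out = Path(output).resolve()
    # Create the output directory up front; otherwise the Docker daemon may
    # create it itself, typically owned by root and not writable by the sandbox user.
    out.mkdir(parents=True, exist_ok=True)
    return [
        "-v", f"{ws}:/data:ro",   # inputs: read-only inside the container
        "-v", f"{out}:/output",   # results: writable
    ]
```

The returned list can be spliced into the docker run argument list just before the image name.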

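For timeout enforcement, wrap the script in a process-level timeout. Docker’s --stop-timeout flag only sets how long Docker waits between the stop signal and a forced kill when the container is being stopped; it does not cap run time on its own:

```shell
docker run --rm --stop-timeout 30 \
  code-sandbox:latest \
  timeout 30 python /code/script.py
```

If the script exceeds 30 seconds, the timeout command kills it and exits with status 124. As a backstop, give the orchestrator’s own docker run call a slightly longer timeout so a hung container cannot block the loop. Capture either case as a specific error message like [TIMEOUT] Script exceeded 30 second execution limit so the LLM knows to optimize its approach.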

Putting It All Together - The Orchestrator Script

The orchestrator is a single Python script of roughly 150 lines that ties Ollama and Docker together. No heavy frameworks needed.

Core dependencies are minimal:

```python
import subprocess
import json
import re
import tempfile

import httpx
```

httpx provides clean HTTP client functionality for talking to Ollama’s API. You could use requests too, but httpx handles async if you want to add streaming later.

The function that calls Ollama:

```python
OLLAMA_URL = "http://localhost:11434/api/chat"


def call_ollama(messages: list, model: str = "code-interpreter") -> str:
    # Non-streaming request: the whole reply arrives as one JSON object.
    payload = {
        "model": model,
        "messages": messages,
        "stream": False
    }
    response = httpx.post(OLLAMA_URL, json=payload, timeout=120.0)
    response.raise_for_status()
    return response.json()["message"]["content"]


def extract_code(text: str) -> str | None:
    # Return the body of the first ```python fenced block, or None if absent.
    match = re.search(r"```python\n(.*?)```", text, re.DOTALL)
    return match.group(1).strip() if match else None
```

The function that executes code in Docker:

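```python
import os  # used below for file permissions, absolute paths, and cleanup


def execute_in_docker(code: str, timeout: int = 30) -> tuple[str, str, int]:
    # Write the generated code to a host temp file; it is mounted read-only below.
    with tempfile.NamedTemporaryFile(
        mode="w", suffix=".py", delete=False
    ) as f:
        f.write(code)
        code_path = f.name
    # Temp files are created with mode 0600; relax it so the container's
    # sandbox user can read the script even when host and container UIDs differ.
    os.chmod(code_path, 0o644)

    try:
        result = subprocess.run(
            [
                "docker", "run", "--rm",
                "--network", "none",
                "--read-only",
                "--tmpfs", "/tmp:size=100m",
                "--memory", "512m",
                "--cpus", "1.0",
                "--pids-limit", "64",
                "--security-opt", "no-new-privileges",
                "-v", f"{code_path}:/code/script.py:ro",
                # Data mounts from the sandbox section; both directories must
                # already exist in the current working directory.
                "-v", f"{os.path.abspath('workspace')}:/data:ro",
                "-v", f"{os.path.abspath('output')}:/output",
                "code-sandbox:latest",
                "timeout", str(timeout), "python", "/code/script.py"
            ],
            capture_output=True,
            text=True,
            timeout=timeout + 10
        )
        stderr = result.stderr
        # coreutils `timeout` exits with 124 when it had to kill the script.
        if result.returncode == 124:
            stderr += f"\n[TIMEOUT] Script exceeded {timeout} second execution limit"
        return result.stdout, stderr, result.returncode
    except subprocess.TimeoutExpired:
        return "", "[TIMEOUT] Script exceeded execution limit", 1
    finally:
        os.unlink(code_path)  # remove the temp script from the host
```

The main loop brings everything together: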

```python
MAX_ITERATIONS = 6

SYSTEM_PROMPT = """You are a code interpreter agent. You solve problems by writing Python code.

Rules:
- Write exactly ONE ```python``` code block per response
- Use print() for all output you want to see
- When you have the final answer, write it inside <FINAL_ANSWER>...</FINAL_ANSWER> tags
- You have {remaining} iterations remaining - converge toward a solution
"""


def run_agent(user_prompt: str):
    messages = [
        {"role": "system", "content": SYSTEM_PROMPT.format(
            remaining=MAX_ITERATIONS)},
        {"role": "user", "content": user_prompt}
    ]

    for i in range(MAX_ITERATIONS):
        response = call_ollama(messages)
        messages.append({"role": "assistant", "content": response})

        # Stop only on a complete tag pair; an unclosed tag falls through
        # to code extraction instead of raising on a failed match.
        answer = re.search(
            r"<FINAL_ANSWER>(.*?)</FINAL_ANSWER>",
            response, re.DOTALL
        )
        if answer:
            print(f"\nFinal Answer:\n{answer.group(1).strip()}")
            return

        code = extract_code(response)
        if not code:
            messages.append({
                "role": "user",
                "content": "[ERROR] No code block found. Write a "
                           "```python``` block."
            })
            continue

        print(f"\n--- Iteration {i+1}/{MAX_ITERATIONS} ---")
        print(f"Executing code ({len(code)} chars)...")

        stdout, stderr, returncode = execute_in_docker(code)

        exec_result = f"[EXECUTION RESULT]\nExit code: {returncode}"
        if stdout:
            exec_result += f"\n\nSTDOUT:\n{stdout}"
        if stderr:
            exec_result += f"\n\nSTDERR:\n{stderr}"

        remaining = MAX_ITERATIONS - i - 1
        exec_result += (
            f"\n\nYou have {remaining} iterations remaining."
        )
        messages.append({"role": "user", "content": exec_result})

        # Update system prompt with remaining count
        messages[0]["content"] = SYSTEM_PROMPT.format(
            remaining=remaining)

    print("\nMax iterations reached without final answer.")
```

To run the agent:

```python
if __name__ == "__main__":
    import sys

    prompt = " ".join(sys.argv[1:]) or input("Enter your prompt: ")
    run_agent(prompt)
```

Save this as agent.py and run it:

```shell
python agent.py "Read the file /data/sales.csv and create a bar chart of monthly revenue saved to /output/revenue.png"
```

The agent will generate pandas code to read the CSV, create a matplotlib chart, save it to the output directory, and report back with the results.

Extending the Agent

Once the basic loop works, there are a few directions worth exploring.

Matplotlib plot support mostly works out of the box with the output directory mount. Add a note to the system prompt telling the model to save figures to /output/ and use matplotlib.use('Agg') since there is no display server in the container.

A --verbose flag that prints the full conversation history after each iteration helps a lot when debugging. When the model gets stuck in a loop producing the same broken code, seeing the full message chain usually reveals why.

For data input, place files in the workspace/ directory before running the agent. The model can read anything mounted at /data/ inside the container - CSV files, JSON dumps, text logs, whatever Python can parse.

The Dockerfile can be modified to include whatever libraries your workflow needs. If you work with geospatial data, add geopandas and shapely. If you do NLP tasks, add nltk or spacy. Rebuild the image and the agent picks up the new packages on the next run.

What makes this setup genuinely useful compared to single-shot code generation is the iteration loop. A 14B model that fails on the first attempt but succeeds on the third is still more practical than a cloud API you cannot afford to call 500 times a month. And all of your data stays on your hardware, processed locally, with nothing sent to an external server.