OpenClaw on Your $20 Claude Sub After Anthropic Banned It

OpenClaw’s bundled claude-cli backend is officially sanctioned by Anthropic, while OAuth-token extraction tools stay blocked. The carve-out works because shelling out to claude -p preserves prompt caching, so a $20 Pro or $200 Max sub routes through OpenClaw without four-figure API bills. The catch: a roughly 5-hour cap that cron jobs exhaust in minutes.

Key Takeaways

- OpenClaw’s CLI backend is allowed by Anthropic; the older OAuth-token tools are not.

- The reason it is allowed: it preserves Anthropic’s prompt caching exactly like Claude Code does.

- Pro and Max plans cap usage near 5 hours per window, so cron jobs need a cheaper backup.

- Use Claude for planning and chat, route automated tasks to GLM, MiniMax, or Codex.

- Setup is three commands and one config edit on any Mac or Linux host running Claude Code.

What Changed in Anthropic’s Third-Party Tool Policy?

Most users found out about the policy change when their Anthropic bill jumped, not from a press release. Heavy agentic workflows that previously billed against a flat Pro or Max subscription suddenly tracked toward $1,500 a month on Opus 4.6 once Anthropic forced third-party orchestrators onto the pay-per-token API. Anthropic’s actual concern was narrower than the community assumed. The target was a specific class of tool that extracts the OAuth token from a local Claude Code install and calls the Anthropic API directly under that identity. That pattern bypasses the prompt caching Anthropic gets when Claude Code itself runs the request, so the same load reaches the API tier uncached.

The opposite path took weeks to surface. Shelling out to the local claude binary is fine, because Claude Code itself handles the auth and caching the way Anthropic wants it. The PSA that finally made this clear came from the r/openclaw “you can still use your $20/mo sub” thread (194 comments). The OP framed the cost gap directly:

If you run heavy agentic workflows like I do, you probably watched your monthly bill skyrocket, I was on track to hit over $1,500 a month just running Opus 4.6.

u/mehdiweb (OP, r/openclaw PSA thread)

Comments in the thread point to Boris Cherny’s X threads as the Anthropic-side confirmation and to OpenClaw GitHub issue #66874 as the implementation log. The wording matters: “sanctioned” here means OpenClaw treats claude -p as allowed unless Anthropic publishes a new policy. That is a soft guarantee from a docs page, not a written contract or a section in Anthropic’s terms of service. If you need a lawyer-grade promise, this workaround is not it. Migration-curious readers should also weigh OpenClaw vs Hermes on the memory question before committing to either gateway.

How OpenClaw’s claude-cli Backend Works Under the Hood

The reason the workaround is allowed is that nothing about the request looks different from interactive Claude Code use. The detail lives in the OpenClaw CLI backends documentation:

The bundled Anthropic claude-cli backend is supported again. Anthropic staff told us OpenClaw-style Claude CLI usage is allowed again, so OpenClaw treats claude -p usage as sanctioned for this integration unless Anthropic publishes a new policy.

OpenClaw (per the same docs page)

Mechanically, OpenClaw spawns the claude binary with a session id, keeps that Claude stdio process alive for the life of the OpenClaw session, and streams follow-up turns over stream-json stdin. Output gets parsed (JSON or plain text) and the session id persists per backend, so subsequent turns in the same chat reuse the long-lived subprocess. That is why the first message in a session feels slow and turn 2 onward is near-instant: the stdio handshake happens once.
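The session-reuse behavior described above can be illustrated with a minimal sketch. It is not OpenClaw's implementation: a small Python echo child stands in for the claude binary, and the JSON message shape, the SessionPool class, and its method names are all assumptions made for illustration. What it shows is the pattern itself: spawn once per session id, then write each follow-up turn to the same child's stdin.

```python
import json
import subprocess
import sys

# Stand-in for the real `claude` binary: a child that answers one JSON line per
# turn. The message shape is hypothetical, not Claude Code's stream-json schema.
ECHO_CHILD = (
    "import sys, json\n"
    "for line in sys.stdin:\n"
    "    turn = json.loads(line)\n"
    "    print(json.dumps({'reply': turn['text'].upper()}), flush=True)\n"
)


class SessionPool:
    """Keeps one long-lived child process per session id (illustrative only)."""

    def __init__(self, argv):
        self.argv = argv
        self.procs = {}
        self.spawn_count = 0

    def _get(self, session_id):
        proc = self.procs.get(session_id)
        if proc is None or proc.poll() is not None:
            # First turn, or the child died: pay the spawn cost once.
            proc = subprocess.Popen(
                self.argv,
                stdin=subprocess.PIPE,
                stdout=subprocess.PIPE,
                text=True,
                bufsize=1,
            )
            self.procs[session_id] = proc
            self.spawn_count += 1
        return proc

    def send(self, session_id, text):
        """Write one turn to the child's stdin and read one reply line back."""
        proc = self._get(session_id)
        proc.stdin.write(json.dumps({"text": text}) + "\n")
        proc.stdin.flush()
        return json.loads(proc.stdout.readline())

    def close(self):
        """Close stdin so each child sees EOF and exits, then reap it."""
        for proc in self.procs.values():
            proc.stdin.close()
            proc.wait()
            proc.stdout.close()
        self.procs.clear()


pool = SessionPool([sys.executable, "-u", "-c", ECHO_CHILD])
first = pool.send("chat-1", "hello")
second = pool.send("chat-1", "again")
spawned = pool.spawn_count
pool.close()
```

Both turns go through a single child process, which is why only the first turn in a session pays the startup cost.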

Skills delegation is the other piece worth knowing about. OpenClaw passes its skills catalog two ways at once: a compact summary appended to the system prompt, and a temporary Claude Code plugin via --plugin-dir that contains only the skills eligible for that agent and session. Claude Code’s native skill resolver sees the same filtered set OpenClaw would otherwise advertise, so the user-facing behavior is consistent whether you trigger a skill from inside Claude Code or via OpenClaw routing.
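A rough sketch of that two-channel delegation follows. Only the --plugin-dir flag comes from the text above; the Skill dataclass, its agents field, the helper names, and the on-disk layout (one SKILL.md per skill under a skills directory) are assumptions for illustration and may not match what OpenClaw actually writes.

```python
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class Skill:
    name: str
    description: str
    agents: frozenset  # agent ids allowed to use this skill (hypothetical field)


def eligible(skills, agent_id):
    """Return only the skills this agent may see."""
    return [s for s in skills if agent_id in s.agents]


def summary(skills):
    """Compact catalog text intended for the end of the system prompt."""
    return "\n".join(f"- {s.name}: {s.description}" for s in skills)


def write_plugin_dir(skills, root):
    """Write one SKILL.md per skill under root (assumed layout); return CLI args."""
    for s in skills:
        skill_dir = Path(root) / "skills" / s.name
        skill_dir.mkdir(parents=True, exist_ok=True)
        (skill_dir / "SKILL.md").write_text(f"# {s.name}\n\n{s.description}\n")
    return ["--plugin-dir", str(root)]


catalog = [
    Skill("deploy", "Ship the current branch", frozenset({"ops"})),
    Skill("review", "Review a diff", frozenset({"ops", "dev"})),
]
visible = eligible(catalog, "dev")
with tempfile.TemporaryDirectory() as tmp:
    argv = write_plugin_dir(visible, tmp)
    written = sorted(p.parent.name for p in Path(tmp).glob("skills/*/SKILL.md"))
prompt_suffix = summary(visible)
```

The point of filtering before writing is that both channels, the prompt summary and the plugin directory, are built from the same list, so they cannot disagree about which skills are available.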

The /think mapping is the last surface detail. OpenClaw’s minimal and low levels map to Claude’s --effort low, adaptive and medium map to medium, and high, xhigh, and max map directly. Any other CLI backend you wire in (Codex, Gemini CLI) needs its owning plugin to declare an equivalent argv mapper, otherwise the level just falls through.
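The level mapping in the paragraph above fits in a small lookup table. This is a sketch, not OpenClaw's code: the function name is hypothetical, and raising on an unknown level is a design choice made here for clarity rather than the fall-through behavior described for other backends.

```python
# /think level -> value passed to claude's --effort flag, as listed above.
THINK_TO_EFFORT = {
    "minimal": "low",
    "low": "low",
    "adaptive": "medium",
    "medium": "medium",
    "high": "high",
    "xhigh": "xhigh",
    "max": "max",
}


def effort_args(think_level):
    """Translate an OpenClaw /think level into claude CLI arguments."""
    key = think_level.strip().lower()
    if key not in THINK_TO_EFFORT:
        # Fail loudly here instead of silently dropping the level.
        raise ValueError(f"unknown /think level: {think_level!r}")
    return ["--effort", THINK_TO_EFFORT[key]]
```

For example, effort_args("adaptive") yields the arguments for medium effort, while an unrecognized level raises instead of being ignored.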

How To Route OpenClaw Through Your Claude Pro Subscription

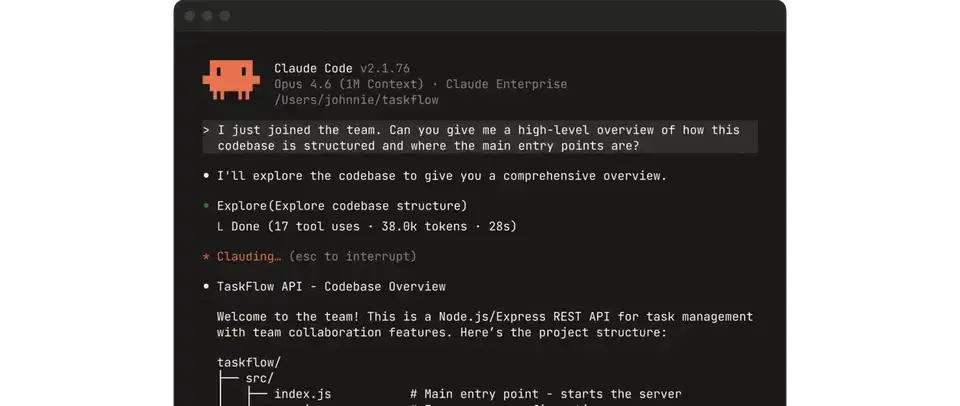

The full setup is three commands and a config sanity check. The prerequisite is that Claude Code is already installed and logged in on the same host where OpenClaw will run, because the bundled backend depends on claude being on $PATH and on a valid Claude Code identity.


1. Confirm Claude Code is logged in. Run claude auth status --text on the host where OpenClaw will run. The command must report a logged-in identity. If it doesn’t, log in with Claude Code first; the bundled claude-cli backend has no fallback path when there is no active Claude Code session.

2. Install OpenClaw if it is not on the host. Run curl -fsSL https://openclaw.ai/install.sh | bash to fetch and install the latest OpenClaw release on macOS or Linux. The installer is the canonical path documented on the openclaw.ai homepage.

3. Set the Anthropic CLI as the default backend. Run openclaw models auth login --provider anthropic --method cli --set-default. This points OpenClaw at the local claude binary instead of the Anthropic API, and saves the choice as the default for future sessions.

4. Verify the primary model. Check that model.primary is set to anthropic/claude-opus-4-6 (or your preferred Claude model) with codex-cli/gpt-5.5 as a fallback. The default block from the docs reads model: { primary: "anthropic/claude-opus-4-6", fallbacks: ["codex-cli/gpt-5.5"] }.

5. Smoke-test in your linked chat app. Send a message and confirm that the log line shows a claude CLI session id, not an API call signature. If the log line shows provider: anthropic-api, the default did not stick and step 3 needs to be repeated.

6. Add a cheap fallback for cron jobs. Register a second backend under agents.defaults.cliBackends so scheduled tasks have a backend that does not hit the Pro/Max 5-hour cap. The OpenClaw plugin inventory at docs.openclaw.ai/plugins/plugin-inventory lists the supported options.

The first message you send after setup round-trips through a freshly spawned claude process, which costs a few seconds while the stdio handshake completes. Every subsequent message in the same session reuses the same subprocess, so latency drops to roughly the network round-trip plus Anthropic’s own response time. That latency drop is how you know the session id is being reused instead of a fresh API call going out each turn.
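The model.primary check from the setup steps can be automated with a few lines. The sketch below assumes the config has already been loaded into a Python dict with the same model block shape quoted from the docs; the function name and the specific rules (an anthropic/ primary, at least one non-Anthropic fallback) are illustrative choices, not an OpenClaw API.

```python
def check_model_config(config):
    """Return a list of problems with the model block; empty means it looks right."""
    problems = []
    model = config.get("model", {})
    primary = model.get("primary", "")
    if not primary.startswith("anthropic/"):
        problems.append(f"primary is {primary!r}, expected an anthropic/ model")
    fallbacks = model.get("fallbacks", [])
    if not fallbacks:
        problems.append("no fallbacks configured")
    elif any(f.startswith("anthropic/") for f in fallbacks):
        # A fallback on the same provider would not help once the cap is hit.
        problems.append("a fallback shares the Anthropic quota")
    return problems


# The default block quoted from the docs, expressed as a dict.
docs_default = {
    "model": {
        "primary": "anthropic/claude-opus-4-6",
        "fallbacks": ["codex-cli/gpt-5.5"],
    }
}
issues = check_model_config(docs_default)
```

An empty list means the block matches the documented default shape; anything else is worth fixing before the smoke test.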

Why Cron Jobs Still Need a Cheaper Backend

The 5-hour Pro and Max usage cap is the rate limit the workaround does not solve. The PSA thread spelled it out:

Your Pro/Max plan is not unlimited. It has a finite usage cap (usually 5 hours), and if you route all your automated background cron jobs through it, you will hit that cap in 10 minutes and your agent will die.

u/mehdiweb (same OP, on the 5-hour cap)

The hybrid stack the community converged on splits traffic by intent. Interactive planning, debugging, and chat sessions that benefit from Opus 4.7 quality stay on the CLI backend. Everything cron-driven, scheduled, or batch routes to a cheaper plan with its own quota. If your scheduled workload is light enough that a single Anthropic identity covers it, Claude Code’s own scheduled tasks and /loop can absorb cron-style jobs without an extra backend. The most-cited options on r/openclaw are:

| Backend | Plan | Approx. monthly cost | Best fit |
|---|---|---|---|
| Anthropic via claude-cli | Pro / Max | $20 / $200 | Interactive planning and chat |
| Z.ai GLM Coding Plan Lite | Lite | $18 | Cron, batch refactors |
| Ollama-cloud GLM 5.1 | Standard | $20 | Cron, code review |
| OpenAI Codex (in ChatGPT Business) | Bundled | $0 extra | Sandboxed cron tasks |
| MiniMax highspeed | Pro | $40 | High-throughput agents |

Z.ai’s plan is the closest near-Claude option. The GLM Coding Plan Lite is priced at $18 per month and exposes GLM-5.1, GLM-5-Turbo, GLM-4.7, and GLM-4.5-Air through an Anthropic-compatible API. GLM-5.1 scored 58.4 on SWE-Bench Pro and reached 94.6% of Claude Opus 4.6’s score on the internal Claude Code eval, which is close enough that cron-driven refactors and code review rarely notice the swap.
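The split-by-intent idea can be sketched as a small routing function. Everything named here is a placeholder: the task kinds, the Route type, and the backend ids such as glm-coding-lite are illustrative, not identifiers OpenClaw defines.

```python
from dataclasses import dataclass

# Task kinds are illustrative labels, not OpenClaw terminology.
INTERACTIVE = {"chat", "planning", "debugging"}
AUTOMATED = {"cron", "scheduled", "batch"}


@dataclass(frozen=True)
class Route:
    backend: str
    reason: str


def route(task_kind, cron_backend="glm-coding-lite"):
    """Pick a backend by intent. Backend ids are placeholders."""
    if task_kind in INTERACTIVE:
        return Route("claude-cli", "interactive work stays on the subscription")
    if task_kind in AUTOMATED:
        return Route(cron_backend, "automated work uses its own quota")
    # Refuse to guess: an unclassified task could silently drain the cap.
    raise ValueError(f"unclassified task kind: {task_kind!r}")
```

Raising on an unknown task kind is deliberate in this sketch, since defaulting unknown work to the subscription is how a cap gets exhausted without anyone noticing.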

One anti-pattern is worth calling out by name: letting OpenClaw self-repair its broken Docker image. The PSA thread documented users burning through $50 of tokens watching the agent recursively break its own install. OpenClaw founder Peter Steinberger acknowledged the broken Docker image and the broader regression in the “rough week” post-mortem:

The trouble started around 2026.4.24. By 2026.4.29 it was obvious enough that nobody could pretend this was just a few weird installs. Gateways got slower. Some installs got stuck in plugin dependency repair loops.

Peter Steinberger (OpenClaw founder, post-mortem)

The newer 2026.5.5 and 2026.5.7-beta.1 releases harden the gateway container by dropping NET_RAW and NET_ADMIN capabilities and adding no-new-privileges in the bundled docker-compose.yml, and they ship pre-built images at the GitHub Container Registry. If you are pinning a Docker tag, pin the explicit version (2026.5.5) rather than latest, and verify any :latest rollout by hand before letting an agent loose on it.
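The pinning advice can be enforced with a small check before a deploy. This is a sketch with simplified image-reference parsing; the image name in the usage note is a made-up placeholder, since the actual registry path is not given here.

```python
def image_tag(ref):
    """Return the tag of an image reference, or None if it has no tag."""
    name = ref.split("@", 1)[0]      # drop any digest suffix
    last = name.rsplit("/", 1)[-1]   # a ':' before the last '/' is a registry port
    if ":" not in last:
        return None
    return last.rsplit(":", 1)[1]


def is_pinned(ref):
    """True when the reference carries a digest or an explicit non-latest tag."""
    if "@sha256:" in ref:
        return True
    tag = image_tag(ref)
    return tag is not None and tag != "latest"
```

With a hypothetical reference such as ghcr.io/example/openclaw:2026.5.5 the check passes, while the same name with :latest or with no tag at all fails, because an untagged reference resolves to latest.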

When NOT To Use This

- You don’t have Claude Code installed on the same host where OpenClaw runs. The bundled backend depends on the local claude binary and a logged-in Claude Code identity; without both, the login command fails immediately.

- Your workload is mostly automated cron jobs that will exhaust the 5-hour Pro or Max cap before doing useful work. Pay-per-token API or an $18 GLM plan is cheaper and survives quota resets.

- You require a written, durable Anthropic policy guarantee. The current sanction is communicated through OpenClaw’s docs page and Anthropic staff statements, not a published policy section. Compliance, audit, and regulated industries should treat this as too soft to commit to.

- You are already on a custom enterprise Anthropic plan with negotiated rate limits. The API path is usually cheaper than the CLI roundabout once volume discounts kick in.

- You run OpenClaw on a multi-tenant host where a single Claude Code identity would be shared across users. The CLI backend has no concept of per-user attribution; everyone in the shared install bills against the same claude auth session.

FAQ

Is using OpenClaw with my Claude Pro subscription against Anthropic's terms?

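Not if you use the bundled claude-cli backend. Per OpenClaw’s own docs, Anthropic staff confirmed that shelling out to the local claude -p binary is sanctioned because it preserves prompt caching. OAuth-token extraction tools, by contrast, stay blocked.

Will the CLI backend skip Anthropic's prompt caching?

No. The backend shells out to claude -p, so Claude Code itself handles caching the same way it does for interactive use.

Why do my cron jobs still get rate-limited if my Pro/Max plan supports it?

Because the plan is not unlimited. Pro and Max have a finite usage cap, and routing every background job through it can exhaust that cap within minutes.

What's the cheapest fallback backend for cron jobs?

Of the options cited on r/openclaw, OpenAI Codex adds no extra cost if you already have ChatGPT Business; otherwise the Z.ai GLM Coding Plan Lite at $18 per month is the lowest-priced.

Why did some OpenClaw users get banned by Anthropic earlier?

Anthropic’s target was tools that extract the OAuth token from a local Claude Code install and call the Anthropic API directly. The bundled claude-cli backend does not do this; it shells out to the local claude binary and lets Claude Code handle auth.

Does the long-lived Claude subprocess survive a Claude Code update?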

Botmonster Tech
