Rust Goes Stable in Linux Kernel 7.0: What It Means for Developers

With Linux 7.0, Rust graduates from a cautious experiment to a permanent part of the kernel’s development model. Kernel builds now require only stable Rust releases, with a minimum of Rust 1.93 anchored to the Debian stable toolchain. Real Rust drivers - NVIDIA’s Nova GPU driver and Google’s Android ashmem subsystem among them - are already running on hundreds of millions of devices. There is no plan to rewrite the kernel in Rust. What changed is policy: new code can now be written in a language that eliminates entire categories of memory-safety bugs at compile time.

Why the Kernel Needed Rust in the First Place

The case for bringing Rust into the Linux kernel was never ideological. Roughly two-thirds of Linux kernel CVEs trace back to memory safety bugs: buffer overflows, use-after-free errors, null pointer dereferences. These are the predictable cost of writing systems software in C, where manual memory management gives developers unmatched control but no compile-time guardrails. One missed free(), one stale pointer, one incorrect size calculation - any of these can become a privilege escalation exploit or a remotely triggered kernel panic.

C’s memory model made sense in 1972. The kernel was small, hardware was simple, and no alternative existed that could generate comparable machine code. Today the kernel contains tens of millions of lines and runs on everything from embedded sensors to hyperscale data center machines. The surface area for bugs has expanded enormously, and manual memory management remains just as unforgiving as it always was.

Rust addresses this at the language level. Its ownership model tracks object lifetimes at compile time, so there is no runtime garbage collector introducing latency or unpredictability. The borrow checker rejects dangling references and unsynchronized shared mutation before the code ever runs. Safe Rust rules out undefined behavior by construction, and undefined behavior underlies most of the memory corruption bugs that have driven kernel CVE counts for decades.
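The ownership and borrowing rules described above can be sketched in a few lines of ordinary userspace Rust. This is only an illustration: `PacketBuf` and the functions around it are hypothetical names, not kernel types, and the example uses `Vec` from the standard library, which kernel code would not.

```rust
/// Hypothetical stand-in for a driver-owned buffer; not a real kernel type.
pub struct PacketBuf {
    bytes: Vec<u8>,
}

impl PacketBuf {
    pub fn new(bytes: Vec<u8>) -> Self {
        PacketBuf { bytes }
    }
}

/// Shared borrow: the caller keeps ownership and may keep reading.
pub fn checksum(buf: &PacketBuf) -> u32 {
    buf.bytes.iter().map(|&b| u32::from(b)).sum()
}

/// Exclusive borrow: no other reference may exist while this runs.
pub fn append(buf: &mut PacketBuf, byte: u8) {
    buf.bytes.push(byte);
}

/// Takes ownership: the buffer is freed when this function returns.
pub fn consume(buf: PacketBuf) -> usize {
    buf.bytes.len()
    // `buf` is dropped here; there is no free() to forget or to call twice.
}

pub fn demo() -> (u32, usize) {
    let mut buf = PacketBuf::new(vec![1, 2, 3]);
    append(&mut buf, 4);
    let sum = checksum(&buf);
    let len = consume(buf); // ownership moves into `consume`
    // checksum(&buf); // would not compile: use of moved value `buf`
    (sum, len)
}
```

Uncommenting the last `checksum` call turns a would-be use-after-free into a compile error, which is the property the kernel is relying on.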

The commitment made to skeptical kernel maintainers was narrow: Rust would not replace C in existing subsystems. It would be used for new code, particularly new drivers, where compile-time safety could be enforced from day one. No rewrites, no forced migrations.

The Journey from 6.1 to 7.0

Rust’s path into the mainline kernel took four years and generated real controversy along the way.

The first milestone came with Linux 6.1 in December 2022, which merged the foundational Rust infrastructure: toolchain integration, the CONFIG_RUST build hook, and the basic abstractions needed to write Rust code against kernel APIs. It was not yet possible to write a useful driver, but the scaffolding was in and the principle was established.

Linux 6.8 in 2024 moved from infrastructure to actual work. The first real Rust drivers landed in the mainline tree, showing that the language could handle genuine kernel constraints around memory, concurrency, and error handling - not just compile cleanly in a sandbox.

The biggest proof of scale came from Android. Android 16, built on kernel 6.12, shipped Google’s ashmem anonymous shared memory subsystem in Rust. That one subsystem put Rust kernel code onto hundreds of millions of Android devices in daily production use.

The political inflection point came at the Tokyo Kernel Maintainers Summit in 2025. After years of debate, the assembled maintainers reached a formal consensus: Rust stays. The coexistence model - Rust for new code, C for existing subsystems, no forced migrations - became explicit kernel policy.

Linux 7.0 in 2026 delivered the patch that removed Rust’s experimental designation. Four years after the infrastructure merge, the experiment is over.

What “Stable” Actually Means Technically

When kernel developers say “stable Rust,” the word carries a specific technical meaning that goes beyond general Rust release channels.

Before 7.0, building a Rust-enabled kernel required a nightly Rust compiler. Nightly builds include unstable language features that have not yet completed the RFC and stabilization process. Depending on those features meant kernel builds could break whenever the Rust project changed an unstable API - a reproducibility problem that made distributors understandably nervous.

Linux 7.0 drops that requirement. The kernel now builds against the stable Rust release track only, with Rust 1.93 as the minimum supported version. That is the same compiler channel Rust application developers use.
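A minimum-version rule like this is easy to check mechanically. The sketch below is a hypothetical helper, not the kernel's own toolchain check: it assumes the usual `rustc --version` output shape (`rustc 1.93.0 (hash date)`) and compares only the major and minor numbers against the 1.93 floor mentioned above. It does not inspect the release channel.

```rust
/// Minimum compiler version, taken from the article's stated floor.
pub const MIN_RUSTC: (u32, u32) = (1, 93);

/// Parses the "major.minor" part of a `rustc --version` line.
/// Returns `None` if the line does not look like rustc output.
pub fn parse_rustc_version(line: &str) -> Option<(u32, u32)> {
    // The second whitespace-separated token is the version, e.g. "1.93.0".
    let version = line.split_whitespace().nth(1)?;
    let mut parts = version.split('.');
    let major = parts.next()?.parse().ok()?;
    let minor = parts.next()?.parse().ok()?;
    Some((major, minor))
}

/// True if the reported compiler is at least `MIN_RUSTC`.
pub fn meets_minimum(line: &str) -> bool {
    match parse_rustc_version(line) {
        // Tuples compare lexicographically: major first, then minor.
        Some(found) => found >= MIN_RUSTC,
        None => false,
    }
}
```

A build script could feed the first line of `rustc --version` into `meets_minimum` and fail early with a readable message instead of a cascade of compile errors.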

Distribution support is handled through the Debian anchor policy. The kernel project commits to supporting whichever Rust version ships in the current Debian stable release. Since Debian’s toolchain choices set the floor for many major distributions, this policy gives maintainers a clear, reproducible baseline and avoids situations where building the kernel requires pulling in a newer compiler than what the distro provides.

Linux 7.0 also ships new driver core abstractions that extend what Rust drivers can do without dropping into unsafe code:

- dev_printk support for all Rust device types, integrating Rust drivers into the standard kernel logging infrastructure
- dma_set_max_seg_size() for Rust DMA drivers, enabling proper scatter-gather constraints in Rust-based storage and network drivers
- Generic I/O back-ends for register abstractions, covering memory-mapped hardware register access
- A sample SoC driver included as an official template for new contributors

That last item is practically significant. One recurring barrier to Rust kernel contributions has been the absence of clear, sanctioned examples. The SoC template gives reviewers a consistent reference point for what idiomatic Rust kernel code should look like, which is something the ecosystem has needed for a while.
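To make the idea of a generic I/O back-end for register access more concrete, here is a minimal sketch in plain Rust. Everything in it is an assumption made for illustration: the `IoBackend` trait, `MockIo`, the `CTRL` register layout, and `enable_device` are hypothetical names and do not reproduce the kernel crate's actual API. The point is only the shape: driver logic is written once against a trait, and the back-end behind it can be real mapped memory or a test double.

```rust
/// Hypothetical back-end trait: anything that can read and write 32-bit registers.
pub trait IoBackend {
    fn read32(&self, offset: usize) -> Option<u32>;
    fn write32(&mut self, offset: usize, value: u32) -> Option<()>;
}

/// In-memory back-end for tests; a real one would wrap mapped device memory.
pub struct MockIo {
    regs: [u32; 4],
}

impl MockIo {
    pub fn new() -> Self {
        MockIo { regs: [0; 4] }
    }
}

impl IoBackend for MockIo {
    fn read32(&self, offset: usize) -> Option<u32> {
        // Reject unaligned offsets; out-of-range offsets fall out of `get`.
        if offset % 4 != 0 {
            return None;
        }
        self.regs.get(offset / 4).copied()
    }

    fn write32(&mut self, offset: usize, value: u32) -> Option<()> {
        if offset % 4 != 0 {
            return None;
        }
        let slot = self.regs.get_mut(offset / 4)?;
        *slot = value;
        Some(())
    }
}

/// Hypothetical register layout for an imaginary device.
pub const CTRL: usize = 0x0;
pub const CTRL_ENABLE: u32 = 1 << 0;

/// Driver logic written against the trait, independent of the back-end.
/// Returns the control register value read back after the write.
pub fn enable_device<B: IoBackend>(io: &mut B) -> Option<u32> {
    let ctrl = io.read32(CTRL)?;
    io.write32(CTRL, ctrl | CTRL_ENABLE)?;
    io.read32(CTRL)
}
```

Because bounds and alignment checks live in the back-end, the driver function itself contains no unsafe code, which is the property the new abstractions are aiming for.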

Real Rust Drivers Already Shipping

Rust kernel code is already in production at scale.

Google’s Android ashmem anonymous shared memory subsystem runs on every device with Android 16. Inter-process memory sharing is not a minor kernel feature; it is exercised continuously on a device that is in active use. Rust kernel code has been handling this on hundreds of millions of phones without incident.

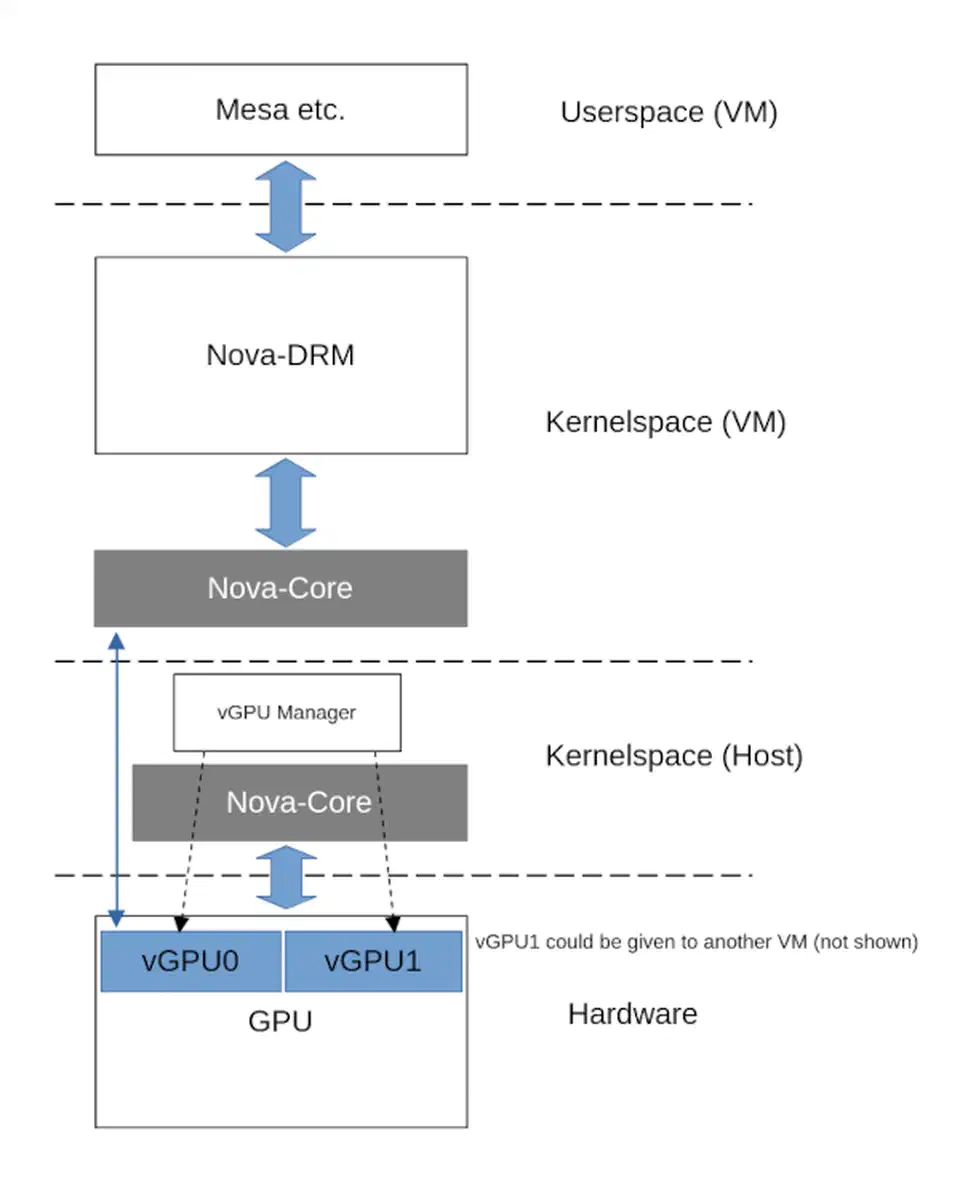

NVIDIA’s Nova GPU driver is the most technically demanding Rust driver project in the kernel. Nova is a full DRM driver for Turing-era hardware - the RTX 20 series and GTX 1600 series GPUs. It is split into two crates: nova-core handles hardware initialization and communication, while nova-drm implements the Linux DRM API. GPU drivers are among the most complex in the kernel tree, involving asynchronous command submission, firmware interaction, and tight coupling with the display subsystem. Nova being upstream and targeting real consumer hardware shows how far the kernel community’s confidence in Rust has moved.

The Arm Mali Tyr driver is proceeding alongside Nova, bringing Rust GPU driver work to the ARM graphics stack that underlies most mobile Linux devices - phones, tablets, and ARM-based laptops.

For new contributors, the sample SoC driver included in 7.0 provides a working, reviewed, idiomatic Rust driver to study and adapt. Every mature kernel subsystem has reference implementations. Having one for Rust drivers normalizes what reviewers will expect and lowers the barrier to first-time contributions.

The Controversy: A Culture Clash in the Kernel Community

The transition was contentious, and the record is worth being accurate about.

The sharpest public conflict came in February 2025 over a Rust binding for the kernel’s C-based DMA API. Christoph Hellwig, a longtime kernel maintainer responsible for much of the storage and DMA infrastructure, clashed with Hector Martin of Asahi Linux over the approach. The technical disagreement was about how Rust abstractions should wrap existing C APIs, but it carried a harder question underneath: does Rust’s presence in the kernel impose new maintenance burdens on C subsystem owners who never asked for it?

Linus Torvalds stepped in publicly. His message defended the technical validity of the Rust patch while making clear that no individual maintainer’s preferences would override kernel-wide policy. The intervention did not resolve the cultural tension, but it established where authority sits on these questions.

The formal policy is explicit: Rust code will not be forced into any subsystem over a maintainer’s objections. Subsystem owners can actively accept Rust contributions, remain hands-off, or decline them. The model is coexistence, not displacement.

The controversy picked up a new thread when the first CVE traced to a Rust unsafe block was reported. Rust’s safety guarantees apply only to safe code. The unsafe keyword exists for situations where the programmer must take direct responsibility for memory correctness - unavoidable when interfacing with hardware or calling into C APIs. That CVE did not undermine the case for Rust in the kernel, but it reminded everyone that unsafe blocks require the same rigorous review as equivalent C code, and that the kernel’s Rust abstractions are not automatically safe just because they are written in Rust.
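The review burden around unsafe is easier to see in code. The following is a minimal userspace sketch, assuming a hypothetical `Region` type that stands in for memory handed over by C code or hardware; it is not taken from the kernel or from the CVE in question. The safe `read` method is only sound if the bounds check and the `SAFETY` justification are both correct, which is exactly what a reviewer has to verify.

```rust
use std::marker::PhantomData;

/// Hypothetical view over a raw byte region of `len` bytes starting at `ptr`.
/// The lifetime ties the view to the memory it was created from.
pub struct Region<'a> {
    ptr: *const u8,
    len: usize,
    _marker: PhantomData<&'a [u8]>,
}

impl<'a> Region<'a> {
    /// Builds a region from a slice, so `ptr` and `len` are known to be valid.
    pub fn from_slice(data: &'a [u8]) -> Self {
        Region {
            ptr: data.as_ptr(),
            len: data.len(),
            _marker: PhantomData,
        }
    }

    /// Safe API over an unsafe read: returns `None` instead of reading out of bounds.
    pub fn read(&self, index: usize) -> Option<u8> {
        if index >= self.len {
            return None;
        }
        // SAFETY: `index < self.len` was checked above, and `ptr` points to
        // `len` readable bytes that stay alive for the lifetime `'a`.
        Some(unsafe { *self.ptr.add(index) })
    }
}
```

If the `index >= self.len` check were dropped or written as `>`, the code would still compile and the function would still look safe to callers. The compiler cannot catch that; only review of the unsafe block can.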

Both sides have had to adjust. Rust advocates had to accept that coexistence means respecting C maintainers’ authority over their subsystems. C veterans had to accept that the kernel’s long-term direction now includes a second systems language, and that it is not going anywhere.

What This Means for Kernel Developers

The 7.0 milestone has concrete implications for anyone who writes kernel code or wants to start.

The skill expectations are shifting. For new kernel contributors, proficiency in both C and Rust is becoming the baseline. C remains essential because the overwhelming majority of existing kernel code is C and will stay that way for a long time. But reviewers increasingly expect new driver submissions to at least consider whether Rust is the appropriate choice, and knowing only one language limits where a developer can contribute effectively.

The most practical entry point is what some developers call the “leaf driver” approach. Rust is poorly suited for deep kernel infrastructure - the scheduler, the memory allocator, the VFS layer all have decades of C code, tight inter-subsystem dependencies, and maintainers with good reasons to be conservative. At the edges, the case is clearer: networking drivers, storage controllers, NVMe, GPU drivers. These are more self-contained, interact with the rest of the kernel through defined interfaces, and are precisely where use-after-free and buffer overflow bugs in C have historically done the most damage.

On tooling: Cargo has been adapted for no-std kernel crates, since kernel code cannot use the Rust standard library. CI pipelines now test Rust builds alongside C. rust-analyzer integration with kernel source trees is functional but not yet as smooth as in a regular Rust project; developers accustomed to the IDE experience in application development will find it rougher than expected. For managing pinned Rust toolchain versions across projects, Nix shells offer a reproducible alternative to system-wide installations.
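Working without the standard library mostly means working without a heap and with `core` instead of `std`. As a rough sketch of that style, the code below formats a message into a fixed-size buffer using only items from `core`. `FixedBuf` and `describe_device` are hypothetical names chosen for this example, and a real no_std crate would also carry the `#![no_std]` crate attribute, which is left out here so the snippet can be compiled and tested as ordinary Rust.

```rust
use core::fmt::{self, Write};

/// Fixed-capacity text buffer: no heap allocation, capacity chosen at compile time.
pub struct FixedBuf<const N: usize> {
    data: [u8; N],
    len: usize,
}

impl<const N: usize> FixedBuf<N> {
    pub const fn new() -> Self {
        Self { data: [0; N], len: 0 }
    }

    /// Returns the text written so far.
    pub fn as_str(&self) -> &str {
        // Only whole `&str` fragments are ever copied in, so this is valid UTF-8.
        core::str::from_utf8(&self.data[..self.len]).unwrap_or("")
    }
}

impl<const N: usize> Write for FixedBuf<N> {
    fn write_str(&mut self, s: &str) -> fmt::Result {
        let bytes = s.as_bytes();
        let end = self.len.checked_add(bytes.len()).ok_or(fmt::Error)?;
        if end > N {
            // Report overflow as an error instead of truncating or reallocating.
            return Err(fmt::Error);
        }
        self.data[self.len..end].copy_from_slice(bytes);
        self.len = end;
        Ok(())
    }
}

/// Formats a device id as hex text into the caller's buffer.
pub fn describe_device<const N: usize>(buf: &mut FixedBuf<N>, id: u16) -> fmt::Result {
    write!(buf, "dev {:04x}", id)
}
```

The fallible `write_str` is the notable design choice: running out of space is a value the caller must handle, not a hidden allocation or a panic.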

The empirical data from Android is relevant. Google’s published results suggest that Rust components introduce significantly fewer memory-safety bugs than equivalent C code. The kernel environment is not the same as Android userspace, but ownership, borrowing, and compile-time lifetime analysis work the same way in both. Those numbers are the clearest evidence available that the kernel’s bet on Rust is likely to produce measurable security improvements over the next several kernel release cycles.

The transition will take years. Existing C drivers are not going to be rewritten, and the kernel’s overall character will remain C-dominant for the foreseeable future. But new drivers written in Rust starting now will still be running in production a decade from now, carrying none of the memory-safety bugs they were never allowed to introduce.

Botmonster Tech
