Structured Output from LLMs: JSON Schemas and the Instructor Library

The Instructor library (v1.7+) patches LLM client libraries to return validated Pydantic models instead of raw text. It does this through JSON schema enforcement in the system prompt, automatic retries on validation failure, and native structured output modes where the provider supports them. It works with OpenAI, Anthropic, Ollama, and any OpenAI-compatible API. You define your output as a Python class and get back typed, validated data - no regex parsing, no json.loads() wrapped in try/except, no manual type coercion.

The Problem with Free-Text LLM Output

LLMs return strings. Most production applications need structured data - a JSON object with specific fields, typed values, and validated constraints. Bridging this gap is one of the most common pain points in LLM application development, and the naive solutions tend to fail at the worst times.

The most common approach is prompting the model to return JSON and parsing it with json.loads(). This fails 5-15% of the time in practice. The model wraps the JSON in markdown code fences, adds trailing commas, drops quotes around keys, or prepends an explanation like “Here is the JSON you requested:”. Every one of these breaks the parser.
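A quick way to see these failure modes is to feed a few "almost JSON" strings to json.loads(). The strings below are illustrative stand-ins, not captured model output, and the fence marker is built with string multiplication only to keep the example easy to embed.

```python
import json

FENCE = "`" * 3  # a markdown code-fence marker

# Illustrative "almost JSON" replies of the kind described above
responses = {
    "clean": '{"name": "John Smith", "age": 42}',
    "code_fence": FENCE + 'json\n{"name": "John Smith", "age": 42}\n' + FENCE,
    "trailing_comma": '{"name": "John Smith", "age": 42,}',
    "unquoted_key": '{name: "John Smith", "age": 42}',
    "preamble": 'Here is the JSON you requested:\n{"name": "John Smith", "age": 42}',
}


def try_parse(raw: str) -> bool:
    """Return True if json.loads accepts the string exactly as given."""
    try:
        json.loads(raw)
        return True
    except json.JSONDecodeError:
        return False


results = {label: try_parse(raw) for label, raw in responses.items()}
for label, ok in results.items():
    print(f"{label:15} {'ok' if ok else 'FAILED'}")
```

Only the clean variant parses; each of the other four raises JSONDecodeError.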

The next approach is regex extraction - write a pattern to find the JSON block inside the response. This works for simple cases but breaks on nested objects and becomes unmaintainable fast. A schema with more than two levels of nesting will defeat most regex-based parsers eventually.
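Here is a small sketch of why regex extraction is fragile. The response text and both patterns are made up for illustration; real extraction code tends to be more elaborate, but it runs into the same brace-matching problem.

```python
import json
import re

response = (
    "Sure! Here is the result:\n"
    '{"vendor": {"name": "Acme", "address": {"city": "Berlin"}}, "total": 12}\n'
    "Let me know if you need anything else."
)


def parses(candidate: str) -> bool:
    """Return True if the candidate string is valid JSON."""
    try:
        json.loads(candidate)
        return True
    except json.JSONDecodeError:
        return False


# Non-greedy: stops at the first closing brace, so nested objects are cut short
lazy = re.search(r"\{.*?\}", response, re.DOTALL).group(0)

# Greedy: spans from the first "{" to the last "}", which happens to work here
greedy = re.search(r"\{.*\}", response, re.DOTALL).group(0)

# The greedy pattern over-captures as soon as the prose contains a later brace
with_trailing_brace = response + " (see the {notes} section)"
greedy_again = re.search(r"\{.*\}", with_trailing_brace, re.DOTALL).group(0)

print(parses(lazy), parses(greedy), parses(greedy_again))
```

Regular expressions cannot count matching braces, so either choice of pattern breaks on some input.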

Even when JSON parses correctly, type safety is not guaranteed. The model might return "count": "twelve" instead of "count": 12, or omit a required field entirely, or add unexpected keys your downstream code doesn’t know how to handle. If you’re feeding this data into a database or another service, a single malformed response can corrupt records or crash the pipeline.
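The sketch below shows the gap between "parses" and "is valid". StockLevel is a hypothetical model invented for this example, and extra="forbid" is an opt-in Pydantic setting that makes unexpected keys an error (by default they are ignored).

```python
import json

from pydantic import BaseModel, ConfigDict, ValidationError


class StockLevel(BaseModel):
    # Hypothetical model for illustration; reject keys that are not declared
    model_config = ConfigDict(extra="forbid")

    sku: str
    count: int


raw = '{"count": "twelve", "note": "approx"}'

# json.loads is satisfied: this is syntactically valid JSON
data = json.loads(raw)
print(type(data["count"]))

# Pydantic reports the wrong type, the missing field, and the unexpected key
try:
    StockLevel.model_validate_json(raw)
except ValidationError as exc:
    problems = {tuple(err["loc"]): err["type"] for err in exc.errors()}
    print(problems)
```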

The cost compounds. Without structured output, you need manual error handling, retry logic, and fallback parsing for every LLM call that returns structured data. That’s 50-100 lines of boilerplate per endpoint - code that does nothing except compensate for the model’s unpredictability. Unstructured output also makes it harder to detect and fix LLM hallucinations in production - schema validation gives you a systematic checkpoint that raw text never provides.

How Instructor Works Under the Hood

Instructor solves this with a three-layer approach that sits between your code and the LLM API.

Layer 1 - Schema injection: Instructor converts your Pydantic model into a JSON schema and injects it into the system prompt or the API’s native response_format parameter, depending on the mode. The model receives explicit, machine-generated instructions about what structure to produce.

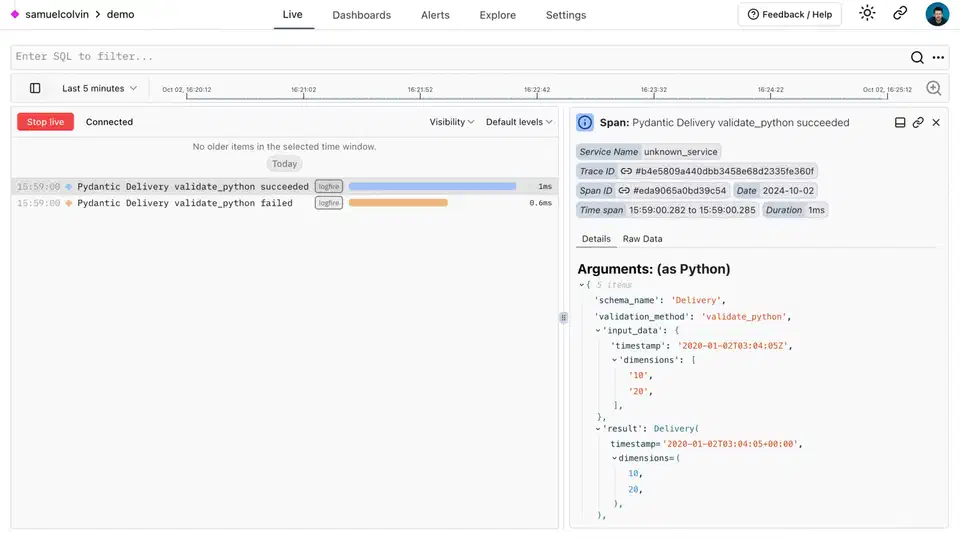

Layer 2 - Response parsing: The library intercepts the raw API response, extracts the JSON content (handling code fences, markdown wrappers, and partial responses), and attempts to parse it into your Pydantic model.

Layer 3 - Validation and retry: If Pydantic validation fails - wrong types, missing fields, constraint violations - Instructor automatically sends the validation error back to the model as a follow-up message and asks it to fix the output. It retries up to max_retries times (default 3). In practice, GPT-4o mini and Claude Haiku usually correct their output on the first retry once they see the actual validation error message.
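The three layers can be sketched in a few dozen lines. This is a simplified illustration, not Instructor's actual implementation: extract_json, structured_call, max_attempts, and the scripted fake model are all hypothetical names made up for this example, and the "LLM" is just a function that returns canned strings.

```python
import json
import re
from typing import Callable, Type, TypeVar

from pydantic import BaseModel, ValidationError

T = TypeVar("T", bound=BaseModel)
FENCE = "`" * 3  # a markdown code-fence marker


def extract_json(text: str) -> str:
    """Layer 2 (simplified): pull the JSON payload out of a fenced or chatty reply."""
    fenced = re.search(FENCE + r"(?:json)?\s*(.*?)" + FENCE, text, re.DOTALL)
    if fenced:
        return fenced.group(1).strip()
    start, end = text.find("{"), text.rfind("}")
    return text[start : end + 1] if start != -1 and end > start else text


def structured_call(
    ask_model: Callable[[list[dict]], str],
    response_model: Type[T],
    user_prompt: str,
    max_attempts: int = 3,
) -> T:
    # Layer 1: generate the schema from the model class and put it in the prompt
    schema = json.dumps(response_model.model_json_schema())
    messages = [
        {"role": "system", "content": f"Reply with JSON matching this schema: {schema}"},
        {"role": "user", "content": user_prompt},
    ]
    last_error = None
    for _ in range(max_attempts):
        reply = ask_model(messages)
        try:
            # Layers 2 and 3: extract the payload, then validate it
            return response_model.model_validate_json(extract_json(reply))
        except ValidationError as exc:
            last_error = exc
            # Feed the validation error back so the model can correct itself
            messages.append({"role": "assistant", "content": reply})
            messages.append(
                {"role": "user", "content": f"Validation failed, fix and resend:\n{exc}"}
            )
    raise last_error


class Person(BaseModel):
    name: str
    age: int


# Scripted stand-in for an LLM: the first reply has a bad type, the second is valid
scripted = iter([
    f'{FENCE}json\n{{"name": "Ada", "age": "unknown"}}\n{FENCE}',
    'Here you go: {"name": "Ada", "age": 36}',
])
person = structured_call(lambda messages: next(scripted), Person, "Who is Ada?")
print(person)
```

The first scripted reply fails validation on age, the error text is appended to the conversation, and the second reply validates.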

Instructor supports several operating modes:

TOOLS - Uses the API’s native function/tool-calling interface. Best for OpenAI and Anthropic models.

JSON - Uses response_format: {"type": "json_object"}. Works universally but requires the schema in the prompt.

MD_JSON - Extracts JSON from markdown code blocks. Fallback for older models.

The right mode depends on your provider. For OpenAI and Anthropic, TOOLS mode is the most reliable. For Ollama and other local model servers, JSON mode works better because most local models don’t support the full function-calling format.

The Pydantic model is the single source of truth. Field descriptions feed into the schema that guides the LLM. Validators enforce business rules. Type hints ensure downstream code gets exactly the types it expects. You write one class and get type safety, validation, and LLM guidance all at once.
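To see what the model is actually given, you can print the schema Pydantic generates from a class. This sketch uses a plain str for the email field so it runs without extra dependencies; how Instructor embeds the schema in the request depends on the mode and is not shown here.

```python
import json
from typing import Optional

from pydantic import BaseModel, Field


class ExtractedContact(BaseModel):
    """Contact details extracted from free text."""

    name: str
    email: str  # plain str here; the article's EmailStr adds email validation
    phone: Optional[str] = None
    company: str = Field(description="The company or organization they work for")


schema = ExtractedContact.model_json_schema()
print(json.dumps(schema, indent=2))
```

The field description appears under properties.company.description, and phone is left out of the required list because it has a default.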

Getting Started: Installation and First Structured Call

Install Instructor and Pydantic:

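    pip install instructor "pydantic[email]"

Instructor v1.7+ requires Python 3.9+ and has no heavy dependencies beyond Pydantic v2. The [email] extra installs email-validator, which Pydantic needs for the EmailStr type used in the model below.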

Define your output model:

    from pydantic import BaseModel, EmailStr, Field
    from typing import Optional

    class ExtractedContact(BaseModel):
        name: str
        email: EmailStr
        phone: Optional[str] = None
        company: str = Field(description="The company or organization they work for")

Patch your client and make a call:

    import instructor
    from openai import OpenAI

    # Wrap the OpenAI client so create() accepts response_model
    client = instructor.from_openai(OpenAI())

    contact = client.chat.completions.create(
        model="gpt-4o-mini",
        response_model=ExtractedContact,
        messages=[{
            "role": "user",
            "content": "Extract contact info: John Smith, john@acme.com, works at Acme Corp"
        }]
    )

    print(contact.name)     # "John Smith"
    print(contact.email)    # "john@acme.com"
    print(contact.company)  # "Acme Corp"

The returned contact is a fully typed Pydantic object. contact.email is validated as a proper email address. contact.phone is None if the input doesn’t contain one. No JSON parsing, no type casting, no error handling needed at the call site.

For Anthropic, swap the client patch:

    import instructor
    from anthropic import Anthropic

    client = instructor.from_anthropic(Anthropic())

    contact = client.messages.create(
        model="claude-3-5-haiku-latest",
        max_tokens=1024,
        response_model=ExtractedContact,
        messages=[{"role": "user", "content": "..."}]
    )

You can add field-level validation with Pydantic validators to enforce business rules the LLM alone can’t guarantee:

    import re
    from typing import Optional

    from pydantic import BaseModel, EmailStr, field_validator

    class ExtractedContact(BaseModel):
        name: str
        email: EmailStr
        phone: Optional[str] = None

        @field_validator("phone")
        @classmethod
        def validate_phone(cls, v):
            # Allow an optional leading "+", then 7-15 digits, spaces, hyphens, or parentheses
            if v is not None and not re.match(r"^\+?[\d\s\-\(\)]{7,15}$", v):
                raise ValueError("Invalid phone number format")
            return v

When the validator raises, Instructor catches it, formats the error message, sends it back to the model, and retries. The model sees something like “phone: Invalid phone number format - please correct the value” and adjusts its output accordingly.

Advanced Patterns: Lists, Nested Models, and Streaming

Extracting lists: Use response_model=list[ExtractedContact] to get the model to return an array of validated objects. Instructor handles the schema wrapping automatically. This is useful for batch extractions - process an email thread and get back a list of every contact mentioned.
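On the Pydantic side, validating an array of objects looks roughly like the sketch below. It uses TypeAdapter directly on hand-written JSON; how Instructor wraps the list schema internally is not shown and may differ. Contact is a trimmed-down, hypothetical version of the contact model with a plain str email.

```python
from typing import Optional

from pydantic import BaseModel, TypeAdapter, ValidationError


class Contact(BaseModel):
    name: str
    email: str
    phone: Optional[str] = None


contacts_adapter = TypeAdapter(list[Contact])

raw = (
    '[{"name": "John Smith", "email": "john@acme.com"},'
    ' {"name": "Jane Doe", "email": "jane@startup.io", "phone": "+1 555 0100"}]'
)
contacts = contacts_adapter.validate_json(raw)
print([c.name for c in contacts])

# One bad element fails the whole list; the error location includes its index
try:
    contacts_adapter.validate_json('[{"name": "No Email"}]')
except ValidationError as exc:
    bad_loc = exc.errors()[0]["loc"]
    print(bad_loc)
```

The indexed error location is what lets a retry message point the model at the specific element that needs fixing.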

Nested models: Define models that reference other models:

    from decimal import Decimal
    from typing import Optional

    from pydantic import BaseModel

    class Company(BaseModel):
        name: str
        tax_id: Optional[str] = None

    class LineItem(BaseModel):
        description: str
        quantity: int
        unit_price: Decimal

    class Invoice(BaseModel):
        vendor: Company
        line_items: list[LineItem]
        total: Decimal
        currency: str = "USD"

The JSON schema is generated recursively and the LLM produces nested JSON that Pydantic validates at every level. For GPT-4o and Claude Sonnet, complex nested schemas like this work reliably on the first attempt with TOOLS mode.
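Nested models also give you a natural place for cross-field checks. The sketch below adds a hypothetical business rule that is not part of the models above: the stated total must equal the sum of the line items. The payload is hand-written, with prices as strings so they convert to Decimal exactly.

```python
from decimal import Decimal
from typing import Optional

from pydantic import BaseModel, ValidationError, model_validator


class Company(BaseModel):
    name: str
    tax_id: Optional[str] = None


class LineItem(BaseModel):
    description: str
    quantity: int
    unit_price: Decimal


class CheckedInvoice(BaseModel):
    vendor: Company
    line_items: list[LineItem]
    total: Decimal
    currency: str = "USD"

    @model_validator(mode="after")
    def total_matches_line_items(self):
        # Hypothetical rule: the total must equal the sum of quantity * unit_price
        expected = sum(
            (item.quantity * item.unit_price for item in self.line_items),
            Decimal("0"),
        )
        if expected != self.total:
            raise ValueError(f"total {self.total} does not match line items sum {expected}")
        return self


payload = {
    "vendor": {"name": "Acme Corp"},
    "line_items": [
        {"description": "Widget", "quantity": 3, "unit_price": "9.50"},
        {"description": "Shipping", "quantity": 1, "unit_price": "4.00"},
    ],
    "total": "32.50",
}
invoice = CheckedInvoice.model_validate(payload)
print(invoice.total)

try:
    CheckedInvoice.model_validate({**payload, "total": "30.00"})
except ValidationError as exc:
    mismatch_message = exc.errors()[0]["msg"]
    print(mismatch_message)
```

With Instructor in front, a failure like the second case would be sent back to the model as a retry message rather than surfacing immediately.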

Constrained choices with Literal and Enum:

    from typing import Literal

    from pydantic import BaseModel

    class TicketClassification(BaseModel):
        category: Literal["billing", "technical", "feature-request", "other"]
        urgency: Literal["low", "medium", "high"]
        summary: str

The Literal constraint appears in the schema as an enumeration, so the model picks from your defined options, not whatever synonym it prefers. This matters when category values feed into routing logic downstream - instead of “BILLING”, “billing issue”, or “payment problem”, you get exactly "billing", and anything else fails validation and triggers a retry.
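A short check of what Pydantic does with near-miss values, assuming the same TicketClassification model. Note that Pydantic does not normalize synonyms; it rejects them, and it is the retry loop that gives the model a chance to pick an allowed value.

```python
from typing import Literal

from pydantic import BaseModel, ValidationError


class TicketClassification(BaseModel):
    category: Literal["billing", "technical", "feature-request", "other"]
    urgency: Literal["low", "medium", "high"]
    summary: str


# The allowed values are published in the schema as an enum
allowed = TicketClassification.model_json_schema()["properties"]["category"]["enum"]
print(allowed)

# Near-miss values are rejected rather than silently coerced
rejected = []
for candidate in ["BILLING", "billing issue", "payment problem"]:
    try:
        TicketClassification(category=candidate, urgency="high", summary="Charged twice")
    except ValidationError as exc:
        rejected.append((candidate, exc.errors()[0]["type"]))
print(rejected)
```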

Chain of thought with hidden fields: Add a reasoning field that you discard after the call:

    from typing import Literal

    from pydantic import BaseModel, Field

    class ExtractedSentiment(BaseModel):
        reasoning: str = Field(
            description="Step-by-step reasoning for the classification"
        )
        sentiment: Literal["positive", "negative", "neutral"]
        confidence: float = Field(ge=0.0, le=1.0)

Because reasoning is declared first, the model fills it out before committing to sentiment. On complex or ambiguous inputs, this improves accuracy noticeably - the model is forced to think before producing its final answer. Discard reasoning in your response handling if you don’t need it downstream.
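One way to drop the reasoning field before passing the result on is model_dump with an exclude set. In this sketch the result object is constructed by hand with made-up values, standing in for what an Instructor call would return.

```python
from typing import Literal

from pydantic import BaseModel, Field


class ExtractedSentiment(BaseModel):
    reasoning: str = Field(description="Step-by-step reasoning for the classification")
    sentiment: Literal["positive", "negative", "neutral"]
    confidence: float = Field(ge=0.0, le=1.0)


# Schema properties follow declaration order, so reasoning comes first
field_order = list(ExtractedSentiment.model_json_schema()["properties"])
print(field_order)

# Stand-in for an Instructor result (values are invented for illustration)
result = ExtractedSentiment(
    reasoning="Praises battery life; only a mild complaint about price.",
    sentiment="positive",
    confidence=0.9,
)

# Keep the reasoning for logs if useful, but exclude it from the downstream payload
public = result.model_dump(exclude={"reasoning"})
print(public)
```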

Streaming partial results: For long extractions where you want progressive UI updates, use create_partial:

    # Uses the patched client and ExtractedContact model defined earlier
    for partial_contact in client.chat.completions.create_partial(
        model="gpt-4o-mini",
        response_model=ExtractedContact,
        messages=[...]
    ):
        if partial_contact.name:
            print(f"Name so far: {partial_contact.name}")

Fields are None until the model has generated them. As each field arrives, you can update a UI or pass partial data to downstream consumers.

Range constraints with Annotated: Use Annotated with Field for numeric range constraints:

    from typing import Annotated

    from pydantic import BaseModel, Field

    class ProductReview(BaseModel):
        rating: Annotated[int, Field(ge=1, le=5, description="Rating from 1 to 5")]
        summary: str
        would_recommend: bool

The description guides the model and Pydantic enforces the constraint as a safety net.

Using Instructor with Ollama and Local Models

For local model inference, Ollama exposes an OpenAI-compatible API that Instructor connects to with a custom base URL:

    import instructor
    from openai import OpenAI

    client = instructor.from_openai(
        OpenAI(
            base_url="http://localhost:11434/v1",
            api_key="ollama",  # required by the client library, not checked by Ollama
        ),
        mode=instructor.Mode.JSON,
    )

    contact = client.chat.completions.create(
        model="qwen2.5:7b-instruct",
        response_model=ExtractedContact,
        messages=[{
            "role": "user",
            "content": "Extract: Jane Doe, jane@startup.io, CTO at StartupIO"
        }],
        temperature=0,
    )

Several things are worth getting right when using local models:

Use instructor.Mode.JSON, not TOOLS. Most local models don’t implement the full function-calling format. Ollama’s native JSON mode constrains generation to valid JSON tokens at the grammar level, which is more reliable than prompt-only enforcement.

Set temperature=0 for extraction tasks. Extraction doesn’t benefit from creativity. Higher temperatures increase schema violations, which consume tokens on retries and reduce overall throughput.

Model selection matters. Qwen 2.5 7B Instruct and Mistral 7B Instruct v0.3 follow JSON schemas most reliably in practice. Smaller models at 3B parameters and below struggle with anything but the simplest flat schemas. If you need a 3B model for performance reasons, keep schema depth to one level and fields to five or fewer.

Keep schemas manageable for local models. Flat objects with five to eight fields and basic types (str, int, float, bool, Optional) work reliably. Deeply nested schemas with three or more levels may require max_retries=5 to succeed consistently. For complex schemas, consider breaking the extraction into two sequential calls: a fast first call handles simple fields, a slower second call handles nested structure using the first call’s output as context.

Performance in practice: A structured extraction call to Qwen 2.5 7B via Ollama on reasonable hardware (RTX 3080 or better) completes in 1-3 seconds for simple schemas, including validation. Run sequentially, that works out to roughly 20-60 documents per minute, or 1,200-3,600 per hour - more than adequate for most offline data extraction pipelines.

Handling failure after max retries: If all retries are exhausted and validation still fails, Instructor raises an exception. Recent 1.x releases wrap the final Pydantic ValidationError in an InstructorRetryException (importable from instructor.exceptions), so it is safest to catch both and to check which one your installed version actually raises. Then decide what to do - log the raw response for manual review, fall back to a more capable model, or return a default. Don’t silently discard the error:

    from instructor.exceptions import InstructorRetryException
    from pydantic import ValidationError

    try:
        result = client.chat.completions.create(
            model="qwen2.5:7b-instruct",
            response_model=ExtractedContact,
            max_retries=3,
            messages=[...]
        )
    except (InstructorRetryException, ValidationError) as e:
        # Log the failure, fall back, or escalate
        print(f"Extraction failed after retries: {e}")
        result = None

The combination of Pydantic’s schema generation, Instructor’s retry loop, and Ollama’s grammar-constrained JSON mode gets you to 99%+ success rates on simple schemas with local 7B models. For complex schemas or edge cases that consistently fail, switch to a larger model or a cloud API for those specific documents rather than tuning retry counts upward.
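The "switch to a larger model for the documents that fail" idea can be sketched as a small fallback chain. Everything here is hypothetical scaffolding: extract_with_fallback, local_7b, and cloud_model are made-up names, and the two extractors are stand-ins where real code would wrap Instructor calls and pass the exception types those calls raise via expected_errors.

```python
from typing import Callable, Optional, Sequence, Tuple, TypeVar

T = TypeVar("T")


def extract_with_fallback(
    text: str,
    extractors: Sequence[Tuple[str, Callable[[str], T]]],
    expected_errors: Tuple[type, ...] = (ValueError,),
) -> Tuple[Optional[str], Optional[T]]:
    """Try each (label, extractor) pair in order and return the first success."""
    for label, extract in extractors:
        try:
            return label, extract(text)
        except expected_errors as exc:
            # In real code, log the document ID and error for later review
            print(f"{label} failed: {exc}")
    return None, None


# Stand-ins for two Instructor-backed calls (behavior is invented for the demo)
def local_7b(text: str) -> dict:
    raise ValueError("validation failed after retries")


def cloud_model(text: str) -> dict:
    return {"name": "Jane Doe", "company": "StartupIO"}


used, result = extract_with_fallback(
    "Jane Doe, CTO at StartupIO",
    [("local-7b", local_7b), ("cloud", cloud_model)],
)
print(used, result)
```

Returning the label alongside the result makes it easy to track how often the fallback is used, which is a better tuning signal than raising retry counts.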

Instructor doesn’t change the programming model for LLM calls - you still write messages and get back a response. It just makes the contract between your code and the model precise: you say exactly what structure you need, the model produces it, and Pydantic enforces it. The result is LLM integration code that handles failures systematically rather than through ad-hoc string manipulation.

Writing Field Descriptions That Actually Help

Field descriptions are part of the schema that gets sent to the model. They’re not just for human readers - they actively guide extraction quality. A few practices that make a real difference:

Be specific about format and source. Instead of description="The date", write description="The invoice date in ISO 8601 format (YYYY-MM-DD)". Instead of description="The amount", write description="The total amount due, as a number without currency symbols".

Clarify ambiguous cases. If a document might contain multiple dates (issue date, due date, payment date), the description is where you tell the model which one to pick: description="The payment due date, not the invoice issue date".

State what to return when the value is absent. For optional fields: description="The purchase order number if present, otherwise None". This prevents the model from inventing a plausible-looking value.

Use examples for constrained formats: description="Two-letter ISO 3166-1 country code, e.g. US, GB, DE". Short examples cut ambiguity faster than lengthy prose descriptions.
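Put together, the practices above might look like the following model. InvoiceHeader and its field names are hypothetical; the descriptions are the ones suggested in the text, and the sample JSON is hand-written.

```python
from datetime import date
from typing import Optional

from pydantic import BaseModel, Field


class InvoiceHeader(BaseModel):
    due_date: date = Field(
        description="The payment due date, not the invoice issue date, "
        "in ISO 8601 format (YYYY-MM-DD)"
    )
    total_due: float = Field(
        description="The total amount due, as a number without currency symbols"
    )
    po_number: Optional[str] = Field(
        default=None,
        description="The purchase order number if present, otherwise None",
    )
    country: str = Field(
        description="Two-letter ISO 3166-1 country code, e.g. US, GB, DE"
    )


# Every description ends up in the schema that is sent to the model
descriptions = {
    name: prop["description"]
    for name, prop in InvoiceHeader.model_json_schema()["properties"].items()
}
for name, text in descriptions.items():
    print(f"{name}: {text}")

# A reply that follows the descriptions validates cleanly
header = InvoiceHeader.model_validate_json(
    '{"due_date": "2025-03-01", "total_due": 129.5, "country": "DE"}'
)
print(header)
```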

Well-written descriptions reduce retry rates and improve accuracy on the first attempt - particularly for local models that have less instruction-following capability baked in. They’re also self-documenting: a Pydantic model with good field descriptions is easier to understand and maintain than one with bare type hints.

When building a new extraction schema, it’s useful to test with a sample document and print the raw API response before plugging in Instructor. If the model is producing the right fields but wrong formats, the fix is almost always a better description rather than a more complex validation rule.

Botmonster Tech
