DeepSeek V4 Tech Report: 3 Tricks That Cut Compute 73%

DeepSeek V4 is a 1.6 trillion parameter open-weight Mixture-of-Experts model. It reads 1M tokens at once. It uses 27% of V3.2’s inference FLOPs and 10% of its KV cache. The DeepSeek V4 tech report credits three moves: hybrid CSA plus HCA attention, Manifold-Constrained Hyper-Connections, and the Muon optimizer in place of AdamW.

Key Takeaways

- DeepSeek V4 is a free, open-weight AI that goes toe-to-toe with the top closed models from OpenAI, Anthropic, and Google.

- It reads 1 million tokens in one prompt, enough for several full books or a long agent run without losing track.

- It runs on roughly a quarter of the compute its previous version needed, making long-context AI affordable to operate.

- A smaller team built it without access to top NVIDIA chips, proving clever engineering can rival raw GPU spend.

- It scored a perfect 120 out of 120 on the 2025 Putnam math competition and beats Google’s Gemini 3.1 Pro at 1M-token recall.

DeepSeek V4 at a Glance

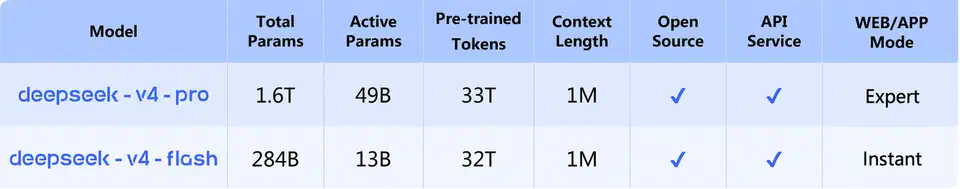

The official launch announcement on April 24, 2026 framed the release as “the era of cost-effective 1M context length.” It shipped two checkpoints under the MIT license. DeepSeek-V4-Pro runs at 1.6T total and 49B active parameters. DeepSeek-V4-Flash runs at 284B total and 13B active. Both models read 1M tokens at once. Both ship as open weights on Hugging Face . The routed expert weights use FP4 math, and most other weights use FP8.

The backdrop is what makes these choices read as forced moves, not as flourishes. DeepSeek runs with a far smaller team than OpenAI, Anthropic, or Google DeepMind. It has no access to the top NVIDIA chips. It also has far less compute than its frontier rivals. The paper opens with the constraint stated as a goal:

In order to break the efficiency barrier in ultra-long contexts, we develop the DeepSeek-V4 series, including the preview versions of DeepSeek-V4-Pro with 1.6T parameters (49B activated) and DeepSeek-V4-Flash with 284B parameters (13B activated). Through architectural innovations, DeepSeek-V4 series achieve a dramatic leap in computational efficiency for processing ultra-long sequences.

DeepSeek-AI (DeepSeek V4 tech report, 2026)

The paper reads more like a systems writeup than a scaling-laws update. Every section trades FLOPs or memory for smarter algorithms and tighter infrastructure. Each trade-off comes with specific numbers attached.

The Attention Wall: Why 1M Tokens Breaks Vanilla Transformers

The original Vaswani et al. attention mechanism checks every new token against every prior token. That gives you a quadratic cost. 10 tokens means 100 checks per step. 100,000 tokens means 10 billion. 1,000,000 tokens means a trillion. The KV cache architecture is the twin issue. Every past token’s key and value tensors sit in HBM GPU memory. At 1M tokens, that footprint hits gigabytes per live request.

FlashAttention and grouped-query attention help, but neither bends the curve enough. Without a deeper fix, 1M-token long context windows stay a stunt and not a default. DeepSeek frames the need bluntly:

The emergence of reasoning models has established a new paradigm of test-time scaling, driving substantial performance gains for Large Language Models. However, this scaling paradigm is fundamentally constrained by the quadratic computational complexity of the vanilla attention mechanism, which creates a prohibitive bottleneck for ultra-long contexts and reasoning processes.

DeepSeek-AI (DeepSeek V4 tech report, 2026)

Both compute cost and memory footprint must come down. Otherwise, a 1M-context model can’t ship at sane production prices.

Hybrid Attention: CSA, HCA, and a Sliding Window

The core of V4 is a three-pathway attention stack stitched across layers. Compressed Sparse Attention (CSA) groups every four KV entries into one compressed entry along the sequence. A “Lightning Indexer” with FP4 math then picks a top-k of those entries for each query. Heavily Compressed Attention (HCA) is the global-overview path. It folds every 128 tokens into one entry, then attends densely over the short result. A Sliding Window branch keeps the most recent 128 tokens raw, so numbers, names, code, and function arguments stay exact.

DeepSeek V4 Pro stacks 61 transformer layers. The first two use HCA. The other 59 interleave CSA and HCA. CSA picks the top-1024 compressed KV entries per query in V4 Pro, and top-512 in V4 Flash. The paper states the goal plainly:

As the context length reaches extreme scales, the attention mechanism emerges as the dominant computational bottleneck in a model. For DeepSeek-V4, we design two efficient attention architectures: Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA), and employ their interleaved hybrid configuration, which substantially reduces the computational cost of attention in long-text scenarios.

DeepSeek-AI (DeepSeek V4 tech report, 2026)

The question DeepSeek answers is simple. How little can we attend to and still grasp the whole input? The three paths give a tiered reply. The sliding window holds exact recent tokens. CSA gives sparse selective recall. HCA captures global structure.

The Efficiency Payoff: 73% FLOP Reduction at 1M Tokens

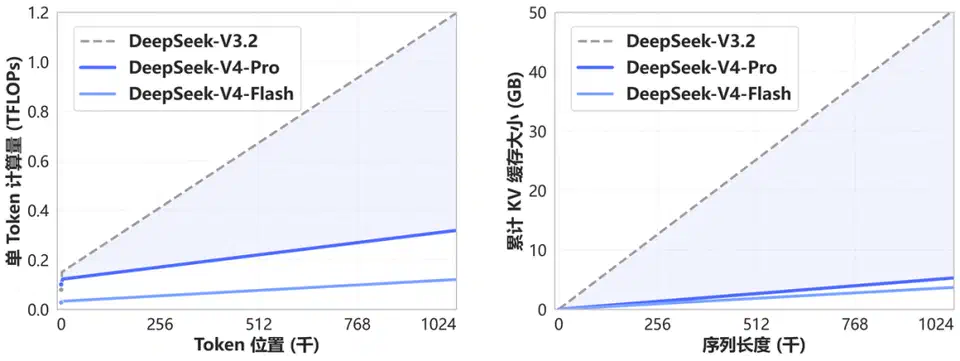

Compared with DeepSeek V3.2 , V4 Pro at 1M-token context uses 27% of the single-token inference FLOPs and 10% of the stored KV cache. V4 Flash, with fewer active parameters, pushes further. It uses 10% of V3.2’s single-token FLOPs and 7% of its KV cache at 1M tokens.

| Metric (1M context) | DeepSeek V3.2 | DeepSeek V4 Pro | DeepSeek V4 Flash |

|---|---|---|---|

| Total parameters | 671B | 1.6T | 284B |

| Activated parameters | 37B | 49B | 13B |

| Single-token FLOPs (rel.) | 100% | 27% | 10% |

| Accumulated KV cache (rel.) | 100% | 10% | 7% |

| Training tokens | - | 33T | 32T |

Against a BF16 GQA8 baseline with head size 128 (a common attention setup), the V4 KV cache shrinks to about 2% of baseline at 1M-token context. In practice, that means less VRAM per concurrent user and smaller batch-cost ratios. It also opens a real path to running large open-weight models on consumer GPUs once FP4 throughput on future GPUs catches up to FP8.

Manifold-Constrained Hyper-Connections (mHC): Stopping Trillion-Parameter Models From Exploding

Residual connections let transformers add layer outputs back to a running signal so gradients can flow during backprop. Hyper-Connections (HC), from the Hyper-Connections paper , widen that residual stream by n_hc to give the model a new scaling axis. At trillion-parameter scale, HC cracks. The residual matrix blows up signals layer over layer. Training crashes with loss spikes. Rolling back to a checkpoint costs millions of GPU-hours.

mHC, from the Xie et al. 2026 paper (arXiv:2512.24880) , is folded into V4. It pins the residual mapping matrix B_l onto the Birkhoff polytope. That’s the manifold of doubly stochastic matrices, where every row and every column sums to 1. The bound on the spectral norm is 1. That makes the residual transform non-expansive and locks in signal stability across deep layer stacks. The paper states the mechanism plainly:

The core innovation of mHC is to constrain the residual mapping matrix B_l to the manifold of doubly stochastic matrices (the Birkhoff polytope) M, and thus enhance the stability of signal propagation across layers. This constraint ensures that the spectral norm of the mapping matrix is bounded by 1, so the residual transformation is non-expansive, which increases the numerical stability during both the forward pass and backpropagation.

DeepSeek-AI (DeepSeek V4 tech report, 2026)

The projection onto that manifold uses the Sinkhorn-Knopp algorithm. You exp the raw matrix, then row-normalize and column-normalize in a loop. DeepSeek runs t_max = 20 steps per layer in production, with an n_hc scale of 4. A 20-step inner loop per layer at trillion-parameter scale sounds brutal for runtime. However, DeepSeek’s fused kernel, selective recompute, and DualPipe overlap cut the total cost: “Collectively, these optimizations constrain the wall-time overhead of mHC to only 6.7% of the overlapped 1F1B pipeline stage.” Cheap insurance against a crashed training run.

Muon Replaces AdamW for Most Parameters

AdamW has been the default optimizer for LLM training for years. It’s safe, well-known, and slow at the trillion-parameter frontier. DeepSeek swapped it for the Muon optimizer from Liu et al. 2025 on most of V4’s parameters. It kept AdamW for the embedding module, the prediction head, the static biases and gates of mHC, and all RMSNorm weights.

Muon’s core trick is orthogonalizing the gradient update matrix using Newton-Schulz iterations. DeepSeek runs 10 steps split into two phases. The first 8 steps use coefficients (3.4445, -4.7750, 2.0315) to drive fast convergence by pushing singular values toward 1. The last 2 steps use coefficients (2, -1.5, 0.5) to lock the singular values right at 1. The paper explains the choice plainly:

We employ the Muon optimizer for the majority of modules in DeepSeek-V4 series due to its faster convergence and improved training stability.

DeepSeek-AI (DeepSeek V4 tech report, 2026)

One detail is load-bearing. Muon needs the full gradient matrix, which clashes with ZeRO sharding . DeepSeek built a hybrid ZeRO bucket scheme. It uses a knapsack solver for dense parameters and a flatten-pad-distribute scheme for MoE expert parameters. That’s paired with stochastic-rounding BF16 reduction of MoE gradients across ranks, which halves the data sent between nodes.

Communication-Compute Overlap, TileLang, and Z3 Verification

At 1.6T parameters the bottleneck shifts from compute to data movement. Layers shard across many racks. Every forward and backward pass crosses the network. DeepSeek’s MoE Expert Parallelism scheme splits experts into waves. It pipes dispatch, GEMM, activation, and combine across them. Wave-1 GEMMs run while wave-2 dispatches are still in flight. Reported speedup over non-fused baselines is 1.50 to 1.73x for general inference and up to 1.96x for fast RL rollouts. The CUDA mega-kernel is open-sourced as MegaMoE , part of the DeepGEMM repo.

The kernels are written in TileLang , a DSL for GPU code that DeepSeek co-built and that ICLR 2026 accepted. TileLang handles host-codegen tuning. CPU-side check overhead drops from “tens or hundreds of microseconds to less than one microsecond per invocation.” It also wires in the Z3 SMT solver for formal integer checks at compile time. Z3 proves kernel correctness for layout inference, memory hazard checks, and bound analysis, instead of leaning on empirical tests alone:

We integrate the Z3 SMT solver into TileLang’s algebraic system, providing formal analysis capability for most integer expressions in tensor programs. Under reasonable resource limits, Z3 elevates overall optimization performance while restricting compilation time overhead to just a few seconds.

DeepSeek-AI (DeepSeek V4 tech report, 2026)

For training reproducibility, DeepSeek ships bit-exact and batch-invariant kernels end to end. Attention uses a dual-kernel decoding strategy. Output bits stay the same whether a sequence runs on one SM or many. Matrix math drops cuBLAS for DeepGEMM with bit-exact split-k. Backward passes for sparse attention, MoE, and mHC each use buffered FP32 sums, not atomicAdd. That removes float order effects. Closed labs almost never publish this layer of detail.

Curriculum Training, 33T Tokens, and Anticipatory Routing

V4 Pro trained on 33T tokens. V4 Flash trained on 32T. Both models use a curriculum that ramps sequence length from 4K through 16K and 64K to the full 1M window. Sparse attention kicks in at the 64K stage, after a 1T-token dense-attention warmup. Batch size climbs from a small start to 75.5M tokens for Flash and 94.4M for Pro. Peak Muon learning rate is 2.7e-4 for Flash and 2.0e-4 for Pro, decayed cosine-style to 10x lower at the end.

The new stability trick is Anticipatory Routing. Rolling back to a checkpoint when loss spikes does not fix the cause. DeepSeek found that loss spikes track with outliers in MoE layers. The routing itself then feeds back and amplifies those outliers. The fix is to decouple routing from the current network state:

We found that decoupling the synchronous updates of the backbone network and the routing network significantly improves training stability. Consequently, at step t, we use the current network parameters for feature computation, but the routing indices are computed and applied using the historical network parameters. We “anticipatorily” compute and cache the routing indices to be used later at step t, which is why we name this approach Anticipatory Routing.

DeepSeek-AI (DeepSeek V4 tech report, 2026)

An auto detector flips on Anticipatory Routing only when a loss spike shows up, runs it for a window, then hands control back to standard real-time routing. Total wall-clock overhead stays around 20%, but it averts loss-spike rollbacks entirely. SwiGLU clamping pairs with the routing fix. The linear part is clamped to [-10, 10] and the gate part is capped at 10, which holds down numerical outliers at the source.

Benchmarks: How V4 Pro Max Compares With Frontier Closed Models

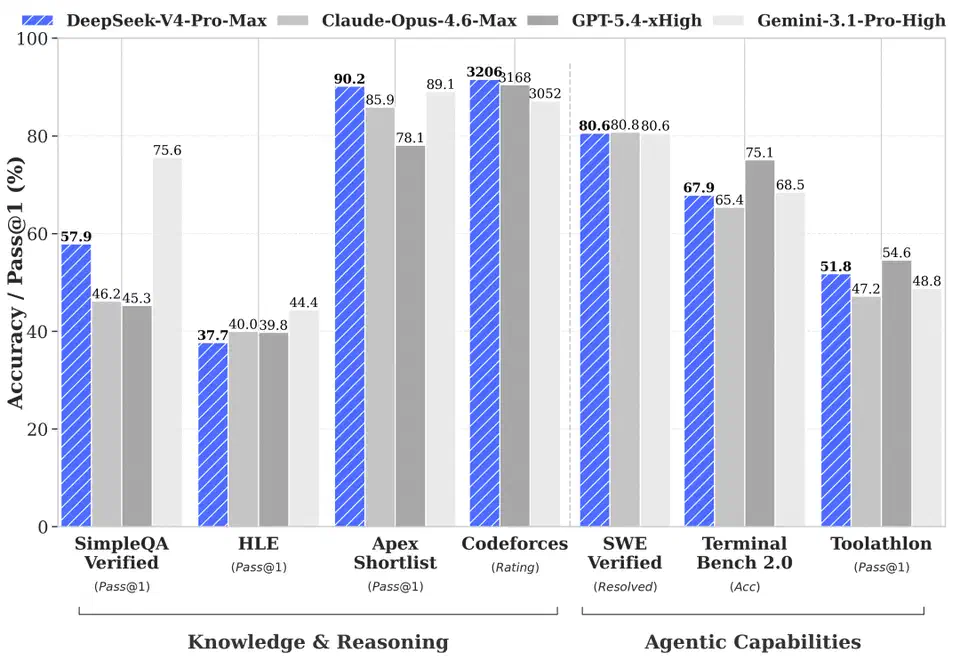

DeepSeek V4 Pro Max is the max-reasoning-effort mode of V4 Pro. That’s the config the paper benchmarks against frontier closed models. All numbers below are self-reported by DeepSeek’s own eval framework. It’s worth waiting for independent runs on Artificial Analysis, LMSys Arena, and Aider Polyglot before you treat any of this as settled.

| Benchmark | V4 Pro Max | Opus 4.6 Max | GPT 5.4 xHigh | Gemini 3.1 Pro High |

|---|---|---|---|---|

| MMLU-Pro (EM) | 87.5 | - | - | - |

| GPQA Diamond (Pass@1) | 90.1 | - | - | - |

| LiveCodeBench (Pass@1-COT) | 93.5 | - | - | - |

| SimpleQA-Verified (Pass@1) | 57.9 | 46.2 | 45.3 | 75.6 |

| Codeforces (Rating) | 3206 | 3168 | 3052 | - |

| SWE Verified (Resolved) | 80.6 | 80.8 | 80.6 | - |

| Terminal Bench 2.0 | 67.9 | 75.1 | 68.5 | 65.4 |

| MRCR 1M (MMR) | 83.5 | - | 76.3 | - |

| HLE (Pass@1) | 37.7 | - | - | - |

Two results stand out. On Putnam-2025, run under a hybrid formal-informal regime with heavy compute scaling, DeepSeek V4 reached a proof-perfect 120/120. That matches the Axiom system and beats Aristotle (100/120) and Seed-1.5-Prover (110/120). On MRCR 1M, V4 Pro Max scored 83.5 MMR. It beats Gemini 3.1 Pro at 76.3 at the far end of the 1M context window, but Claude Opus 4.6 still leads here. On reasoning, the paper places V4 Pro Max above GPT-5.2 and Gemini-3.0-Pro on standard tests, but a half-generation behind GPT-5.4 and Gemini-3.1-Pro: “approximately 3 to 6 months” of catch-up, in the paper’s words.

For Chinese writing, DeepSeek V4 Pro hits a 62.7% win rate against Gemini 3.1 Pro on functional writing and 77.5% on creative writing quality. Claude Opus 4.5 still wins 52% to 45.9% on the hardest multi-turn prompts.

Limitations and Who Should Skip This

- V4 still trails frontier closed models on knowledge-heavy tests (MMLU-Pro, GPQA, HLE). The paper admits a roughly 3 to 6 month gap behind Gemini-3.1-Pro.

- The 1.6T total parameters mean self-hosting V4 Pro is not practical without multi-node hardware, even at FP4. V4 Flash at 284B is the realistic on-prem target.

- Most benchmark numbers in the tech report are self-reported. Public leaderboards (Artificial Analysis, LMSys, Aider Polyglot) had not posted full V4 results as of May 2026.

- Claude Opus 4.6 still beats V4 Pro Max on MRCR 1M recall and on Terminal Bench 2.0 agent tasks. For agentic coding work, the Claude Opus closed-model gap is real.

- The hybrid attention KV cache layout (state cache plus block cache, with separate SWA and CSA/HCA segments) is not yet supported by stock vLLM or SGLang releases without DeepSeek’s patches.

What This Means for Open-Weight AI

DeepSeek shipped the weights, the architecture paper, the MegaMoE kernel inside DeepGEMM, the TileLang DSL, the standalone mHC paper, and the Muon details. Closed labs treat parallelism strategies, optimizer choices, and kernel-level tricks as trade secrets. DeepSeek published the recipe.

The open question is how much of frontier model skill is compute-bound versus idea-bound. V4 is the strongest data point yet that the gap can close with engineering, even when the compute side is shorter by 10x. With MIT-licensed weights, every result here is reproducible. The published kernels are reusable in other training stacks today.

Alibaba’s Qwen3.6-35B-A3B, a 35B-total Apache release , is another April-2026 data point in the same trend: 3B active parameters out of 35B, Apache 2.0, and a 73.4 score on SWE-bench Verified. MiniMax’s M2.7 fits the same picture from the reasoning angle : 230B total with 10B active per token, and SWE-bench Verified at 78.0%. For the consumer-scale flagships in that same wave, our breakdown of the three big open-weight releases of 2026 sorts out which one to deploy.

FAQ

How much VRAM do I need to self-host DeepSeek V4 Pro?

What is the difference between V4 Pro Max and V4 Pro?

<think> and </think> tokens in the chat template.Why does mHC use 20 Sinkhorn-Knopp iterations and not fewer?

How does the 1M context window actually work in practice?

Is V4 better than Claude Opus 4.6 or GPT 5.4?

Botmonster Tech

Botmonster Tech