What X and Reddit Users Are Saying about Claude Opus 4.7

Claude Opus 4.7 landed on April 16, 2026, and after the first 48 hours on X and Reddit, the verdict is net-positive but heavily qualified. Power users are calling it state-of-the-art for agentic coding, long refactors, and the viral new Claude Design tool. The loudest complaints cluster around runaway token burn (roughly 1.5-3x more expensive in practice than 4.6), an “ambiguity tax” where the model no longer silently rescues vague prompts, and confidently broken output on marathon runs. Users who prompt like they are writing a spec are getting enormous leverage out of it. Users who prompt the way they used to prompt 4.6 are burning through their usage caps before lunch.

This is a community-reception snapshot as of April 18, 2026, not an independent benchmark study. The goal is to compress two days of timeline noise so you know what to try, what to avoid, and what to wait out.

What Actually Changed Between Opus 4.6 and Opus 4.7

Before getting to the discourse, a short anchor on capabilities. Anthropic pitches Opus 4.7 as its most capable vision and reasoning model to date, aimed at long-running, agentic work rather than quick chat turns. The official framing is in the Claude Opus 4.7 launch post on Anthropic's news page.

The headline benchmark moves, which are being quoted in almost every reaction thread:

| Metric | Opus 4.6 | Opus 4.7 |
|---|---|---|
| SWE-bench Verified | 80.8% | 87.6% |
| SWE-bench Pro | 53.4% | 64.3% |
| SWE-bench Multilingual | 77.8% | 80.5% |
| OfficeQA Pro | 57.1% | 80.6% |
| Vending-Bench 2 (net) | $8,018 | $10,937 |
| Computer-use visual acuity | 54.5% | 98.5% |
| Max image resolution | smaller | 2,576 px long edge |

New capabilities making the rounds:

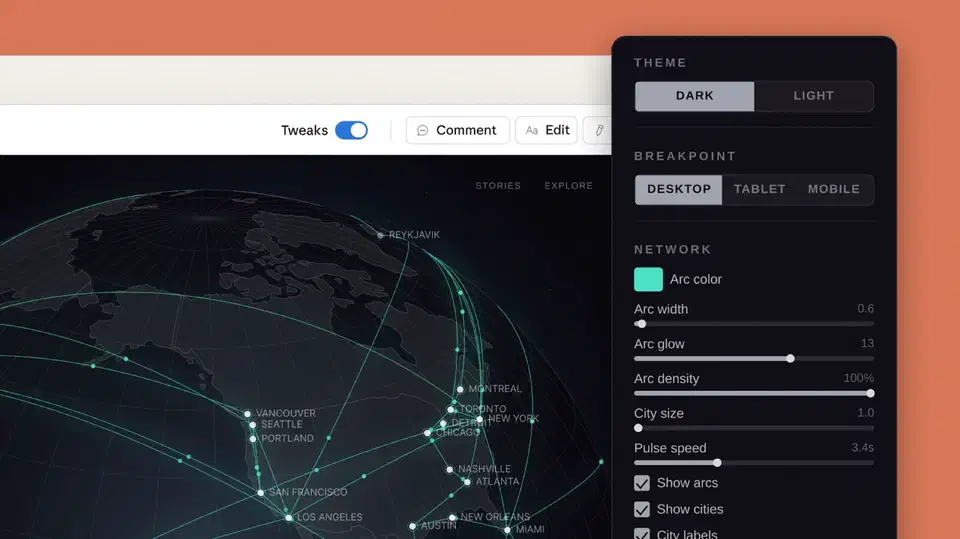

- Claude Design, an Anthropic Labs product that generates polished prototypes, slides, and one-pagers directly from chat.

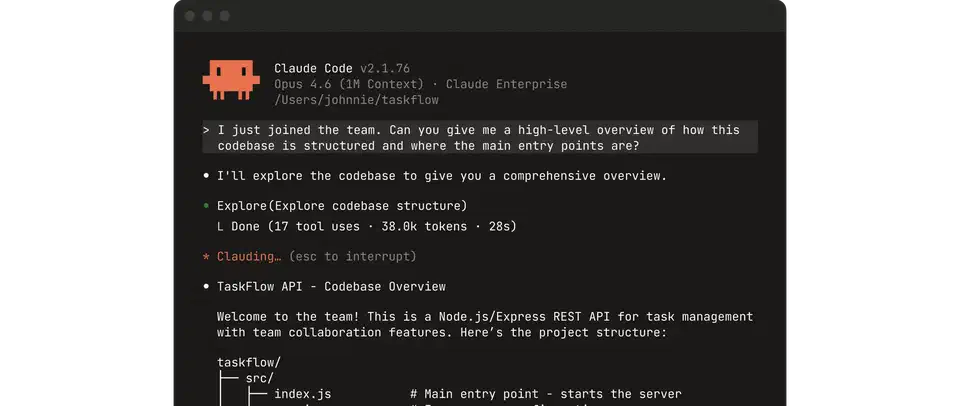

- Stronger self-verifying behavior on long agentic tasks, including a new /ultrareview command inside Claude Code.

- Higher-resolution vision (roughly 3.75 megapixels) and a large jump in computer-use accuracy.

- Gradual rollout across Pro, Max, Team, and Enterprise plans, plus third-party surfaces like GitHub Copilot, Cursor, and Claude for Word.

- Pricing held flat at $5 per million input tokens and $25 per million output tokens.

One design decision sits underneath almost every reaction thread. Anthropic itself calls it out in the migration guide: Opus 4.7 follows instructions much more literally than 4.6, which means prompts that worked before can now produce unexpected results. That single shift from “helpful assistant” toward “precise operator” is the root of most of the 4.7 discourse, both the praise and the complaints.

The Dominant Positive Takes

The hype is real, but it breaks into a few separable threads that together explain why power users are upgrading plans instead of rolling back to 4.6.

On hard problems, the capability jump is genuine. Reddit threads in r/ClaudeAI and r/LocalLLaMA are full of agentic CAD runs, multi-file refactors across large monorepos, and one-shot architectural plans that used to need three or four conversations to arrive at. Users call out how much more introspective and verbose 4.7 is when the task actually warrants it, and how often it self-corrects mid-run instead of charging ahead into obvious failure.

On productivity, the posts getting the most traction are about compounding effects rather than single wins. Cross-session continuity, “vibe coding” runs that produce working MVPs, and one-shot prototypes are driving a wave of “I shipped a SaaS in one Claude Design chat” posts. Startup founder Jeremy Howard described 4.7 as “the first model that ‘gets’ what I’m doing when I’m working,” which has been quote-tweeted hundreds of times. Y Combinator’s Garry Tan and Cursor designer Ryo Lu publicly endorsed it as their new daily driver.

Then there is Claude Design itself. Demos framed around “killing Figma” or “designers in trouble” are the single biggest organic driver of timeline reach this week. Whether or not that framing holds up past week two, the demos are pulling non-developers into the Claude conversation for the first time since Claude 3.

Even the failures are going viral. The canonical quote making the rounds is “68 minutes, millions of tokens, app broken… but god it was beautiful.” Watching an Opus 4.7 run has become content in its own right, separate from whether the artifact at the end works.

The Dominant Complaints: Token Burn, the Ambiguity Tax, and Confidently Broken Output

These three complaints dominate every reply thread, and they are connected. The model does more work per turn, which costs more tokens, and when it silently does the wrong work, the failures are more expensive.

Token burn is the number-one complaint by volume. Users report session caps burning down in minutes instead of hours. One X user claimed Claude Pro subscribers could ask only three questions before hitting their limit on launch day. Another noted that GitHub Copilot was charging a 7.5x premium for Opus 4.7 through the end of April. Independent measurements of the new tokenizer put typical input cost at 1.0-1.35x versus 4.6, with one test on technical docs hitting 1.47x and a real CLAUDE.md file at 1.45x. Combine the tokenizer shift with longer agentic runs and heavier tool use, and the effective per-task cost lands in the 1.5-3x range that users report.

Anthropic’s Boris Cherny acknowledged the pressure and raised subscriber rate limits to compensate, and a wave of open-source “token optimizer” repos spun up overnight. If the cost pressure stings, prompt caching helps: reusing a cached context prefix can recover up to 90% of repeated-prefix costs on long agentic runs. Some users went further and switched to open weights, where MiniMax M2.7 lands close to Opus on coding at roughly a tenth of the token cost. Alibaba’s Qwen3.6-35B-A3B, an open-weight rival, pushes the same trade further, matching Opus on the pelican-SVG smoke test while running locally on a single laptop.
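To see how the tokenizer shift and prompt caching interact, here is a minimal Python cost sketch. It uses the $5 input and $25 output per-million list prices quoted in this article. The cache multipliers (1.25x the input price to write a prefix, 0.1x to read it back) are assumptions based on Anthropic's published prompt-caching rates at the time of writing and should be checked against current pricing. The run shape is invented for illustration.

```python
from dataclasses import dataclass

# List prices quoted in the article, in USD per million tokens.
INPUT_PRICE_PER_M = 5.00
OUTPUT_PRICE_PER_M = 25.00

# Assumed prompt-caching multipliers on the input price (5-minute cache);
# verify against Anthropic's current pricing before relying on them.
CACHE_WRITE_MULT = 1.25
CACHE_READ_MULT = 0.10


@dataclass
class RunShape:
    input_tokens: int              # input tokens per turn, counted on the 4.6 tokenizer
    output_tokens: int             # output tokens per turn
    turns: int                     # number of model calls in the agentic run
    cached_prefix_tokens: int = 0  # stable prefix (system prompt, CLAUDE.md) inside input_tokens


def run_cost(shape: RunShape, tokenizer_mult: float = 1.0, use_cache: bool = False) -> float:
    """Estimate the USD cost of one run.

    tokenizer_mult inflates input token counts only, matching the article's
    input-side measurements; output-side inflation is ignored in this sketch.
    """
    in_price = INPUT_PRICE_PER_M / 1_000_000
    out_price = OUTPUT_PRICE_PER_M / 1_000_000

    prefix = shape.cached_prefix_tokens * tokenizer_mult
    fresh = (shape.input_tokens - shape.cached_prefix_tokens) * tokenizer_mult

    if use_cache and shape.turns > 0:
        # The first turn writes the prefix to the cache; every later turn reads it.
        prefix_cost = prefix * in_price * (CACHE_WRITE_MULT + CACHE_READ_MULT * (shape.turns - 1))
    else:
        # Without caching, the prefix is billed at full input price on every turn.
        prefix_cost = prefix * in_price * shape.turns

    fresh_cost = fresh * in_price * shape.turns
    output_cost = shape.output_tokens * out_price * shape.turns
    return prefix_cost + fresh_cost + output_cost


if __name__ == "__main__":
    run = RunShape(input_tokens=50_000, output_tokens=4_000, turns=40, cached_prefix_tokens=40_000)
    print(f"4.6-style baseline:      ${run_cost(run):.2f}")
    print(f"1.35x tokenizer:         ${run_cost(run, tokenizer_mult=1.35):.2f}")
    print(f"1.35x tokenizer + cache: ${run_cost(run, tokenizer_mult=1.35, use_cache=True):.2f}")
```

With the made-up run above, the 1.35x tokenizer lifts cost from $14.00 to $17.50, and caching the 40,000-token prefix pulls it back to about $8.09. The sketch ignores output-side tokenizer inflation and cache expiry, so real spend will usually be higher.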

The “ambiguity tax” is the second complaint and the one worth slowing down for. The framing started as a satirical X thread and then caught fire: Opus 4.6 silently rewrote bad prompts for you for free, and 4.7 now makes you pay for the rewrite in extra tokens, or forces you to prompt more precisely up front. Two honest readings coexist:

- Fans see honest behavior. 4.7 rewards users who treat prompts like specs, and the extra output is the engineering tax you were always implicitly paying in quality. “Yeah I’ll stick to 4.6 for now” is a confession that you were getting a hidden subsidy.

- Critics see gatekeeping. Casual subscribers are paying Pro prices for an effective downgrade, and “you need to prompt better” is revenue optimization wearing a capability hat.

Both readings have merit, and the more interesting point is that prompt quality is now being discussed as a pricing lever, not only a quality lever. That framing will outlive the 4.7 launch cycle.

The third complaint is the gap between impressive process and broken output. The canonical viral example is a 68-minute, multi-million-token refactor that finished confidently and produced a completely broken app. A community playbook is emerging in the replies:

- Break tasks into small, reviewable pieces instead of one autonomous marathon.

- Use Opus 4.7 for planning and hard reasoning, and let Sonnet do the bulk of raw coding.

- Insert explicit “review this, make no mistakes” verification checkpoints between phases.

- Never treat tone as a reliability signal. 4.7 is unusually confident when it is wrong.
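The community playbook above maps onto a simple harness loop: small steps, a checkpoint after each, and a hard stop on the first failure. This is a minimal Python sketch, not any particular tool's API. `execute` and `verify` are hypothetical hooks you would wire to your own model calls and checks, for example Opus 4.7 for the planning step and Sonnet for the rest.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Step:
    name: str
    instructions: str


@dataclass
class RunLog:
    completed: List[str] = field(default_factory=list)
    failed_at: Optional[str] = None


def run_in_chunks(
    steps: List[Step],
    execute: Callable[[Step], str],
    verify: Callable[[Step, str], bool],
) -> RunLog:
    """Run one small step at a time and halt at the first failed checkpoint.

    execute: hypothetical hook that sends a single step to the coding model.
    verify:  hypothetical hook for the checkpoint (tests, a review prompt, or a human).
    """
    log = RunLog()
    for step in steps:
        output = execute(step)
        if not verify(step, output):
            # Stop instead of letting later steps build on a broken one.
            log.failed_at = step.name
            return log
        log.completed.append(step.name)
    return log


if __name__ == "__main__":
    plan = [
        Step("plan", "Write the migration plan; no code."),
        Step("schema", "Apply only the schema changes from the plan."),
        Step("callers", "Update call sites for the new schema."),
    ]
    # Stand-in hooks: pretend the 'callers' step fails its tests.
    result = run_in_chunks(
        plan,
        execute=lambda s: f"diff for {s.name}",
        verify=lambda s, out: s.name != "callers",
    )
    print(result)
```

Stopping at the first failed checkpoint is the point of the design: a broken step costs one chunk of tokens instead of a 68-minute marathon.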

A small number of users have documented specific regressions worth knowing about: Opus 4.7 claiming “strawberry” has two Ps, admitting it did not cross-reference because it was “being lazy,” and on a few occasions rewriting résumés with invented schools or last names. One Reddit post titled “Claude Opus 4.7 is a serious regression, not an upgrade” cleared 2,300 upvotes in the first 24 hours. These reports are outliers against the volume of positive posts, but they shape how cautious new users feel on their first run.

All three complaints share one root cause: the “operator, not assistant” design choice. That choice is simultaneously what makes 4.7 strong on hard problems and what makes it blow through caps on sloppy ones.

The Quieter Threads: Reliability, Sonnet Routing, and Strategy Memes

Beyond the top three narratives, a few smaller conversations tell you where the power-user meta is heading next.

Reliability is bimodal. 4.7 is excellent with constrained, well-specified prompts and frustrating with vague ones. Several widely shared developer threads recommend a two-model routing pattern: Sonnet as the default, Opus 4.7 on escalation. Single-model workflows are turning into a power-user anti-pattern. If you have been writing your own Claude Code skills, this is the week to revisit them and decide which ones need sharper instructions to behave on 4.7 the way they did on 4.6.
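A two-model router can start as a few explicit rules. The sketch below is hypothetical throughout: the model labels are placeholders rather than real API model IDs, and the escalation thresholds (more than three files, two failed attempts, any planning step) are illustrative defaults to tune against your own workload.

```python
from dataclasses import dataclass

# Placeholder labels, not real API model IDs.
DEFAULT_MODEL = "sonnet"
ESCALATION_MODEL = "opus-4.7"


@dataclass
class Task:
    description: str
    files_touched: int = 1
    needs_planning: bool = False   # architecture, migrations, multi-step plans
    prior_failures: int = 0        # failed attempts on the default model


def choose_model(
    task: Task,
    max_files_for_default: int = 3,
    failures_before_escalation: int = 2,
) -> str:
    """Route to Sonnet by default; escalate to Opus 4.7 only on explicit signals."""
    if task.needs_planning:
        return ESCALATION_MODEL
    if task.files_touched > max_files_for_default:
        return ESCALATION_MODEL
    if task.prior_failures >= failures_before_escalation:
        return ESCALATION_MODEL
    return DEFAULT_MODEL


if __name__ == "__main__":
    for t in [
        Task("rename a helper"),
        Task("refactor auth across services", files_touched=14),
        Task("fix a flaky test", prior_failures=2),
        Task("design the data migration", needs_planning=True),
    ]:
        print(f"{choose_model(t):>9}  {t.description}")
```

Keeping the rules explicit makes escalations easy to log and audit, which matters when every Opus call is the expensive path.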

A small “it’s actually bad” contingent is also visible. Its complaints include early refusals on specific prompts, weak runs in a few narrow domains, and a perception that the model turns “combative” when users push back. These are outliers against the hype, but worth naming so this snapshot does not read one-sided.

The business-strategy thread is unusually loud. Running jokes about Anthropic “finally becoming profitable” by making the model more powerful while taxing vague usage sit alongside serious commentary about compute costs and pricing trajectory. Users see the pricing lever even when Anthropic is not pulling it directly.

Rollout is gradual. Not every Pro or Max account has full access yet, which is actively fueling FOMO posts and secondhand summaries. There is a visible split between “I’ve been using it for 48 hours” takes and “still waiting” takes, and the two groups are reading very different feeds.

Adjacent ecosystems are weighing in. Claude Code skill authors, Cursor users, GitHub Copilot users, and Zed users are all comparing notes on how 4.7 behaves inside existing agent harnesses. The rough consensus: great for long tasks, mixed for tight iterative loops. Cursor users in particular report that the model pairs well with their existing rules files, but chews through usage fast without them. For a head-to-head breakdown of how these tools differ on agentic tasks, see our deeper analysis.

Meme Economy and Vibes: The Unofficial Launch Narrative

Memes are not a sideshow here. They shape how the model is perceived and adopted, especially by users who have not tried it yet. The five formats to know:

- Designer-displacement humor, almost entirely aimed at the Claude Design demos.

- Token-consumption roasts, usually framed as “ran one prompt, here’s the bill” with a screenshot of a blown cap.

- “Anthropic cooked” or “Anthropic is cooking” praise posts, paired with impressive agentic demo clips.

- Sarcastic “best model released today” bits that acknowledge the frontier-model release cadence itself. 4.7 launched into an already crowded week.

- Meta-commentary jokes about 4.7 being “too powerful for casual use,” which double as informal positioning for the model.

These vibes matter because they set the default read for anyone arriving late. A newcomer skimming timelines today comes away thinking “powerful but expensive, and if you prompt badly you deserve the bill.” That framing will stick whether or not the next point release changes the economics.

Reading Between the Lines: What This Reception Actually Signals

Step back from the 48-hour news cycle and a few shifts become visible in the Opus 4.7 reception.

The market is splitting by prompting skill. Precise-prompt power users get roughly 10x leverage from 4.7 compared to 4.6. Casual users get sticker shock. Plan-tier segmentation will get louder because of this, and prompt-engineering content will get a second wind after everyone assumed the skill had been commoditized.

Prompt quality is now a pricing lever, not just a quality lever. Teams that invest in prompt specs, reusable skill templates, and prompt linters will extract a disproportionate share of 4.7’s value. Teams that keep treating prompts as disposable one-liners will overpay for the privilege.
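A prompt linter can start very small. Here is a hypothetical Python sketch of a spec template with a lint step. The field names (inputs, outputs, constraints, a definition of done) follow the spec checklist in the practical takeaways of this article; nothing here is an existing tool's format.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptSpec:
    goal: str
    inputs: List[str] = field(default_factory=list)
    outputs: List[str] = field(default_factory=list)
    constraints: List[str] = field(default_factory=list)
    done_when: List[str] = field(default_factory=list)

    def lint(self) -> List[str]:
        """Return a list of problems; an empty list means the spec is complete."""
        problems = []
        if not self.goal.strip():
            problems.append("missing goal")
        for name, items in [
            ("inputs", self.inputs),
            ("outputs", self.outputs),
            ("constraints", self.constraints),
        ]:
            if not items:
                problems.append(f"no {name} listed")
        if not self.done_when:
            problems.append("no definition of done")
        return problems

    def render(self) -> str:
        """Format the spec as plain-text prompt sections."""
        def section(title: str, items: List[str]) -> str:
            return f"{title}:\n" + "\n".join(f"- {item}" for item in items)

        return "\n\n".join([
            f"Goal: {self.goal}",
            section("Inputs", self.inputs),
            section("Outputs", self.outputs),
            section("Constraints", self.constraints),
            section("Done when", self.done_when),
        ])


if __name__ == "__main__":
    vague = PromptSpec(goal="make the users page faster")
    print(vague.lint())

    spec = PromptSpec(
        goal="Add cursor pagination to GET /users",
        inputs=["api/users.py", "tests/test_users.py"],
        outputs=["a single patch touching only those files"],
        constraints=["no new dependencies", "keep the response schema backward compatible"],
        done_when=["existing tests pass", "a new test covers the last page"],
    )
    print(spec.lint())
    print(spec.render())
```

Running the lint before a long Opus 4.7 run is a cheap way to catch the vague prompts that the ambiguity tax punishes.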

Agentic work is leaving the demo stage. The conversation around 4.7 is dominated by long-running, self-verifying runs rather than chat quality. People now reach for Opus for sustained autonomous work, and they judge it by whether those runs finish with a working result.

Model routing is becoming the default pattern. Sonnet for volume, Opus 4.7 for hard escalations. Expect routers, optimizers, and “Opus-safe” agent harnesses to proliferate on GitHub within weeks. Several are already live 48 hours after launch.

Expect rapid follow-ups. Optimizer libraries, prompt-linter skills, and harness wrappers are already appearing. The half-life of “best practice for Opus 4.7” advice will be measured in days for at least the next month.

Practical Takeaways if You Are Actually Using 4.7 This Week

Here is a short action list you can apply today without waiting for the dust to settle.

- Default to Sonnet for routine coding and keep Opus 4.7 for genuinely hard, multi-step, or agentic tasks.

- Chunk long jobs and insert explicit review or verification checkpoints. Do not hand 4.7 a 68-minute marathon and hope.

- Budget tokens the way you budget API calls. Monitor usage early in a run, not at the cap. Set a hard ceiling on your agent harness if it supports one.

- Write prompts like specs, not wishes. State inputs, outputs, constraints, and what “done” looks like. If you cannot describe “done,” you are not ready for an Opus 4.7 run.

- When output looks confident, verify before shipping. 4.7’s tone is not a reliability signal.

- Revisit your Claude Code skill library. Anything that leaned on 4.6’s silent prompt-rescue will need sharper instructions to behave the same on 4.7.

- If you are on Pro or Max, check whether the increased rate limits have reached your account before you decide to upgrade plans. The economics shift a lot in either direction depending on that.
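For the token-budgeting advice in this list, a hard ceiling is easy to enforce inside your own harness even if the tool you use lacks one. This minimal Python sketch assumes you can read per-turn token counts after each call (the Anthropic Messages API reports them in the response's `usage` field as `input_tokens` and `output_tokens`). The class name and the 50% warning threshold are illustrative choices.

```python
class BudgetExceeded(RuntimeError):
    """Raised when an agent run crosses its hard token ceiling."""


class TokenBudget:
    """Tracks token usage for one agent run against a hard ceiling."""

    def __init__(self, ceiling: int, warn_fraction: float = 0.5) -> None:
        if ceiling <= 0:
            raise ValueError("ceiling must be positive")
        if not 0 < warn_fraction <= 1:
            raise ValueError("warn_fraction must be in (0, 1]")
        self.ceiling = ceiling
        self.warn_at = int(ceiling * warn_fraction)
        self.used = 0
        self.warned = False

    def record(self, input_tokens: int, output_tokens: int) -> bool:
        """Add one turn's usage.

        Returns True on the first turn that reaches the warning threshold
        without exceeding the ceiling. Raises BudgetExceeded once usage
        passes the ceiling.
        """
        self.used += input_tokens + output_tokens
        if self.used > self.ceiling:
            raise BudgetExceeded(f"used {self.used:,} of {self.ceiling:,} tokens")
        if not self.warned and self.used >= self.warn_at:
            self.warned = True
            return True
        return False


if __name__ == "__main__":
    budget = TokenBudget(ceiling=2_000_000)
    # Stand-in per-turn counts; in a real harness these come from each response.
    turns = [(180_000, 12_000)] * 12
    try:
        for i, (tokens_in, tokens_out) in enumerate(turns, start=1):
            if budget.record(tokens_in, tokens_out):
                print(f"turn {i}: past half the budget ({budget.used:,} tokens), consider stopping")
    except BudgetExceeded as exc:
        print(f"halting run: {exc}")
```

Raising an exception rather than returning a flag makes the ceiling hard to ignore by accident; catch it in the harness to save state before stopping.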

Opus 4.7 is the most capable model Anthropic has shipped and the least forgiving one. Engineers who treat it like a senior collaborator they are paying by the hour will extract enormous leverage from it. Engineers who treat it like a chatbot will watch it light their usage cap on fire while doing something beautiful and wrong.

Botmonster Tech
