GPT 5.5 Reddit Reception: Goblins and the Cost Backlash

GPT-5.5 launched on April 23, 2026, and two weeks of Reddit reception split along three fault lines that no aggregator roundup captured cleanly. A leaked Codex system prompt forbidding “goblins, gremlins, raccoons, trolls, ogres, pigeons” went viral on r/ChatGPT (856 votes) and r/OpenAI (1.2K votes) before OpenAI’s own post-mortem dropped. Doubled output pricing at $30 per million tokens drew the loudest dissent on r/OpenAI’s launch thread, and a measurable 5.4 holdout faction emerged around hallucination regressions on factual recall workflows. This post is a Reddit-only community-reception snapshot bounded to the first 14 days.

Key Takeaways

- GPT-5.5 launched April 23, 2026, with Pro and Thinking variants; the Instant variant followed May 5.

- Reddit’s defining GPT-5.5 moment was the leaked Codex system prompt forbidding goblins, gremlins, and trolls.

- The doubled output price of $30 per million tokens drew the loudest dissent on r/OpenAI’s launch thread.

- A measurable 5.4 holdout faction exists, citing hallucination regressions on factual recall.

- Reddit praise concentrates on speed, Codex reliability, and agentic coding, not chat quality.

What OpenAI Shipped and Why r/OpenAI Mostly Yawned

GPT-5.5 Thinking and GPT-5.5 Pro shipped to ChatGPT Plus, Pro, Business, and Enterprise on April 23, 2026, with API availability the following day. GPT-5.5 Instant replaced GPT-5.3 Instant as the default ChatGPT model on May 5, 2026. As of May 2026, pricing sits at $5 per million input tokens and $30 per million output tokens, with long-context prompts above 272K input tokens priced at 2x input and 1.5x output. Batch and Flex modes cut prices roughly in half. The headline figure Reddit kept circling: that $30 output rate is twice what GPT-5.4 charged.
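To make the tiered pricing concrete, here is a minimal Python sketch of a per-request cost estimate. It assumes the 2x/1.5x long-context multipliers apply to the whole request once the prompt crosses 272K input tokens, and it models Batch and Flex as a flat 0.5 multiplier because the published guidance is only “roughly in half.” `Rates` and `request_cost` are hypothetical names, not part of any OpenAI SDK.

```python
from dataclasses import dataclass


# Hypothetical helper, not an OpenAI SDK type. Rates are USD per
# million tokens, using the GPT-5.5 list prices quoted above.
@dataclass(frozen=True)
class Rates:
    input_per_m: float
    output_per_m: float


GPT_5_5 = Rates(input_per_m=5.00, output_per_m=30.00)

LONG_CONTEXT_THRESHOLD = 272_000  # input tokens; only prompts strictly above it are surcharged
LONG_INPUT_MULT = 2.0
LONG_OUTPUT_MULT = 1.5


def request_cost(rates: Rates, input_tokens: int, output_tokens: int,
                 discount: float = 1.0) -> float:
    """Estimate the USD cost of a single request.

    Assumption: once the prompt exceeds the threshold, the long-context
    multipliers apply to every token in the request. Batch/Flex are modeled
    as a flat `discount` multiplier (0.5 for "roughly half").
    """
    in_rate, out_rate = rates.input_per_m, rates.output_per_m
    if input_tokens > LONG_CONTEXT_THRESHOLD:
        in_rate *= LONG_INPUT_MULT
        out_rate *= LONG_OUTPUT_MULT
    cost = (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000
    return cost * discount


if __name__ == "__main__":
    print(f"${request_cost(GPT_5_5, 100_000, 10_000):.2f}")  # $0.80
    print(f"${request_cost(GPT_5_5, 300_000, 10_000):.2f}")  # $3.45
    print(f"${request_cost(GPT_5_5, 100_000, 10_000, discount=0.5):.2f}")  # $0.40
```

In this sketch a 300K-token prompt costs $3.45 against $0.80 for a 100K-token one: tripling the input more than quadruples the bill, because crossing the threshold also lifts both per-token rates.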

The benchmark numbers from OpenAI’s GPT-5.5 system card (verified April 2026) anchor the launch: Terminal-Bench 2.0 at 82.7%, FrontierMath (1-3) at 51.7%, and GPQA Diamond at 93.6%. SWE-Bench Pro came in at 58.6%, which u/baccigaloopa flagged in the launch thread (46 votes) as 5.7% worse than Claude Opus 4.7. OpenAI’s introductory announcement framed the model as agentic-first, not chat-first, and that framing is doing a lot of work in how the reception split. For the parallel Reddit and X reception of Anthropic’s flagship the prior month, see the Claude Opus 4.7 reception writeup.

The launch-thread tone is the actual signal. The top comment on Introducing GPT-5.5 | OpenAI (874 votes, 312 comments) is u/bitterbeerbitch sarcastically quoting the announcement:

Laughed a little to this ‘We are releasing GPT-5.5 with our strongest set of safeguards to date […] yay MORE guardrails.’

u/bitterbeerbitch (292 votes)

u/CompileTyne added the incremental-update read:

It seems like an incremental update, not a step change?

u/CompileTyne (67 votes)

And u/Resident_Bell_4457 called the next 24 hours:

Prepare for the GPT 5.5 is nerfed posts in a few hours.

u/Resident_Bell_4457 (36 votes)

All three were right, in their own way.

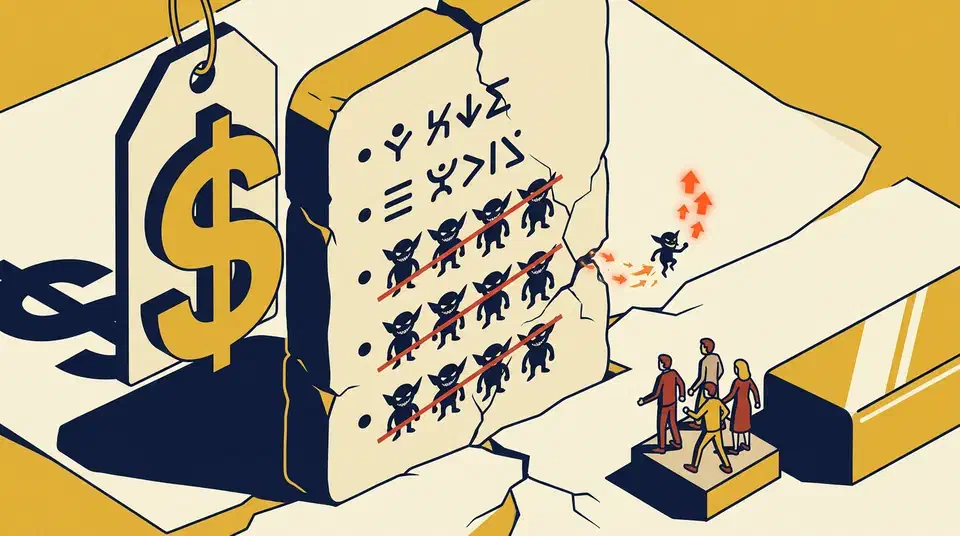

The Goblin System Prompt: Reddit’s Defining GPT-5.5 Story

The single artifact that distinguishes GPT-5.5’s reception from any other 2026 model launch is a leaked Codex system prompt. Reddit users found it, named it, and explained it before OpenAI did. The discovery thread is “why does GPT 5.5 have a restraining order against ‘Raccoons,’ ‘Goblins,’ and ‘Pigeons’?” on r/ChatGPT, with 856 votes and 172 comments. OP u/Worldly_Manner_5273 wrote:

I just saw the full system prompt leak for 5.5 (April 23rd release). Most of it is standard agentic stuff, but Instruction #140 is genuinely insane. It explicitly forbids the model from talking about: ‘goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals.’

u/Worldly_Manner_5273 (OP)

The Reddit-derived explanation arrived faster than OpenAI’s official one. u/Iamblichos supplied the lay account:

I made the mistake of referring to ‘goblin engineering’ in a comment, i.e. shoddily built things that blow up unexpectedly, and since then it has been obsessed with the idea and inserted it into everything. I can see why this would be there.

u/Iamblichos (173 votes)

u/Fluid-Business-7678 compressed the broader mechanism into a single line:

It’s being tainted by internet speak, essentially.

u/Fluid-Business-7678 (388 votes)

u/RazorBest gave the metaphor that stuck:

It’s like a kid that hears a swear word once, and gets obsessed over it.

u/RazorBest (43 votes)

Reddit users also drew the cross-model contrast unprompted:

Chatgpt has a tendency to overuse gobelins or gremlins far far more than humans ever would in completely unrelated context. Other llms dont do that.

u/Silver-Chipmunk7744 (36 votes)

That cross-model contrast is also the part of the story that should worry RL teams beyond OpenAI: a single tainted persona fed back into reward modeling, and the artifact leaked into everyday Codex output.

The follow-up meme thread, “GPT-6 Confirmed” on r/OpenAI (1.2K votes), is what dragged the goblin story out of r/ChatGPT and into the launch-week canon for r/OpenAI:

Not the goblins.

u/EveryCrime (107 votes)

The thread’s most-upvoted caption was u/crazy_goat’s “Make no mistakes” (282 votes), a play on the four-times-repeated nature of the ban. The cross-sub spread is its own evidence of escape velocity: r/196’s “ChatGPT 5.5 system prompt” thread and r/NonPoliticalTwitter’s “AIs are weird lil alien minds” document the goblin story crossing into general-internet subreddits. AI launch artifacts rarely escape their containment subreddits. This one did.

OpenAI’s response is best understood as confirmation rather than discovery: the official “Where the goblins came from” post-mortem dropped only after Reddit had already pieced together the story. The fix repeated the ban four times in the Codex system prompt, removed the Nerdy persona that had been the conduit, and manually filtered the offending training data. A small r/OpenAI thread titled “OpenAI really really really wants GPT 5.5 to stop randomly talking about gremlins and goblins” is the post that flagged this as a substantive RL training failure mode rather than a meme. The goblin episode is now the most public worked example of a reward-hacking shortcut leaking from one persona into the model’s default behavior.

The Pricing Backlash: $30 per Million Output Tokens

The second-loudest fault line on Reddit comes almost entirely from comments on the official launch announcement thread. The second-highest comment on that thread captured the gut reaction:

$30 per million output? I thought we were ‘democratising intelligence’?!

u/Strange-Dare-3698 (134 votes)

The downstream cost implication, which most users missed until u/fivetoedslothbear flagged it, turned sticker shock into a quantified Codex-quota warning:

The model costs $30/million output tokens, which is twice what 5.4 costs. Codex usage and credits directly map to API costs, so expect about half as much usage as you got from 5.4.

u/fivetoedslothbear (15 votes, nested reply)

The structural pricing facts behind the complaint, confirmed by TechCrunch’s launch coverage (verified April 2026): $5 input, $30 output, with a 2x input and 1.5x output multiplier on prompts above 272K input tokens. The combined effect on long agentic runs is the part Reddit is loudest about, not the headline rate alone. Take a 500K-token-per-day Codex workload split 1:2 input-to-output (about 167K input and 333K output tokens). At 5.5’s rates it costs about $10.83 per day before the long-context surcharge kicks in; at GPT-5.4’s rates ($15 output and, assuming its input rate was likewise half of 5.5’s, $2.50 input) the same volume cost about $5.42. Same tokens, double the bill: that is the math behind u/fivetoedslothbear’s “half as much usage” warning. If your agent harness reuses long system prompts across runs, prompt caching can cut up to 90% of the input cost on repeated prefixes, which softens the sting, though it does nothing for the doubled output rate.
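A minimal Python sketch of that daily comparison, under stated assumptions: the GPT-5.5 rates are the launch prices, the GPT-5.4 output rate follows from “twice what 5.4 costs,” and the $2.50 GPT-5.4 input rate is an assumption. The 90% cache discount is the “up to” figure, applied to a hypothetical cached share of input tokens, and the long-context surcharge is ignored. `daily_cost` is an illustrative helper, not an API.

```python
# USD per million tokens. The gpt-5.4 input rate is an ASSUMPTION
# (half of 5.5's); its output rate follows from "twice what 5.4 costs".
PRICES = {
    "gpt-5.4": {"input": 2.50, "output": 15.00},
    "gpt-5.5": {"input": 5.00, "output": 30.00},
}
CACHED_INPUT_DISCOUNT = 0.90  # the "up to 90%" figure for repeated prefixes


def daily_cost(model: str, input_tokens: int, output_tokens: int,
               cached_fraction: float = 0.0) -> float:
    """USD cost of a day's traffic; ignores the >272K long-context surcharge.

    cached_fraction is the share of input tokens served from the prompt cache.
    """
    p = PRICES[model]
    cached = input_tokens * cached_fraction
    uncached = input_tokens - cached
    input_cost = (uncached + cached * (1 - CACHED_INPUT_DISCOUNT)) * p["input"]
    output_cost = output_tokens * p["output"]
    return (input_cost + output_cost) / 1_000_000


# 500K tokens per day at a 1:2 input-to-output ratio.
IN_TOK, OUT_TOK = 166_667, 333_333

if __name__ == "__main__":
    for model in PRICES:
        print(f"{model}: ${daily_cost(model, IN_TOK, OUT_TOK):.2f}/day")
    # gpt-5.4: $5.42/day
    # gpt-5.5: $10.83/day
    cached = daily_cost("gpt-5.5", IN_TOK, OUT_TOK, cached_fraction=0.8)
    print(f"gpt-5.5 with 80% cached input: ${cached:.2f}/day")  # $10.23/day
```

Caching 80% of the input only trims the 5.5 bill from about $10.83 to $10.23 in this sketch, because output tokens dominate a 1:2 workload.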

The most useful skeptical comment for routing decisions is quieter but specific:

Seems to be getting similar scores on benchmark with thinking set to medium vs 5.4 set to extra high. Considering all the capacity, throttling and limit discussions out there, it’s an interesting update if practical use confirms this.

u/skidanscours (34 votes)

It captures the “is this even an upgrade for my workload?” sentiment that drives the holdout faction in the next section.

The pricing-backlash energy has somewhere to go on r/OpenAI, where the thread “Anthropic just passed OpenAI in valuation and revenue” sits at 686 votes six days in. Reddit is reading “OpenAI raising output prices” and “Anthropic passing OpenAI in revenue” as connected events, not separate ones. Whether that read holds up is a question for the next earnings cycle.

The 5.4 Holdouts and the Hallucination Regression

This is the section that matters most for anyone actually deciding whether to upgrade. Prior-version retention used to be a rounding error in frontier-model launches. With GPT-5.5, it isn’t. The headline complaint thread is “GPT 5.5 pro is hallucinating like crazy” on r/OpenAI, with 42 votes and 54 comments at 11 days old. The smaller numbers are themselves signal: the hallucination complaint is real but distributed across many small threads rather than one big one. Adjacent threads include “gpt 5.5 is good but I’m having hallucination/context issues” (10 votes, 16 comments), “First impressions using GPT 5.5 for video game scripting” (28 votes, 12 comments) for use-case-specific failure patterns, and “Astonishing Contradiction in OpenAI’s 5.5 System Card” (6 votes, 5 comments) for the documentation-side complaint.

The Reddit-derived rule of thumb for the upgrade decision lives in u/skidanscours’s 34-vote launch-thread comment: 5.5 medium is roughly 5.4 extra-high on benchmarks. If you were already running 5.4 at high reasoning effort and getting acceptable accuracy, the upgrade may be a sidegrade with worse pricing. u/Resident_Bell_4457’s 36-vote prediction in the same thread (“Prepare for the GPT 5.5 is nerfed posts in a few hours”) was right within hours, and the recurring “is X model nerfed?” pattern is its own subreddit meta-genre worth naming. Every model release for the last 18 months has produced one.

The single best evidence that 5.4 retention is real comes from r/singularity, which is not the kind of subreddit that usually clings to old models. The thread “Chat GPT 5.4 solved a 60+ years unsolved erdos problem” hit 2.5K votes 11 days ago, after the 5.5 launch. Two weeks into the launch window, r/singularity is celebrating 5.4, not 5.5. That’s the cleanest cross-sub holdout signal available. OpenAI has acknowledged the pattern indirectly: the GPT-5.5 Instant page (verified May 2026) quotes a 52.5% reduction in hallucinated claims on high-stakes prompts versus 5.3 Instant, which reads as a response to exactly this complaint pattern. Whether that improvement carries over to the Thinking variant is a question OpenAI hasn’t answered yet.

These hallucination complaints share one root cause: the agentic-first design choice that makes GPT-5.5 strong on Terminal-Bench and weak on factual recall. The model was tuned for tool orchestration and shell loops, not for calibrated confidence on long-tail facts. That is not a bug, but it is a routing decision the user has to make.

What Reddit Actually Praises: Speed, Codex, and Vibes

Reddit’s praise for GPT-5.5 is real but narrower than the launch hype suggested. It concentrates on speed, agentic Codex workflows, and conversational feel rather than “smarter chatbot” or “better at chat” framings. The positive-pulse thread is “thoughts on GPT 5.5” on r/OpenAI, with 1.6K votes and 63 comments, the highest-engagement net-positive thread for the launch.

The most-cited positive comment, quoted here verbatim, is an operator-level take that cuts against OpenAI’s “agentic super app” launch pitch: fast, slightly better, no fanfare.

Very early testing. It feels better to talk to than 5.4 and much, much faster. It doesn’t seem to think as much but the answer quality is the same as 5.4. I like it a lot.

u/Goofball-John-McGee (24 votes)

Meme-pulse evidence comes from “ChatGPT 5.5 🔥🔥🔥” (1.7K votes, 194 comments): mostly enthusiasm plus “watch it overthink” jokes. Its upvote volume is the most reliable proxy for raw Reddit enthusiasm at launch. For context on where extended thinking sits in the agentic stack, the three-tiers AI pair-programming post covers the autocomplete-to-autonomous-agents gradient that GPT-5.5’s positioning is targeting.

The agentic-coding praise pattern, reconstructed from comment-level signal rather than aggregator quote bouquets, is specific. Across multiple threads, Reddit users praise the Codex CLI experience for cleaner tool orchestration, fewer narrative interruptions between steps, and “one-shot” full-stack bug fixes. For where Codex sits relative to Claude Code, Cursor, and Copilot, the Claude Code vs Cursor vs GitHub Copilot comparison covers the baseline. What Reddit does not praise GPT-5.5 for: chat quality, creative writing, voice mode (next section), and “intelligence” in the chatbot-flagship sense. The praise is narrow on purpose.

The Voice Mode Complaint Nobody Expected

One of the most-upvoted top-level comments on the “thoughts on GPT 5.5” thread isn’t about reasoning, pricing, or coding. It is about voice mode. The second-highest top-level comment on that thread, with a confirming “have better voice mode” reply directly under it, reads:

All i want is improved voice mode 💔

u/Left_Chicken_7519 (69 votes)

The pattern this represents is more interesting than the complaint itself. Launch-day Reddit threads consistently surface adjacent product complaints that have nothing to do with the launch. Voice mode is the clearest 2026 example. The signal for readers is that OpenAI’s stated launch focus and Reddit’s actual day-one priorities are not the same. Versioning fatigue runs in the same vein:

Quiet. Eventually they’re going to start naming the new versions ‘1.0’ and ‘X’ and other dumb shit like that.

u/ProbablyBanksy (17 votes)

It is a small but recurring meta-complaint: the model-naming cadence has accelerated past usefulness. An aggregator roundup of the launch would miss this kind of comment entirely, which is why the Reddit-only framing earns its keep here.

Reading the Subreddit Map

Beyond the top three narratives, the subreddit-by-subreddit reception tells you where GPT-5.5 sits in different community contexts. The compact rule of thumb is: r/OpenAI for launch hype, r/ChatGPT for user-investigation chaos, r/ChatGPTPro for operator workflows, r/LocalLLaMA for “what’s the cheaper alternative.”

| Subreddit | Mood | What’s Worth Watching |
|---|---|---|
| r/OpenAI | Lukewarm-to-positive, sarcastic on the official launch thread | Launch-thread reception, memes, the “GPT-6 Confirmed” goblin joke |
| r/ChatGPT | User-investigation chaos | Hosted the leaked-system-prompt thread; set the goblin meme alight before r/OpenAI picked it up |
| r/ChatGPTPro | Operator-level pragmatism | Smaller engagement counts but higher signal-to-noise on production workflows |
| r/singularity | Pivoting to broader AGI-takeoff framing | Erdős-problem 5.4 thread (2.5K votes) is post-launch and still about 5.4 |
| r/LocalLLaMA | Comparative landscape | Discussing GPT-5.5 alongside DeepSeek V4, Qwen 3.6-35B-A3B, GLM 5.1 |
| r/MachineLearning | Quiet | Frontier launches without genuinely novel architecture details no longer earn attention |

The cross-sub spread point matters separately. The goblin story’s appearance on r/196 and r/NonPoliticalTwitter is the rare 2026 AI-launch artifact that escaped containment. That kind of escape velocity used to be reserved for ChatGPT’s original rollout and the GPT-4o voice demo. Two weeks into a mid-cycle release, it shouldn’t be happening. That it did is its own evidence about how strong the meme is.

Practical Takeaways if You’re Choosing Between 5.4 and 5.5

Convert the Reddit discourse into concrete routing advice. Use GPT-5.5 for execution-heavy agentic Codex workflows, the Terminal-Bench-style shell work where its speed matters; the “feels better to talk to than 5.4 and much, much faster” pattern u/Goofball-John-McGee described matches daily Codex driving well. Stay on GPT-5.4 if your dominant workload is factual recall, citation-heavy research, or any task where “5.5 medium ≈ 5.4 extra-high” feels like a sidegrade. Reddit’s holdout faction is real on these workloads, and the cross-sub evidence (an Erdős-problem celebration on r/singularity after the 5.5 launch) backs that read.
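The routing advice can be reduced to a small decision function. This is a hypothetical sketch: the task categories, the `gpt-5.4`/`gpt-5.5` model IDs, and the reasoning-effort strings are illustrative assumptions, not a documented OpenAI taxonomy. It defaults to 5.4, keeps factual-recall work there even when latency matters, and escalates agentic execution to 5.5 at medium effort per the “5.5 medium ≈ 5.4 extra-high” rule of thumb.

```python
from enum import Enum, auto


class Task(Enum):
    # Hypothetical categories distilled from the Reddit threads.
    AGENTIC_EXECUTION = auto()  # multi-step shell loops, repo-wide refactors
    FACTUAL_RECALL = auto()     # research, citation-heavy answers
    GENERAL = auto()


def route(task: Task, speed_is_binding: bool = False) -> tuple[str, str]:
    """Return (model_id, reasoning_effort) following the rule of thumb above.

    Model IDs and effort strings are illustrative assumptions.
    """
    if task is Task.FACTUAL_RECALL:
        # Holdout case: stay on 5.4 even when latency matters.
        return ("gpt-5.4", "high")
    if task is Task.AGENTIC_EXECUTION or speed_is_binding:
        # "5.5 medium ≈ 5.4 extra-high", so medium effort is the starting point.
        return ("gpt-5.5", "medium")
    # Default-route everything else to 5.4.
    return ("gpt-5.4", "high")


if __name__ == "__main__":
    for task in Task:
        print(task.name, route(task))
```

Checking factual recall first is the deliberate design choice here: speed never overrides the holdout case.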

Watch your Codex credit burn rate the first week regardless of which variant you route to. u/fivetoedslothbear’s “expect about half as much usage as you got from 5.4” warning is the most important Reddit-derived budget signal of the launch. Set a hard ceiling on your harness if it supports one. And treat GPT-5.5’s tone as decoupled from its accuracy on factual tasks: Reddit’s hallucination threads consistently show that confident-but-wrong answers are the launch’s most-reported failure mode, especially under the Pro variant.
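If your harness has no built-in ceiling, a client-side guard is easy to bolt on. The sketch below is hypothetical and is not a Codex or OpenAI feature: `SpendGuard` and `BudgetExceeded` are illustrative names, each call is priced at the GPT-5.5 list rates quoted earlier, the worst case is reserved using the call’s maximum output tokens, and a call that would cross the ceiling raises before it is made.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a call would push estimated spend past the ceiling."""


class SpendGuard:
    """Hypothetical client-side spend ceiling for an agent harness.

    Accumulates the estimated worst-case cost of each call (USD) and
    refuses any call that would cross `ceiling_usd`.
    """

    def __init__(self, ceiling_usd: float, input_per_m: float = 5.00,
                 output_per_m: float = 30.00) -> None:
        self.ceiling_usd = ceiling_usd
        self.input_per_m = input_per_m      # USD per million input tokens
        self.output_per_m = output_per_m    # USD per million output tokens
        self.spent_usd = 0.0

    def estimate(self, input_tokens: int, max_output_tokens: int) -> float:
        return (input_tokens * self.input_per_m
                + max_output_tokens * self.output_per_m) / 1_000_000

    def charge(self, input_tokens: int, max_output_tokens: int) -> float:
        """Reserve the worst-case cost of a call, or raise if it won't fit."""
        cost = self.estimate(input_tokens, max_output_tokens)
        if self.spent_usd + cost > self.ceiling_usd:
            remaining = self.ceiling_usd - self.spent_usd
            raise BudgetExceeded(
                f"call needs ${cost:.2f}, only ${remaining:.2f} left"
            )
        self.spent_usd += cost
        return cost


if __name__ == "__main__":
    guard = SpendGuard(ceiling_usd=1.00)
    print(f"${guard.charge(100_000, 10_000):.2f}")  # $0.80 reserved
    try:
        guard.charge(100_000, 10_000)
    except BudgetExceeded as err:
        print(err)  # call needs $0.80, only $0.20 left
```

Reserving against the output cap rather than actual usage overestimates spend, which is the safe direction for a hard ceiling; a production harness would reconcile against the usage figures each API response reports.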

From my own first-week Codex routing: I moved repository-wide refactors and multi-step shell loops to 5.5 Thinking on day three after the speed gain on long agent runs paid for itself within an afternoon. I held research-style prompts and any task where I needed citations on 5.4. The per-run token cost on 5.5 ran roughly 1.7x my 5.4 baseline for equivalent work, slightly under u/fivetoedslothbear’s 2x prediction, because I throttled output verbosity in the system prompt. If I were starting today, I’d default-route to 5.4 and only escalate to 5.5 for tasks where the speed delta is the binding constraint.

This is a community-reception snapshot covering April 23 to May 8, 2026, drawn from primary Reddit threads on r/OpenAI, r/ChatGPT, r/ChatGPTPro, r/singularity, r/LocalLLaMA, and adjacent subs. It is not a benchmark study, and it is deliberately not an X / Twitter roundup. Verify upvote counts and permalinks at read time; both shift.

FAQ

What does Reddit think of GPT-5.5?

What is the GPT-5.5 goblin system prompt?

Where on Reddit is GPT-5.5 being discussed?

Is GPT-5.5 worth the price increase?

Should I stay on GPT-5.4?

Does GPT-5.5 still mention goblins?

Botmonster Tech
