Speed Up Linux Boot by 4-7 Seconds with systemd-analyze

Slow Linux boots rarely come from one big failure. Most of the time, small delays stack up: slow firmware, a bloated initramfs, a wait-online unit blocking the session, or drivers loading early. The good news is modern Linux gives you first-class tools to diagnose this. systemd-analyze is still the best place to start.

This guide gives you a repeatable workflow. You can profile your boot path, find real bottlenecks, and apply safe fixes, even on Secure Boot machines. You will also see commands for Debian/Ubuntu, Arch, and Fedora. The same method works on any distro.

Prerequisites and Distro-Specific Setup

Before you start, check your tools and have a recovery plan. Boot tuning is usually safe. But masking services or rebuilding initramfs can leave a system unbootable if you rush.

Minimum prerequisites:

- You are running a systemd-based distro.

- You have admin access (sudo).

- You can recover from boot issues using a live USB or a known-good fallback boot entry.

- You keep at least one older kernel installed.

- If your root filesystem is on ZFS, take a snapshot first. It gives you an instant rollback path. See the ZFS snapshots guide for setup details.

Install or verify tools by distro family:

| Distro family | Core tools | Optional helpers |
|---|---|---|
| Debian/Ubuntu | sudo apt update && sudo apt install -y systemd systemd-sysv graphviz linux-tools-common | sudo apt install -y initramfs-tools |
| Arch/Manjaro | sudo pacman -Syu --needed systemd graphviz | sudo pacman -S --needed mkinitcpio |
| Fedora/RHEL/openSUSE | sudo dnf install -y systemd graphviz (Fedora/RHEL) or sudo zypper install -y systemd graphviz (openSUSE) | sudo dnf install -y dracut (Fedora/RHEL) or sudo zypper install -y dracut (openSUSE) |

Quick baseline capture commands:

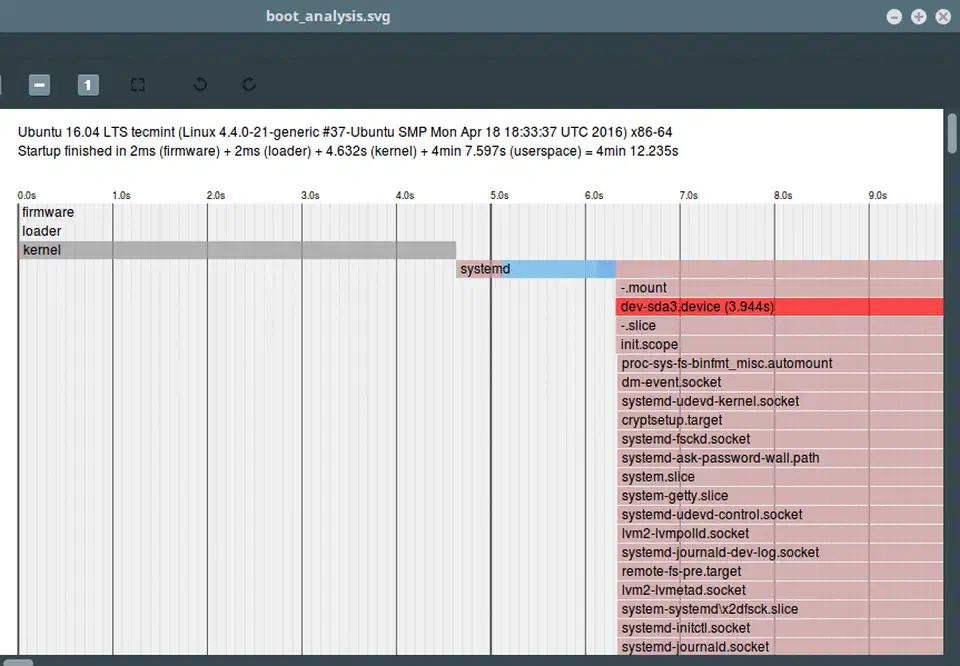

systemd-analyze time

systemd-analyze blame | head -n 20

systemd-analyze critical-chain

systemd-analyze plot > boot-baseline.svg

If the plot output is huge, compress it and save it with your notes. When you tune, you need hard before/after data, not memory.
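To keep those before/after snapshots consistent, you can wrap the four commands in a small function. This is a minimal sketch, not a systemd feature: the function name snapshot_boot, the ~/boot-profiles default directory, and the SYSTEMD_ANALYZE override variable (there only so the function can be exercised without a real boot) are all hypothetical choices.

```shell
# Save one boot profile (time, blame, critical-chain, SVG plot) into a
# labeled, timestamped directory and print that directory's path.
# snapshot_boot, ~/boot-profiles and SYSTEMD_ANALYZE are hypothetical names.
snapshot_boot() {
  [ -n "$1" ] || { echo "usage: snapshot_boot LABEL [BASE_DIR]" >&2; return 2; }
  local label="$1"
  local base="${2:-$HOME/boot-profiles}"
  local sa="${SYSTEMD_ANALYZE:-systemd-analyze}"
  local outdir="$base/$label-$(date +%Y%m%d-%H%M%S)"

  mkdir -p "$outdir" || return 1
  "$sa" time           > "$outdir/time.txt"           || return 1
  "$sa" blame          > "$outdir/blame.txt"          || return 1
  "$sa" critical-chain > "$outdir/critical-chain.txt" || return 1
  "$sa" plot           > "$outdir/plot.svg"           || return 1
  printf '%s\n' "$outdir"
}
```

Run it once before tuning (snapshot_boot baseline) and again after each change batch (for example snapshot_boot after-initramfs), then diff the time.txt files.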

Understanding the Linux Boot Sequence in 2026

You get better results when you know what you are tuning. A modern Linux boot path has five stages:

- Firmware (UEFI/BIOS): hardware setup and handoff to the bootloader.

- Bootloader: GRUB or systemd-boot loads the kernel and initramfs.

- Kernel and initramfs: early device bring-up and root filesystem handoff.

- systemd userspace startup: targets, services, sockets, mounts.

- Session start: display manager and desktop or user services.

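systemd-analyze time sums this up as firmware, loader, kernel, initrd (when the initramfs runs systemd), and userspace. The firmware and loader figures appear only on UEFI systems whose boot loader reports them, such as systemd-boot. The key detail: these stages run in sequence, but a different subsystem owns each one.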

- High firmware time points to motherboard settings, USB probing, PXE checks, or TPM measurements.

- High loader time often comes from the boot menu timeout, signature checks, or loader scans.

- High kernel time usually means a heavy initramfs, module probing, storage waits, or microcode overhead.

- High userspace time usually means blocking service dependencies.
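When you log many runs, it helps to turn the first line of systemd-analyze time into numbers per stage. The sketch below assumes the usual English "Startup finished in ... = ..." summary line with spans such as 512ms, 7.264s, or 1min 3.456s; the function name parse_boot_stages is hypothetical, and stages your system does not report simply won't appear in its output.

```shell
# Print one "stage seconds" pair per stage from `systemd-analyze time` output.
# parse_boot_stages is a hypothetical helper; it assumes English output with
# spans like "512ms", "7.264s" or "1min 3.456s".
parse_boot_stages() {
  awk 'NR == 1 {
    sub(/^Startup finished in /, "")
    sub(/ = .*$/, "")                      # drop the grand total
    n = split($0, parts, " [+] ")
    for (i = 1; i <= n; i++) {
      # Each part looks like "1min 3.456s (userspace)".
      if (!match(parts[i], /\(.*\)/)) continue
      stage = substr(parts[i], RSTART + 1, RLENGTH - 2)
      m = split(substr(parts[i], 1, RSTART - 1), tok, " ")
      secs = 0
      for (j = 1; j <= m; j++) {
        t = tok[j]
        if (t ~ /ms$/)       { sub(/ms$/, "", t);  secs += t / 1000 }
        else if (t ~ /us$/)  { sub(/us$/, "", t);  secs += t / 1000000 }
        else if (t ~ /min$/) { sub(/min$/, "", t); secs += t * 60 }
        else if (t ~ /h$/)   { sub(/h$/, "", t);   secs += t * 3600 }
        else if (t ~ /s$/)   { sub(/s$/, "", t);   secs += t }
      }
      printf "%s %.3f\n", stage, secs
    }
  }'
}
```

For example, systemd-analyze time | parse_boot_stages prints lines like "kernel 1.652" that you can append to a log or paste into a spreadsheet.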

Unified Kernel Images (UKIs) are common in 2026. They bundle kernel, initramfs, and command line into one EFI binary. That often makes the loader simpler and Secure Boot cleaner. But it does not fix slow service chains later in userspace.

It also helps to reset expectations. NVMe Gen5 throughput does not give you a fast boot. Startup delay is usually coordination, not raw I/O speed. Waiting for network-online.target, a slow crypto unlock, or an unnecessary service dependency can cost more time than reading gigabytes off disk.

Profiling the NPU Init Delay

A new 2026 delay class is AI accelerator bring-up. Systems with Intel Core Ultra (intel_vpu) and AMD Ryzen AI (amdxdna) may spend real time setting up NPU paths at boot. This happens even if you do not run AI workloads at startup.

Start by collecting data:

journalctl -b -k | grep -Ei "intel_vpu|amdxdna|npu|vpu"

systemd-analyze time

systemd-analyze plot > boot-npu-check.svg

In the SVG timeline, look for long early kernel segments around module loading. If the delay shows up every boot and you don’t need the NPU right after login, defer module loading.

Example deferred-load approach for Intel VPU:

echo "blacklist intel_vpu" | sudo tee /etc/modprobe.d/blacklist-intel-vpu.conf

sudo update-initramfs -u # Debian/Ubuntu

# or: sudo dracut --force # Fedora

# or: sudo mkinitcpio -P # Arch

Load on demand after login:

sudo modprobe intel_vpu

Trade-off: the first inference task pays the module init cost later. For systems where AI tasks are rare, this is fine. For local AI workstations, keep the default early load and tune elsewhere.
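After the reboot, confirm the blacklist actually took effect before you trust the new timings. This sketch reads /proc/modules, the same table lsmod formats; the function name npu_module_status and its optional file argument (there only so it can be tested against a sample file) are hypothetical.

```shell
# Report whether the NPU driver modules named in this guide are loaded.
# npu_module_status is a hypothetical helper; it reads /proc/modules by
# default, or another file in the same format when one is passed.
npu_module_status() {
  local modfile="${1:-/proc/modules}" m
  for m in intel_vpu amdxdna; do
    # The first column of /proc/modules is the module name.
    if awk -v m="$m" '$1 == m { found = 1 } END { exit !found }' "$modfile"; then
      echo "$m loaded"
    else
      echo "$m not loaded"
    fi
  done
}
```

If intel_vpu still shows as loaded right after boot, check that the initramfs was actually rebuilt after you added the blacklist file.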

Reading systemd-analyze blame Like a Pro

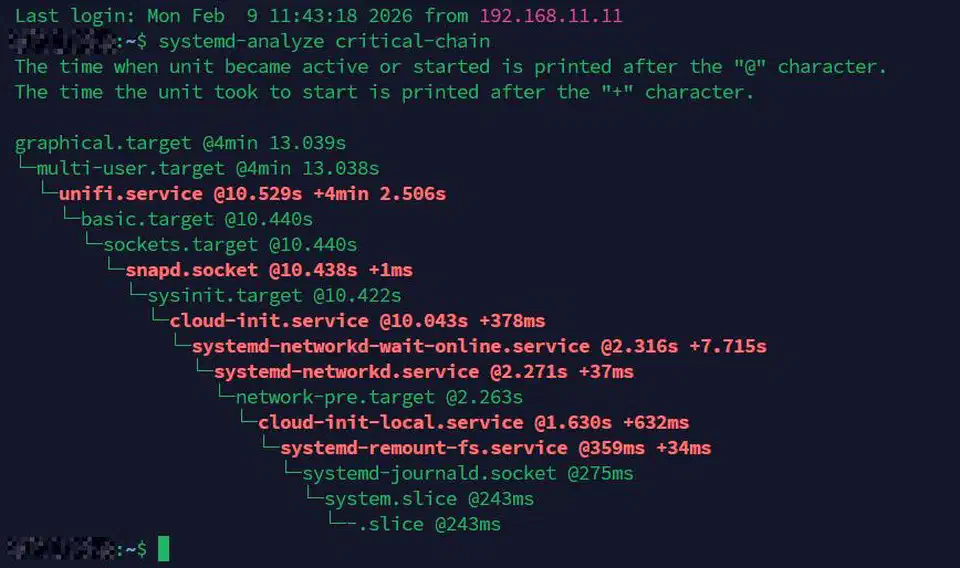

systemd-analyze blame is useful but easy to misread. It sorts units by how long each one took to start, not by its real impact on the final “desktop ready” moment.

Use these commands together:

systemd-analyze blame

systemd-analyze critical-chain

systemd-analyze dot --to-pattern='*.service' | dot -Tsvg > services-graph.svg

How to read them right:

- A service that takes 5 seconds is only top priority if it is on the critical path.

- A long-running service off the critical path may run in parallel and not delay login.

- critical-chain tells you which chain really gates your target.
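To check a single suspect, you can filter the critical-chain output for that unit and pull out its "+duration" column. A minimal sketch, assuming the default tree layout where each line reads like └─unit @start +duration; critical_cost is a hypothetical helper name.

```shell
# Print "UNIT DURATION" if UNIT appears in `systemd-analyze critical-chain`
# output read from stdin; return 1 if it is not on the chain.
# critical_cost is hypothetical. Units without a "+duration" column
# (usually targets) are reported as 0.
critical_cost() {
  awk -v u="$1" '
    {
      name = $1
      sub(/^[^A-Za-z0-9]+/, "", name)      # strip tree glyphs such as "└─"
      if (name != u) next
      cost = "0"
      for (i = 2; i <= NF; i++) if ($i ~ /^\+/) cost = substr($i, 2)
      print u, cost
      found = 1
      exit
    }
    END { if (!found) exit 1 }'
}
```

For example: systemd-analyze critical-chain | critical_cost NetworkManager-wait-online.service. An empty result (exit status 1) means the unit is not gating your default target, however high it ranks in blame.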

Common culprits on modern desktops:

- NetworkManager-wait-online.service

- plymouth-quit-wait.service

- snapd.service

- apt-daily.service / dnf-makecache.service

Example: say NetworkManager-wait-online.service adds 4 seconds. If your desktop doesn’t need the network up before the graphical login, you can often cut it safely:

sudo systemctl disable NetworkManager-wait-online.service

If you need it for specific services, keep it enabled. Scope the deps tightly instead of forcing a global wait.
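One way to scope that wait: NetworkManager-wait-online.service runs as part of network-online.target, and that target should only be pulled in by units that really need a configured network. List who pulls it in with systemctl list-dependencies --reverse network-online.target, then give only the services that truly need the network their own drop-in. The sketch below writes such a drop-in. The Wants=/After=network-online.target pairing is the one systemd documents for this case; the function name, the 10-network-online.conf file name, and the example unit are hypothetical.

```shell
# Write a drop-in so that one unit waits for network-online.target,
# instead of relying on a boot-wide wait. Run as root for the real path,
# then reload systemd. Function, file and unit names are hypothetical.
add_network_online_dep() {
  local unit="$1" root="${2:-/etc/systemd/system}"
  [ -n "$unit" ] || { echo "usage: add_network_online_dep UNIT [DIR]" >&2; return 2; }
  mkdir -p "$root/$unit.d" || return 1
  cat > "$root/$unit.d/10-network-online.conf" <<'EOF'
[Unit]
Wants=network-online.target
After=network-online.target
EOF
}
```

For example, as root: add_network_online_dep backup-sync.service && systemctl daemon-reload, where backup-sync.service stands in for your own unit.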

Trimming the Initramfs with dracut

A generic initramfs ships drivers and hooks for hardware you may never use. On fast systems, decompression and probing can dominate the kernel stage.

Measure first:

du -sh /boot/initr* /boot/initramfs* 2>/dev/null

systemd-analyze time

Then rebuild with host-specific content:

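- Fedora/RHEL/openSUSE (dracut):

sudo dracut --hostonly --force

- Arch (mkinitcpio). The sed command replaces the whole HOOKS line, so back up the file first. If your current line includes encrypt, sd-encrypt, lvm2, or systemd, adapt the list instead of copying it verbatim; dropping those hooks can leave the system unable to unlock or find its root filesystem.

sudo cp /etc/mkinitcpio.conf /etc/mkinitcpio.conf.bak

sudo sed -i 's/^HOOKS=.*/HOOKS=(base udev autodetect modconf block filesystems keyboard fsck)/' /etc/mkinitcpio.conf

sudo mkinitcpio -P

- Debian/Ubuntu (initramfs-tools):

echo 'MODULES=dep' | sudo tee /etc/initramfs-tools/conf.d/driver-policy

sudo update-initramfs -u -k all

Compression choice is important. lz4 is faster to unpack than zstd but makes a larger image. On modern NVMe systems, that trade is usually a win for boot speed.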

Check your setting and tune if supported:

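# dracut example

grep -i compress /etc/dracut.conf /etc/dracut.conf.d/*.conf 2>/dev/null

# mkinitcpio example

grep '^COMPRESSION=' /etc/mkinitcpio.conf

# initramfs-tools example

grep '^COMPRESS=' /etc/initramfs-tools/initramfs.conf

Reboot. Compare the kernel time (and initrd time, if shown) in systemd-analyze time to your baseline.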
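On dracut systems you can make both settings persistent in a drop-in instead of passing flags by hand on every rebuild. A minimal sketch, assuming dracut's hostonly= and compress= options and an installed lz4 binary; the function name and the 90-boot-speed.conf file name are hypothetical. It applies the lz4 trade-off described above, so keep zstd if image size on a small /boot matters more to you.

```shell
# Write a dracut drop-in that builds host-only images compressed with lz4.
# Function and file names are hypothetical; defaults to /etc/dracut.conf.d,
# or writes to another directory when one is passed (useful for testing).
write_dracut_speed_conf() {
  local dir="${1:-/etc/dracut.conf.d}"
  mkdir -p "$dir" || return 1
  cat > "$dir/90-boot-speed.conf" <<'EOF'
# Only include drivers and tools this machine needs.
hostonly="yes"
# lz4 unpacks faster than zstd but yields a larger image; needs the lz4 tool.
compress="lz4"
EOF
}
```

Run it as root, rebuild with sudo dracut --force, then compare the kernel and initrd times against your baseline.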

Disabling and Masking Non-Essential Services

Once profiling finds real blockers, try the safest change first.

Three levels of service control:

- disable: remove the autostart symlinks.

- mask: hard-block any start by linking the unit to /dev/null.

- Remove the package: uninstall the software and its service units.

Check reverse deps before you change anything:

systemctl list-dependencies --reverse bluetooth.service

Desktop services that are often safe to disable when unused:

- bluetooth.service

- ModemManager.service

- cups.service (if no printing)

- geoclue.service
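Before disabling any of these, it helps to see each one's current state and its reverse dependencies in a single pass. This is a sketch, not a tool that ships with systemd: review_units and the SYSTEMCTL override (used only for testing) are hypothetical, while is-enabled and list-dependencies --reverse --plain are standard systemctl commands.

```shell
# For each unit given, print its enablement state and the units that
# depend on it, so surprises show up before anything is disabled.
# review_units and SYSTEMCTL are hypothetical names.
review_units() {
  local sc="${SYSTEMCTL:-systemctl}" unit state
  for unit in "$@"; do
    # is-enabled exits non-zero for states such as disabled or masked
    # but still prints the state, so keep its output either way.
    state=$("$sc" is-enabled "$unit" 2>/dev/null) || state="${state:-not-found}"
    printf '== %s (%s)\n' "$unit" "$state"
    # Skip the first line, which is the unit itself.
    "$sc" list-dependencies --reverse --plain "$unit" 2>/dev/null | tail -n +2
  done
}
```

For example: review_units bluetooth.service ModemManager.service cups.service geoclue.service.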

Examples:

sudo systemctl disable bluetooth.service

sudo systemctl disable ModemManager.service

Use masking sparingly:

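sudo systemctl mask cups.service

Deferring beats disabling when you can. Be careful with ordering overrides, though: adding After=graphical.target to a service that a boot target already wants creates an ordering cycle, because targets automatically order themselves after the units they pull in. A timer is a safer way to start the service a little after boot. Disable the service's own boot-time start, then create a timer with the same name:

sudo systemctl disable my-heavy.service

sudo systemctl edit --force --full my-heavy.timer

Timer example:

[Unit]

Description=Start my-heavy.service after boot

[Timer]

OnBootSec=2min

[Install]

WantedBy=timers.target

Enable it with sudo systemctl enable my-heavy.timer. This keeps the feature, just later in the boot.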

GRUB and systemd-boot Command-Line Optimization

Bootloader tuning won’t save huge time on its own. It does cut noise and logging overhead.

Useful kernel params for a quieter, faster boot:

- quiet

- loglevel=3

- rd.udev.log_level=3 (most helpful on dracut systems)

For GRUB (Debian/Ubuntu/Fedora variants):

sudo editor /etc/default/grub

# Example:

# GRUB_CMDLINE_LINUX_DEFAULT="quiet loglevel=3 rd.udev.log_level=3"

Regenerate config:

# Debian/Ubuntu

sudo update-grub

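# Fedora/RHEL: boot entries are BLS snippets, so grubby applies the args to every installed kernel

sudo grubby --update-kernel=ALL --args="quiet loglevel=3 rd.udev.log_level=3"

# Fedora 34+ and RHEL 9 regenerate the same file on BIOS and UEFI

sudo grub2-mkconfig -o /boot/grub2/grub.cfg

# Don't write to /boot/efi/EFI/fedora/grub.cfg on these releases: it is a small stub that loads the file above

For systemd-boot: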

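sudo editor /etc/kernel/cmdline

# Add: quiet loglevel=3 rd.udev.log_level=3

# kernel-install uses this file as the full command line, so keep root= and your other existing args in it

# Rebuild the entry (or UKI) for the running kernel; the vmlinuz path varies by distro

sudo kernel-install add "$(uname -r)" "/usr/lib/modules/$(uname -r)/vmlinuz"

Note that bootctl update only refreshes the systemd-boot binary itself; it does not apply command-line changes. If you use UKIs built by kernel-install, the rebuild matters even more: the args are baked into the image.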
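If you would rather script the /etc/default/grub change than open an editor, a small function can add the parameters only when they are missing, so rerunning it is harmless. A minimal sketch, assuming a Debian/Ubuntu-style file with a double-quoted GRUB_CMDLINE_LINUX_DEFAULT line and GNU sed; the function name is hypothetical. Back up the file first and regenerate the GRUB config afterwards.

```shell
# Append kernel parameters to GRUB_CMDLINE_LINUX_DEFAULT in FILE,
# skipping any that are already present as whole words.
# set_grub_default_params is hypothetical; assumes a double-quoted value,
# GNU sed, and parameters without "|", "&" or "\" characters.
set_grub_default_params() {
  local file="$1" current p
  shift
  grep -q '^GRUB_CMDLINE_LINUX_DEFAULT="' "$file" || return 1
  current=$(sed -n 's/^GRUB_CMDLINE_LINUX_DEFAULT="\(.*\)"$/\1/p' "$file")
  for p in "$@"; do
    case " $current " in
      *" $p "*) ;;                             # already set
      *) current="${current:+$current }$p" ;;
    esac
  done
  sed -i "s|^GRUB_CMDLINE_LINUX_DEFAULT=\".*\"\$|GRUB_CMDLINE_LINUX_DEFAULT=\"$current\"|" "$file"
}
```

For example, as root: set_grub_default_params /etc/default/grub quiet loglevel=3 rd.udev.log_level=3, followed by update-grub.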

fstab Mount Option Optimization

Mount policy can add background write pressure and metadata churn. That slows down boot and early session response. Two options worth testing:

- noatime: don’t update file access time on reads.

- lazytime: cache inode timestamp updates in memory, flush later.

Example /etc/fstab line for ext4 root:

UUID=xxxx-xxxx / ext4 defaults,noatime,lazytime 0 1

Apply safely:

sudo cp /etc/fstab /etc/fstab.bak.$(date +%F)

sudo editor /etc/fstab

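sudo findmnt --verify

sudo mount -o remount /

findmnt --verify checks the edited fstab for errors, and the remount applies the new options to the already-mounted root filesystem. (mount -a skips filesystems that are already mounted, so it does not test the root entry.) If both commands succeed, reboot and re-measure. Don’t use aggressive options on databases or workloads that need strict timestamps.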
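To avoid typos in a hand-edited fstab, you can generate the modified file, diff it, and only then copy it into place. A minimal sketch: add_mount_opts is a hypothetical helper that prints a copy of the file with options appended to one mount point's entry. It assumes the standard whitespace-separated fstab layout, and because awk rebuilds the edited line, that line's column alignment is normalized to single spaces.

```shell
# Print FSTAB with OPTS (default: noatime,lazytime) added to the options
# field of the entry mounted at TARGET. Other lines pass through untouched.
# add_mount_opts is a hypothetical helper; it never modifies FSTAB itself.
add_mount_opts() {
  local fstab="$1" target="$2" opts="${3:-noatime,lazytime}"
  awk -v target="$target" -v add="$opts" '
    # Comments, blank lines and other mount points are printed unchanged.
    /^[ \t]*(#|$)/ || $2 != target { print; next }
    {
      n = split(add, want, ",")
      for (i = 1; i <= n; i++) {
        # Append each option only if it is not already in field 4.
        if (index("," $4 ",", "," want[i] ",") == 0) $4 = $4 "," want[i]
      }
      print
    }' "$fstab"
}
```

For example: add_mount_opts /etc/fstab / > /tmp/fstab.new && diff -u /etc/fstab /tmp/fstab.new. If the diff looks right, keep your backup, copy the new file over /etc/fstab with sudo, and verify it before rebooting.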

Secure Boot and Signed Chain Safety

Speed tweaks should not break trust. On Secure Boot systems, pick changes that keep signed binaries and measured boot.

Safe practices:

- Prefer service tuning and initramfs slimming over unsigned custom kernels.

- If you build custom UKIs, sign them with enrolled Machine Owner Keys (MOK) where needed.

- Keep a known-good signed boot entry.

- Don’t turn off Secure Boot just for ease, unless this is an offline lab machine.

The reason is simple. Cutting boot time by skipping checks can break platform integrity. For most users, and especially on laptops with TPM2 disk unlock, a clean signed chain is worth more than one extra second.
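It is worth confirming that Secure Boot is still on after a round of changes. mokutil --sb-state answers this where mokutil is installed; the sketch below reads the SecureBoot UEFI variable directly instead. It assumes efivarfs is mounted at /sys/firmware/efi/efivars, where each variable file starts with a 4-byte attribute header followed by the value; secure_boot_state is a hypothetical helper name.

```shell
# Print "enabled" or "disabled" from the SecureBoot UEFI variable.
# secure_boot_state is hypothetical; the default path is the standard
# efivarfs file for SecureBoot (EFI global variable GUID). Pass another
# file to test against a sample.
secure_boot_state() {
  local var="${1:-/sys/firmware/efi/efivars/SecureBoot-8be4df61-93ca-11d2-aa0d-00e098032b8c}"
  local byte
  if [ ! -r "$var" ]; then
    echo "unknown (variable not readable: legacy BIOS boot or no efivarfs)"
    return 1
  fi
  # Skip the 4-byte attribute header and read the 1-byte value.
  byte=$(od -An -t u1 -j 4 -N 1 "$var" | tr -d ' \n')
  case "$byte" in
    1) echo "enabled" ;;
    0) echo "disabled" ;;
    *) echo "unknown"; return 1 ;;
  esac
}
```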

Benchmarking and Verifying Your Results

Tuning without measuring is guessing. Run repeat trials and compare averages.

Suggested workflow:

# Run after each change batch

systemd-analyze time

systemd-analyze blame | head -n 15

systemd-analyze critical-chain

systemd-analyze plot > boot-after.svg

Use at least 5 cold boots per config. A single-run gain can be noise from cache state, firmware, or a network race.
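Averaging by eye is easy to get wrong, so you can let a script do it. A minimal sketch, assuming English systemd-analyze time output whose first line ends in "= <total>"; boot_total_seconds, summarize_boots, and the one-number-per-line log file are hypothetical conventions.

```shell
# Read `systemd-analyze time` output on stdin and print the overall total
# in seconds (handles spans such as "850ms", "9.3s" and "1min 5.6s").
# boot_total_seconds is a hypothetical helper.
boot_total_seconds() {
  awk 'NR == 1 && / = / {
    span = $0
    sub(/^.* = /, "", span)
    n = split(span, tok, " ")
    secs = 0
    for (i = 1; i <= n; i++) {
      t = tok[i]
      if (t ~ /ms$/)       { sub(/ms$/, "", t);  secs += t / 1000 }
      else if (t ~ /min$/) { sub(/min$/, "", t); secs += t * 60 }
      else if (t ~ /s$/)   { sub(/s$/, "", t);   secs += t }
    }
    printf "%.3f\n", secs
  }'
}

# Print run count, mean, min and max for a file with one total per line.
# summarize_boots is a hypothetical helper; it fails on an empty file.
summarize_boots() {
  awk 'NF {
    v = $1 + 0
    n++; sum += v
    if (n == 1 || v < min) min = v
    if (n == 1 || v > max) max = v
  }
  END {
    if (n == 0) exit 1
    printf "runs=%d mean=%.2f min=%.2f max=%.2f\n", n, sum / n, min, max
  }' "$1"
}
```

After each cold boot, once the desktop has settled, run systemd-analyze time | boot_total_seconds >> ~/boot-totals-after.txt; if boot has not finished yet, no total is printed and nothing is appended. Once you have five or more runs, summarize_boots ~/boot-totals-after.txt gives the numbers to compare against your baseline file.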

For stubborn cases, add boot-time diagnostics:

- Kernel cmdline: systemd.log_level=debug

- Inspect with: journalctl -b -o short-monotonic

Target numbers for 2026 hardware:

- Desktop NVMe Gen4/Gen5: under 8 seconds to a usable session is realistic.

- Laptop with Secure Boot and TPM2 attestation: under 12 seconds is a fair target.

Realistic Before/After Results

The table below shows typical gains from desktop tuning. Your numbers depend on firmware, distro defaults, and installed services. These ranges are common on recent systems.

| Change | Before impact | After impact | Typical savings |
|---|---|---|---|
| Disable NetworkManager-wait-online on desktop | 3.8s block in critical path | 0.2s residual | 3.6s |
| Rebuild host-only initramfs | 1.9s kernel/initramfs stage | 1.1s stage | 0.8s |
| Defer unused NPU module init | 0.7s early kernel overhead | near 0s at boot | 0.6-0.7s |
| Disable unused ModemManager + bluetooth | 0.9s aggregate userspace delays | 0.2s | 0.7s |
| Add quiet loglevel=3 rd.udev.log_level=3 | heavy early log churn | reduced chatter | 0.1-0.3s |
| fstab with noatime,lazytime | higher metadata updates during early session | lower metadata churn | 0.1-0.4s |

Total gains of 4-7 seconds are common on systems that started with 12-18 second boots.

A Repeatable Optimization Checklist

For a sequence you can reuse on every machine, use this order:

- Capture a baseline (time, blame, critical-chain, SVG plot).

- Cut clear critical-path blockers (wait-online, unused services).

- Slim the initramfs with the right distro tool.

- Tune kernel cmdline and bootloader settings.

- Test an NPU defer strategy if it fits.

- Apply safe fstab timestamp tuning.

- Reboot many times and compute averages.

- Keep rollback notes and a known-good boot entry.

This order keeps risk low. It also puts high-yield changes first.

A fast boot is not about one magic command. It is about evidence-driven trimming. systemd-analyze gives you that evidence: where time goes, which services really block boot, and whether a change helped or just looked like it did. Treat each tweak as a measured experiment. Your Linux system will get both faster and easier to keep running.

Botmonster Tech
