MCP vs. A2A: The Two Protocols Powering the Agentic Web

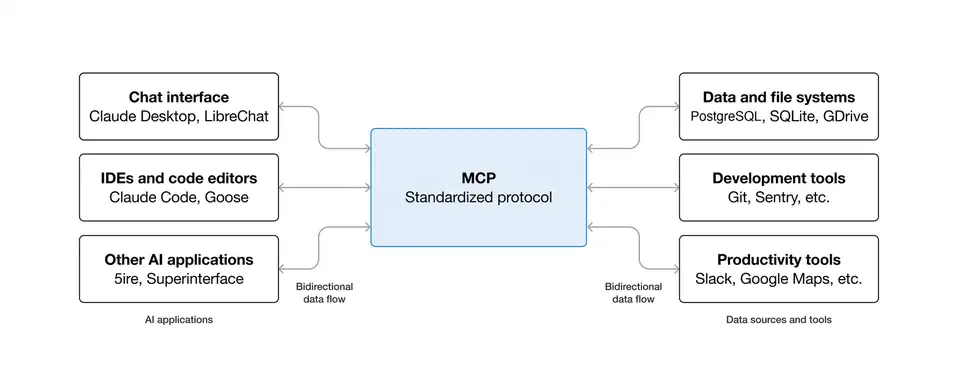

Model Context Protocol (MCP) and Agent-to-Agent Protocol (A2A) aren’t rivals. They solve different layers of the same problem. MCP defines how an AI agent connects to tools and data. A2A defines how agents communicate with each other and delegate tasks. Together they form the foundational plumbing of the agentic web.

If you’re building anything beyond a single chatbot in 2026, you need to understand both.

The Fragmentation Problem

Before these protocols, the AI tooling space was a mess of incompatible integrations. Every major framework, including LangChain, CrewAI, and AutoGen, had its own way to plug into external tools. Giving a LangChain agent access to the Slack API meant writing a LangChain-specific tool wrapper. Wanting the same in a CrewAI workflow meant starting over. None of the adapters carried across.

The result was an integration tax. Teams burned time on plumbing, and useful capabilities stayed locked inside framework-specific wrappers that couldn’t be shared.

Networking faced a similar problem before TCP/IP. Individual machines were useful on their own, but connecting them required a custom point-to-point solution for every pair. TCP/IP didn’t make any single computer better. It made all of them more useful by defining a shared way to communicate.
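
The arithmetic behind the integration tax can be sketched in a few lines. With point-to-point adapters, every framework needs its own wrapper for every tool, so the count grows as N × M. With a shared protocol, each framework implements the protocol once and each tool exposes it once, so the count grows as N + M. The function names and the numbers in the example are illustrative, not measured.

```python
def adapters_point_to_point(frameworks: int, tools: int) -> int:
    # Every framework needs its own wrapper for every tool: N x M.
    return frameworks * tools


def adapters_shared_protocol(frameworks: int, tools: int) -> int:
    # Each framework speaks the protocol once; each tool exposes it once: N + M.
    return frameworks + tools


if __name__ == "__main__":
    # Illustrative numbers only: 3 frameworks and 20 tools.
    print(adapters_point_to_point(3, 20))   # 60
    print(adapters_shared_protocol(3, 20))  # 23
```

The gap widens as either side grows. That is why a shared standard pays off even though it makes no single integration better.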

The agentic AI space needed the same kind of standard, but the problem splits into two distinct layers:

- Agent-to-tool connections: how does an agent access external capabilities such as APIs, databases, and file systems?

- Agent-to-agent communication: how do agents discover each other, negotiate tasks, and exchange results?

MCP handles the first. A2A handles the second.

Model Context Protocol (MCP): The USB-C Port for AI

Anthropic released MCP in late 2024. Its initial goal was to give Claude consistent access to external data, but the design was deliberately general. Instead of building Claude-only integrations, Anthropic defined a protocol that any model provider and any tool maker could adopt.

The architecture has three parts. The host is the AI application the user interacts with: Claude Desktop, the Gemini CLI, VS Code with an AI extension, or a custom agent. The client is the MCP connector inside the host; it handles protocol communication, with one client connection per server. The server is a lightweight process that exposes a tool or data source. One might wrap Slack, another your local file system, another a Postgres database.
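
Under the hood, the client and server exchange JSON-RPC 2.0 messages. A typical exchange runs in four steps:

1. The client sends an `initialize` request.
2. It follows with an `initialized` notification.
3. It calls `tools/list` to see what the server offers.
4. It invokes a tool with `tools/call`.

The sketch below only builds the client-side messages, using the standard library, so you can see their shape. The protocol version string, the host name `example-host`, and the helper names are assumptions for illustration; the SDKs handle all of this for you.

```python
import json
from itertools import count
from typing import Optional


def jsonrpc_request(msg_id: int, method: str, params: Optional[dict] = None) -> dict:
    """A JSON-RPC 2.0 request: carries an id so the response can be matched to it."""
    msg = {"jsonrpc": "2.0", "id": msg_id, "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def jsonrpc_notification(method: str) -> dict:
    """A JSON-RPC 2.0 notification: no id, and no response is expected."""
    return {"jsonrpc": "2.0", "method": method}


def weather_session(city: str) -> list:
    """Messages a client sends to open a session and call a get_weather tool."""
    ids = count(1)
    return [
        jsonrpc_request(next(ids), "initialize", {
            "protocolVersion": "2025-06-18",  # assumption: use a revision your SDK supports
            "capabilities": {},
            "clientInfo": {"name": "example-host", "version": "0.1.0"},  # hypothetical host
        }),
        jsonrpc_notification("notifications/initialized"),
        jsonrpc_request(next(ids), "tools/list"),
        jsonrpc_request(next(ids), "tools/call",
                        {"name": "get_weather", "arguments": {"city": city}}),
    ]


if __name__ == "__main__":
    # On the stdio transport, each message travels as one newline-delimited line of JSON.
    for msg in weather_session("Paris"):
        print(json.dumps(msg))
```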

An MCP server written once works with any host that implements the spec. Build one for your company’s internal knowledge base, and it becomes available to Claude Desktop, the Gemini CLI, and any other MCP-capable agent. Codex running in the terminal works as both an MCP client and an MCP server, so it can consume your tools or be driven by another orchestrator. No extra glue code, no per-model adapter, no framework-specific wrapper.

Here’s a tiny Python MCP server that exposes one tool:

```python
import asyncio

from mcp import types
from mcp.server import Server
from mcp.server.stdio import stdio_server

server = Server("my-tool-server")


@server.list_tools()
async def list_tools() -> list[types.Tool]:
    # Advertise the available tools, each with a JSON Schema for its input.
    return [
        types.Tool(
            name="get_weather",
            description="Get current weather for a city",
            inputSchema={
                "type": "object",
                "properties": {
                    "city": {"type": "string", "description": "City name"}
                },
                "required": ["city"],
            },
        )
    ]


@server.call_tool()
async def call_tool(name: str, arguments: dict) -> list[types.TextContent]:
    # Dispatch an incoming tool call by name.
    if name == "get_weather":
        city = arguments["city"]
        # Your actual weather API call here
        return [types.TextContent(type="text", text=f"Weather in {city}: 22C, partly cloudy")]
    raise ValueError(f"Unknown tool: {name}")


async def main():
    # Serve over stdin/stdout, the transport local hosts use when they launch a server.
    async with stdio_server() as streams:
        await server.run(*streams, server.create_initialization_options())


if __name__ == "__main__":
    asyncio.run(main())
```

Register this script in the config of any MCP-capable host, and that host immediately gains a weather-lookup tool, with no framework-specific adapter.
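
Registration is usually a small JSON entry. Claude Desktop, for example, reads a `claude_desktop_config.json` file. In it, an `mcpServers` object maps a server name to the command that launches the server. Other hosts use similar but not always identical formats, so check your host’s documentation.

The helper below is a minimal sketch that merges one entry into an existing config without clobbering other servers. The server name `weather`, the script name `weather_server.py`, and the pre-existing `other-server` entry are all hypothetical.

```python
import copy
import json
from pathlib import Path


def add_mcp_server(config: dict, name: str, command: str, args: list) -> dict:
    """Return a copy of a host config with one stdio MCP server added under "mcpServers"."""
    updated = copy.deepcopy(config)  # leave the caller's config untouched
    servers = updated.setdefault("mcpServers", {})
    # The host launches `command args...` as a subprocess and speaks MCP over its stdio.
    servers[name] = {"command": command, "args": args}
    return updated


if __name__ == "__main__":
    # Hypothetical existing entry and script path; adjust to your setup.
    existing = {"mcpServers": {"other-server": {"command": "node", "args": ["other-server.js"]}}}
    script = str(Path("weather_server.py").resolve())
    print(json.dumps(add_mcp_server(existing, "weather", "python", [script]), indent=2))
```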

The `modelcontextprotocol` GitHub organization hosts the official SDKs, including Python and TypeScript. Beyond those, the community has built thousands of MCP servers for GitHub, Postgres, Google Drive, Notion, local file access, browser control, and more. The protocol has moved well beyond an Anthropic-only standard into something the wider ecosystem has genuinely adopted.

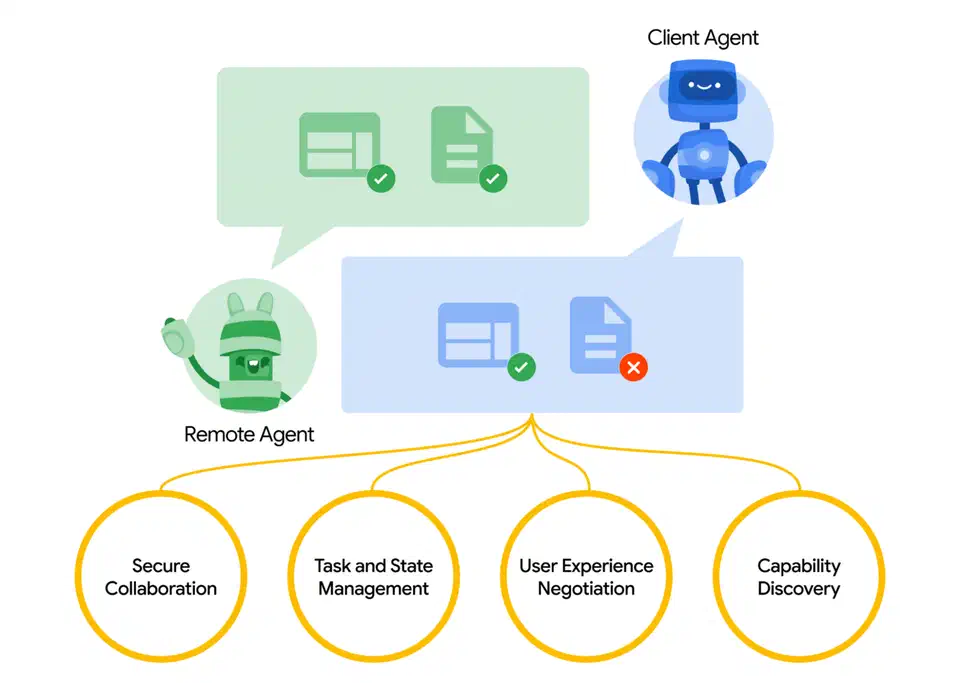

Agent-to-Agent Protocol (A2A): The Network Layer for Multi-Agent Systems

MCP handles tool access well. What it doesn’t cover is the higher-level problem. How do agents discover each other? How do they decide who does what? How do they collaborate on complex tasks across organizational or framework boundaries?

Google released A2A in April 2025. Where MCP launched under Anthropic’s stewardship, A2A was handed to the Linux Foundation for open governance within a few months. Multi-agent plumbing is too important for broad adoption to sit under one vendor, and the Linux Foundation move sent a clear signal to the enterprise market.

A2A introduces three concepts with no direct counterpart in MCP:

Agent Cards are JSON documents that act as an agent’s public profile. They list what the agent can do (its skills), where to reach it, which authentication it requires, and what kinds of input and output it accepts. When an orchestrator needs a specialist, it retrieves the available Agent Cards and chooses among them.

Here’s a simple Agent Card:

```json
{
  "name": "ResearchAgent",
  "description": "Searches the web and summarizes findings on any topic",
  "version": "1.0.0",
  "url": "https://agents.example.com/research",
  "capabilities": {
    "streaming": true,
    "pushNotifications": false
  },
  "skills": [
    {
      "id": "web_research",
      "name": "Web Research",
      "description": "Searches and synthesizes information from the web",
      "inputModes": ["text"],
      "outputModes": ["text", "data"]
    }
  ],
  "authentication": {
    "schemes": ["bearer"]
  }
}
```

Dynamic discovery means an orchestrator doesn’t need to hardcode a sub-agent’s address. Instead, it looks for agents whose cards match the required skill profile, so a multi-agent system can adapt as new agents come online without manual reconfiguration.
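
Matching against card fields is simple in principle. This is a minimal sketch, assuming the orchestrator has already fetched a set of cards; how it fetches them is outside the sketch. It filters the cards by skill `id` and by the output mode it needs. The function `find_agents` and the second card (`ChartAgent`) are hypothetical, and the cards use the simplified shape shown above.

```python
import json

# Two hypothetical Agent Cards in the simplified shape shown above.
CARDS_JSON = """[
  {"name": "ResearchAgent", "url": "https://agents.example.com/research",
   "skills": [{"id": "web_research", "inputModes": ["text"], "outputModes": ["text", "data"]}]},
  {"name": "ChartAgent", "url": "https://agents.example.com/charts",
   "skills": [{"id": "render_chart", "inputModes": ["data"], "outputModes": ["image"]}]}
]"""


def find_agents(cards: list, skill_id: str, output_mode: str) -> list:
    """Return URLs of agents whose cards advertise `skill_id` with the wanted output mode."""
    matches = []
    for card in cards:
        for skill in card.get("skills", []):
            if skill.get("id") == skill_id and output_mode in skill.get("outputModes", []):
                matches.append(card["url"])
                break  # one matching skill is enough for this agent
    return matches


if __name__ == "__main__":
    cards = json.loads(CARDS_JSON)
    print(find_agents(cards, "web_research", "text"))  # ['https://agents.example.com/research']
```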

Artifacts are the standard output format for completed tasks. When a specialist finishes, it packages the result as an Artifact. An Artifact is a structured object made of typed parts (text, files, or structured data) plus metadata such as provenance. The orchestrator receives it over the same A2A channel it used to assign the task.
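
The idea of typed parts plus metadata maps naturally onto a pair of dataclasses. This is a sketch only: the snake_case field names and the `producedBy` metadata key are illustrative choices, not the A2A spec’s exact JSON schema, so check the spec before relying on any field name.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field


@dataclass
class Part:
    kind: str       # type tag, e.g. "text", "file", or "data"
    content: object


@dataclass
class Artifact:
    name: str
    parts: list
    metadata: dict = field(default_factory=dict)  # provenance and other context
    artifact_id: str = field(default_factory=lambda: str(uuid.uuid4()))


def text_artifact(name: str, text: str, produced_by: str) -> Artifact:
    """Package a specialist's text result, recording which agent produced it."""
    return Artifact(name=name, parts=[Part("text", text)], metadata={"producedBy": produced_by})


if __name__ == "__main__":
    artifact = text_artifact("competitor-summary", "Findings go here.", "ResearchAgent")
    # Serialize for the trip back to the orchestrator over the A2A channel.
    print(json.dumps(asdict(artifact), indent=2))
```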

The real payoff is cross-framework interoperability. A LangChain orchestrator can delegate to a CrewAI specialist. A custom Python agent can pass work to an AutoGen flow. As long as both sides speak A2A, the underlying framework doesn’t matter. For a hands-on look at how multi-agent coordination patterns play out in practice, LangGraph’s stateful graph model is one of the cleaner implementations available today.
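
On the wire, A2A is JSON-RPC 2.0 over HTTP, which is what lets frameworks interoperate. The sketch below builds a request asking a remote agent to take on a task, using only the standard library. It assumes the `message/send` method name and text-part shape from recent A2A spec revisions. Earlier drafts used `tasks/send` and slightly different part fields, so confirm which version your peers implement.

The endpoint is the `url` from the example Agent Card, and the bearer token mirrors that card’s declared scheme. The helper names are hypothetical.

```python
import json
import urllib.request
import uuid


def build_message_send(text: str, request_id: int = 1) -> dict:
    """JSON-RPC 2.0 payload asking a remote A2A agent to handle a task described in text."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "message/send",  # assumption: method name from recent A2A spec revisions
        "params": {
            "message": {
                "role": "user",  # the caller (here, the orchestrator) speaks as the user
                "parts": [{"kind": "text", "text": text}],
                "messageId": str(uuid.uuid4()),
            }
        },
    }


def post_json(url: str, payload: dict, token: str) -> dict:
    """POST a JSON-RPC payload with a bearer token and decode the JSON reply."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {token}"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.load(resp)


if __name__ == "__main__":
    payload = build_message_send("Research the competitive landscape for CRM tools")
    print(json.dumps(payload, indent=2))
    # Sending it needs a live agent and a real token, for example:
    # reply = post_json("https://agents.example.com/research", payload, token="YOUR_TOKEN")
```

Neither side needs to know what framework the other runs. The orchestrator only needs the card’s URL and auth scheme, and the specialist only needs to parse the message.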

Head-to-Head: Key Differences

| Feature | MCP | A2A |
|---|---|---|
| Layer | Execution / Tooling | Orchestration / Communication |
| Philosophy | “Here is a hammer, use it.” | “Can you build this for me?” |
| Interaction style | Instruction-oriented (low-level) | Goal-oriented (high-level) |
| Discovery | Static (pre-configured servers) | Dynamic (searching for available agents) |
| Origin | Anthropic | Google, donated to the Linux Foundation |

MCP is like the USB-C standard: one port so any device plugs into any computer. A2A is more like email: it lets independent agents address each other and collaborate without knowing each other’s internals.

When to use each:

- MCP alone: a single agent connecting to local tools and data, the classic chatbot-plus-tools setup.

- A2A alone: multiple specialist agents collaborating, each using its own built-in toolset, with no MCP required.

- Both: workflows where orchestrator agents delegate to specialists via A2A, and those specialists use MCP to reach their tools.

Better Together: The Unified Agentic Workflow

A concrete example shows why the pairing matters. Consider a “Project Manager Agent” tasked with researching a competitive landscape and drafting a summary document.

The flow:

- The Project Manager Agent receives the task, queries the available A2A agents, and finds a Researcher Agent whose card lists web research as a skill.

- Via A2A, the Project Manager delegates the research subtask, specifying the topic and the desired output format.

- The Researcher Agent accepts the task and uses two MCP connections: a Search MCP server that wraps a web search API, and a Google Docs MCP server with read access to a shared document library.

- The Researcher gathers and synthesizes its findings, then packages the result as an A2A Artifact.

- The Artifact flows back to the Project Manager, which uses it to write the final deliverable.

MCP provides the tool access the specialist needs to do the work. A2A handles discovery, delegation, and result transfer between agents. Remove either layer and the workflow falls apart, which is why “Manager-Specialist” architectures rely on both protocols rather than one.
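
To make the division of labor concrete, here is an in-process simulation of the flow above. Everything is stubbed: plain function calls stand in for both the A2A transport and the MCP servers. Every name (`search_tool`, `docs_tool`, `researcher`, `project_manager`) is hypothetical. The sketch only shows which layer each step belongs to.

```python
from typing import Callable, Dict, List


# --- MCP layer (stubbed): tools the Researcher would reach through MCP servers ---
def search_tool(query: str) -> List[str]:
    return [f"search hit on {query} #1", f"search hit on {query} #2"]


def docs_tool(query: str) -> List[str]:
    return [f"shared doc mentioning {query}"]


MCP_TOOLS: Dict[str, Callable[[str], List[str]]] = {"search": search_tool, "docs": docs_tool}


# --- Specialist: accepts a task, uses MCP tools, returns an Artifact-like dict ---
def researcher(task: dict) -> dict:
    findings: List[str] = []
    for tool_name in ("search", "docs"):
        findings.extend(MCP_TOOLS[tool_name](task["topic"]))
    return {
        "name": "research-findings",
        "parts": [{"kind": "data", "data": findings}],
        "metadata": {"producedBy": "ResearcherAgent", "format": task["format"]},
    }


# --- A2A layer (stubbed): card-like entries an orchestrator can discover by skill ---
AGENTS = [{"name": "ResearcherAgent", "skills": ["web_research"], "handler": researcher}]


def project_manager(topic: str) -> str:
    # 1. Discover: pick the first agent advertising the needed skill.
    agent = next(a for a in AGENTS if "web_research" in a["skills"])
    # 2. Delegate: hand off the subtask with its topic and output format.
    artifact = agent["handler"]({"topic": topic, "format": "bullet list"})
    # 3. Receive the Artifact and write the final deliverable from it.
    findings = artifact["parts"][0]["data"]
    return f"Summary: {topic}\n" + "\n".join(f"- {item}" for item in findings)


if __name__ == "__main__":
    print(project_manager("CRM tools"))
```

Swapping the stubs for real transports changes `agent["handler"]` into an A2A request and `MCP_TOOLS` into MCP client calls. The shape of the workflow stays the same.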

Challenges, Security, and What Comes Next

Deployment at scale raises security problems that neither protocol fully solves on its own. The core issue is permission propagation. When a user grants an orchestrator agent access to their email, calendar, and file system, those grants shouldn’t automatically flow to every sub-agent the orchestrator delegates to. An untrusted specialist that receives a task via A2A shouldn’t inherit the orchestrator’s full set of credentials.

Each protocol covers part of this. MCP servers can enforce fine-grained capability limits that restrict what any client can request. A2A supports standard authentication schemes at the agent level, so each agent performs its own access checks independently of the caller’s grants. But wiring these together correctly across a real multi-agent system takes careful design, and the tooling to make that easy is still maturing.
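
One common way to limit permission propagation is scope attenuation. A sub-agent receives at most the intersection of what the orchestrator holds and what the task asks for, and untrusted agents get less still. This is a general least-privilege pattern, not something either spec mandates. The scope strings and the read-only rule for untrusted agents below are hypothetical.

```python
def delegate_scopes(held: set, requested: set, trusted: bool) -> set:
    """Scopes a sub-agent receives for one delegated task."""
    # Attenuate: never grant a scope the orchestrator doesn't hold,
    # and never more than the task asked for (intersection, not union).
    allowed = held & requested
    if not trusted:
        # Hypothetical extra policy: untrusted agents are limited to read-only scopes.
        allowed = {scope for scope in allowed if scope.endswith(":read")}
    return allowed


if __name__ == "__main__":
    # Hypothetical scopes the user granted to the orchestrator.
    orchestrator = {"email:read", "email:send", "calendar:read", "files:read", "files:write"}
    # What the Researcher asks for when it accepts the task.
    request = {"files:read", "files:write", "web:search"}
    print(sorted(delegate_scopes(orchestrator, request, trusted=False)))  # ['files:read']
    print(sorted(delegate_scopes(orchestrator, request, trusted=True)))   # ['files:read', 'files:write']
```

Note that `web:search` is dropped even for a trusted agent, because the orchestrator never held it. The Researcher would need its own credential for that, which is the kind of agent-level check A2A leaves to each agent.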

On adoption: MCP launched in late 2024, and by 2025 it had become the default for tool access in the major AI development environments. A2A is newer, and the ecosystem around it is still being built. Linux Foundation stewardship matters here, because companies are more willing to build core infrastructure on protocols that aren’t controlled by a direct competitor.

A few things worth tracking over the next year:

The SDK gap between MCP and A2A is closing. MCP’s Python and TypeScript SDKs have been mature for a while, and A2A tooling has been catching up quickly. Developer experience drives adoption more than the spec itself.

Agent registries are starting to appear. For A2A’s dynamic discovery to work in practice, you need infrastructure for publishing and searching Agent Cards. Both public registries (for shared agents) and private enterprise registries (for in-house specialists) are being built.
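
At its core, a registry is a small service with two operations: publish a card and search by skill. The sketch below is a hypothetical in-memory version that tags each card as public or private, so in-house specialists stay hidden from outside callers. The class, its methods, and the minimal required fields are assumptions for illustration, not a standard registry API.

```python
from dataclasses import dataclass, field

REQUIRED_FIELDS = ("name", "url", "skills")


@dataclass
class AgentRegistry:
    """Hypothetical in-memory Agent Card registry with public and private entries."""
    cards: dict = field(default_factory=dict)  # keyed by agent URL

    def publish(self, card: dict, visibility: str = "public") -> None:
        missing = [key for key in REQUIRED_FIELDS if key not in card]
        if missing:
            raise ValueError(f"Agent Card missing fields: {missing}")
        # Re-publishing from the same URL replaces the old card (e.g. after a version bump).
        self.cards[card["url"]] = {**card, "visibility": visibility}

    def search(self, skill_id: str, include_private: bool = False) -> list:
        """Names of agents advertising `skill_id`; private cards only for in-house callers."""
        return [
            card["name"]
            for card in self.cards.values()
            if (include_private or card["visibility"] == "public")
            and any(skill.get("id") == skill_id for skill in card["skills"])
        ]


if __name__ == "__main__":
    registry = AgentRegistry()
    registry.publish({"name": "ResearchAgent", "url": "https://agents.example.com/research",
                      "skills": [{"id": "web_research"}]})
    registry.publish({"name": "InternalResearch", "url": "https://agents.internal.example/research",
                      "skills": [{"id": "web_research"}]}, visibility="private")
    print(registry.search("web_research"))                        # ['ResearchAgent']
    print(registry.search("web_research", include_private=True))  # ['ResearchAgent', 'InternalResearch']
```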

Security tooling is the open problem. Production agent deployments are growing faster than guidance on how to combine MCP and A2A without privilege-escalation risks. Expect frameworks and concrete best practices to follow.

The protocols themselves are well designed. The work now is building the infrastructure around them and making it accessible to developers who aren’t already specialists in agent system design.

Botmonster Tech

Botmonster Tech