OpenClaw vs Hermes and Why Memory Kills Agent Loyalty

Hermes Agent, built by Nous Research, has taken about 30% of OpenClaw’s user base by fixing one failure: memory. The Kilo.ai synthesis of 1,300+ r/openclaw comments confirms the figure. OpenClaw still wins on multi-agent breadth and 100+ skills. The right answer depends on which failure mode hurts you more.

Key Takeaways

- About 30% of r/openclaw users have switched to Hermes Agent, mainly for memory reliability.

- Memory failures, not features, are the top reason people leave OpenClaw.

- Hermes ships with memory that works by default; OpenClaw needs heavy prompt-engineering to behave.

- OpenClaw still wins for multi-bot setups across Telegram, Slack, and Discord.

- A growing minority skip both and use OpenAI Codex business-tier instead.

Why r/openclaw Is Migrating to Hermes

The most-cited migration thread on the subreddit is the 167-comment OpenClaw vs Hermes thread. The top-voted answer to “is Hermes worth a look” reads as a clean defection notice. The poster ran OpenClaw for weeks on the same workload, then switched in an afternoon:

In just a few hours it was completing tasks that I had to configure openclaw to do over weeks due to poor memory. It REMEMEBERS things. Hermes to me feels like what openclaw is trying to be.

u/Soul_Mate_4ever (+106, top-voted reply on the vs-Hermes thread)

The misspelling is in the original. That comment sits at the top of the thread for a reason. Most of the migration testimony repeats the same memory complaint. u/spinsilo (+42 karma) put the issue more plainly in the Kilo.ai 1,300-comment synthesis:

Main reason is the memory issue. I’ve wrestled with it since about day 3 and I’m just finding that I’m having to put way too much time into figuring out how to stop it forgetting stuff.

A second thread in the same window, the 182-comment “After 3 months, I’m done. OpenClaw has officially become a money pit” post, repeats the pattern. People leave for Hermes to escape memory churn and the cron jobs OpenClaw silently misses.

The other common failure mode is config-file breakage between releases. Long-form commenters in the vs-Hermes thread describe two-month OpenClaw runs where every release broke a config file with no backward compatibility. By their account, sixty percent of session time went to babysitting the agent, and a switch to Hermes cut the debug load to under five percent. Sixty-to-five is enough of a gap to move a subreddit by 30%.

The Architectural Inversion Behind Both Tools

Feature checklists make OpenClaw and Hermes look near-identical. Both ship multi-channel bot integrations. Both expose a skill or plugin model. Both can drive cron jobs. Both wire into Telegram, Slack, and Discord. The real split is structural, and the boundary line maps cleanly onto the emerging agent-to-agent protocols (MCP and A2A) that newer agents are built around. Brendan O’Leary’s framing on the Kilo blog, surfaced in the Kilo.ai synthesis, sums it up in one line:

Hermes packages a gateway around a learning agent. OpenClaw packages an agent around a messaging gateway.

That single flip drives almost every downstream tradeoff. OpenClaw treats messaging channels as first-class and slots an agent inside. That’s why it ships ten ways to wire up a bot persona, but breaks easily when its plugin graph churns. Hermes treats the agent (with memory and a self-learning loop) as the first-class object and slots channels around it. That’s why memory feels built-in rather than something you fight to retain.
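The inversion can be sketched in a few lines of Python. This is a toy model, not code from either project: the `Agent`, `GatewayFirst`, and `AgentFirst` classes are hypothetical names, and giving each channel its own agent in the gateway-first layout is an assumption made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Agent:
    """Toy agent: acknowledges a message and recalls the user's previous one."""

    memory: dict[str, str] = field(default_factory=dict)

    def handle(self, user: str, text: str) -> str:
        known = self.memory.get(user)
        self.memory[user] = text  # remember the latest message per user
        if known:
            return f"(previously: {known}) ack: {text}"
        return f"ack: {text}"


class GatewayFirst:
    """The gateway owns the channels; each channel slots in its own agent."""

    def __init__(self, channels: list[str]) -> None:
        self.agents = {name: Agent() for name in channels}

    def receive(self, channel: str, user: str, text: str) -> str:
        return self.agents[channel].handle(user, text)


class AgentFirst:
    """One agent owns the state; channels are thin adapters around it."""

    def __init__(self, channels: list[str]) -> None:
        self.agent = Agent()
        self.channels = set(channels)

    def receive(self, channel: str, user: str, text: str) -> str:
        if channel not in self.channels:
            raise KeyError(channel)
        return self.agent.handle(user, text)


fleet = GatewayFirst(["telegram", "slack"])
fleet.receive("telegram", "ana", "deploy at 5pm")
fleet_reply = fleet.receive("slack", "ana", "status?")

solo = AgentFirst(["telegram", "slack"])
solo.receive("telegram", "ana", "deploy at 5pm")
solo_reply = solo.receive("slack", "ana", "status?")

print(fleet_reply)  # ack: status?
print(solo_reply)   # (previously: deploy at 5pm) ack: status?
```

In this toy, the gateway-first fleet gets distinct per-channel agents for free but has to do extra work to share memory between them, while the agent-first layout shares memory for free but has only one persona to offer.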

Stability follows from the layout. OpenClaw’s gateway leans on a churning skill catalog. When bundled and external plugins half-split, or when ClawHub artifact metadata is mid-migration, gateway cold paths do too much work and the agent slips. Hermes’ agent-first design dodges this class of breakage because its surface area is smaller. Its self-evaluation pass-rate is suspect though (more on that below).

Founder Peter Steinberger admitted the design cost in public. In the “OpenClaw Had a Rough Week” post, he wrote:

The trouble started around 2026.4.24. By 2026.4.29 it was obvious enough that nobody could pretend this was just a few weird installs. Gateways got slower. Some installs got stuck in plugin dependency repair loops. Discord, Telegram, WhatsApp and other channels behaved worse than they should. People downgraded. People lost time.

He pledged to make the core “smaller, safer, and more infrastructure-grade,” with an LTS release planned next to the faster update cycle. The gap may narrow. For now it is the gap that explains the migration.

Where OpenClaw Still Wins

The Reddit noise reads as a one-sided rout. Yet OpenClaw keeps real edges, and each one is enough to keep a real slice of users in place.

The multi-agent and persona stack is the most concrete. Each OpenClaw agent can have its own channel, its own bot identity, and its own persona. Users running 5 to 10 agent setups across Telegram, Slack, and Discord cite this as the reason they stay. The Kilo.ai analysis treats this as the edge no rival has matched. Hermes is a single-agent story stretched across channels. OpenClaw is a fleet. If you have not yet picked coordination primitives for that fleet, the multi-agent orchestration patterns that work for parallel coding agents transfer right over to multi-channel bot fleets.

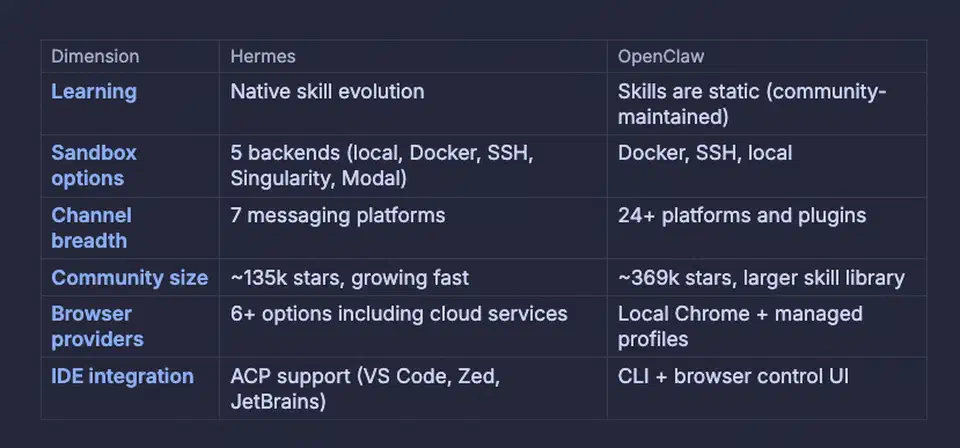

Skill breadth is the second. The KDnuggets explainer from March 17, 2026 confirms 100+ built-in skills, plus the ClawHub plugin marketplace. Hermes’ integration list is thinner. Because the ecosystems don’t talk to each other, the skills don’t carry over when you migrate.

Cron determinism counts for more than people give it credit for. OpenClaw’s scheduler is imperfect, but you can shape it in a way most LLM-powered agents won’t allow. For people who treat their agent as cron-with-an-LLM-on-top, that beats memory.

Then there is raw iteration history. OpenClaw has shipped 137 releases versus Hermes’ 11, per the Kilo.ai count. More releases means more breaking changes. It also means the project has been pressure-tested in public for longer. If your goal is to learn how agentic systems break, OpenClaw is the better classroom.

Where Hermes Wins

Cross-check the wins against the verbatim migration testimony in the threads, and four advantages survive scrutiny.

The first is setup quality. u/Eastern_Interest_908 (+5 karma) put the polish gap bluntly in the Kilo synthesis:

Looking through code it looks like an actual app where openclaw is more like tech demo.

That maps to repeated migration claims. Hermes is up and running in an afternoon where OpenClaw needed weeks of config.

The second is default memory. Things stick by default. OpenClaw’s memory is there in theory, but it needs heavy prompt-engineering and skill-side bookkeeping to behave. Hermes asks the user to opt out of retention rather than opt in. Stateful agent frameworks such as LangGraph take a similar persist-the-state approach for multi-step agents, and Hermes ships with the same instinct.
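The opt-in versus opt-out distinction comes down to which way a single default points. A minimal sketch, assuming a hypothetical `MemoryStore` class that stands in for neither tool's real memory layer:

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MemoryStore:
    """Toy key-value memory with a configurable retention default."""

    retain_by_default: bool
    facts: dict[str, str] = field(default_factory=dict)

    def observe(self, key: str, value: str, retain: Optional[bool] = None) -> None:
        # retain=None means the caller expressed no preference: use the default.
        keep = self.retain_by_default if retain is None else retain
        if keep:
            self.facts[key] = value

    def recall(self, key: str) -> Optional[str]:
        return self.facts.get(key)


opt_in = MemoryStore(retain_by_default=False)  # you must ask it to remember
opt_out = MemoryStore(retain_by_default=True)  # you must ask it to forget

for store in (opt_in, opt_out):
    store.observe("timezone", "UTC+2")                  # no explicit flag
    store.observe("api_token", "s3cret", retain=False)  # explicit opt-out

print(opt_in.recall("timezone"))    # None
print(opt_out.recall("timezone"))   # UTC+2
print(opt_out.recall("api_token"))  # None
```

With the opt-in default, every fact the caller forgets to flag is lost, which matches the "put way too much time into figuring out how to stop it forgetting stuff" complaint quoted earlier.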

The third is the self-learning loop, which is also Hermes’ biggest weakness. The agent tunes skill behavior based on outcomes. Its self-evaluation pass-rate is suspect though: the Kilo.ai analysis flags a false-positive bias, and the learning loop can silently overwrite user customizations. Accept it as a feature with caveats.
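The overwrite risk is easy to see in miniature. The sketch below is hypothetical and does not describe how Hermes merges learned behavior: `apply_learned` and the `pinned` set are invented names that show a naive merge next to one possible guard.

```python
from __future__ import annotations


def apply_learned(
    config: dict[str, object],
    learned: dict[str, object],
    pinned: set[str],
) -> tuple[dict[str, object], list[str]]:
    """Merge learned values into a copy of config, skipping user-pinned keys.

    Returns the merged config and the list of keys that were protected.
    """
    merged = dict(config)
    skipped: list[str] = []
    for key, value in learned.items():
        if key in pinned:
            skipped.append(key)  # the user's choice wins; record it for review
        else:
            merged[key] = value
    return merged, skipped


config = {"tone": "terse", "retries": 2}      # user customizations
learned = {"tone": "friendly", "retries": 3}  # what a learning loop proposes

naive = {**config, **learned}                 # silent overwrite
guarded, skipped = apply_learned(config, learned, pinned={"tone"})

print(naive)    # {'tone': 'friendly', 'retries': 3}
print(guarded)  # {'tone': 'terse', 'retries': 3}
print(skipped)  # ['tone']
```

Returning the skipped keys rather than dropping them silently gives the user something to review, which is the part a false-positive-prone evaluator cannot supply on its own.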

The fourth is checkpoint and rollback. Hermes’ release model is friendlier to “I broke something, give me yesterday’s state” than OpenClaw’s plugin-graph repair loops. When OpenClaw’s update path dies in dependency repair, there is far more to untangle before you are back on a working install.
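The "give me yesterday’s state" pattern is simple to sketch. The `CheckpointedState` class below is a hypothetical in-memory illustration, not Hermes’ actual mechanism, which may well persist snapshots differently:

```python
from __future__ import annotations

import copy
from typing import Any


class CheckpointedState:
    """Toy agent state with labeled snapshots and rollback."""

    def __init__(self, state: dict[str, Any]) -> None:
        self.state = state
        self._snapshots: list[tuple[str, dict[str, Any]]] = []

    def checkpoint(self, label: str) -> None:
        # Deep-copy so later mutations cannot leak into the snapshot.
        self._snapshots.append((label, copy.deepcopy(self.state)))

    def rollback(self, label: str) -> None:
        # Search newest-first so a reused label restores its latest snapshot.
        for name, snapshot in reversed(self._snapshots):
            if name == label:
                self.state = copy.deepcopy(snapshot)
                return
        raise KeyError(f"no checkpoint named {label!r}")


agent = CheckpointedState({"skills": ["search"], "version": 1})
agent.checkpoint("yesterday")

# A bad update mutates the live state.
agent.state["skills"].append("broken_plugin")
agent.state["version"] = 2

agent.rollback("yesterday")
print(agent.state)  # {'skills': ['search'], 'version': 1}
```

The deep copies matter: with a shallow copy, appending to the `skills` list would also corrupt the snapshot, and the rollback would restore the broken plugin.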

The design details live in the Hermes Agent project repo on GitHub. The honest Hermes pitch is not “more capable than OpenClaw”; it is “fewer ways to fail in the first 48 hours of use.”

Comparison at a Glance

| Dimension | OpenClaw | Hermes Agent | Codex business-tier |
|---|---|---|---|
| Memory model | Manual, prompt-engineered | Default-on, agent-curated | Built into the platform |
| Multi-agent personas | Yes (5 to 10 typical) | Single-agent, multi-channel | No native persona model |
| Channel coverage | Telegram, Slack, Discord, WhatsApp, more | Same channels, fewer integrations | Browser/GitHub-driven, no Telegram bot |
| Skill catalog | 100+ built-in plus ClawHub | Smaller, growing | None (general-purpose codex) |
| Release cadence | 137 releases | 11 releases | Hosted, no public cadence |
| Self-evaluation reliability | N/A | Flagged false-positive bias | Hosted; user-invisible |
| Where it shines | Multi-bot fleets, cron jobs | Long-running unsupervised tasks | Heavy coding workloads |

The Third Option: Codex Business-Tier as a Meta-Agent

A growing minority of users in the vs-Hermes thread skip the comparison and run OpenAI Codex on the business-tier subscription as their agent layer. One commenter’s account shows the pattern: they ran OpenClaw and Hermes side by side on the same model (minimax 2.5), deleted both after a week, moved to business-tier Codex, and reported zero repeat issues across the same workload.

The same commenter pinned the protocol-level gap. Codex 5.4 reportedly rebuilt Hermes’ tool-calling protocol with a 70% drop in token usage. Hermes-on-minimax-2.5 was still token-burny and debug-heavy in their tests. Codex was not. The terminal-side cousin is the OpenAI Codex CLI Rust agent, which ships the same model with sandbox-level OS isolation and MCP support.

Codex still loses for some users, and the failure modes are concrete. There is no native Telegram bot story, so you need a phone or a browser to drive the GitHub-attached cloud Codex. There is no per-channel persona model, so a fleet of distinct bots has to be glued together with outside scripting. There is no skill marketplace, so the long tail of community-built integrations does not carry over. If your workflows live in chat channels with personas, Codex is not a drop-in.

A scope note: the headline matchup here is OpenClaw vs Hermes. Codex earns a section because it is the honest answer for some users, not because it deserves the whole comparison.

When NOT to Use This

- You already run a 5 to 10 persona OpenClaw setup across Telegram, Slack, and Discord. Hermes’ multi-agent story is much thinner.

- You depend on OpenClaw skills with no Hermes equivalent. The ecosystems are not interoperable.

- You can’t tolerate Hermes’ self-learning agent overwriting your custom config without warning.

- You want a stable platform with a long release history. Hermes has shipped 11 releases versus OpenClaw’s 137.

- You have already migrated to Codex business-tier. The OpenClaw vs Hermes choice is moot at that point.

- You only run interactive (not unsupervised) workflows. Memory matters less when a human is in the loop on every turn.

FAQ

Is Hermes actually better than OpenClaw?

Is the Hermes hype real or astroturfed?

Can I run OpenClaw and Hermes together?

Why is OpenClaw's memory unreliable?

Should I just use Codex business-tier instead of either?

Why does OpenClaw's release cadence sometimes hurt rather than help?

Botmonster Tech
