OpenAI Codex CLI: The Rust-Powered Terminal Agent Taking on Claude Code

OpenAI Codex CLI is an open-source (Apache 2.0), Rust-built terminal coding agent. It has over 72,000 GitHub stars. It pairs GPT-5.4’s 272K default context window, which you can push to 1M tokens, with OS-level sandboxing. That sandbox runs on Apple Seatbelt on macOS and Landlock plus seccomp on Linux. Here is the key point: Codex CLI is the only major AI coding agent that enforces security at the kernel level, not through application-layer hooks. With codex exec for CI pipelines, MCP client and server support, and a GitHub Action for PR review, it is the most infrastructure-ready rival to Claude Code in 2026.

Qwen3.6-35B-A3B: Alibaba's Open-Weight Coding MoE

Qwen3.6-35B-A3B is Alibaba Cloud’s Apache 2.0 sparse Mixture-of-Experts model released April 14, 2026. It carries 35 billion total parameters but activates only about 3 billion per token, and on agentic coding suites it beats Gemma 4-31B and matches Claude Sonnet 4.5 on most vision tasks. A 20.9GB Q4 quantization runs on a MacBook Pro M5, which is the reason this release has taken over half the AI timeline for the past week.

Structured Output from LLMs: JSON Schemas and the Instructor Library

The Instructor library (v1.7+) patches LLM client libraries to return validated Pydantic models instead of raw text. It does this with JSON schema enforcement in the system prompt, auto retries on validation failure, and native structured output modes where the provider supports them. It works with OpenAI, Anthropic, Ollama, and any OpenAI-compatible API. You define your output as a Python class and get back typed, validated data. No regex parsing, no json.loads() wrapped in try/except, no manual type casting.
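To make the validate-and-retry idea concrete, here is a minimal standard-library sketch. It is not Instructor's code or API: `User`, `parse_user`, `extract_user`, and `make_fake_llm` are hypothetical names, and the "model" is a canned stand-in so the example runs offline. It only illustrates the loop such a library automates: parse the reply, validate it against a declared shape, and feed the validation error back on failure.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass
class User:
    """The shape we want back from the model (hypothetical example type)."""
    name: str
    age: int


def parse_user(raw: str) -> User:
    """Validate raw model text against the User shape; raise ValueError on mismatch."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    name, age = data.get("name"), data.get("age")
    if not isinstance(name, str):
        raise ValueError("'name' must be a string")
    # bool is a subclass of int in Python, so exclude it explicitly
    if not isinstance(age, int) or isinstance(age, bool):
        raise ValueError("'age' must be an integer")
    return User(name=name, age=age)


def extract_user(llm: Callable[[str], str], prompt: str, max_retries: int = 2) -> User:
    """Call the model, validate the reply, and retry with the error on failure."""
    message = prompt
    last_error = None
    for _ in range(max_retries + 1):
        raw = llm(message)
        try:
            return parse_user(raw)
        except ValueError as exc:
            last_error = exc
            # Tell the model what was wrong so the next attempt can correct it.
            message = f"{prompt}\n\nYour previous reply was rejected: {exc}. Reply with JSON only."
    raise ValueError(f"no valid output after {max_retries + 1} attempts: {last_error}")


def make_fake_llm() -> Callable[[str], str]:
    """Stand-in for a real model: the first reply is invalid, the second is valid."""
    replies = iter(['{"name": "Ada", "age": "thirty-six"}', '{"name": "Ada", "age": 36}'])
    return lambda message: next(replies)


if __name__ == "__main__":
    print(extract_user(make_fake_llm(), "Extract the user: Ada is 36."))
```

In this sketch the first canned reply fails the integer check, so the loop re-prompts once and the second reply passes validation. A real setup would replace the fake callable with an actual client call and the hand-written checks with a schema library.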

What Are the Best Ergonomic Split Keyboards for Programmers (2026)?

The three best ergonomic split keyboards for programmers in 2026 are the MoErgo Glove80 ($399, best overall comfort with contoured key wells and aggressive tenting), the ZSA Voyager ($365, best portable option with a low-profile design and magnetic tenting legs), and the Kinesis Advantage360 Pro ($499, best for deep key well fans with wireless ZMK firmware). All three offer full Linux support, open-source firmware tweaks, and columnar stagger layouts that cut finger strain on long coding days.

WireGuard Site-to-Site VPN: 400-500 Mbps on Raspberry Pi

To connect two remote LANs over WireGuard, you configure a WireGuard peer on one gateway device at each site, set AllowedIPs to include the remote site’s subnet, enable IP forwarding on both gateways, and add routing so LAN clients send cross-site traffic through the tunnel. Once configured, every device on either LAN can reach devices on the other LAN transparently - no VPN client installation on individual machines. A single UDP port open on at least one side is all you need.
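The steps above can be sketched as a small Python helper that renders a wg-quick style config for each gateway. Everything specific here is an assumption for illustration: the `Site` type and `render_config` function are hypothetical, the subnets, tunnel addresses, hostname, and port are made up, and the keys are placeholders. Only the config keys themselves (PrivateKey, Address, ListenPort, PublicKey, AllowedIPs, Endpoint, PersistentKeepalive) are standard WireGuard settings.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass
class Site:
    """One side of the tunnel. All values used below are illustrative placeholders."""
    name: str
    lan: str                           # LAN subnet behind this gateway
    tunnel_ip: str                     # gateway's address inside the tunnel, with prefix
    private_key: str
    public_key: str
    public_host: Optional[str] = None  # set only if this side has a reachable UDP port
    listen_port: Optional[int] = None


def render_config(local: Site, remote: Site) -> str:
    """Render the config for `local`, routing `remote`'s LAN through the tunnel."""
    local_lan = ipaddress.ip_network(local.lan)
    remote_lan = ipaddress.ip_network(remote.lan)
    # Overlapping LANs cannot be routed to each other without NAT tricks.
    if local_lan.overlaps(remote_lan):
        raise ValueError(f"{local.name} and {remote.name} use overlapping subnets")

    remote_tunnel_host = ipaddress.ip_interface(remote.tunnel_ip).ip
    lines = [
        "[Interface]",
        f"PrivateKey = {local.private_key}",
        f"Address = {local.tunnel_ip}",
    ]
    if local.listen_port is not None:
        lines.append(f"ListenPort = {local.listen_port}")
    lines += [
        "",
        "[Peer]",
        f"PublicKey = {remote.public_key}",
        # The remote gateway's tunnel address plus the whole LAN behind it.
        f"AllowedIPs = {remote_tunnel_host}/32, {remote_lan}",
    ]
    if remote.public_host and remote.listen_port:
        lines.append(f"Endpoint = {remote.public_host}:{remote.listen_port}")
        # Keepalives help the side without an open port hold its NAT mapping.
        lines.append("PersistentKeepalive = 25")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    site_a = Site("site-a", "192.168.1.0/24", "10.99.0.1/24",
                  "<A_PRIVATE_KEY>", "<A_PUBLIC_KEY>", "a.example.com", 51820)
    site_b = Site("site-b", "192.168.2.0/24", "10.99.0.2/24",
                  "<B_PRIVATE_KEY>", "<B_PUBLIC_KEY>")
    print(render_config(site_a, site_b))
    print(render_config(site_b, site_a))
```

The config alone does not finish the job. Each gateway still needs IP forwarding enabled (on Linux, the `net.ipv4.ip_forward` sysctl), and LAN clients need a route to the remote subnet via their local gateway unless that gateway is already their default router.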

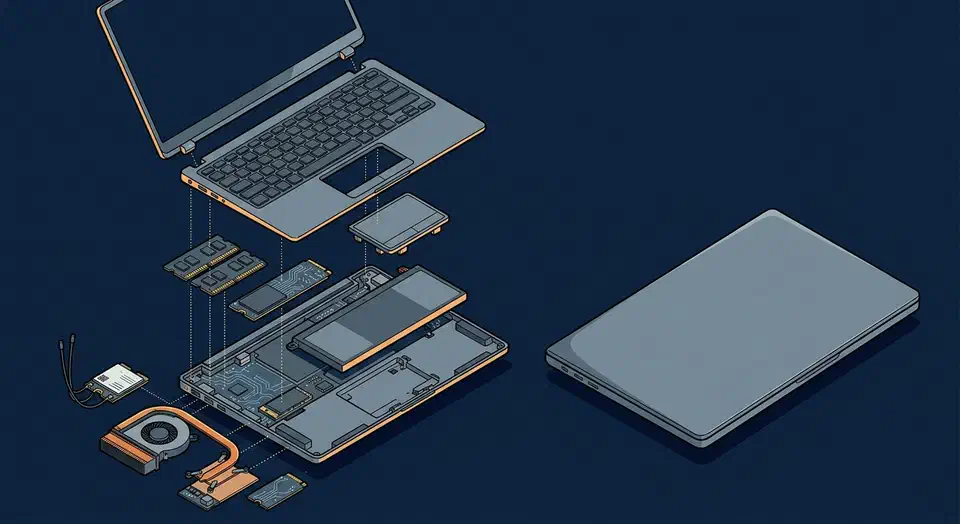

Framework 16 vs. ThinkPad X1 Carbon: Best Linux Dev Laptop in 2026

The ThinkPad X1 Carbon Gen 13 is the better daily driver for developers who prioritize battery life, keyboard quality, and a polished out-of-the-box Linux experience. The Framework Laptop 16 wins if you value user-replaceable components, GPU modularity, and the ability to upgrade RAM and storage years down the line. Both run Linux well in 2026, but they reflect different philosophies: the ThinkPad is a refined appliance, and the Framework is a repairable platform.