You can switch your Linux install from X11 to Wayland without reinstalling anything. The move comes down to picking a Wayland session at your login screen. After that, three things need follow-up: Xwayland for legacy X11 apps, input setup through libinput instead of xorg.conf, and a few environment variables. Those variables let toolkits like Qt, GTK, and Electron render through Wayland instead of falling back to X11. Most people finish in an afternoon. You can keep an X11 session as a fallback until you’re happy everything works.
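To make the environment-variable step concrete, here is a minimal Python sketch that reports the current session type and which toolkit hints are not yet set. The variable names and values in `WAYLAND_HINTS` are assumptions about a typical setup: not every Qt, GTK, or Electron version reads them, and recent GTK builds already prefer Wayland without any hint. The helper names `session_type` and `missing_hints` are made up for this illustration.

```python
import os

# Assumed toolkit hints; check your toolkit versions before relying on them.
WAYLAND_HINTS = {
    "QT_QPA_PLATFORM": "wayland;xcb",        # Qt: try Wayland, then fall back to X11 (xcb)
    "GDK_BACKEND": "wayland,x11",            # GTK: prefer Wayland, then fall back to X11
    "ELECTRON_OZONE_PLATFORM_HINT": "auto",  # Electron: read by some versions only
}


def session_type(env=None):
    """Return the session type reported by the login manager, or 'unknown'."""
    env = os.environ if env is None else env
    return env.get("XDG_SESSION_TYPE", "unknown")


def missing_hints(env=None):
    """Return the hints from WAYLAND_HINTS that are not set in the environment."""
    env = os.environ if env is None else env
    return {name: value for name, value in WAYLAND_HINTS.items() if name not in env}


if __name__ == "__main__":
    print("session type:", session_type())
    for name, value in missing_hints().items():
        # Printed in shell syntax so the lines can be pasted into a profile file.
        print(f"export {name}='{value}'")
```

Run it once from the X11 session and once from the Wayland session. If the reported session type does not change, the login screen is still starting the old session.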

Docker Image Hardening: Minimal Bases, Non-Root, and Trivy Scans

Hardening a Docker image means cutting the attack surface at every layer. Start from a minimal base like distroless or Alpine. Run as a non-root user. Set the filesystem read-only. Drop all Linux capabilities and add back only what the app needs. Pin dependency versions with checksums. Scan images with Trivy or Grype before you push. Each layer of this checklist stands on its own, so you can adopt them one at a time.
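Several items on that checklist are applied when the container starts, not when the image is built. As a rough sketch, the Python helper below assembles a `docker run` command line that applies the non-root user, the read-only filesystem, and the capability drop. The function name `hardened_run_args`, the default UID/GID of 10001, and the image name in the usage example are placeholders, not values from any particular project.

```python
def hardened_run_args(image, uid=10001, gid=10001, add_caps=()):
    """Build a `docker run` argument list with basic runtime hardening.

    uid/gid are arbitrary non-root placeholders; add_caps lists the
    capabilities the app actually needs after everything is dropped.
    """
    args = [
        "docker", "run", "--rm",
        "--read-only",             # mount the container root filesystem read-only
        "--cap-drop=ALL",          # drop every Linux capability first
        "--user", f"{uid}:{gid}",  # run as a non-root user and group
    ]
    for cap in add_caps:
        args.append(f"--cap-add={cap}")  # add back only what is needed
    args.append(image)
    return args


if __name__ == "__main__":
    # Hypothetical image name; NET_BIND_SERVICE allows binding ports below 1024.
    print(" ".join(hardened_run_args("example/app:1.0", add_caps=["NET_BIND_SERVICE"])))
```

Returning a list instead of a single string means you can hand the result to `subprocess.run` without shell quoting problems.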

Implement OAuth 2.0 with PKCE: Flask + GitHub Login

You implement OAuth 2.0 login by using the Authorization Code flow with PKCE (Proof Key for Code Exchange). Your web app redirects the user to the provider’s authorization endpoint with a code_challenge, the user authenticates and consents, the provider redirects back with an authorization code, and your backend exchanges that code along with the code_verifier for an access token. PKCE is mandatory for every client that uses the authorization code flow under the OAuth 2.1 draft specification (currently at draft-ietf-oauth-v2-1-15) and eliminates the need for a client secret in public clients. Building this from scratch (without Auth0, Clerk, or NextAuth) takes roughly 200 lines of code and teaches you exactly how token exchange, session management, and token refresh actually work.
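The code_verifier and code_challenge pair is the heart of PKCE, and it fits in a few lines of standard-library Python. This is a minimal sketch of the S256 method from RFC 7636, not the article's full Flask implementation. The function names `challenge_for` and `make_pkce_pair` are made up for this example.

```python
import base64
import hashlib
import secrets


def _b64url(data: bytes) -> str:
    """Base64url-encode without '=' padding, as PKCE requires."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def challenge_for(verifier: str) -> str:
    """Derive the S256 code_challenge from a code_verifier."""
    return _b64url(hashlib.sha256(verifier.encode("ascii")).digest())


def make_pkce_pair(num_bytes: int = 32):
    """Return (code_verifier, code_challenge).

    32 random bytes encode to a 43-character verifier, the minimum
    length allowed by RFC 7636 (the maximum is 128 characters).
    """
    verifier = _b64url(secrets.token_bytes(num_bytes))
    return verifier, challenge_for(verifier)


if __name__ == "__main__":
    verifier, challenge = make_pkce_pair()
    # Keep the verifier server-side (for example in the session) and send
    # only the challenge, with code_challenge_method=S256, in the redirect.
    print("code_verifier: ", verifier)
    print("code_challenge:", challenge)
```

The verifier never leaves your backend until the token exchange. Someone who intercepts the authorization code cannot redeem it, because the provider hashes the verifier it receives and compares the result with the challenge sent earlier.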

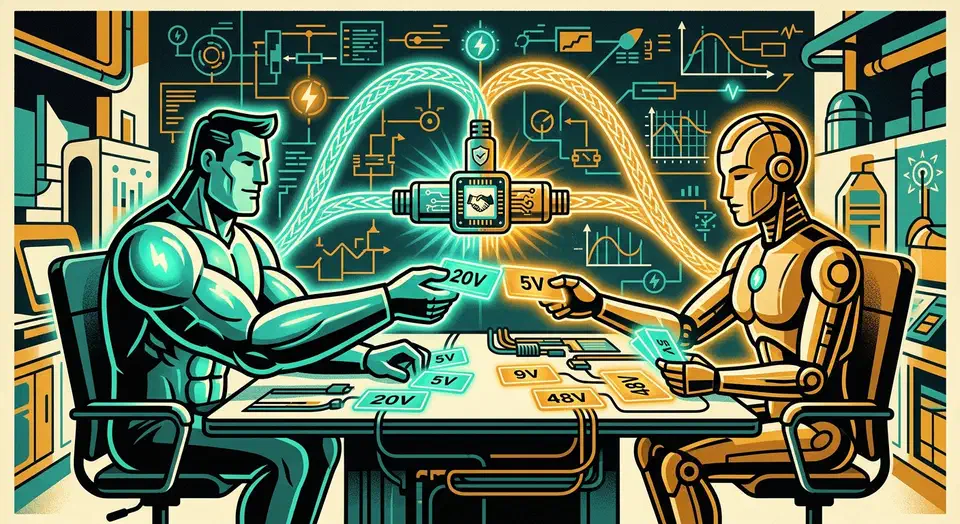

Why Is My USB-C Charger So Slow? Understanding USB Power Delivery

USB Power Delivery (USB-PD) is supposed to be the universal charging standard that ends cable chaos. In practice, plugging in the wrong cable or charger gives you a device that charges at 5W instead of 100W - or refuses to charge at all. The root cause is almost always one of three things: a cable rated below what the device needs, a charger that advertises high wattage but only supports a narrow set of voltage profiles, or confusion between USB-PD and the half-dozen proprietary fast-charging protocols that coexist with it.
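The first two failure modes come down to simple arithmetic: power is voltage times current, and the weakest link sets both. The Python sketch below is a deliberately simplified model, not the real USB-PD negotiation, which also involves cable e-markers and programmable supplies. The function name `best_power`, its parameters, and the example profiles are illustrative assumptions.

```python
def best_power(charger_profiles, cable_max_amps, device_voltages, device_max_watts):
    """Estimate the highest wattage a charger, cable, and device can agree on.

    charger_profiles: iterable of (volts, max_amps) pairs the charger offers
    cable_max_amps:   current limit of the cable (commonly 3 A or 5 A)
    device_voltages:  set of voltages the device accepts
    device_max_watts: the most power the device will draw
    """
    best = 0.0
    for volts, amps in charger_profiles:
        if volts not in device_voltages:
            continue  # the device cannot use this profile at all
        watts = volts * min(amps, cable_max_amps)  # the cable caps the current
        best = max(best, min(watts, device_max_watts))
    return best


if __name__ == "__main__":
    full_charger = [(5, 3), (9, 3), (15, 3), (20, 5)]
    laptop_voltages = {5, 9, 15, 20}
    print(best_power(full_charger, 3, laptop_voltages, 100))  # 3 A cable
    print(best_power(full_charger, 5, laptop_voltages, 100))  # 5 A cable
    # A charger offering only 5 V and 20 V, paired with a device that tops out at 15 V
    print(best_power([(5, 3), (20, 5)], 5, {5, 9, 15}, 45))
```

In this model the 3 A cable limits the laptop to 60 W and the 5 A cable reaches the full 100 W. In the third case the device falls back to 15 W on the 5 V profile, which shows how a charger with a high headline wattage can still charge slowly.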

Manage Your Dev Environment with Nix Shells (No Docker Required)

If you have ever handed a new team member a README full of “install Node 22, then Python 3.12, then make sure your openssl headers match” instructions, you already know the problem. Nix flakes solve it at the root: instead of documenting what to install, you declare the exact toolchain in a flake.nix file, commit it alongside your code, and every developer runs nix develop to get an identical environment, with the same compiler, the same CLI versions, and the same system libraries. In 2026, Nix flakes are stable, the Nixpkgs repository holds over 100,000 packages, and the ecosystem around flakes has matured to the point where the learning curve is manageable even for teams with no prior Nix experience.

MiniMax M2.7: Model That Almost Matches Claude Opus 4.6

MiniMax M2.7, released in April 2026, is a 230B-parameter open-weights reasoning model (Mixture-of-Experts, 10B active, 8 of 256 experts routed per token) that scores 50 on the Artificial Analysis Intelligence Index. That lands it on par with Sonnet 4.6 across coding and agent benchmarks and within a couple of points of Claude Opus 4.6. Weights are on HuggingFace at MiniMaxAI/MiniMax-M2.7, the hosted API runs $0.30 / $1.20 per million input/output tokens (roughly a tenth of Opus), and if you have a 128GB-unified-memory Mac Studio, an AMD Strix Halo box, or an NVIDIA DGX Spark, you can run it offline with zero token bills. Two big asterisks: the M2.7 license is not the permissive M2.5 license (commercial use is restricted), and there is no multimodal support. For homelabbers and agent builders who are text-only and non-commercial, M2.7 is the best locally runnable Opus-class option shipped so far.
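To put the hosted pricing in perspective, here is a small Python sketch that turns the quoted per-million-token rates into a bill for a given workload. The rates come from the paragraph above. The function name `api_cost_usd` and the example token counts are made up for illustration.

```python
INPUT_USD_PER_MILLION = 0.30   # hosted API price quoted above, input tokens
OUTPUT_USD_PER_MILLION = 1.20  # hosted API price quoted above, output tokens


def api_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the hosted API cost in US dollars for a token workload."""
    return (
        input_tokens / 1_000_000 * INPUT_USD_PER_MILLION
        + output_tokens / 1_000_000 * OUTPUT_USD_PER_MILLION
    )


if __name__ == "__main__":
    # Hypothetical agent run: 2M tokens read, 0.5M tokens generated.
    print(f"${api_cost_usd(2_000_000, 500_000):.2f}")
```

Agent workloads tend to read far more tokens than they write, so the input rate usually dominates the bill even though the output rate is four times higher.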