Ditching Claude Opus for GLM 5.1 in OpenClaw at $18/Mo

After Anthropic’s third-party tool restrictions priced agentic users off Claude Opus 4.6, the cheapest working OpenClaw stack is Z.ai’s $18/mo GLM 5 Turbo plan, with Ollama-cloud’s $20/mo GLM 5.1 and MiniMax’s $40/mo highspeed tier as the next two rungs. Kimi 2.6 stays API-only because local deployment needs roughly 750 GB of RAM.

Key Takeaways

- Z.ai’s $18/mo plan running GLM 5 Turbo is the cheapest OpenClaw backend that actually works.

- MiniMax highspeed at $40/mo handles heavier workloads without the four-figure surprise bills.

- Kimi 2.6 needs around 750 GB of RAM to self-host, so almost everyone runs it through the API.

- Keep Claude on the planner role; route scheduled jobs to the cheap backends.

- China-hosted models trade dollars for privacy on iMessage, contacts, and email skills.

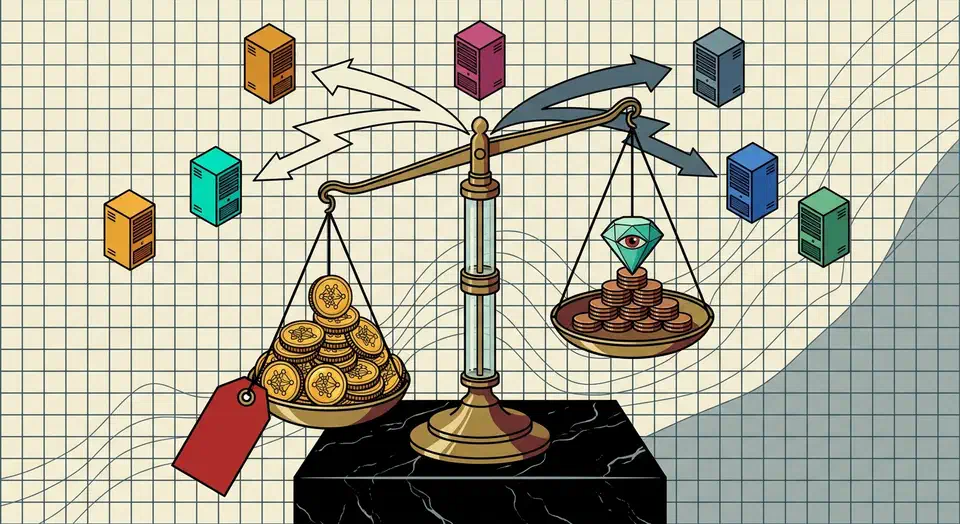

Why $1,500/mo Opus Bills Pushed Users to GLM

The pressure here is simple. The moment Anthropic’s third-party tool restrictions kicked in, OpenClaw users who had been running on the Claude Pro CLI got nudged onto pay-per-token API access. At Opus 4.6 list pricing of $15 per million input tokens and $75 per million output tokens, agentic loops add up fast. The OP of the r/openclaw PSA thread tracked his own bill at roughly $1,500/mo before he switched. That figure is the reference point most cost-comparison threads on the sub now cite.

OpenClaw’s design is what makes this so painful. A single user-facing instruction often expands into a long sequence of small tool calls, each one growing the context. Pay-per-token loves that pattern; your wallet does not. As the Carly AI explainer put it, OpenClaw can “quietly run up four-figure API bills while [users] slept” if nobody is watching the gateway.
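To see why that pattern is expensive under per-token billing, here is a minimal sketch of the arithmetic. The Opus 4.6 list prices are the ones quoted above. The token counts, and the assumption that every call resends the full context with no prompt caching, are illustrative inputs rather than measured OpenClaw behavior.

```python
# Illustrative only: token counts below are assumptions, not measurements.
INPUT_USD_PER_MTOK = 15.0    # Opus 4.6 list price quoted in the article
OUTPUT_USD_PER_MTOK = 75.0


def loop_cost_usd(tool_calls: int, base_context: int,
                  growth_per_call: int, output_per_call: int) -> float:
    """Cost of one instruction that fans out into `tool_calls` model calls.

    Assumes each call resends the whole context (no prompt caching) and
    appends `growth_per_call` tokens to that context for the next call.
    """
    input_tokens = sum(base_context + i * growth_per_call
                       for i in range(tool_calls))
    output_tokens = tool_calls * output_per_call
    return (input_tokens * INPUT_USD_PER_MTOK
            + output_tokens * OUTPUT_USD_PER_MTOK) / 1_000_000


if __name__ == "__main__":
    one = loop_cost_usd(1, base_context=8_000,
                        growth_per_call=2_000, output_per_call=500)
    twenty = loop_cost_usd(20, base_context=8_000,
                           growth_per_call=2_000, output_per_call=500)
    print(f"1 call:   ${one:.4f}")
    print(f"20 calls: ${twenty:.2f}")
```

Under these assumed numbers, a 20-call loop costs about 56 times as much as a single call rather than 20 times, because the resent context makes input tokens grow roughly quadratically with loop length.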

The obvious workaround is to keep using the Claude CLI on a Pro or Max subscription. That works for interactive sessions, but the Pro and Max plans cap usage roughly every five hours, and a cron-driven OpenClaw fleet (news fetch every 15 minutes, social posts on a schedule, productivity rollups overnight) burns through that cap in minutes. So users went looking for something cheaper that could carry the cron load while a Claude subscription stayed on planning duty.

The Cheap-Stack Pricing Table

Here is what people on r/openclaw are actually paying, with sources tagged so you can audit each figure:

| Backend | Price | Best for | Hosted in |
|---|---|---|---|
| Z.ai GLM 5 Turbo (coding plan) | $18/mo | Cron jobs, news, social, summaries | China |
| Ollama-cloud GLM 5.1 | $20/mo | Same workloads, higher rate limit | China-model |
| OpenAI Codex bundled with ChatGPT | $0 incremental | Coding, chat with rate-limit watch | US |
| MiniMax highspeed | $40/mo | Hermes-class agents, heavier loads | China |
| DeepSeek V4 Flash | API metered | High-context cheap inference | China |
| Anthropic Claude Pro | $20/mo | Planning, complex tool-calling | US |

The Z.ai number comes from a commenter on the r/openclaw money-pit thread who said plainly:

$18 a month Z.ai coding plan. Have been using GLM 5 Turbo. Works great with OpenClaw. Also replaced my productivity skills and markdown file with a Postgres and mcp server. Haven’t ran into any usage issues, but also don’t use OpenClaw for coding projects or stuff like that. Mostly just automated news, social media posts, and track productivity stuff.

u/CommitteeDry5570 (r/openclaw money-pit thread, Z.ai GLM 5 Turbo on the $18/mo plan)

The Ollama-cloud number lands one tier up: the same thread documents users abandoning Codex auth after hitting rate limits within 2 to 3 days and putting $20/mo into Ollama-cloud GLM 5.1 for a much higher, cron-friendly limit. That is why two GLM tiers coexist in the cost table: Z.ai for the hobbyist load, Ollama-cloud when your cron load starts hitting the rate limits. MiniMax shows up at the next price point on the highspeed plan. DeepSeek V4 Flash gets glowing mentions in a sibling thread where the OP joked about dropping $25,000 on a workstation to feed it; treat it as the high-context cheap option once you already have hardware. Codex bundled with a ChatGPT business subscription is the one path with zero incremental cost, with the obvious caveat that you are already paying for the higher ChatGPT tier, and the rate limit is the thing to monitor.
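For a sense of scale, the flat-rate tiers can be set against the roughly $1,500/mo Opus bill cited earlier. This is a rough sketch: the prices are the ones in the table, the option of keeping a $20/mo Claude Pro subscription for planning follows the article's advice, and it assumes the whole workload actually fits inside each plan's rate limits, which the source does not guarantee.

```python
BASELINE_USD_PER_MONTH = 1_500   # the OP's reported Opus API bill
CLAUDE_PRO_USD_PER_MONTH = 20    # planner subscription from the table

# Flat monthly prices from the table above.
PLANS = {
    "Z.ai GLM 5 Turbo": 18,
    "Ollama-cloud GLM 5.1": 20,
    "MiniMax highspeed": 40,
}


def monthly_saving(plan_price: float, keep_claude_pro: bool = True) -> float:
    """Saving versus the baseline, optionally keeping Claude Pro as planner."""
    planner = CLAUDE_PRO_USD_PER_MONTH if keep_claude_pro else 0
    return BASELINE_USD_PER_MONTH - (plan_price + planner)


if __name__ == "__main__":
    for name, price in PLANS.items():
        total = price + CLAUDE_PRO_USD_PER_MONTH
        print(f"{name}: ${total}/mo with planner, "
              f"saves ${monthly_saving(price):,.0f}/mo")
```

The gap between tiers is small next to the gap from the baseline, so the choice among them is mostly about rate limits and hosting, not price.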

How OpenClaw’s CLI-Backend System Actually Swaps Providers

Reading the docs is the only way to make any of this concrete. The official CLI backends doc ships this default config:

    model: {
      primary: "anthropic/claude-opus-4-6",
      fallbacks: ["codex-cli/gpt-5.5"],
    },
    models: {
      "anthropic/claude-opus-4-6": { alias: "Opus" },
      "codex-cli/gpt-5.5": {},
    },

Out of the box, OpenClaw routes between Anthropic and the Codex CLI. Everything else (Z.ai GLM, MiniMax, Ollama-cloud) plugs in through the CLI backend plugin system. The login pattern is identical across backends:

    openclaw models auth login --provider anthropic --method cli --set-default

Substitute --provider codex or --provider z-ai to switch routing. That is the entire mechanical change for swapping backends; the rest is a config edit on agents.defaults.cliBackends to declare which agents prefer which backend.
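If you script the login step across several backends, a small helper can build the command line without retyping it. This sketch uses only the subcommand and flags quoted above. The provider names are the ones the article mentions, and your plugin may declare a different one. The helper only builds and prints the command; it does not run it.

```python
import shlex


def login_argv(provider: str) -> list[str]:
    """Build the login command quoted in the article for one provider."""
    return ["openclaw", "models", "auth", "login",
            "--provider", provider, "--method", "cli", "--set-default"]


if __name__ == "__main__":
    # Provider names as mentioned in the article; adjust to your plugin.
    for provider in ("anthropic", "codex", "z-ai"):
        print(shlex.join(login_argv(provider)))
```

Keeping the command as an argument list rather than a string means it can be passed to `subprocess.run` later without shell quoting issues.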

There is one gotcha that the docs flag and most blog posts skip. The bundled claude-cli backend is the only one that maps OpenClaw’s /think levels to Claude Code’s native --effort flag automatically. The docs put it like this:

The bundled Anthropic claude-cli backend also maps OpenClaw /think levels to Claude Code’s native --effort flag for non-off levels. minimal and low map to low, adaptive and medium map to medium, and high, xhigh, and max map directly. Other CLI backends need their owning plugin to declare an equivalent argv mapper before /think can affect the spawned CLI.

In practice, that means: if you flip the primary backend to GLM 5 Turbo and your skills assume /think high means “spend more compute on the next call,” that flag silently becomes a no-op until the Z.ai plugin author wires up the equivalent argv mapper. So GLM only replaces Opus for skills that don’t depend on think-level escalation. Your planner agent stays on Anthropic.
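The mapping in the docs quote can be written out as a lookup table, which makes the no-op case easy to see. This is a model of the documented behavior, not OpenClaw's own code. The function name and the "z-ai" backend identifier are hypothetical.

```python
# /think level -> Claude Code --effort value, as quoted from the docs.
THINK_TO_EFFORT = {
    "minimal": "low",
    "low": "low",
    "adaptive": "medium",
    "medium": "medium",
    "high": "high",
    "xhigh": "xhigh",
    "max": "max",
}


def effort_args(backend: str, think_level: str) -> list[str]:
    """Extra argv a backend would receive for a given /think level.

    Only the bundled claude-cli backend has a mapper. Any other backend
    gets no extra arguments here, which models the silent no-op.
    """
    if backend != "claude-cli" or think_level == "off":
        return []
    return ["--effort", THINK_TO_EFFORT[think_level]]


if __name__ == "__main__":
    print(effort_args("claude-cli", "minimal"))
    print(effort_args("z-ai", "high"))  # hypothetical backend name
```

A skill that relies on /think high therefore needs an explicit check of which backend it will land on before the swap.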

The Privacy Decision Tree (China-Hosted vs US-Hosted)

This is the trade-off most cost comparisons dodge. GLM, MiniMax, and Kimi are all hosted on Chinese infrastructure. The cheap inference is genuinely cheap, but the OpenClaw skills graph in many setups now includes iMessage, contacts, SMS, and email send. The framing repeated across the money-pit thread is bluntly that anything sent to a China-hosted endpoint should be treated as eventually-public, since US privacy laws and US legal recourse don’t apply. Commenters in the same thread describe their own installs: emails plus contacts plus SMS routed through the agent, with the realization that running those prompts through a China-hosted model effectively logs personal correspondence into a jurisdiction they cannot subpoena.

If you have already enabled personal-account skills (iMessage, SMS, contacts, email send), every prompt the agent issues against those skills carries identifiable correspondence. Routing those prompts through a China-hosted endpoint to save $180/mo is a real cost, just not the one that shows up on the credit card statement.

The decision tree that fits the community pattern looks like this. If iMessage, SMS, contacts, or email-send skills are enabled on the OpenClaw instance, stay on US-hosted backends only (Anthropic CLI, Codex business-tier). If OpenClaw is sandboxed on a disposable VPS with no personal-account access, China-hosted GLM and MiniMax are on the table. If you are running it on a personal Mac with full skill access, do not optimize for cost first. The skills you have enabled determine the backend you can afford to run.
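The same decision tree can be expressed as a small function, which is handy as a pre-flight check before changing backends. The skill identifiers and type names here are hypothetical labels, not OpenClaw's real skill IDs. Treating every non-sandboxed install as US-only is this sketch's conservative reading of "do not optimize for cost first".

```python
from dataclasses import dataclass

# Hypothetical identifiers for the personal-account skills named above.
PERSONAL_SKILLS = {"imessage", "sms", "contacts", "email-send"}


@dataclass
class Instance:
    enabled_skills: set[str]
    sandboxed_vps: bool  # disposable VPS with no personal-account access


def allowed_hosting(instance: Instance) -> set[str]:
    """Return the hosting regions the decision tree permits."""
    if instance.enabled_skills & PERSONAL_SKILLS:
        return {"US"}            # personal data in play: US-hosted only
    if instance.sandboxed_vps:
        return {"US", "China"}   # sandboxed: cheap backends are on the table
    return {"US"}                # e.g. personal Mac: privacy before cost


if __name__ == "__main__":
    mac = Instance({"imessage", "news"}, sandboxed_vps=False)
    vps = Instance({"news", "social"}, sandboxed_vps=True)
    print(sorted(allowed_hosting(mac)))
    print(sorted(allowed_hosting(vps)))
```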

Kimi 2.6 Is API-Only and That Matters

A meaningful share of “I’m running Kimi locally” claims on the sub turn out to mean “I’m paying for the Moonshot API.” The hardware floor is the reason. The community ballpark from the money-pit thread is roughly 750 GB of RAM and multiple high-end GPUs (multiple 5090-class cards) to get the model functional locally with a small context window. The canonical reference is the Kimi K2.6 deploy guidance on Hugging Face. The model card confirms a Mixture-of-Experts architecture where the total parameter count and the per-token VRAM math both push you well past consumer GPU territory. So when a thread tells you to “just run Kimi locally,” the realistic interpretation is API-routed inference on Moonshot’s servers. Skip the weekend you would otherwise spend trying to make a 750 GB rig work.
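The memory math behind that kind of floor is simple to sketch. The function below estimates weight storage only, ignoring KV cache and runtime overhead. The 1,000-billion parameter count in the example is a placeholder chosen for illustration, not a figure taken from the thread or the model card.

```python
def weights_gb(total_params_billions: float, bits_per_param: float) -> float:
    """Approximate memory for model weights alone, in GB (1e9 bytes).

    For a Mixture-of-Experts model, use the total parameter count: every
    expert has to be resident even though only some run per token.
    """
    total_bytes = total_params_billions * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # Placeholder model size (assumption, not a source figure).
    for bits in (4, 8, 16):
        print(f"{bits}-bit weights: {weights_gb(1_000, bits):,.0f} GB")
```

At that placeholder size even aggressive quantization lands in the hundreds of gigabytes, which is the order of magnitude the community ballpark describes.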

The version-pinning footnote belongs in the same section because anyone swapping backends inherits OpenClaw’s release-stability story. The community pattern across the money-pit thread is to stop auto-updating, sit on a known-good build for a month or two at a time, and let the project ship a few releases before re-evaluating. That advice exists because OpenClaw shipped two regressive releases recently, documented in the founder’s rough-week post-mortem. When you swap to a cheap backend, pin the OpenClaw version to a known-good build until the LTS branch ships.

How To Swap OpenClaw From Claude Opus to GLM 5 Turbo

To swap OpenClaw's primary backend from Claude Opus to GLM 5 Turbo:

1. Subscribe to Z.ai's $18/mo coding plan.
2. Install the Z.ai CLI backend plugin in OpenClaw.
3. Authenticate against Z.ai. Run openclaw models auth login --provider z-ai --method cli --set-default (substituting whatever provider name your plugin declares); confirm the gateway shows the new backend as available.
4. Edit agents.defaults.cliBackends in your config. … (z-ai/glm-5-turbo) and keep anthropic/claude-opus-4-6 as a fallback for sessions tagged interactive.
5. Tag interactive vs cron agents.
6. Smoke-test cost behavior for 24 hours.
7. Pin OpenClaw to a known-good release.
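The tagging and smoke-test steps can be sketched as two small helpers. The model identifiers and the interactive/cron tags come from the steps above. The function names, the `needs_think` flag, and the 30-day projection are assumptions for illustration and are not part of OpenClaw's config schema.

```python
CHEAP_BACKEND = "z-ai/glm-5-turbo"
PLANNER_BACKEND = "anthropic/claude-opus-4-6"


def pick_backend(tag: str, needs_think: bool = False) -> str:
    """Route interactive or think-dependent agents to the planner backend.

    Everything else, such as cron jobs, goes to the cheap backend.
    """
    if tag == "interactive" or needs_think:
        return PLANNER_BACKEND
    return CHEAP_BACKEND


def over_budget(spend_usd_24h: float, monthly_budget_usd: float) -> bool:
    """True if a 24-hour smoke test projects past the monthly budget."""
    return spend_usd_24h * 30 > monthly_budget_usd


if __name__ == "__main__":
    print(pick_backend("cron"))
    print(pick_backend("interactive"))
    print(over_budget(spend_usd_24h=2.0, monthly_budget_usd=40))
```

Projecting from one day is crude, but it is enough to catch a misrouted agent that is still hitting the metered API before the first monthly bill arrives.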

When NOT to Use This

- You require deterministic prompt-caching behavior, which the cheap backends do not guarantee to the degree that Anthropic’s CLI path does.

- Your OpenClaw skills depend on Claude-specific tool-calling formats; some do not translate cleanly to GLM or Codex.

- Your workflow is bound by compliance rules that exclude China-hosted inference (most enterprise data, regulated industries).

- You do not have the Codex or Anthropic CLI binaries available on the host; this recipe assumes a working multi-CLI setup.

- You only run light interactive workloads: your $20 Claude Pro subscription on the sanctioned claude-cli backend already covers them at no extra cost.

FAQ

Will my OpenClaw skills work on GLM 5.1 the same as on Claude Opus?

Is the China-hosted privacy concern real?

What's the cheapest plan that handles cron jobs without rate-limit anxiety?

Can I run Kimi 2.6 locally?

Should I pin OpenClaw to a specific version after I switch backends?

Botmonster Tech
