Qwen 2.5 Coder 7B is the most accurate of the three for Python and TypeScript completions. Phi-4 Mini (3.8B) uses the least VRAM and generates tokens nearly twice as fast, making it the right pick when memory headroom or latency matters more than raw accuracy. Gemma 3 4B sits in the middle: it is neither the fastest nor the most accurate at code, but it is the most capable when you need one model for coding, commit messages, documentation, and error explanations. Below are the actual benchmark numbers, the full test methodology, and how to configure each model in VS Code or Neovim.

Qwen3.6-35B-A3B: Alibaba's Open-Weight Coding MoE

Qwen3.6-35B-A3B is Alibaba Cloud’s Apache 2.0 sparse Mixture-of-Experts model released April 14, 2026. It carries 35 billion total parameters but activates only about 3 billion per token, and on agentic coding suites it beats Gemma 4-31B and matches Claude Sonnet 4.5 on most vision tasks. A 20.9 GB Q4 quantization runs on a MacBook Pro M5, which is the reason this release has taken over half the AI timeline for the past week.
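The relationship between the parameter counts and the download size can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function names are hypothetical, it assumes decimal gigabytes (1 GB = 10^9 bytes), and it ignores any non-weight data in the file. The point it shows is that memory has to hold all 35 billion parameters, while each token only computes with roughly 3 billion of them.

```python
def implied_bits_per_weight(file_size_gb: float, total_params_billion: float) -> float:
    """Average bits stored per parameter for a quantized file.

    Assumes decimal gigabytes (1 GB = 1e9 bytes) and that the whole
    file is weights, which is a simplification.
    """
    total_bits = file_size_gb * 1e9 * 8
    total_params = total_params_billion * 1e9
    return total_bits / total_params


def weight_size_gb(total_params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in decimal GB at a given average bit width."""
    total_bytes = total_params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Figures quoted in the teaser: 35B total, ~3B active, 20.9 GB Q4 file.
bpw = implied_bits_per_weight(20.9, 35)
active_fraction = 3 / 35

print(f"implied average bits per weight: {bpw:.2f}")
print(f"size at exactly 4 bits per weight: {weight_size_gb(35, 4.0):.1f} GB")
print(f"share of parameters active per token: {active_fraction:.1%}")
```

Under these assumptions the quoted file works out to a little under 5 bits per weight on average, so a "Q4" label does not mean exactly 4 bits for every parameter.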

Gemma 4 vs Qwen 3.5 vs Llama 4: Which Open Model Should You Actually Use? (2026)

For most developers in 2026, Gemma 4 31B is the best all-around open model. It ranks #3 on the LMArena leaderboard, scores 85.2% on MMLU Pro, and ships under Apache 2.0 with zero usage restrictions. Qwen 3.5 27B edges it on coding benchmarks, scoring 72.4% on SWE-bench Verified, while Gemma 4 is stronger at math reasoning. Qwen's Omni variant also offers real-time speech output that no other open model matches. Llama 4 Maverick (400B MoE) wins on raw scale but requires datacenter hardware and carries Meta’s restrictive 700M MAU license. Pick Gemma 4 for the best quality-to-size ratio under a true open-source license, Qwen 3.5 for coding-heavy workflows, and Llama 4 only when you need the largest available open model and can absorb the legal overhead.

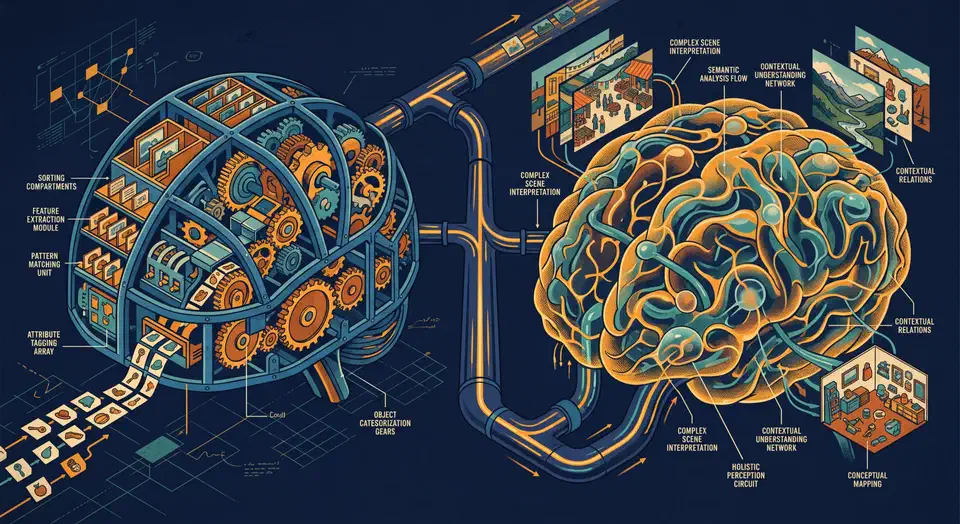

Run Vision Models Locally: Florence-2 and Qwen-VL for Image Analysis

Florence-2 and Qwen2-VL both run on consumer NVIDIA GPUs starting at 8 GB VRAM and handle OCR, object detection, image captioning, and visual question answering entirely offline. Florence-2 uses a compact sequence-to-sequence architecture with task-specific prompt tokens, which makes it fast and reliable for structured extraction work. Qwen2-VL takes a conversational approach and handles open-ended reasoning, complex documents, and follow-up questions, which makes the two models complementary rather than interchangeable.

Botmonster Tech
