Running CI/CD through GitHub Actions or GitLab CI is handy until it isn’t. Free-tier minute limits run out fast. Private repos cost more than you’d expect. And if your code is sensitive, you’re sending every push through someone else’s servers. Self-hosting your pipeline sidesteps all of that.

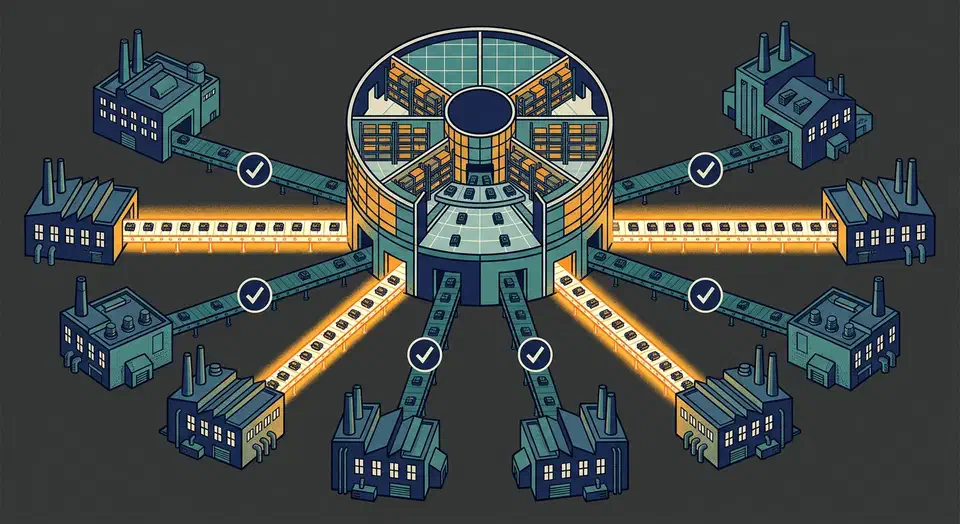

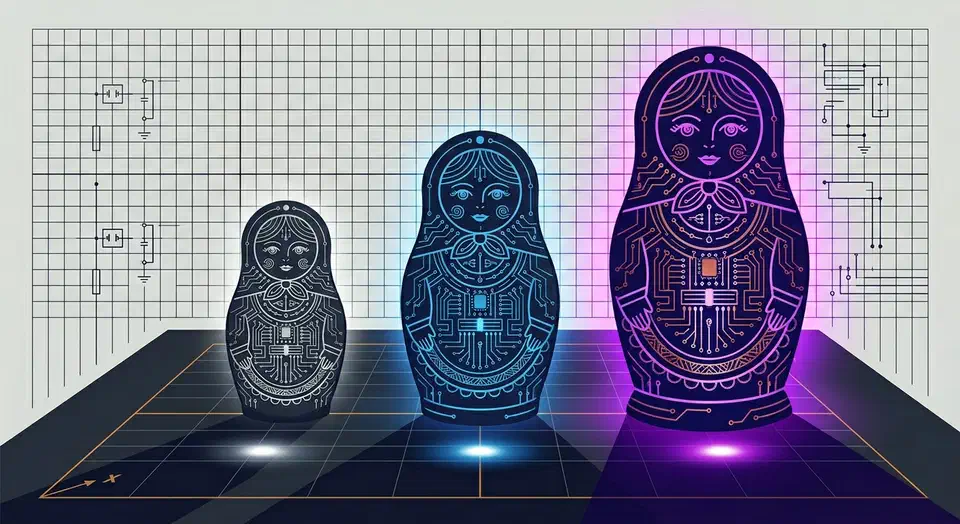

Gitea is a lightweight, self-hosted Git service. It supports GitHub Actions-compatible workflows through a component called `act_runner`. The workflow YAML syntax is nearly identical to GitHub Actions, so teams who already know that ecosystem can move over with little friction. This guide walks through a complete, production-ready CI/CD stack on Linux using Docker Compose.
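To give a feel for how close the syntax is, here is a minimal workflow sketch. It is illustrative, not part of the stack built in this guide. The file name, the `main` branch, the `ubuntu-latest` runner label, and the `make test` command are all assumptions, so swap in whatever your repository and runner actually use. Gitea reads workflow files from `.gitea/workflows/` in the repository.

```yaml
# .gitea/workflows/ci.yml (hypothetical file name)
name: ci

on:
  push:
    branches: [main]   # assumed default branch

jobs:
  test:
    # The label must match one your act_runner is registered with;
    # ubuntu-latest is an assumption here.
    runs-on: ubuntu-latest
    steps:
      # Check out the repository, as in a GitHub Actions workflow.
      - uses: actions/checkout@v4
      # Placeholder command; replace with your project's test entry point.
      - name: Run tests
        run: make test
```

If this looks like a file you would drop into `.github/workflows/`, that is the point: in many cases the main change when moving over is the directory the file lives in.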