SQLite can now run at the edge. It works inside Cloudflare Workers via D1, on Fly.io via LiteFS replicated volumes, and in any V8 isolate through embedded WASM builds. Placing your database close to your users on a global CDN gives you sub-millisecond read queries. A few tools made this practical: LiteFS for transparent SQLite replication, Cloudflare D1 as a managed edge service, Turso for libSQL with server mode and replication, and Litestream for streaming the WAL to S3. SQLite ships as a single file with zero dependencies, so you get a relational database that deploys with your app binary, needs no connection pooling, and handles thousands of reads per second per node.
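
To make the "single file, no connection pooling" point concrete, here is a minimal sketch using Python's standard sqlite3 module. The file location, table name, and row contents are made up for illustration, and nothing here is specific to D1, LiteFS, Turso, or Litestream; it only shows the in-process model those tools build on.

```python
import sqlite3
import tempfile
from pathlib import Path

# Hypothetical database file; in a real deployment this would sit on the
# node's local disk next to the app binary.
db_path = str(Path(tempfile.mkdtemp()) / "app.db")

# The database is opened in-process: no server, no pool, just a file handle.
conn = sqlite3.connect(db_path)

# WAL mode lets readers proceed while a writer is active; it is also the
# log that WAL-streaming tools replicate.
journal_mode = conn.execute("PRAGMA journal_mode=WAL").fetchone()[0]

conn.execute("CREATE TABLE IF NOT EXISTS posts (id INTEGER PRIMARY KEY, title TEXT NOT NULL)")
conn.execute("INSERT INTO posts (title) VALUES (?)", ("hello edge",))
conn.commit()

# A read is a local function call against the file, not a network round trip.
row = conn.execute("SELECT title FROM posts WHERE id = ?", (1,)).fetchone()
print(journal_mode, row)
```

Replication is a separate concern layered on top of this file, which is why the tools listed above can stay out of the application code.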

Why AI is Killing the Internet: Model Collapse and the Knowledge Commons

The open web ran on a fragile premise: that people would share what they know, for free, in public. For about two decades that premise held. Developers posted answers on Stack Overflow. Students argued on Reddit. Journalists broke stories that Google indexed. The result was a vast, searchable knowledge commons. AI did not just consume that commons. It’s now wrecking the conditions that built it.

This isn’t a wild claim or a Luddite gripe. It’s an economic collapse, on the record, playing out in real time, with hard knock-on effects for AI model quality. The story is worth knowing whether you write code, publish content, do research, or just use the web to learn.

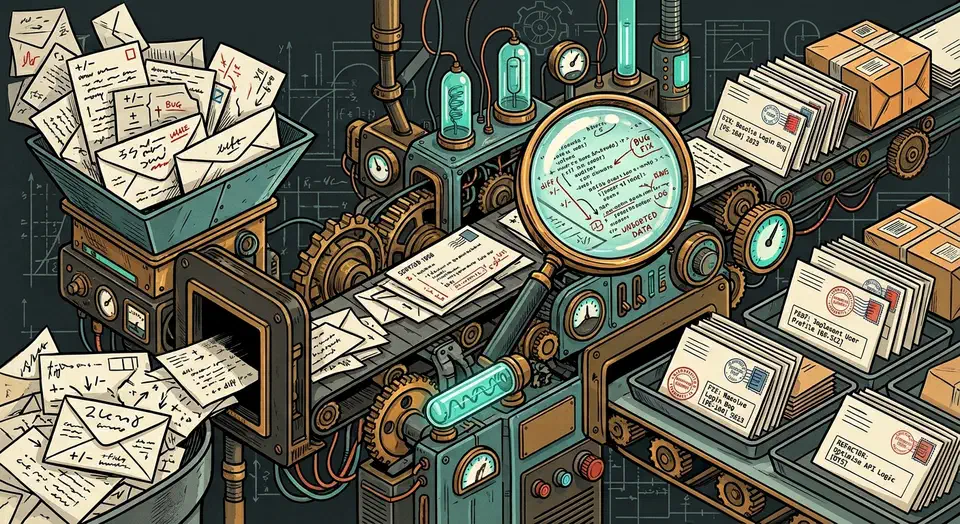

Generate Conventional Commits Locally with Ollama and Git Hooks

You can wire a local LLM into your Git workflow to write conventional commit messages from staged diffs. The trick is a prepare-commit-msg Git hook. The hook runs git diff --cached and sends the output to Ollama, which runs a model like Llama 4 Scout or Qwen3 on a consumer GPU. The hook then writes the generated message into the commit file for you to review. The whole setup is about 30 lines of shell or Python. It costs nothing to run, keeps your code local, and follows the Conventional Commits format. That beats the “fix stuff” messages most of us write when we just want to move on.
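
The hook described above could look roughly like the following Python sketch, saved as .git/hooks/prepare-commit-msg and made executable. The endpoint is Ollama's default local /api/generate address; the model tag, the prompt wording, and the diff size cap are assumptions chosen for illustration, not a fixed recipe.

```python
#!/usr/bin/env python3
"""prepare-commit-msg sketch: draft a Conventional Commits message locally."""
import json
import subprocess
import sys
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL = "qwen3"          # assumption: use whatever model you have pulled
MAX_DIFF_CHARS = 8000    # assumption: cap the prompt so large diffs stay manageable


def build_prompt(diff: str) -> str:
    """Wrap the staged diff in a short instruction for the model."""
    return (
        "Write one Conventional Commits message (type(scope): summary) "
        "for this staged diff. Reply with the message only.\n\n"
        + diff[:MAX_DIFF_CHARS]
    )


def ask_ollama(prompt: str) -> str:
    """Send a non-streaming generate request and return the model's text."""
    body = json.dumps({"model": MODEL, "prompt": prompt, "stream": False}).encode()
    req = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=60) as resp:
        return json.loads(resp.read())["response"].strip()


def main(argv: list[str]) -> int:
    # Git passes the message file path, plus a source argument when the
    # message already comes from -m, a merge, a template, and so on.
    if len(argv) != 2:
        return 0
    try:
        diff = subprocess.run(
            ["git", "diff", "--cached"], capture_output=True, text=True
        ).stdout
        if not diff.strip():
            return 0
        message = ask_ollama(build_prompt(diff))
    except (OSError, KeyError, ValueError):
        return 0  # never block a commit because the model is unavailable
    path = argv[1]
    with open(path, encoding="utf-8") as f:
        existing = f.read()
    # Put the draft first; Git's commented template stays below it.
    with open(path, "w", encoding="utf-8") as f:
        f.write(message + "\n" + existing)
    return 0


if __name__ == "__main__":
    sys.exit(main(sys.argv))
```

The hook exits with status 0 on every failure path, so a stopped Ollama server or a missing model leaves you with the normal empty commit editor rather than a blocked commit.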

Intel Arc 140V on Linux: The Best GPU Control Panel Apps and Driver Setup

Got a Lunar Lake laptop and went looking for Intel’s Arc Control app on Linux? It doesn’t exist. Intel only ships Arc Control for Windows. Linux users get a community tool instead: LACT, the Linux GPU Configuration and Monitoring Tool. It covers temperature, power limits, clock speeds, and voltage through a proper GUI. For live performance data, intel_gpu_top and nvtop handle the rest from the terminal.

Below: driver setup, LACT installation, CLI monitoring tools, power tuning, and the most common things that go wrong on a fresh install.

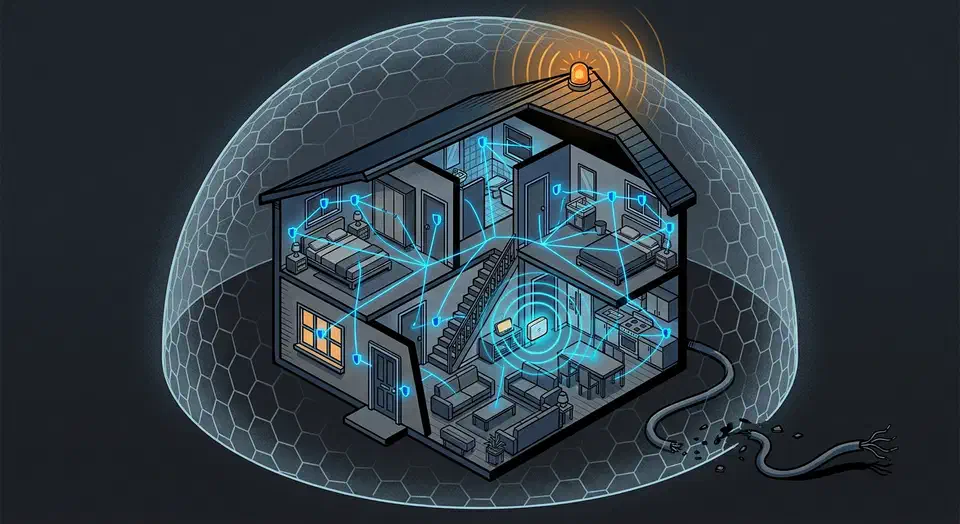

Local Z-Wave Alarm: $250 Setup, No Monthly Fee

You can build a fully local, cloud-free home alarm system with Z-Wave door and window sensors, motion detectors, and a siren wired to Home Assistant through a Z-Wave JS controller. The built-in alarm_control_panel integration plus a few automations handle arming, disarming, entry delays, and the siren. It all runs on your local network. No cloud subscription, no monthly fee, and the alarm keeps working even when your internet goes down.

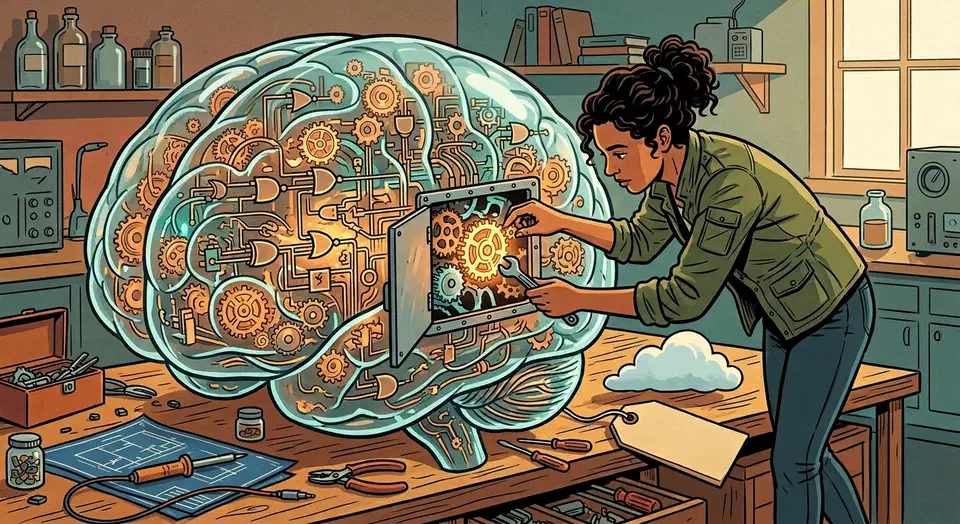

Run DeepSeek R1 Locally: Reasoning Models on Consumer Hardware

You can run DeepSeek R1’s distilled reasoning models on an RTX 5080 with 16 GB of VRAM. Use Ollama or llama.cpp with 4-bit quantization. The 14B distilled variant (Q4_K_M) fits in about 10 GB of VRAM. It shows visible <think> reasoning traces that rival cloud quality on math, coding, and logic. The full 671B model needs multi-GPU rigs, but the distilled models give you 80-90% of the quality for far less hardware.
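
Since the reasoning trace arrives inline with the answer, you will often want to separate the two before showing or logging the result. Here is a small sketch that assumes the model wraps its reasoning in a single <think>...</think> block; the function name and the sample text are illustrative only.

```python
import re

# Non-greedy match across newlines for one <think>...</think> block.
THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(text: str) -> tuple[str, str]:
    """Return (reasoning, answer); reasoning is empty if no trace is present."""
    match = THINK_RE.search(text)
    if match is None:
        return "", text.strip()
    reasoning = match.group(1).strip()
    # The answer is everything outside the think block.
    answer = (text[: match.start()] + text[match.end():]).strip()
    return reasoning, answer


sample = "<think>\n2 + 2 is 4, times 3 is 12.\n</think>\n\nThe answer is 12."
reasoning, answer = split_reasoning(sample)
print(reasoning)
print(answer)
```

Keeping the trace around is useful for debugging a wrong answer, while the stripped answer is what you would normally pass downstream.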