A fanless home server under $300 is real in 2026. With an Intel N150 or N305 mini PC such as the Beelink EQ12 Pro or GMK NucBox G3, you get a passively cooled machine that draws 6-15W under load, makes zero noise, and handles a full stack of self-hosted services: Home Assistant, Jellyfin, Vaultwarden, Nextcloud, Immich, and a WireGuard VPN, all running simultaneously without a single fan spinning.

Podman vs Docker on Linux: Which Container Runtime Should You Use?

For most Linux users in 2026, Podman is the better default choice. Its daemonless, rootless architecture eliminates the security surface area that comes with Docker’s persistent root-level daemon, and its native systemd integration means containers behave like any other service on a modern Linux box. That said, Docker remains the safer pick if your workflow leans heavily on Docker Compose v2 plugins, Docker Desktop’s GUI and extension ecosystem, or third-party tooling that still assumes the Docker socket API.

Self-Host Plausible Analytics: 1 KB Script, No Cookies

You can deploy a fully self-hosted Plausible Analytics instance on a $6/month VPS using Docker Compose and a Caddy reverse proxy for automatic HTTPS. The whole process takes under 30 minutes. Once running, you add a single <script> tag to your site and you are done: no cookie banners needed, no personal data collected. Plausible’s tracking script weighs under 1 KB gzipped, stores everything in a ClickHouse database on your own server, and gives you a clean, fast dashboard that shows exactly what you need to know about your traffic.

Private Package Registries: PyPI, npm, Supply Chain Control

You can self-host a private PyPI registry with pypiserver and a private npm registry with Verdaccio. Both run on a single box or inside Docker containers. You get three wins that public registries cannot match: faster installs from a LAN cache, a safe home for private packages, and cover against outages, typosquatting, and supply chain attacks. Both tools are free, open-source, and take under 30 minutes to set up.

Testcontainers: PostgreSQL, Redis, Kafka Testing

Testcontainers spins up real databases and services as Docker containers inside your test suite. Tests run against production-grade PostgreSQL, Redis, or Kafka instead of flaky mocks. The testcontainers-python v4.14.2 library works with pytest and automates the container lifecycle. You get isolated, reproducible integration tests that catch bugs unit tests miss.

Below: setup with pytest, testing services beyond databases, performance patterns, and CI/CD configuration.

Why Mocks and In-Memory Databases Are Not Enough

Mocking db.execute() only checks if your code calls the function. It does not check if the SQL is valid. It also misses schema errors and type mismatches. You might have passing tests while your queries fail in production.
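A minimal sketch of that gap, using only the Python standard library. The function names are hypothetical, and `sqlite3` is used here purely as a stand-in engine so the example runs without Docker; it is not a substitute for testing against the database you actually deploy. The query contains a deliberate typo, which the mock never notices and the engine rejects.

```python
import sqlite3
from unittest.mock import MagicMock


def count_active_users(db):
    # Deliberate typo: "SELEC" instead of "SELECT".
    cursor = db.execute("SELEC COUNT(*) FROM users WHERE active = 1")
    return cursor.fetchone()[0]


def run_against_mock():
    # The mock accepts any string and returns whatever we configured.
    db = MagicMock()
    db.execute.return_value.fetchone.return_value = (3,)
    result = count_active_users(db)
    # This only proves execute() was called, not that the SQL is valid.
    db.execute.assert_called_once()
    return result


def run_against_real_engine():
    # A real engine parses the statement and rejects the typo.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, active INTEGER)")
    try:
        count_active_users(conn)
    except sqlite3.OperationalError as exc:
        return f"rejected: {exc}"
    finally:
        conn.close()
    return "accepted"


if __name__ == "__main__":
    print("mock result:", run_against_mock())
    print("engine result:", run_against_real_engine())
```

The mocked run reports success even though the statement could never execute, which is the failure mode described above.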

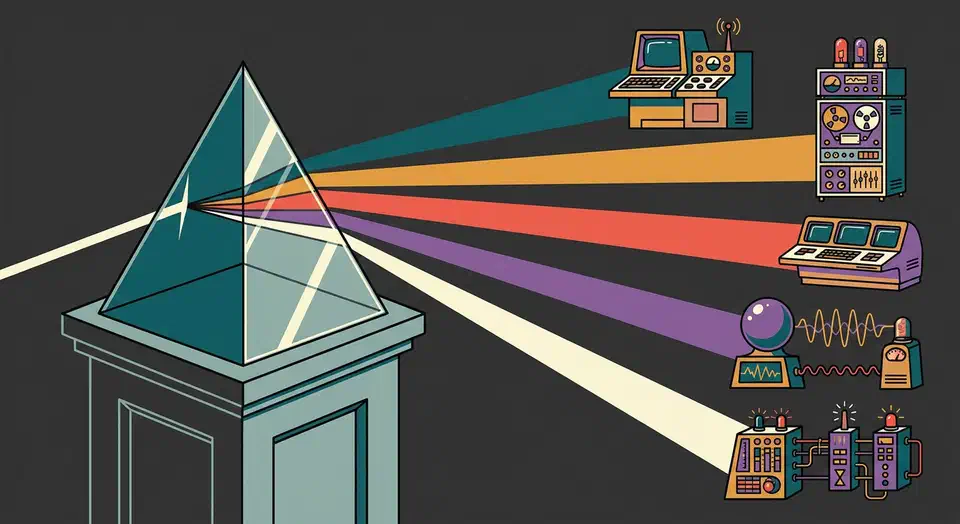

Route Ollama, vLLM, OpenAI through one LiteLLM API

You can unify access to Ollama, vLLM, cloud providers like OpenAI, Anthropic, and Google, plus custom model servers behind one OpenAI-compatible endpoint using LiteLLM Proxy. LiteLLM is a reverse proxy. It maps the standard /v1/chat/completions request to each provider’s native API. From one YAML file it handles auth, model routing, load balancing, fallbacks, rate limits, and spend tracking. Your app calls one endpoint with one key, and LiteLLM picks the right backend. You can swap models, add providers, or run A/B tests without touching app code.
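The "one endpoint, one key" idea can be sketched from the client side with the standard library alone. Everything specific below is an assumption for illustration: the proxy address `http://localhost:4000`, the placeholder key, and the model aliases `local-llama` and `cloud-gpt`, which would have to match names defined in your own proxy configuration. The sketch only builds the requests; it does not send them.

```python
import json
from urllib.request import Request

# Assumptions for illustration: adjust to your own proxy address and key.
PROXY_BASE_URL = "http://localhost:4000"
PROXY_API_KEY = "sk-example-key"  # placeholder, not a real key


def build_chat_request(model, prompt):
    """Build an OpenAI-style chat completion request aimed at the proxy."""
    body = {
        "model": model,  # the only field that changes between backends
        "messages": [{"role": "user", "content": prompt}],
    }
    return Request(
        url=f"{PROXY_BASE_URL}/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {PROXY_API_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical aliases; the proxy decides which backend each one maps to.
local_req = build_chat_request("local-llama", "Hello")
cloud_req = build_chat_request("cloud-gpt", "Hello")

if __name__ == "__main__":
    print(local_req.full_url)
    print(json.loads(cloud_req.data)["model"])
```

Passing either request to `urllib.request.urlopen` would send it to a running proxy. Both requests share the same URL and key, so switching backends is a one-string change in the app.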

Botmonster Tech
