AI writes about 41% of all committed code in 2026, and some teams report well above 50%. AI review tools have cut PR cycle times by as much as 59%. Yet when Sonar asked 1,149 developers for its 2026 State of Code report, 47% ranked “reviewing and validating AI-generated code for quality and security” as the top skill in the AI era, above prompting at 42%. The paradox: the more code AI writes, the more vital human review becomes.

Ditching Claude Opus for GLM 5.1 in OpenClaw at $18/Mo

Anthropic’s third-party tool rules priced agent users off Claude Opus 4.7. The cheapest working OpenClaw stack now is Z.ai’s $18/mo GLM 5 Turbo plan. Next rungs: Ollama-cloud’s $20/mo GLM 5.1, then MiniMax’s $40/mo highspeed tier. Kimi 2.6 stays API-only since local setup needs about 750 GB of RAM.

Key Takeaways

- Z.ai’s $18/mo plan running GLM 5 Turbo is the cheapest OpenClaw backend that actually works.

- MiniMax highspeed at $40/mo handles heavier workloads without the four-figure surprise bills.

- Kimi 2.6 needs around 750 GB of RAM to self-host, so almost everyone runs it through the API.

- Keep Claude on the planner role; route scheduled jobs to the cheap backends.

- China-hosted models trade dollars for privacy on iMessage, contacts, and email skills.
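
The planner-versus-scheduled split in the takeaways can be pictured as a small routing table. This is only a sketch: the `Job` type, the `kind` labels, and the backend names are hypothetical placeholders for illustration, not OpenClaw configuration keys.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    name: str
    kind: str  # hypothetical labels: "planner" or "scheduled"


# Hypothetical backend names; substitute whatever your own setup calls them.
BACKENDS = {
    "planner": "claude",         # keep the expensive model for planning
    "scheduled": "glm-5-turbo",  # send cron-style jobs to the cheap plan
}


def pick_backend(job: Job) -> str:
    """Return the backend for a job, defaulting to the cheap one."""
    return BACKENDS.get(job.kind, BACKENDS["scheduled"])
```

Defaulting unknown job kinds to the cheap backend is a deliberate choice here: a mislabeled job then costs little instead of landing on the expensive model.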

Why $1,500/mo Opus Bills Pushed Users to GLM

The pressure here is simple. Once Anthropic’s third-party tool rules kicked in, OpenClaw users on the Claude Pro CLI got nudged onto pay-per-token API access. At Opus 4.7 list pricing of $15 per million input tokens and $75 per million output tokens, agent loops add up fast. The OP of the r/openclaw PSA thread tracked his own bill at about $1,500/mo before he switched. That figure is the anchor most cost threads on the sub now cite. The pricing pain did not ease with the next model either: the community reception of Opus 4.7 leaned on token-burn complaints from power users hitting caps in minutes, which is exactly the pattern that turns an OpenClaw cron fleet into a four-figure surprise.
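
To see how agent loops reach that range, here is a rough back-of-the-envelope sketch using the list prices quoted above. The workload numbers (tokens per run, runs per day) are hypothetical, chosen only to illustrate the arithmetic, and the sketch ignores prompt caching and any discounts.

```python
# List prices quoted in the text, in USD per million tokens.
INPUT_USD_PER_MTOK = 15.0
OUTPUT_USD_PER_MTOK = 75.0


def monthly_cost(input_tokens_per_run: int,
                 output_tokens_per_run: int,
                 runs_per_day: int,
                 days: int = 30) -> float:
    """Estimate a monthly API bill for a fixed, repeating agent workload."""
    per_run = (input_tokens_per_run / 1_000_000) * INPUT_USD_PER_MTOK \
        + (output_tokens_per_run / 1_000_000) * OUTPUT_USD_PER_MTOK
    return per_run * runs_per_day * days


if __name__ == "__main__":
    # Hypothetical workload: 50k input and 4k output tokens per run,
    # one run every 30 minutes (48 runs per day).
    print(f"${monthly_cost(50_000, 4_000, 48):,.2f} per month")
```

Under those assumed numbers each run costs about $1.05, which works out to roughly $1,512 over a 30-day month, in the same range as the bill cited in the thread.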

OpenClaw vs Hermes and Why Memory Kills Agent Loyalty

Hermes Agent, built by Nous Research, has taken about 30% of OpenClaw’s user base by fixing one failure: memory. The Kilo.ai synthesis of 1,300+ r/openclaw comments confirms the figure. OpenClaw still wins on multi-agent breadth and 100+ skills. The right answer depends on which failure mode hurts you more.

Key Takeaways

- About 30% of r/openclaw users have switched to Hermes Agent, mainly for memory reliability.

- Memory failures, not features, are the top reason people leave OpenClaw.

- Hermes ships with memory that works by default; OpenClaw needs heavy prompt-engineering to behave.

- OpenClaw still wins for multi-bot setups across Telegram, Slack, and Discord.

- A growing minority skip both and use OpenAI Codex business-tier instead.

Why r/openclaw Is Migrating to Hermes

The most-cited migration thread on the subreddit is the 167-comment OpenClaw vs Hermes thread. The top-voted answer to “is Hermes worth a look” reads as a clean defection notice. The poster ran OpenClaw for weeks on the same workload, then switched in an afternoon:

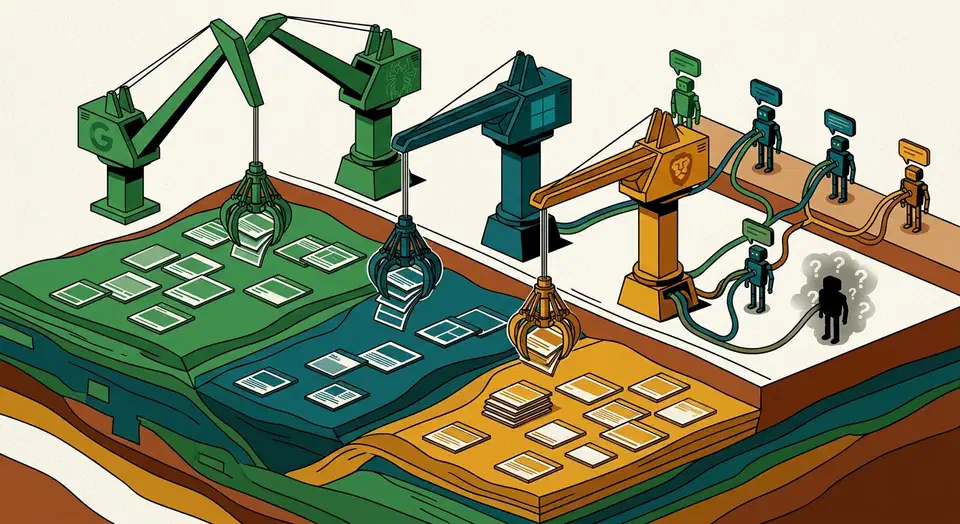

AI Web Search Backends: Who Owns, Who Rents

Only Google Gemini and Microsoft Copilot run on a search index their parent company crawls itself. Anthropic Claude rents Brave Search, Mistral Le Chat rents Brave too, OpenAI ChatGPT rents Bing plus its own crawler, and Meta AI rents both. The key clue: Claude’s web_search tool exposes a literal BraveSearchParams field, and citation overlap with Brave runs about 86.7%.
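
A citation-overlap figure like the one above can be computed in several ways, and the text does not say which method produced it. The sketch below assumes one plausible definition: the share of an assistant's cited URLs that also appear in the search backend's results for the same query. The function names and the normalization rules are assumptions for illustration.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Reduce a URL to host + path so trivial variants compare equal."""
    parts = urlsplit(url.strip())
    host = parts.netloc.lower().removeprefix("www.")
    path = parts.path.rstrip("/")
    return f"{host}{path}"


def citation_overlap(cited_urls, backend_urls) -> float:
    """Fraction of cited URLs that also appear in the backend's results."""
    cited = {normalize(u) for u in cited_urls}
    backend = {normalize(u) for u in backend_urls}
    if not cited:
        return 0.0
    return len(cited & backend) / len(cited)
```

Dividing by the number of cited URLs (rather than the union of both sets) answers the question "how much of what the assistant cites could have come from this backend", which is the question the backend comparison is asking.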

Key Takeaways

- Only Google and Microsoft own a web-scale search index.

- Claude and Mistral both reportedly run on the Brave Search API.

- ChatGPT uses Bing, OpenAI’s own crawler, and publisher deals.

- IndexNow helps Bing-backed AI products, not Brave or Google.

- Brave now acts as AI’s third search pole beside Google and Bing.

Only Five Companies Actually Crawl the Open Web

Before mapping each AI lab to its backend, the key constraint is simple: only five operators crawl the open web at scale. Everything else sold as a “search engine” resells one of those indexes. The five are Google, Microsoft Bing, Yandex, Baidu, and Brave Search, with Mojeek as a much smaller niche sixth.

Claude Code vs COBOL: The AI Migration Controversy That Crashed IBM's Stock 13%

On February 23, 2026, Anthropic published a blog post titled “How AI Helps Break the Cost Barrier to COBOL Modernization”. It shipped with a Code Modernization Playbook. By market close, IBM’s stock had fallen 13.2% to $223.35 per share. That was IBM’s worst single day since October 2000. More than $31 billion in market cap vanished. Accenture fell 6.5%. Cognizant dropped 6%. One blog post had shaken the whole legacy migration sector.

OpenClaw on Your $20 Claude Sub After Anthropic Banned It

OpenClaw’s bundled claude-cli backend is officially sanctioned by Anthropic. OAuth-token extraction tools stay blocked. The carve-out works because shelling out to claude -p preserves prompt caching, so a $20 Pro or $200 Max sub routes through OpenClaw without four-figure API bills. The catch used to be a 5-hour usage cap. From June 15, 2026, that claude -p traffic moves onto a separate monthly Agent SDK credit, so the real limit is now a modest dollar budget.
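
For readers unfamiliar with what "shelling out to claude -p" means, here is a minimal sketch of a wrapper that runs the CLI in non-interactive print mode and captures its output. It assumes the claude CLI is installed, logged in, and on PATH; the helper names are hypothetical, and this is not a description of how OpenClaw's own backend is implemented.

```python
import subprocess


def build_command(prompt: str) -> list[str]:
    """Build the argv for a one-shot, non-interactive claude run."""
    return ["claude", "-p", prompt]


def run_prompt(prompt: str, timeout_s: float = 120.0) -> str:
    """Run the CLI once and return whatever it printed to stdout.

    Raises subprocess.CalledProcessError on a non-zero exit and
    subprocess.TimeoutExpired if the call runs past timeout_s.
    """
    result = subprocess.run(
        build_command(prompt),
        capture_output=True,
        text=True,
        timeout=timeout_s,
        check=True,
    )
    return result.stdout
```

Passing the prompt as a separate argv element, rather than building a shell string, avoids quoting problems when the prompt contains spaces or special characters.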

Botmonster Tech
