After publishing a 7-minute OpenClaw deploy video and watching roughly 1,000 isolated VMs spin up afterward, one r/LocalLLaMA cloud-infra operator concluded the only OpenClaw workflow that survives unsupervised execution is a daily news digest. Memory is the load-bearing failure mode, not a fixable bug. OpenClaw sits at 370K+ GitHub stars, but the working-workflow count has barely moved.

Key Takeaways

- A cloud-infra operator watched roughly 1,000 OpenClaw deploys and found one reliable use case.

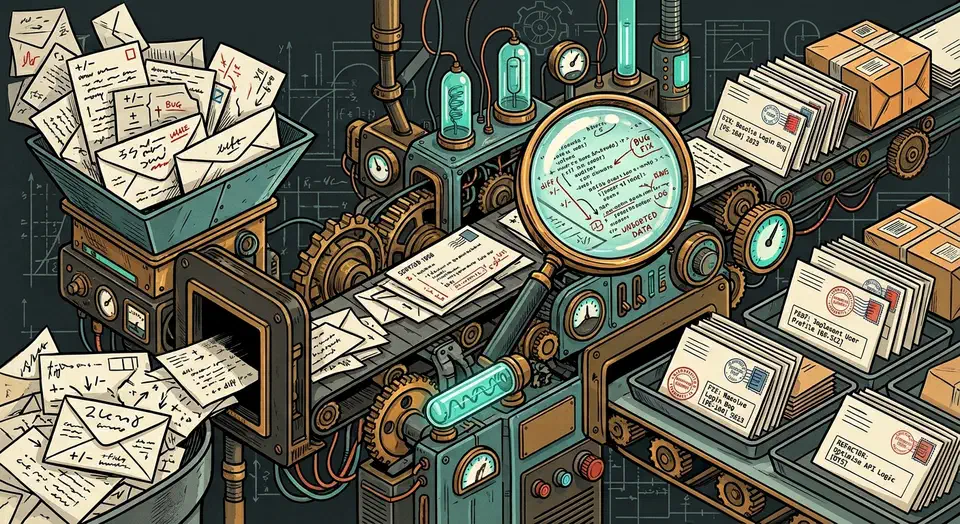

- Memory unreliability is built into how the agent works, not a bug a patch can fix.

- Daily news digests are the exception because they keep no state between runs.

- The same digest can be built with a cron job and any LLM API in about ten lines.

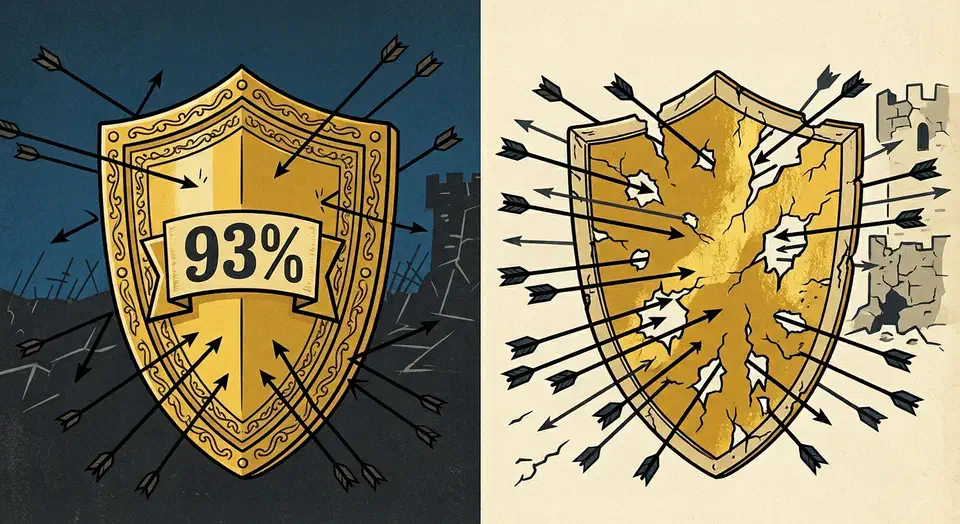

- OpenClaw’s founder admitted that recent releases were a “rough week.”
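To make the cron-plus-LLM takeaway concrete, here is a minimal sketch of a stateless digest. It is not the OP's script or anything shipped with OpenClaw. The names `build_digest_prompt`, `run_digest`, `fetch_headlines`, and `complete` are hypothetical, and `complete` stands in for whichever LLM API you call. The point it illustrates is that each run takes fresh input and writes nothing that the next run depends on.

```python
from datetime import date
from typing import Callable, Iterable, List, Optional


def build_digest_prompt(headlines: Iterable[str], day: date) -> str:
    """Turn raw headlines into a single prompt, skipping blank entries."""
    items = "\n".join(f"- {h.strip()}" for h in headlines if h.strip())
    return f"Summarize these headlines for {day.isoformat()} in five bullets:\n{items}"


def run_digest(
    fetch_headlines: Callable[[], List[str]],  # hypothetical: e.g. reads an RSS feed
    complete: Callable[[str], str],            # hypothetical: wraps any LLM API call
    day: Optional[date] = None,
) -> str:
    """One stateless run: fetch, prompt, return. Nothing is saved between runs."""
    day = day or date.today()
    return complete(build_digest_prompt(fetch_headlines(), day))


# Example crontab entry (assumed script name and schedule), run daily at 07:00:
#   0 7 * * * python3 digest.py
```

Passing the fetcher and the model call in as plain functions keeps the sketch independent of any particular provider, and it means a failed run can simply be retried the next day with no state to repair.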

The 1,000-Deploy Post That Broke the Consensus

The contrarian thesis is anchored to one specific source: an r/LocalLLaMA post titled “OpenClaw has 250K GitHub stars. The only reliable use case I’ve found is daily news digests”, with 335 comments and 891 votes. The OP is not a casual skeptic. He runs cloud infrastructure where strangers spin up Linux VMs, published a deploy walkthrough that took off, and now has a dataset most reviewers do not have access to.

Botmonster Tech
