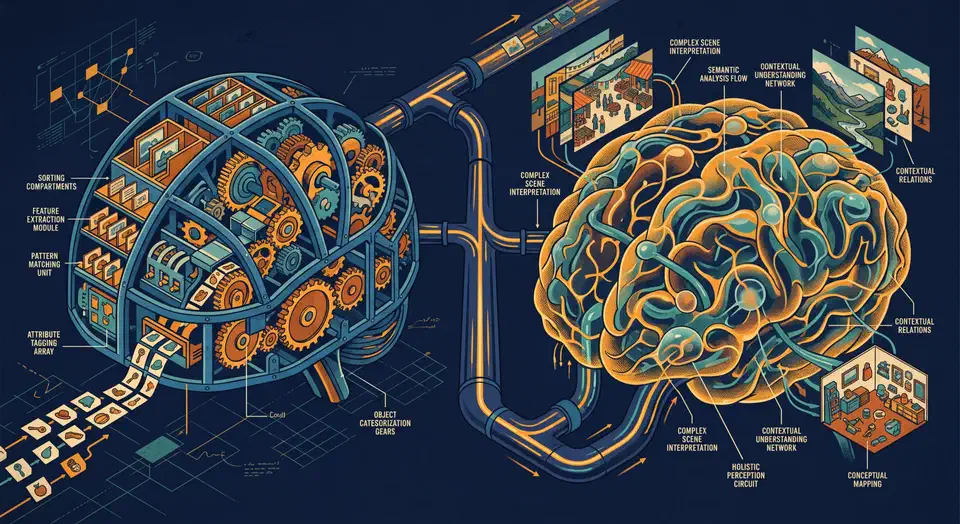

Florence-2 and Qwen2-VL both run on consumer NVIDIA GPUs starting at 8 GB VRAM and handle OCR, object detection, image captioning, and visual question answering entirely offline. Florence-2 uses a compact sequence-to-sequence architecture with task-specific prompt tokens, which makes it fast and reliable for structured extraction work. Qwen2-VL takes a conversational approach and handles open-ended reasoning, complex documents, and follow-up questions, which makes the two models complementary rather than interchangeable.

The Claude Code Source Leak: What 512,000 Lines of TypeScript Revealed About AI Agent Architecture

One missing line in a build config caused the worst source leak in AI tooling history. On March 31, 2026, Anthropic shipped version 2.1.88 of its @anthropic-ai/claude-code package with a 59.8 MB JavaScript source map inside. That map held the full client agent harness for Claude Code: 512,000 lines of readable TypeScript in 1,906 files. Mirrors of the code spread thousands of times in hours. A clean-room Python/Rust rewrite then became the fastest-growing repo in GitHub history. Anthropic’s legal response hit the wrong targets. The day got worse: a supply-chain attack hit the axios npm package, piling on for devs who rely on these tools.

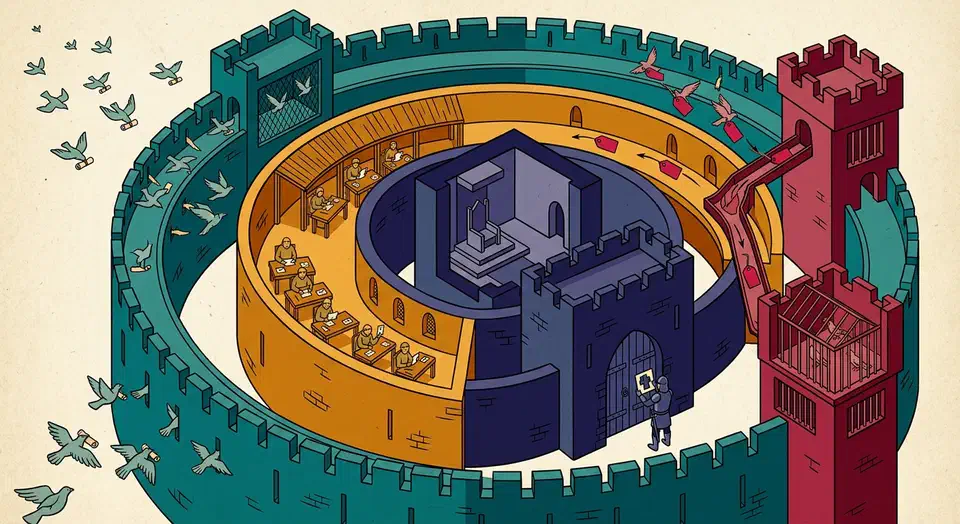

Claude Code with MCP: Local Agent for Files, SQL, APIs

Claude Code combined with custom MCP (Model Context Protocol) servers creates a local AI coding agent that can read and write files, query databases, call APIs, and execute shell commands, all orchestrated by Claude through a standardized tool-use interface. You set up the Claude Code CLI, configure MCP servers in your project or user settings, and the agent automatically discovers and uses the tools you expose. The result is a development workflow where you describe tasks in natural language and Claude executes multi-step coding operations with full access to your project context.

LLM Security: 7-Stage Defense Pipeline Against Prompt Injection

You can harden LLM apps against prompt injection and data leaks by stacking defenses. Input cleanup strips control tokens before they hit the model. Output filters scan replies for PII and secrets. Structured output forces the model to follow a fixed schema. Add a system prompt firewall that walls off trusted rules from user input. Together they turn one bare API call into a pipeline. Bad prompts get caught before the model runs. Risky data gets redacted after. No single layer is bulletproof. Stacked, they cut the attack surface enough that most threats give up.
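The stacking idea can be sketched in a few lines of Python. This is a minimal illustration, not the article's pipeline: it covers only input cleanup, the trusted/untrusted split, and output redaction. The regex patterns, the `[REDACTED_*]` placeholders, and the `model` callable are all assumptions made for the example; real control tokens and secret formats vary by model and provider.

```python
import re

# Assumed patterns for illustration only; tune them to your model and data.
CONTROL_TOKEN_RE = re.compile(r"<\|[^|>]*\|>|\[/?INST\]")
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
SECRET_RE = re.compile(r"\bsk-[A-Za-z0-9]{16,}\b")


def sanitize_input(user_text):
    """Input cleanup: strip chat-template control tokens from user text."""
    return CONTROL_TOKEN_RE.sub("", user_text)


def build_messages(system_rules, user_text):
    """System prompt firewall: trusted rules and user input stay in separate roles."""
    return [
        {"role": "system", "content": system_rules},
        {"role": "user", "content": sanitize_input(user_text)},
    ]


def redact_output(reply):
    """Output filter: mask email addresses and key-shaped strings in the reply."""
    reply = EMAIL_RE.sub("[REDACTED_EMAIL]", reply)
    return SECRET_RE.sub("[REDACTED_SECRET]", reply)


def guarded_call(model, system_rules, user_text):
    """Wrap one bare model call with the layers above.

    `model` is a hypothetical callable that takes a message list and returns text.
    """
    return redact_output(model(build_messages(system_rules, user_text)))
```

Any function that accepts a message list and returns a string can stand in for `model`, which makes each layer easy to test on its own before wiring in a real API client.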

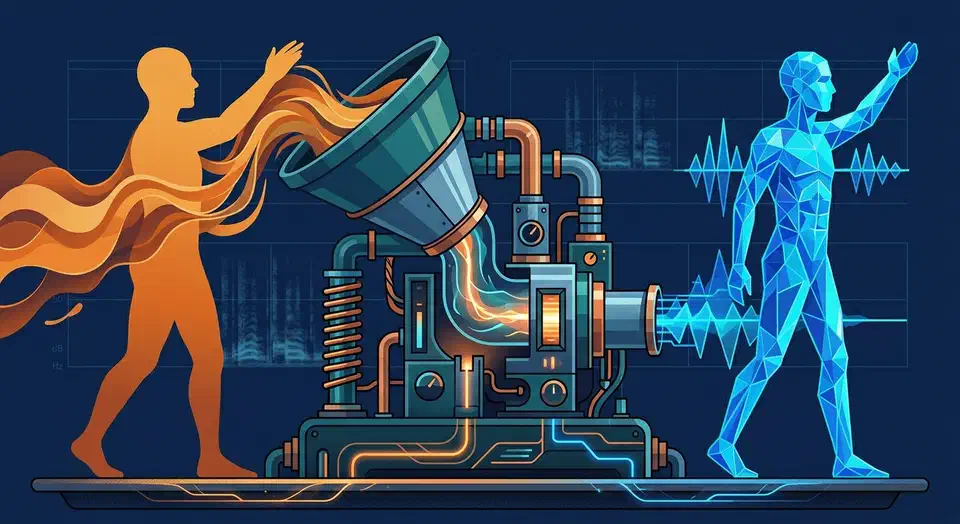

Clone Your Voice with Coqui TTS: 5 Minutes to Custom Speech

You can clone your own voice with Coqui TTS using just 5 minutes of recorded audio, all on your own hardware. The steps are simple. Record clean audio. Turn it into a training set. Fine-tune an XTTS v2 or VITS model. Export the result for real-time use. On a modern GPU like the RTX 5070 with 12 GB of VRAM, fine-tuning takes 2 to 4 hours. The output sounds natural and keeps the target voice’s timbre, pacing, and accent.

MCP Server Development: Build Custom Tools for Claude and Local LLMs

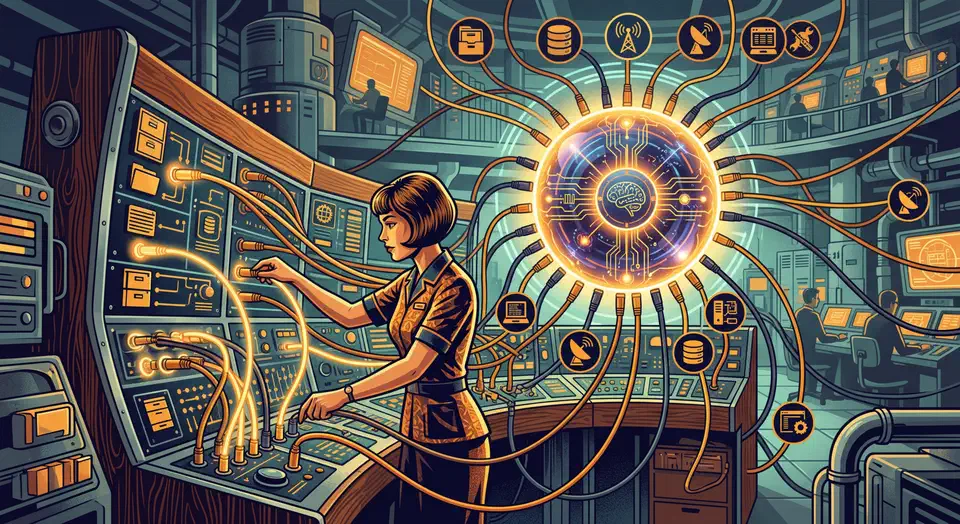

The Model Context Protocol gives LLMs a standard way to call external tools, read files, and query databases. You skip the rewrite each time you switch models. You can build a working MCP server in Python with the official mcp SDK in under 100 lines. It runs with Claude Desktop or Claude Code in minutes. This guide walks the full path, from a tiny first server to production.

What MCP Is and Why It Changes Tool Use

MCP is a JSON-RPC 2.0 protocol. It lets an LLM client (like Claude Desktop, Claude Code, or Cursor) find and call tools exposed by a server process. The big shift from older function-calling is the discovery step. Instead of hard-coding tool defs into every prompt, the client sends a tools/list request when it connects. It gets back the full schema for everything the server exposes. Add a new tool, restart the server, and any client sees it on the next connect.
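To make the discovery step concrete, here is a rough sketch of the message shapes using only Python's standard library. It is not the official mcp SDK and skips the transport and the initialization handshake entirely. The `read_file` tool and the `handle_request` helper are hypothetical names invented for the example.

```python
import json

# Hypothetical tool registry; the tool name and schema are illustrative only.
TOOLS = [
    {
        "name": "read_file",
        "description": "Return the contents of a text file.",
        "inputSchema": {
            "type": "object",
            "properties": {"path": {"type": "string"}},
            "required": ["path"],
        },
    },
]


def handle_request(raw):
    """Answer one JSON-RPC 2.0 request string with a JSON response string."""
    request = json.loads(raw)
    if request.get("method") == "tools/list":
        # Discovery: return the schema of every tool the server exposes.
        response = {
            "jsonrpc": "2.0",
            "id": request["id"],
            "result": {"tools": TOOLS},
        }
    else:
        # -32601 is the standard JSON-RPC "Method not found" error code.
        response = {
            "jsonrpc": "2.0",
            "id": request.get("id"),
            "error": {"code": -32601, "message": "Method not found"},
        }
    return json.dumps(response)


# What a client would send right after connecting.
list_request = json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
reply = json.loads(handle_request(list_request))
tool_names = [tool["name"] for tool in reply["result"]["tools"]]
```

Appending another entry to `TOOLS` changes the `tools/list` reply without touching any client code, which is the property the paragraph above describes.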

Botmonster Tech
