For most developers in 2026, Gemma 4 31B is the best all-around open model. It ranks #3 on the LMArena leaderboard, scores 85.2% on MMLU Pro, and ships under Apache 2.0 with zero usage restrictions. Qwen 3.5 27B edges it on coding benchmarks, with 72.4% on SWE-bench Verified, while Gemma 4 is stronger in math reasoning. Qwen’s Omni variant also offers real-time speech output that no other open model matches. Llama 4 Maverick (400B MoE) wins on raw scale but requires datacenter hardware and carries Meta’s restrictive 700M MAU license. Pick Gemma 4 for the best quality-to-size ratio under a true open-source license, Qwen 3.5 for coding-heavy workflows, and Llama 4 only when you need the largest available open model and can absorb the legal overhead.

Local Meeting Transcriber: Whisper, Ollama, Structured Notes

You can build a fully local meeting transcriber on Linux. Capture system audio with PipeWire. Transcribe with Faster-Whisper on your GPU. Pipe the transcript to a local LLM through Ollama for structured summaries with names, decisions, and action items. The pipeline runs on 16GB of RAM and a mid-range NVIDIA GPU, and produces notes within seconds of the call ending. No data leaves your network.

Commercial services like Otter.ai and Fireflies.ai route your audio through their servers. If your meetings cover sensitive topics like product plans, HR, or legal reviews, that’s a non-starter. A local pipeline gives you the same structured output, and nothing leaves your building.
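
The summarization step of that pipeline can be sketched roughly as follows. This is a minimal illustration, not the article's implementation: it assumes the transcript text has already been produced by the transcription step, that Ollama is listening on its default local address, and that a model is pulled under the placeholder name `llama3.1`. The function names and the JSON keys requested in the prompt are hypothetical.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; adjust if yours differs.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_summary_request(transcript: str, model: str = "llama3.1") -> dict:
    """Build an Ollama /api/generate payload asking for structured notes."""
    prompt = (
        "Summarize this meeting transcript as JSON with the keys "
        '"attendees", "decisions", and "action_items".\n\n'
        f"Transcript:\n{transcript}"
    )
    # stream=False requests a single JSON reply; format="json" asks the
    # model to emit valid JSON.
    return {"model": model, "prompt": prompt, "stream": False, "format": "json"}


def summarize(transcript: str, model: str = "llama3.1") -> dict:
    """Send the transcript to the local Ollama server and parse the notes."""
    body = json.dumps(build_summary_request(transcript, model)).encode("utf-8")
    req = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        reply = json.load(resp)
    # With stream=False, the generated text is in the "response" field.
    return json.loads(reply["response"])
```

Calling `summarize(transcript_text)` only talks to localhost, which fits the point of the pipeline: the transcript never leaves the machine.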

Route Ollama, vLLM, OpenAI through one LiteLLM API

You can unify access to Ollama, vLLM, cloud providers like OpenAI, Anthropic, and Google, plus custom model servers behind one OpenAI-compatible endpoint using LiteLLM Proxy. LiteLLM is a reverse proxy. It maps the standard /v1/chat/completions request to each provider’s native API. From one YAML file it handles auth, model routing, load balancing, fallbacks, rate limits, and spend tracking. Your app calls one endpoint with one key, and LiteLLM picks the right backend. You can swap models, add providers, or run A/B tests without touching app code.
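
From the application's side, "one endpoint with one key" might look like the sketch below. It uses only the Python standard library and assumes a proxy is already running; the address, the key, and the model alias `local-llama` are placeholders rather than values from the article, and the function names are hypothetical.

```python
import json
import urllib.request

# Assumptions: proxy address and key are placeholders for your own setup.
PROXY_URL = "http://localhost:4000/v1/chat/completions"
PROXY_KEY = "sk-example-key"


def build_chat_request(model_alias: str, user_text: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request aimed at the proxy."""
    body = json.dumps({
        # The alias is resolved to a real backend by the proxy's config.
        "model": model_alias,
        "messages": [{"role": "user", "content": user_text}],
    }).encode("utf-8")
    return urllib.request.Request(
        PROXY_URL,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {PROXY_KEY}",
        },
        method="POST",
    )


def chat(model_alias: str, user_text: str) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(model_alias, user_text)) as resp:
        reply = json.load(resp)
    return reply["choices"][0]["message"]["content"]
```

Switching backends then means changing only the alias string, for example `chat("local-llama", "hello")` versus another alias defined in the proxy configuration.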

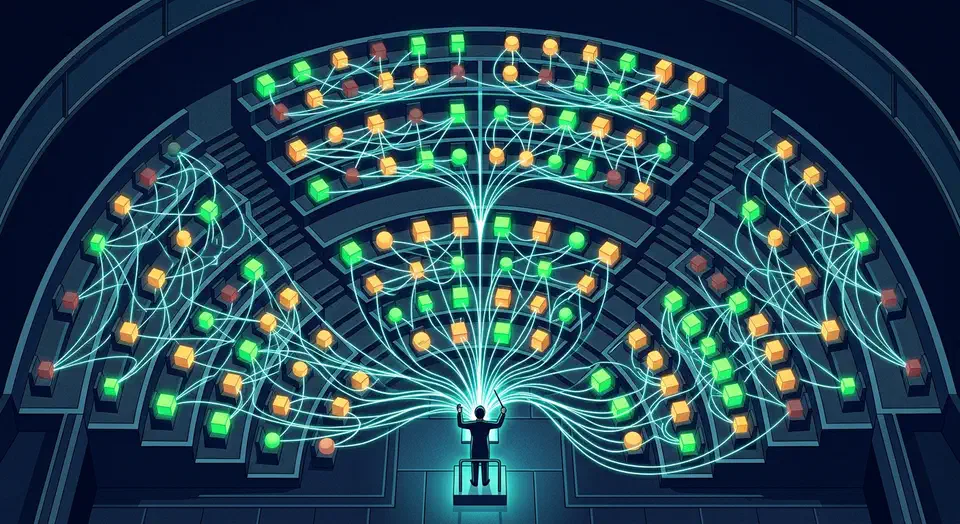

Running Multiple AI Coding Agents in Parallel: Patterns That Actually Work

Three focused AI coding agents consistently outperform one generalist agent working three times as long. That finding, presented by Addy Osmani at O’Reilly AI CodeCon in March 2026, captures both the central promise and the central difficulty of multi-agent development. The throughput gains are real, but they only materialize when you solve the coordination problem. Without file isolation, iteration caps, and review gates, parallel agents produce a mess of merge conflicts and duplicated work that takes longer to untangle than doing everything sequentially.

Claude Code vs Cursor vs GitHub Copilot: Which AI Coding Tool Fits Your Workflow (2026)

Claude Code, Cursor, and GitHub Copilot take three very different shots at AI-assisted coding: a terminal-native agent, an AI-first IDE, and a multi-IDE plugin. Claude Code leads on raw skill and complex multi-file work, scoring highest on SWE-bench at about 74-81%. Cursor offers the best editor experience with background agents and cloud automation. GitHub Copilot has the lowest entry price at $10/month and the widest IDE support. Most pro developers now mix two or more tools, with Claude Code plus Cursor as the top pair per the JetBrains AI Pulse survey from January 2026.

Git Worktrees for Parallel Claude Code Sessions: Run 10+ AI Agents Without File Conflicts

Git worktrees let you attach many working directories to a single repo. Each one has its own branch checked out. Claude Code ships a native --worktree (-w) flag that handles the setup in one command. It creates a worktree, checks out a new branch, and launches Claude inside it. Run the same command in another terminal and you’ve got a second agent. Scale to five, ten, or more sessions and none of them clash on disk.
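
For a sense of the manual setup involved, here is a rough sketch that creates one worktree per task with plain `git worktree add -b <branch> <path>`. It is not a description of how Claude Code's flag works internally; the `agent/<name>` branch scheme, the sibling-directory layout, and the function names are all hypothetical choices made for illustration.

```python
import subprocess
from pathlib import Path


def worktree_commands(repo: Path, task_names: list[str]) -> list[list[str]]:
    """Return one `git worktree add` command per task, without running it."""
    commands = []
    for name in task_names:
        # Hypothetical layout: each worktree sits next to the main checkout.
        path = repo.parent / f"{repo.name}-{name}"
        commands.append(
            ["git", "-C", str(repo), "worktree", "add",
             "-b", f"agent/{name}", str(path)]
        )
    return commands


def create_worktrees(repo: Path, task_names: list[str]) -> None:
    """Run the commands; raises CalledProcessError if git reports a failure."""
    for cmd in worktree_commands(repo, task_names):
        subprocess.run(cmd, check=True)
```

Each resulting directory has its own branch checked out, so an agent started in one of them cannot overwrite files that another agent is editing.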

Botmonster Tech
