Five open source repositories dropped in March 2026 that expand what Claude Code can actually do. Karpathy’s AutoResearch runs overnight ML experiments without human input. OpenSpace makes your agent skills fix and improve themselves. CLI-Anything turns GUI software into agent-ready command-line tools. Claude Peers MCP lets multiple Claude Code sessions coordinate on the same machine. And Google Workspace CLI opens Gmail, Drive, Calendar, and Sheets to programmatic agent access. All five are free, open source, and plug directly into Claude Code.

ControlNet for Stable Diffusion: Sketch-to-Image, Depth Control

ControlNet lets you guide Stable Diffusion’s image generation with spatial conditioning inputs - hand-drawn sketches, Canny edge maps, depth images, or OpenPose skeletons - so the output follows your compositional intent rather than relying on prompt engineering alone. You feed a preprocessed control image alongside your text prompt, and the model generates artwork that matches the structure of your input while filling in texture, lighting, and detail from the prompt. This gives you pixel-level compositional control that no amount of prompt tweaking can replicate.

Generating SVG Graphics with AI

For precise technical diagrams, prompt an LLM to output SVG or Mermaid.js syntax instead of pixel-based images. This creates lightweight, resolution-independent graphics that search engines can read. Vector formats offer performance and clarity that raster images simply can’t match.
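As a rough illustration of the kind of markup you would ask the model to emit, here is a minimal Python sketch that builds a small left-to-right flowchart as SVG text. The helper name `flowchart_svg` and its layout parameters are hypothetical and hand-written for this example; they are not taken from any particular tool or model output.

```python
from xml.sax.saxutils import escape


def flowchart_svg(labels, box_w=120, box_h=40, gap=40):
    """Build a left-to-right flowchart as an SVG string (illustrative helper)."""
    if not labels:
        raise ValueError("need at least one label")
    width = len(labels) * box_w + (len(labels) - 1) * gap
    mid_y = box_h // 2
    parts = [
        f'<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 {width} {box_h}">'
    ]
    for i, label in enumerate(labels):
        x = i * (box_w + gap)
        # One outlined box per step, with its label centered inside.
        parts.append(
            f'<rect x="{x}" y="0" width="{box_w}" height="{box_h}" '
            'fill="none" stroke="black"/>'
        )
        parts.append(
            f'<text x="{x + box_w // 2}" y="{mid_y}" text-anchor="middle" '
            f'dominant-baseline="middle">{escape(label)}</text>'
        )
        # Connector line to the next box, except after the last one.
        if i < len(labels) - 1:
            parts.append(
                f'<line x1="{x + box_w}" y1="{mid_y}" '
                f'x2="{x + box_w + gap}" y2="{mid_y}" stroke="black"/>'
            )
    parts.append("</svg>")
    return "\n".join(parts)


svg = flowchart_svg(["Fetch", "Parse", "Render"])
print(len(svg.encode("utf-8")), "bytes")
```

Because the labels live in `<text>` elements rather than pixels, the diagram stays plain text that can be diffed, scaled, and indexed.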

Why SVG? The Case Against Raster Images for Technical Diagrams

Most bloggers use screenshots or PNG exports for diagrams. This habit seems easy but carries hidden costs. A PNG flowchart often weighs 100 KB to 400 KB. In contrast, the same SVG diagram usually stays between 5 KB and 20 KB. This huge difference improves Core Web Vitals metrics like Largest Contentful Paint. Better performance helps your search rankings.

Production LLM Hallucinations: Taxonomy, Evals, and RAG Defenses

Fixing LLM hallucinations in production needs a layered defense. Use Chain-of-Verification at inference time. Ground the model in trusted data. Build eval suites that give you a hallucination rate you can track and gate in CI. No single trick fixes this. But pair prompt rules with retrieval-augmented grounding, self-checking, and validation layers, and you turn it into a problem you can measure and ship against.
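One way to picture a hallucination rate that can be tracked and gated in CI is the sketch below. It assumes each eval case has already been labeled as supported or unsupported by some upstream checker; the `EvalCase` type, the `supported` field, and the 5% default threshold are all hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class EvalCase:
    answer: str
    supported: bool  # hypothetical label: True if the answer is backed by trusted data


def hallucination_rate(cases):
    """Fraction of eval cases whose answer was labeled unsupported."""
    if not cases:
        raise ValueError("eval suite is empty")
    unsupported = sum(1 for case in cases if not case.supported)
    return unsupported / len(cases)


def passes_gate(cases, max_rate=0.05):
    """Return True when the measured rate is within the allowed budget."""
    return hallucination_rate(cases) <= max_rate


suite = [
    EvalCase("Paris is the capital of France.", True),
    EvalCase("The API was released in 1875.", False),
    EvalCase("HTTP 404 means not found.", True),
    EvalCase("TCP is connection-oriented.", True),
]
print(hallucination_rate(suite), passes_gate(suite))
```

In a CI job, a failing gate would typically translate into a non-zero exit code so the build stops when the rate regresses.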

What Is Hallucination? A Taxonomy for Developers

“Hallucination” has become an umbrella label for almost any unexpected LLM output. That fuzziness is dangerous in production. Each failure mode has a distinct cause and a distinct fix. Lump them together and you’ll apply the wrong remedy to the wrong problem. You’ll spend cycles on prompt tuning when the real issue is retrieval quality, or add RAG when the failure is instruction-following. Before you can fix hallucinations, you need a precise vocabulary for what you’re seeing.

Build a Private Local AI Voice Assistant (2026 Guide)

A private voice assistant that runs on your own hardware is practical in 2026. No Amazon, no Google, no cloud. With Whisper v3 for speech-to-text, a quantized Llama model for intent, and Piper for natural speech, you can build a voice-controlled setup on a Raspberry Pi 5 that never sends one audio sample to the internet. This guide walks the full stack, from wake word to Home Assistant, with a focus on low latency so it feels like a real assistant.

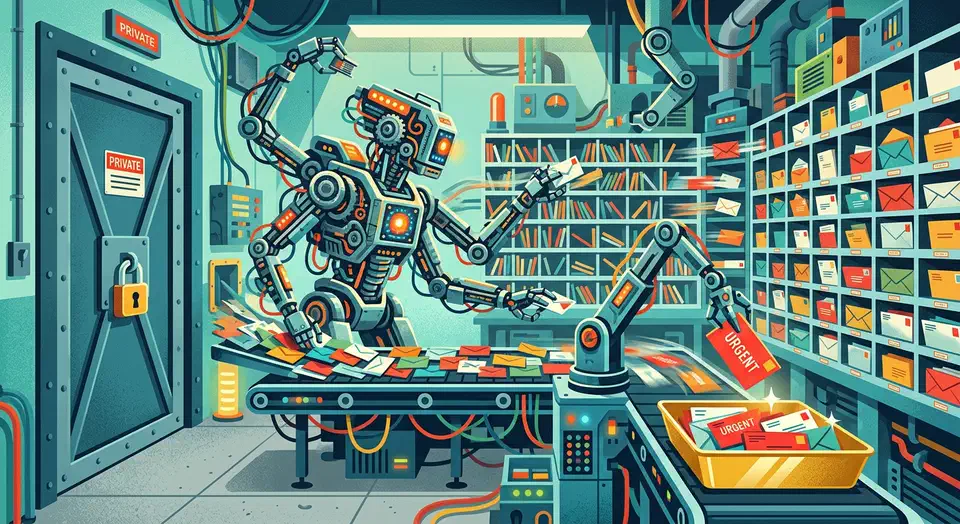

Automating Gmail with Local AI Agents and Python

You can automate your Gmail inbox on your own machine. The Gmail API feeds messages into a private Python script. A local LLM then handles summaries, sorting, and draft replies. You get the smart inbox features that tools like Google’s Gemini sidebar or Microsoft Copilot for Outlook offer. None of your email content ever leaves your computer.

This guide walks through the full build. You’ll set up the Gmail API with minimal OAuth scopes. You’ll fetch and parse raw email data, then mask any PII with Microsoft Presidio before the model sees it. You’ll build a daily summarizer that ranks mail by urgency. You’ll also build a smart draft writer that learns from your sent mail, and you’ll wire the whole pipeline up with cron. By the end, you’ll have a working local email agent that runs on any mid-range Linux or macOS box with Ollama installed.
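The fetch-and-parse step can be sketched with the standard library alone. The Gmail API can return a message in raw form as a base64url-encoded RFC 2822 string; the snippet below assumes you already hold that string and only shows decoding and parsing. The function name `parse_raw_message` and the returned dictionary shape are hypothetical, and the sample message is made up.

```python
import base64
from email import message_from_bytes
from email.policy import default


def parse_raw_message(raw_b64url):
    """Decode a base64url-encoded RFC 2822 message and pull out basic fields."""
    # Restore any padding that was stripped before decoding.
    padded = raw_b64url + "=" * (-len(raw_b64url) % 4)
    msg = message_from_bytes(base64.urlsafe_b64decode(padded), policy=default)
    # Prefer the plain-text part; fall back to an empty string if none exists.
    body = msg.get_body(preferencelist=("plain",))
    return {
        "from": str(msg["From"]),
        "subject": str(msg["Subject"]),
        "text": body.get_content() if body is not None else "",
    }


# Stand-in for an API response: a hand-built message, encoded the same way.
sample = (
    b"From: alice@example.com\r\n"
    b"Subject: Hello\r\n"
    b"Content-Type: text/plain; charset=utf-8\r\n"
    b"\r\n"
    b"Hi there\r\n"
)
raw = base64.urlsafe_b64encode(sample).decode("ascii").rstrip("=")
print(parse_raw_message(raw))
```

The parsed text is what would then go through PII masking before any model sees it.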

Botmonster Tech
