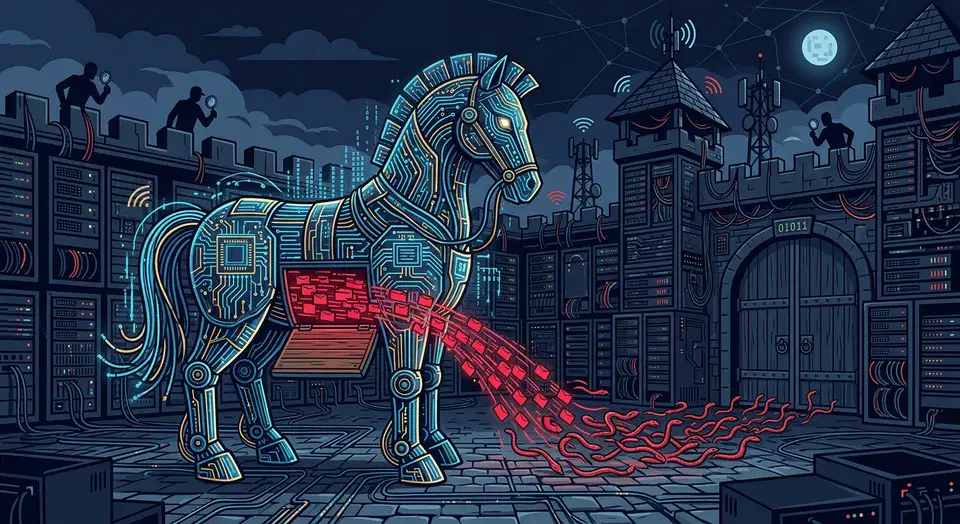

Your AI coding agent has the same file access, shell rights, and database keys you do. A review of 78 studies from January 2026 (arXiv:2601.17548) tested every big coding agent. The list included Claude Code, GitHub Copilot, and Cursor. All fell to prompt injection. Adaptive attacks landed more than 85% of the time. This isn’t theory. CVE-2026-23744 gave attackers remote code execution on MCPJam Inspector at CVSS 9.8. A booby-trapped PDF tripped a physical pump through a Claude MCP link at a plant. Attackers hit GitHub’s MCP server to exfiltrate private repository data via malicious issues. And 47 firms fell to a poisoned plugin ecosystem that hid for six months.

Claude Code Skills Ecosystem: 1,340+ Installable Agent Skills for AI Coding Assistants

The Claude Code skills ecosystem passed 1,340 installable skills in early 2026, and the number keeps climbing. These skills use the universal SKILL.md format: folders of structured instructions that teach AI coding tools to do special tasks. They work across Claude Code, Cursor, Codex CLI, and Gemini CLI without changes. Official skills have shipped from teams at Anthropic, Trail of Bits, Vercel, Stripe, Cloudflare, and dozens of solo devs. Install takes one npx command.

Running Gemma 4 Locally with Ollama: All Four Model Sizes Compared

Google’s Gemma 4 is not one model - it is a family of four, each targeting different hardware and different use cases. The smallest runs on a Raspberry Pi. The largest ranks #3 on LMArena across all models, open and closed. All four ship under the Apache 2.0 license, a first for the Gemma family. This guide walks through installing each variant with Ollama (currently at v0.20.2), benchmarks them on real consumer hardware, and helps you decide which one fits your setup.

Self-Hosted AI Search: Combine SearXNG and a Local RAG Pipeline

You can build a private AI search engine modeled on Perplexity by combining SearXNG with a local language model running through Ollama. The stack is: SearXNG aggregates results from multiple search engines simultaneously, a Python scraper fetches and cleans the actual page content, and the LLM synthesizes everything into a cited answer with inline references like [1], [2]. No API keys, no telemetry, no query logging to third-party AI services. A machine with 12 GB VRAM handles the whole pipeline, and most queries come back in 5-15 seconds.
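As a rough illustration of the last step in that stack, here is a minimal sketch of how scraped pages might be numbered so the model can cite them as [1], [2]. The `Page` structure, the `build_cited_prompt` helper, the prompt wording, and the `max_chars` cutoff are all assumptions made for this example, not the article's actual code. Fetching results from SearXNG and calling the local model are left out.

```python
from dataclasses import dataclass


@dataclass
class Page:
    """One scraped search result (hypothetical structure for this sketch)."""
    title: str
    url: str
    text: str


def build_cited_prompt(question: str, pages: list[Page], max_chars: int = 1500) -> str:
    """Number each source so the model can cite it inline as [1], [2], ..."""
    blocks = []
    for i, page in enumerate(pages, start=1):
        # Trim each page so the combined prompt stays within a small context budget.
        snippet = page.text.strip()[:max_chars]
        blocks.append(f"[{i}] {page.title} ({page.url})\n{snippet}")
    sources = "\n\n".join(blocks)
    return (
        "Answer the question using only the sources below. "
        "Cite sources inline with their bracketed numbers, e.g. [1].\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\nAnswer:"
    )
```

The returned string would then be sent to whatever local model you run through Ollama. Keeping the numbering in one place means the same index can be reused to render the reference list under the answer.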

Three Tiers of AI Pair Programming: From Autocomplete to Autonomous Overnight Agents

The most productive developers in 2026 don’t use a single AI tool. They run a three-tier stack. Tier 1 is inline completions for line-by-line speed. Tier 2 is parallel agent sprints that take on feature-sized work. Tier 3 is overnight batch agents that run 30 to 50 improvement cycles while you sleep. GitHub’s research shows AI pair programming makes developers 55% faster, but that gain comes mostly from Tier 1. The real win comes from running all three tiers at once, with clear rules about which task goes where.

Fine-Tuning Gemma 4 with Unsloth on a Single GPU: A Practical Guide

Google’s Gemma 4 family - spanning the 2.3B E2B, 4.5B E4B, 26B MoE, and 31B dense variants - delivers frontier-level open-weight performance across text, vision, and audio. But general-purpose models still struggle with narrow, domain-specific tasks where you need consistent output formats, specialized terminology, or knowledge that wasn’t in the pretraining data. Fine-tuning fixes this, and Unsloth (version 2026.4.2 as of this writing) makes it possible on a single consumer GPU through custom CUDA kernels that cut VRAM by up to 60% and double training speed compared to standard Hugging Face + PEFT.

Botmonster Tech
