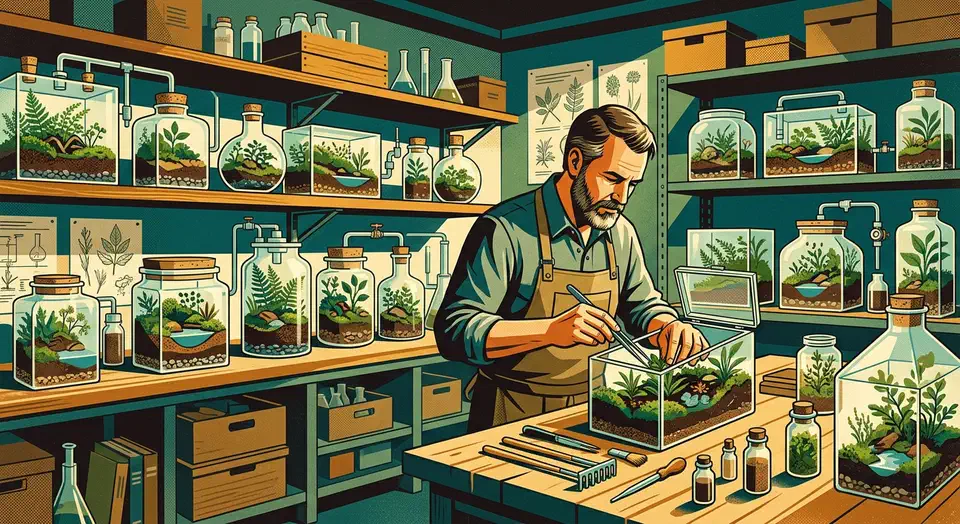

Rust error handling in 2026 rests on four patterns. You use Result<T, E> with custom enums for libraries. You reach for thiserror to derive the error trait implementations for those enums with less boilerplate. You pick anyhow to pass errors up through application code. And you add miette or color-eyre for friendly diagnostic reports. The right choice depends on whether you write a library or an application. Most real Rust projects use both: thiserror in their library crates and anyhow in their binary crates.
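As a rough illustration of the library/application split, here is a minimal sketch that uses only the standard library. The names `ConfigError`, `parse_port`, and `run` are hypothetical and exist only for this example. The hand-written `Display`, `Error`, and `From` impls are the boilerplate that thiserror would typically derive, and `Box<dyn Error>` stands in for the role anyhow plays in application code.

```rust
use std::error::Error;
use std::fmt;
use std::num::ParseIntError;

// Library side: a custom error enum (hypothetical names throughout).
#[derive(Debug)]
pub enum ConfigError {
    MissingKey(String),
    InvalidPort(ParseIntError),
}

// Hand-written Display impl; this is the kind of boilerplate a derive macro can replace.
impl fmt::Display for ConfigError {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        match self {
            ConfigError::MissingKey(key) => write!(f, "missing key: {key}"),
            ConfigError::InvalidPort(_) => write!(f, "invalid port value"),
        }
    }
}

// Expose the underlying parse error as the source so callers can walk the chain.
impl Error for ConfigError {
    fn source(&self) -> Option<&(dyn Error + 'static)> {
        match self {
            ConfigError::InvalidPort(e) => Some(e),
            ConfigError::MissingKey(_) => None,
        }
    }
}

// Lets the `?` operator convert a ParseIntError into ConfigError.
impl From<ParseIntError> for ConfigError {
    fn from(e: ParseIntError) -> Self {
        ConfigError::InvalidPort(e)
    }
}

// Library function: callers can match on the precise variant.
pub fn parse_port(line: &str) -> Result<u16, ConfigError> {
    let value = line
        .strip_prefix("port=")
        .ok_or_else(|| ConfigError::MissingKey("port".to_string()))?;
    Ok(value.trim().parse::<u16>()?)
}

// Application side: erase the concrete type and just pass the error up.
pub fn run(line: &str) -> Result<u16, Box<dyn Error>> {
    let port = parse_port(line)?;
    Ok(port)
}
```

The library function keeps a concrete error type that callers can match on, while the application function only propagates whatever went wrong. That is the same division of labor the paragraph above describes for thiserror and anyhow.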

Defensive Coding in Rust: Error Handling Patterns That Scale

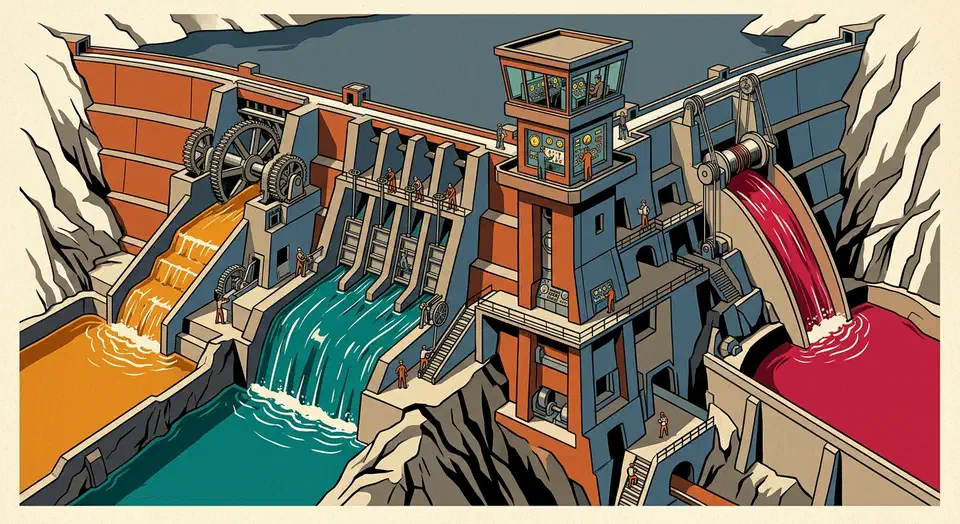

Is Systemd-Nspawn a Better Alternative to Docker for Linux Containers?

Yes. For many workloads, systemd-nspawn beats Docker on leanness, simplicity, and host integration. It shines on servers and homelabs where you want isolated environments without daemon overhead. You launch a container with one command, manage it with machinectl, and run it as a systemd service. All the tools already ship with every modern Linux system.

That said, Docker and nspawn solve slightly different problems. Knowing where each one wins makes the choice easy.

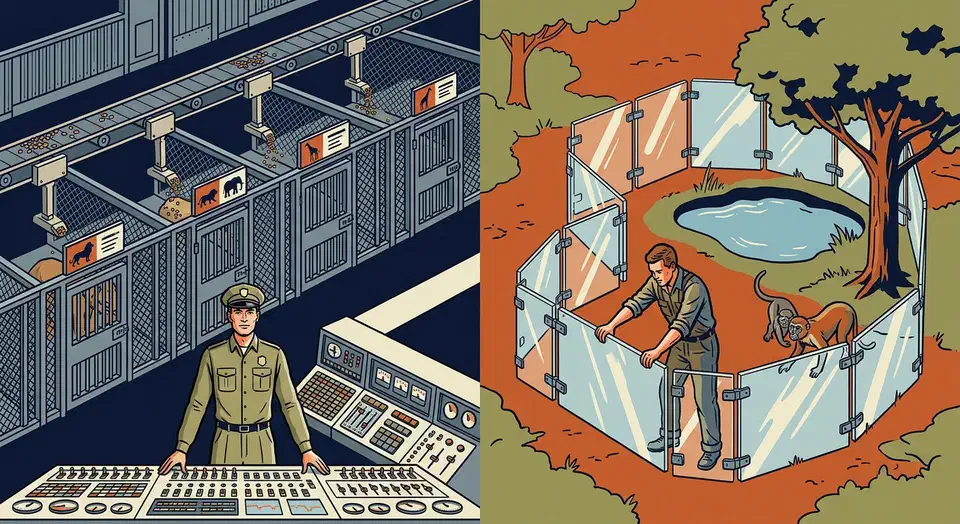

Sandbox Untrusted Linux Apps and CLI Tools with Bubblewrap

Bubblewrap (bwrap) is a small, unprivileged tool that sandboxes untrusted Linux apps and CLI tools with no root and no SUID binary. You build the sandbox mount by mount, so you control exactly what a program can see. It’s the same engine Flatpak runs inside. There is no daemon and no container image.

This guide is built around Bubblewrap: sandboxing desktop apps, locking down CLI tools and build scripts, network isolation, and runtime overhead. It also weighs bwrap against Firejail, the friendlier SUID-root sandbox with 1,000-plus ready-made profiles. That way you can see which one fits your threat model.

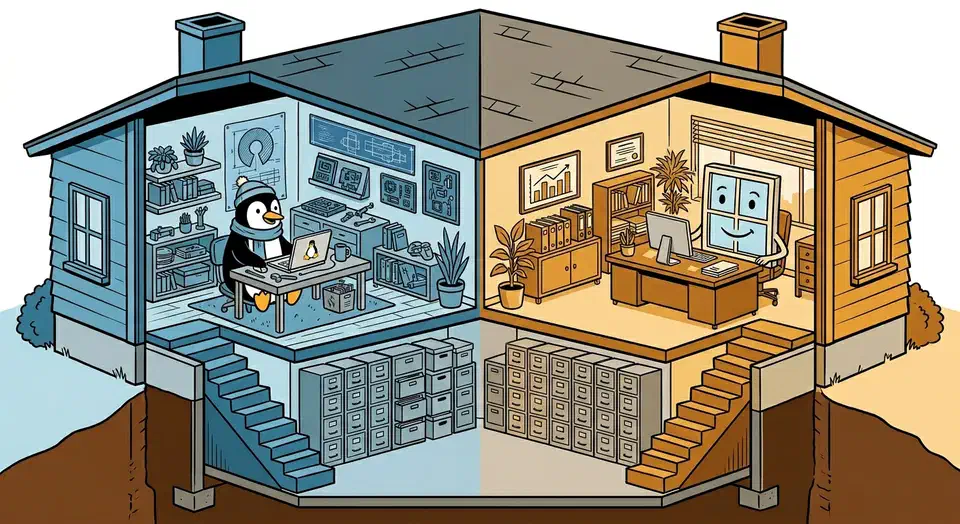

Windows 11 + Linux: Shared exFAT, systemd-boot Bootloader

Install Windows first. Then install Linux with systemd-boot as the bootloader on a shared EFI System Partition. Add a dedicated exFAT partition for cross-OS file sharing. This setup avoids the classic problem of Windows Update wiping out GRUB, since systemd-boot entries sit next to Windows Boot Manager in the ESP without a fight. Both systems read and write exFAT out of the box, with no risk of corruption.

Caddy Reverse Proxy for Self-Hosted Services: Zero-Config HTTPS

Caddy is the simplest reverse proxy for self-hosted services. It gets and renews TLS certificates from Let’s Encrypt with zero config. Install the static binary, write a Caddyfile with three lines per service, and Caddy handles HTTPS, HTTP/2, OCSP stapling, and renewal on its own. That replaces hundreds of lines of Nginx config and separate Certbot cron jobs.

If you run even a handful of services on a home server or VPS, a reverse proxy with proper TLS is non-negotiable. Caddy makes this painless, so there’s no excuse to skip it.

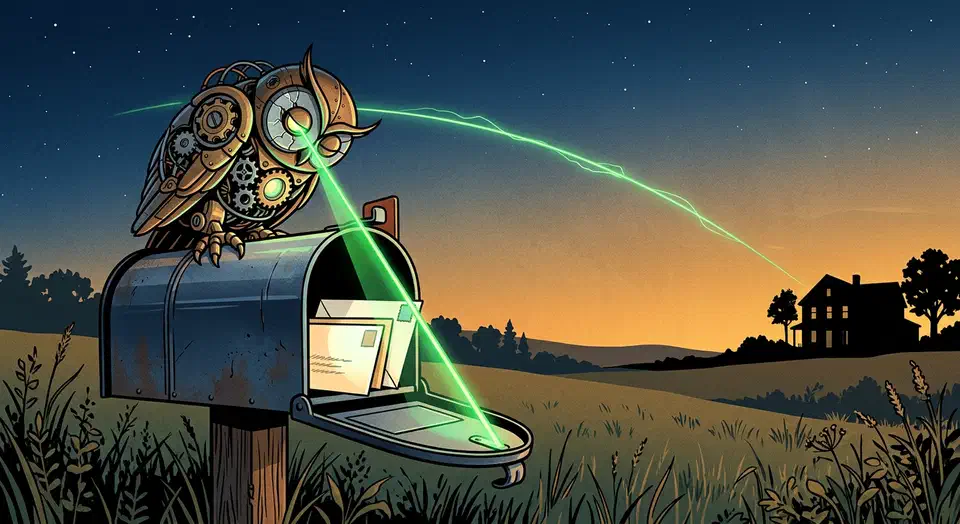

ESP32 Mailbox Sensor: Reed Switch, VL53L0X, $15, Months Battery

Mount an ESP32-C3 Super Mini with a reed switch on the mailbox door (or a VL53L0X time-of-flight distance sensor inside the box), flash it with ESPHome 2026.3, and wire it into Home Assistant. You get a push notification on your phone the moment mail lands. The total parts cost sits under $15, and deep sleep keeps the whole thing alive for months on a single 18650 cell.