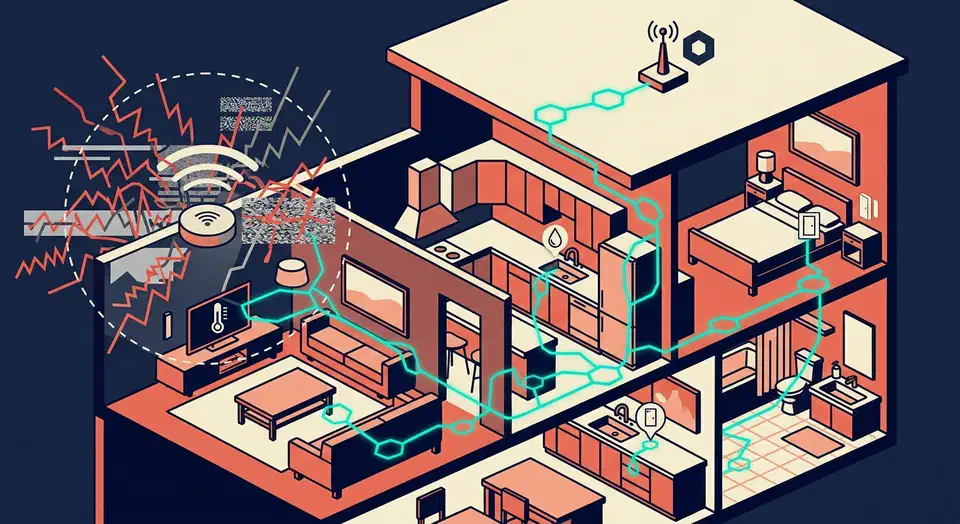

Thread is a low-power, IPv6-based mesh protocol for smart home devices. Since ESPHome 2025.6.0, you can flash Thread-native firmware onto any ESP32-H2 or ESP32-C6 board. No Zigbee2MQTT, no WiFi congestion. Grab an ESP32-H2-DevKitM-1, write a short ESPHome config with the esp-idf framework and the openthread component, then join it to a Thread border router like Home Assistant Yellow or a HomePod mini. Your sensors show up over IPv6 with sub-second latency and battery life measured in months.

Build a Thread Device With ESPHome and the ESP32-H2

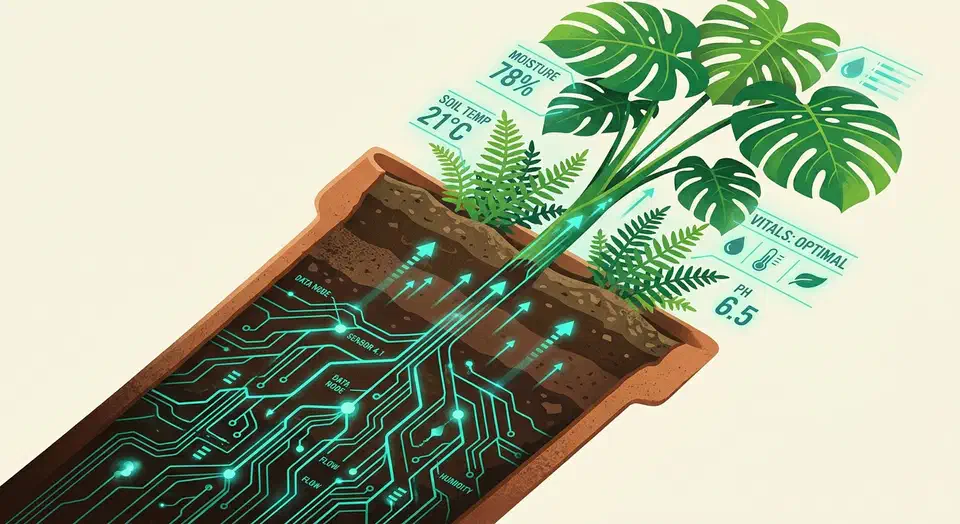

Plant Monitor System ESP32: Under $10 Per Plant

Yes, you can monitor every houseplant in your home for under $10 per plant. A single ESP32 board running ESPHome (currently at version 2026.3.0) reads capacitive soil moisture sensors, a BH1750 light sensor, and an AHT20 temperature/humidity sensor, then feeds everything straight into Home Assistant. From there, automations send you a notification when a plant needs water, dashboards show moisture trends over weeks, and you stop guessing whether that fern in the corner is actually happy. This guide covers sensor selection, wiring a 4-plant monitoring hub, the complete ESPHome YAML configuration, Home Assistant dashboards, and tips for long-term reliability.
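To make the "needs water" decision concrete, here is a minimal Python sketch of the arithmetic behind it. It is not the guide's ESPHome configuration. It assumes the capacitive probe reports a higher voltage in dry soil than in wet soil, and the calibration voltages (`dry_v`, `wet_v`) and the 30% threshold are hypothetical values you would measure and choose for your own sensor and plant.

```python
def moisture_percent(voltage, dry_v=2.8, wet_v=1.2):
    """Map a probe voltage to 0-100% moisture with a clamped linear scale.

    dry_v and wet_v are assumed calibration points: the readings taken
    with the probe in air and in a glass of water, respectively.
    """
    span = dry_v - wet_v
    if span <= 0:
        raise ValueError("dry_v must be greater than wet_v")
    pct = (dry_v - voltage) / span * 100.0
    # Clamp so readings outside the calibration range stay in 0-100.
    return max(0.0, min(100.0, pct))


def needs_water(voltage, threshold_pct=30.0, dry_v=2.8, wet_v=1.2):
    """Return True when moisture falls below the (assumed) threshold."""
    return moisture_percent(voltage, dry_v, wet_v) < threshold_pct
```

Calling `needs_water(2.6)` with the default calibration returns `True`, because 2.6 V maps to roughly 12.5% moisture. A linear scale is only an approximation of how these probes behave, so treat the percentage as a relative indicator.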

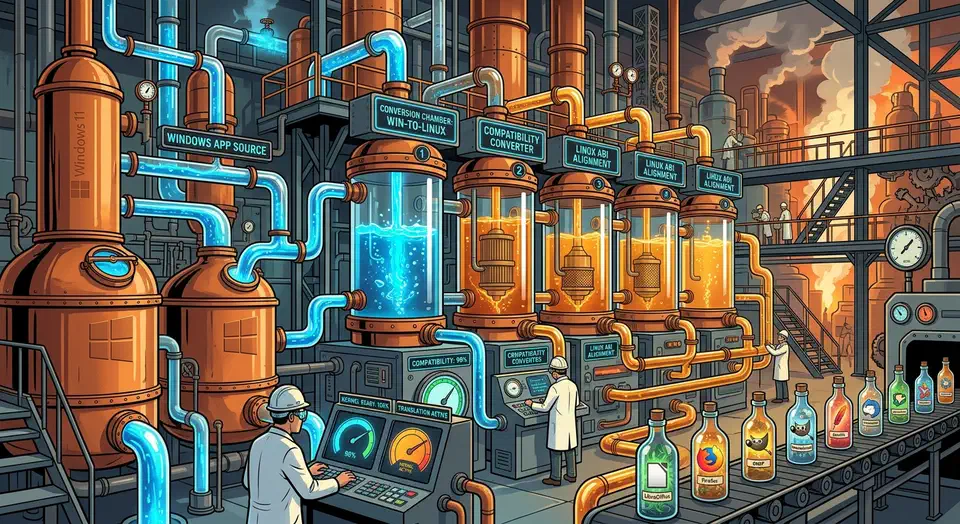

Running Windows Apps on Linux: Proton, Bottles, and the Full Compatibility Stack

Use Proton for Windows games on Steam. Use Bottles for everything else: Office, Adobe apps, business tools, non-Steam games. Both run on Wine, which maps Windows API calls to Linux without a virtual machine. DXVK and VKD3D-Proton handle the DirectX side. Wine 11.0 closes most of the remaining gap to native Windows.

This guide covers the full stack in 2026: what each piece does, how to set up Proton and Bottles, how to tune DirectX translation, and what still breaks.

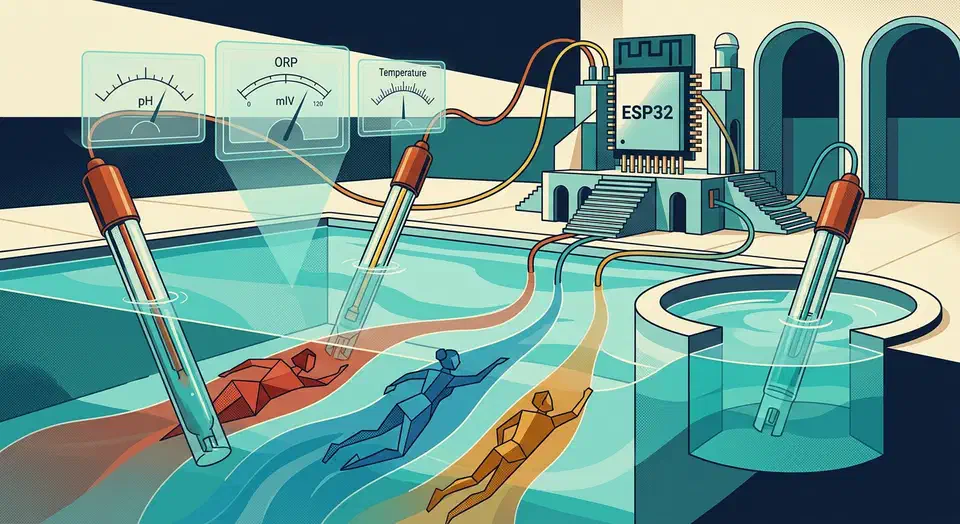

Automate Your Pool or Hot Tub with Home Assistant and ESPHome Sensors

Pool and hot tub chemistry can swing from safe to damaging in a few hours. A paper strip you dip once a week will not catch it. The fix is cheap: a waterproof ESPHome sensor built around an ESP32, reading water temperature, pH, and ORP, piped into Home Assistant for pump schedules, chemical alerts, and cover reminders. A full setup runs under $80. It replaces guesswork with a live dashboard and push alerts that fire before your heater corrodes.
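The chemical alerts mentioned above come down to range checks on each reading. The Python sketch below illustrates that logic only; it is not the article's Home Assistant automation. The `Reading` type and `chemistry_alerts` function are hypothetical names, and the target ranges (pH 7.2 to 7.8, ORP 650 to 750 mV) are commonly cited pool guidelines assumed here, not values taken from this article.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    temp_c: float   # water temperature in degrees Celsius
    ph: float       # pH probe value
    orp_mv: float   # oxidation-reduction potential in millivolts


# Assumed target ranges; adjust to your own pool or hot tub guidance.
PH_RANGE = (7.2, 7.8)
ORP_RANGE = (650.0, 750.0)


def chemistry_alerts(reading):
    """Return a list of alert strings for values outside the assumed ranges."""
    alerts = []
    if reading.ph < PH_RANGE[0]:
        alerts.append("pH low")
    elif reading.ph > PH_RANGE[1]:
        alerts.append("pH high")
    if reading.orp_mv < ORP_RANGE[0]:
        alerts.append("ORP low")
    elif reading.orp_mv > ORP_RANGE[1]:
        alerts.append("ORP high")
    return alerts
```

For example, `chemistry_alerts(Reading(temp_c=28.0, ph=6.9, orp_mv=700.0))` returns `["pH low"]`, which is the kind of condition you would want flagged before acidic water reaches the heater.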

CSS Subgrid Reaches 92% Baseline: Align Cards Natively

CSS subgrid lets a nested grid inherit its parent’s track sizes. Child elements inside nested components line up with the parent layout. No flat HTML, no JavaScript height math, no hardcoded min-heights. It shipped in every major browser by late 2023, sits at about 92% global usage, and is safe on any modern web project today.

Ever fought a card grid where the buttons won’t line up because one card has a longer description? Subgrid is the fix you’ve been waiting for.

Gemini 3.5 Flash: 76% on Terminal-Bench, 4x Faster Output

Google released Gemini 3.5 Flash on May 19, 2026. The fast, lower-cost tier scored 76.2% on Terminal-Bench 2.1 and, by Google’s own measure, generates output about 4 times faster than other frontier models. Flash is available today across the Gemini app, Search, and the API. Gemini 3.5 Pro is confirmed for next month.

Key Takeaways

- Gemini 3.5 Flash launched on May 19, 2026 and is free to use in the Gemini app and Google Search.

- It scored 76.2% on Terminal-Bench 2.1, a test of finishing real terminal tasks end to end.

- Google says Flash produces output about 4 times faster than rival frontier models.

- The model is built for agents that run long, multi-step jobs and call tools.

- Gemini 3.5 Pro, the larger sibling, is confirmed for next month.

What is Gemini 3.5 Flash?

Gemini 3.5 Flash is Google’s new fast, lower-cost tier of the Gemini 3.5 family. It was announced and made generally available on May 19, 2026, according to the Google announcement post. The “Flash” name has always meant a model tuned for speed and price.